WHY THIS MATTERS IN BRIEF

AIs learn in fundamentally different ways from humans, and increasingly that seems to be their advantage in human-machine competitions.

Interested in the Exponential Future? Join our XPotential Community, future proof yourself with courses from our XPotential University, connect, watch a keynote, or browse my blog.

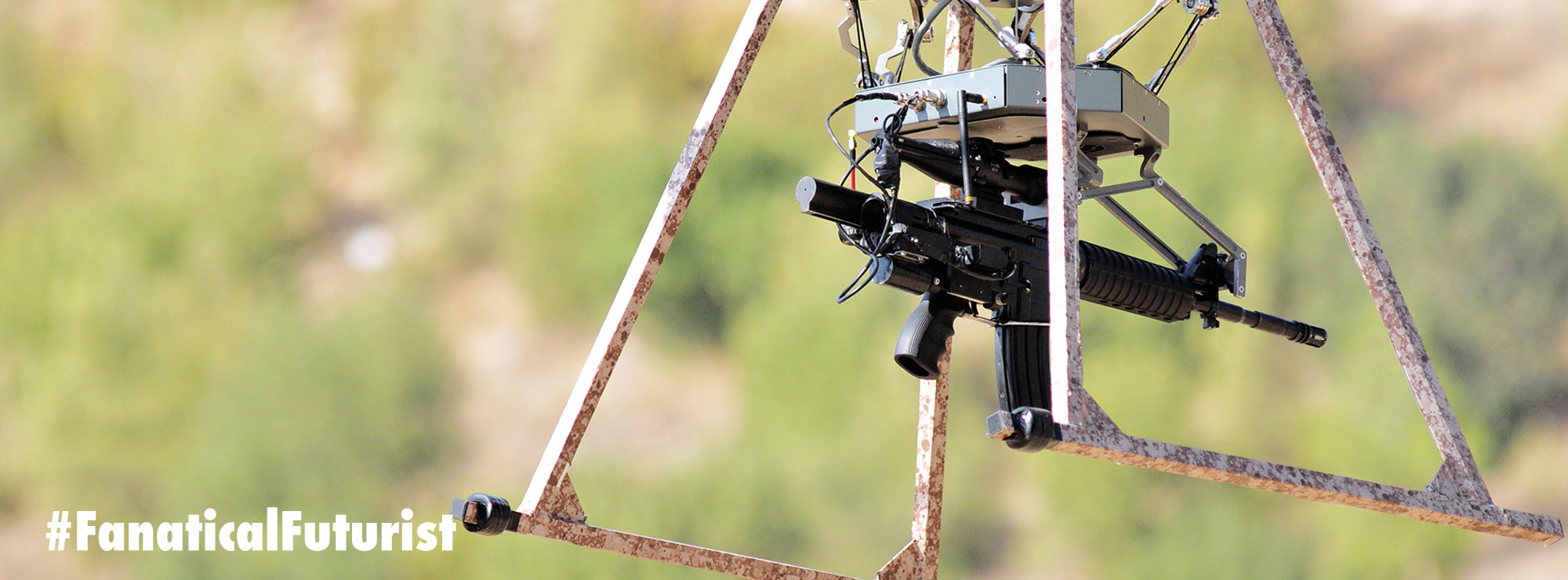

Who’s better in a dogfight: an Artificial Intelligence (AI) or a highly trained US Topgun pilot? A couple of years ago an AI running on a Raspberry Pi took some of the US Air Force’s top combat pilots to town and “changed the balance of global warfare.” Now, as the US military starts building its first fully autonomous fighter jets and drone squadrons, the never-ending saga of machines outperforming humans has a new chapter: yet another AI has beaten a human fighter pilot in a virtual dogfight. The contest was the finale of the US military’s AlphaDogfight challenge, an effort to “demonstrate the feasibility of developing effective, intelligent autonomous agents capable of defeating adversary aircraft in a dogfight.”

In other words, it was a competition to develop the world’s first fully autonomous combat systems. Bearing in mind that the US Marines are jockeying to be the first to get their hands on the first breed of hunter killer drones and fully autonomous F-35s, with the USAF close behind, it signals a new, deadly era of warfare in which AI increasingly becomes first the co-pilot and eventually the pilot.

Last August DARPA selected eight teams, ranging from large, traditional defense contractors like Lockheed Martin to small groups like Heron Systems, to compete in a series of trials in November and January. In the final, which was held last Thursday, Heron Systems emerged as the victor against the seven other teams after two days of old school dogfights in which the agents went after each other using nose-aimed guns only. Heron then faced off against a human fighter pilot sitting in a simulator and wearing a virtual reality helmet, and won five rounds to zero.

The other winner in Thursday’s event was deep reinforcement learning, where AI algorithms get to try out a task in a virtual environment over and over again. That means they can learn new skills and tricks hundreds of millions of times faster than any human can, until they develop something like understanding. In the end it was this technology that proved key to defeating the USAF’s best, and both the Heron Systems and Lockheed Martin teams used it to deadly advantage.

Matt Tarascio, vice president of AI at Lockheed Martin, and Lee Ritholtz, the company’s director and chief architect of AI, said that trying to get an algorithm to perform well in air combat is very different from teaching software simply “to fly,” or to maintain a particular direction, altitude, and speed. Software begins with a complete lack of understanding about even very basic flight tasks, explained Ritholtz, which at first puts it at a disadvantage against any human.

“You don’t have to teach a human [that] it shouldn’t crash into the ground… They have basic instincts that the algorithm doesn’t have,” Ritholtz said of training. “That means dying a lot. Hitting the ground, a lot.”

Tarascio likened it to “putting a baby in a cockpit.”

Overcoming that ignorance requires teaching the algorithm that there’s a cost to every error, but that those costs aren’t equal. The reinforcement comes into play when the algorithm, based on simulation after simulation, assigns weights, or “costs,” to each manoeuvre, and then re-assigns those weights as its experience grows.
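
To make that idea a little more concrete, here is a minimal sketch in Python. It is not the deep reinforcement learning the teams actually used, just a much simpler table of cost estimates that gets nudged after every simulated attempt. The manoeuvre names, the cost values, and the `alpha` and `epsilon` settings are all invented for illustration.

```python
import random

# Hypothetical manoeuvres and their hidden average costs (assumed values,
# not taken from the AlphaDogfight trials).
TRUE_COST = {"climb": 1.0, "hard_turn": 3.0, "dive_low": 10.0}

def simulate(manoeuvre, rng):
    """Return a noisy cost for one simulated attempt at a manoeuvre."""
    return TRUE_COST[manoeuvre] + rng.uniform(-0.5, 0.5)

def train(episodes=5000, alpha=0.05, epsilon=0.1, seed=0):
    """Learn a cost estimate per manoeuvre by trial and error."""
    rng = random.Random(seed)
    cost = {m: 0.0 for m in TRUE_COST}  # the agent starts out knowing nothing
    for _ in range(episodes):
        if rng.random() < epsilon:
            m = rng.choice(list(cost))   # occasionally try something at random
        else:
            m = min(cost, key=cost.get)  # otherwise pick the cheapest-looking option
        observed = simulate(m, rng)
        # Re-assign the weight: move the estimate a small step toward what was observed.
        cost[m] += alpha * (observed - cost[m])
    return cost

learned = train()
best = min(learned, key=learned.get)
print(learned, best)
```

The agent starts with every estimate at zero, pays for its mistakes in simulation, and ends up ranking the manoeuvres by how costly they turned out to be.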

Here, too, the process varies greatly depending on the inputs, including the programmers’ conscious and unconscious biases about how to structure simulations.

“Do you write a software rule based on human knowledge to constrain the AI or do you let the AI learn by trial and error? That was a big debate internally. When you provide rules of thumb, you limit its performance. In our opinion they need to learn by trial and error,” said Ritholtz.

It’s also notable that this isn’t the first time that AIs have beaten humans using this trial and error technique. Google DeepMind’s AlphaZero took gaming grandmasters to town after being given only the rules of the games to play and no further instructions or advice. It then played those games hundreds of millions of times in simulation before obliterating its competition just four hours later. It also shows that, given the chance, AIs will learn in their own ways, that those ways are sometimes superior to human ways of learning, and that they will actively hunt for weaknesses in human strategies, something that will be fascinating to watch as we both work with and compete against AIs.

Ultimately, though, this competition wasn’t about how quickly an AI can learn. Lockheed, like several other teams, had a fighter pilot advising the effort, and was able to run training sets on up to 25 DGX-1 servers at a time. But what they ultimately produced could run on just a single GPU, which makes the feat even more impressive, because you can only wonder what the system would be capable of with more computing power. Scarily, when Google gave its AIs more computing power and pitted them against each other in a similar one-on-one competition, it found that the AIs with more computing power quickly became what it called “aggressive,” so watch this space … and pray for the successful development of an AI kill switch.

On the other side of the fence, after the victory, Ben Bell, the senior machine learning engineer at Heron Systems, said that their agent had been through at least 4 billion simulations and had acquired at least “12 years of experiences.”

It’s not the first time that an AI has bested a human fighter pilot in a contest. A 2016 demonstration showed that an AI agent dubbed Alpha could beat an experienced human combat flight instructor. But the DARPA simulation on Thursday was arguably more significant as it pitted a variety of AI agents against one another and then against a human in a highly structured framework.

The AIs weren’t allowed to learn from their experiences during the actual trials, which Bell said was “a little bit unfair.” The actual contest did bear that out. By the fifth and final round of the matchup, the anonymous human pilot, call-sign Banger, was able to significantly shift his tactics and last much longer.

“The standard things that we do as fighter pilots aren’t working,” he said. But it didn’t matter in the end. He hadn’t learned fast enough and was defeated.

Therein lies a big future choice that the military will have to make. Allowing AI to learn more in actual combat, rather than between missions and thus under direct human supervision, would probably speed up learning and help unmanned fighters compete even better against human pilots or other AIs. But that would take human decision making out of the process at a critical point. Ritholtz said that the approach he would advocate, right now at least, would be to train the algorithm, deploy it, then “bring the data back, learn off it, train again, redeploy,” rather than have the agent learning in the air, although, let’s face it, eventually it’ll be learning anywhere and everywhere.
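
The train, deploy, bring the data back, retrain cycle can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not anything Lockheed or DARPA has described: the `Agent` class, the `TARGET` value, and the learning rate are hypothetical. The point is only that the agent records data while deployed and changes what it knows only between missions.

```python
import random
from dataclasses import dataclass, field

TARGET = 5.0  # hypothetical quantity the agent is trying to estimate

@dataclass
class Agent:
    estimate: float = 0.0  # the single thing this toy agent "knows"
    version: int = 0       # how many times it has been retrained
    flight_log: list = field(default_factory=list)

    def deploy(self, rng, steps=50):
        """Fly one mission: record noisy observations, leave the estimate untouched."""
        for _ in range(steps):
            self.flight_log.append(TARGET + rng.uniform(-1.0, 1.0))

    def retrain(self, lr=0.5):
        """Between missions: learn from the logged data, then clear the log."""
        if not self.flight_log:
            return
        mean_obs = sum(self.flight_log) / len(self.flight_log)
        self.estimate += lr * (mean_obs - self.estimate)
        self.flight_log.clear()
        self.version += 1

rng = random.Random(0)
agent = Agent()
history = []
for _ in range(5):
    before = agent.estimate
    agent.deploy(rng)
    frozen = agent.estimate == before  # nothing was learned in the air
    agent.retrain()
    history.append((agent.version, frozen, round(agent.estimate, 2)))
print(history)
```

Letting the agent learn in the air would amount to moving the update from `retrain` into `deploy`, which is exactly the step that removes the human checkpoint between versions.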

Timothy Grayson, director of the Strategic Technology Office at DARPA, described the trial as a victory for better human and machine teaming in combat, which was the real point. The contest was part of a broader DARPA effort called Air Combat Evolution, or ACE, which doesn’t necessarily seek to replace pilots with unmanned systems, yet, but does seek to automate a lot of pilot tasks.

“I think what we’re seeing today is the beginning of something I’m going to call human-machine symbiosis… Let’s think about the human sitting in the cockpit, being flown by one of these AI algorithms as truly being one weapon system, where the human is focusing on what the human does best [like higher order strategic thinking] and the AI is doing what the AI does best,” Grayson said.

As for leaving the human pilot to do what they do best and think about higher level strategy, well, that too is already being automated: the US military has re-purposed the world’s most advanced poker-playing AI and got it to start playing war games. But that’s another story …