WHY THIS MATTERS IN BRIEF

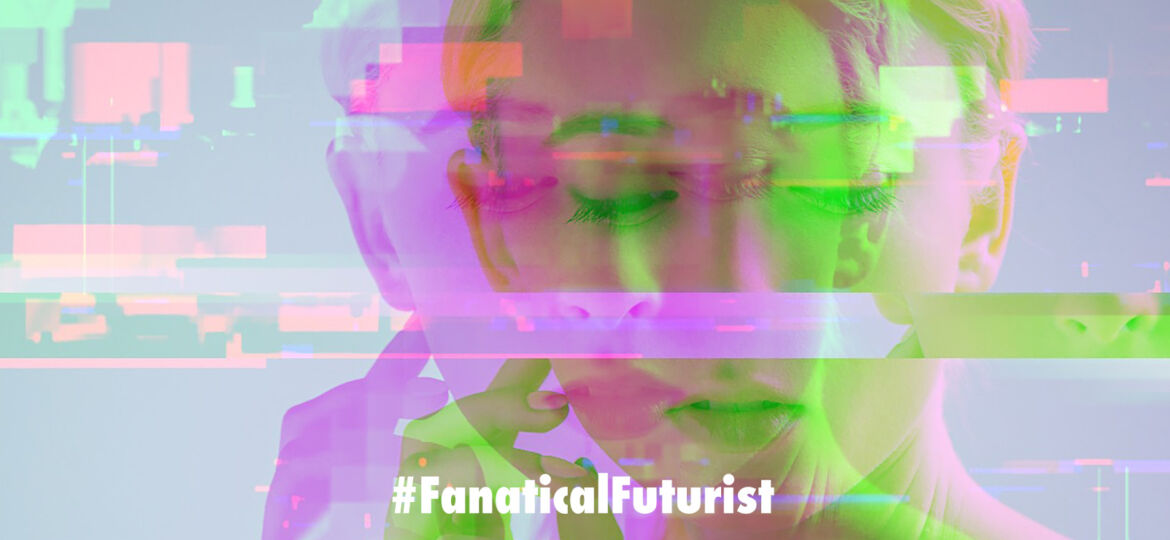

Can you believe what you see online? Increasingly, no, and this latest high-definition “DeepFake” tech is going to make it even harder.

Interested in the Exponential Future? Join our XPotential Community, connect, watch a keynote, or browse my blog.

How would you like to swap faces with people? Perhaps, like this guy, you’d like to be Elon Musk in your next company Zoom call? Up until now, though, face swapping technologies, also known as DeepFakes, have been fairly easy to spot because the finished results are often low resolution or just plain look funky. But now a new paper published by Disney Research in partnership with ETH Zurich describes a fully automated, neural network-based method for swapping faces in photos and videos at high resolution.

That could make it suited for use in film and TV, which is Disney’s main reason for playing around with the tech, and where high resolution results are key to making the final product convincing to viewers. But it could also finally open the door to DeepFakes convincing enough to fool anyone and everyone, which would then up the ante of what you feel you can trust online. Imagine, for example, the implications of having a Zoom call with a criminal who looks and sounds like your boss and tells you to transfer wads of cash into a Balkan bank account.

See the tech in action

In this case though the researchers specifically intend this tech for use in replacing an existing actor’s performance with a substitute actor’s face, for instance when de-aging or increasing the age of someone, as happened to Will Smith in Gemini Man, or potentially when portraying an actor who has passed away, as happened with Princess Leia in the last Star Wars movie.

They also suggest it could be used for replacing the faces of stunt doubles, who ironically themselves are being replaced with new technology, in cases where the conditions of a scene call for them to be used.

The new method differs from other approaches in a couple of ways. One is that any face used in the set can be swapped with any recorded performance, making it possible to relatively easily re-image the actors on demand. The other is that it adjusts contrast and lighting conditions in a compositing step to ensure the actor looks like they were actually present in the same conditions as the scene.
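To give a rough feel for what a contrast and lighting adjustment in a compositing step can involve, here is a minimal Python sketch. It is not the researchers’ method, which the paper describes in far more detail. It assumes a simple mean and spread match on brightness values followed by an alpha blend, and the names `match_lighting` and `composite` are hypothetical, made up for this illustration.

```python
from statistics import mean, pstdev


def match_lighting(face, scene):
    """Rescale face brightness values so their mean and spread match the scene's.

    Illustrative assumption only: a basic statistics match, not the paper's approach.
    """
    face_mean, face_std = mean(face), pstdev(face)
    scene_mean, scene_std = mean(scene), pstdev(scene)
    # Guard against a flat face patch (zero contrast) to avoid dividing by zero.
    gain = scene_std / face_std if face_std > 0 else 1.0
    # Shift and scale each value, then clamp to the usual 0-255 brightness range.
    return [min(255.0, max(0.0, (p - face_mean) * gain + scene_mean)) for p in face]


def composite(face, scene, mask):
    """Alpha-blend the adjusted face over the scene; mask values run from 0 to 1."""
    adjusted = match_lighting(face, scene)
    return [m * f + (1 - m) * s for f, s, m in zip(adjusted, scene, mask)]


# A bright, high-contrast face patch placed into a darker, flatter scene.
face_patch = [100, 120, 140]
scene_patch = [60, 50, 40]
blended = composite(face_patch, scene_patch, mask=[1.0, 0.0, 0.5])
```

In this toy example the face patch is darkened and flattened to sit within the scene’s brightness range before it is blended in, which is the general idea behind making a swapped face look like it was lit by the same conditions as everything around it.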

You can check out the results for yourself in the video, and as the researchers point out the effect is actually much better in moving video than in still images. There’s still a hint of “uncanny valley” effect going on here, but the researchers also acknowledge that, calling this “a major step toward photo-realistic face swapping that can successfully bridge the uncanny valley” in their paper.

Basically, it’s a lot less nightmare fuel than other attempts I’ve seen, especially when you see the side-by-side comparisons with other techniques in the sample video. And, most notably, it works at much higher resolution, which is key for actual entertainment industry use.

The examples presented are a super small sample so it remains to be seen how broadly this can be applied. The subjects used appear to be primarily white, for instance. Also, there’s always the question of the ethical implication of any use of face-swapping technology, especially in video, as it could be used to fabricate credible video or photographic “evidence” of something that didn’t actually happen – which is a whole new can of worms.

Given, however, that the technology is now in development from multiple quarters it’s essentially long past the time for debate about the ethics of its development and exploration. Instead, it’s welcome that organizations like Disney Research are following the academic path and sharing the results of their work, so that others concerned about its potential malicious use can determine ways to flag, identify and protect us all against any bad actors when they too get their hands on this tech – which won’t be too far away.