WHY THIS MATTERS IN BRIEF

As we come to rely more on robots and automated systems in our daily lives, their ability to accurately perceive the world around them is crucial.

If I showed you a single picture of a room, you'd probably be able to tell me about it right away: that there's a table with a chair in front of it, that they're probably about the same size, roughly how far apart they are, and how far away the walls are. That would be enough for you to draw a rough map of the room. Machine vision systems don't yet have this intuitive, deeply human understanding of "space," but the latest research from DeepMind, following a successful demonstration, has now brought it within reach.

The team's paper, published in the journal Science, details a system in which a neural network that starts out knowing practically nothing can look at one or two static 2D images of a scene and reconstruct a reasonably accurate 3D representation of it.

Most machine vision algorithms are trained using what's called supervised learning, where they're fed huge amounts of data that humans have already labelled, with every object outlined and named.

The new system from DeepMind, though, has no such labelled data to draw on. Nor is it given any built-in knowledge of how we see the world, such as how the colours of objects change toward their edges or how objects appear bigger or smaller as their distance changes; it has to work these things out for itself.
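To make the "no labels" idea concrete, here is a minimal Python sketch, under our own simplifying assumptions, of how a training example can be built from nothing but unlabelled snapshots of a scene: a few views act as context, one is held back, and the held-back picture itself becomes the answer the network must predict. The names `View`, `make_example` and `pixel_loss`, and the plain squared-error loss, are hypothetical stand-ins chosen for illustration, not DeepMind's code; the published system optimises a more elaborate probabilistic objective.

```python
import random
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class View:
    """One unlabelled snapshot: raw pixels plus the camera pose it was taken from."""
    pixels: List[float]     # flattened image, e.g. greyscale values in [0, 1]
    viewpoint: List[float]  # hypothetical pose encoding, e.g. [x, y, z, yaw, pitch]


def make_example(views: List[View], num_context: int, rng: random.Random
                 ) -> Tuple[List[View], List[float], List[float]]:
    """Split a scene's views into context views, a query pose and a target image.

    No human labels are involved: the "answer" is simply another photo of
    the same scene that the model is not allowed to see.
    """
    if num_context >= len(views):
        raise ValueError("need at least one view left over as the target")
    chosen = rng.sample(views, num_context + 1)  # distinct views, random order
    context, held_out = chosen[:-1], chosen[-1]
    return context, held_out.viewpoint, held_out.pixels


def pixel_loss(predicted: List[float], target: List[float]) -> float:
    """Mean squared error between a predicted image and the real held-out one."""
    return sum((p - t) ** 2 for p, t in zip(predicted, target)) / len(target)
```

In a training loop, the model would see only `context`, be asked to render the scene from the query pose, and be scored with `pixel_loss` against the held-out pixels; repeating this over many scenes is what lets the network pick up cues like perspective without anyone labelling them.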

Roughly speaking, it works like this. One half of the system is its "representation" part, which observes a given 3D scene from some angle and encodes what it sees as a vector, a compact list of numbers that summarises the scene. The other half is the "generative" part, which, using only the vectors created earlier, predicts what the scene would look like from a different viewpoint.
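The two-part design can be illustrated with a deliberately tiny Python sketch. It is our own toy, not DeepMind's model: the class `ToySceneModel`, its random untrained weights, the single-layer maps and the choice to combine views by summing their vectors are all assumptions made for illustration, whereas the real networks are deep models trained on many scenes. What it does show is the data flow: each (image, viewpoint) pair is encoded into a vector, the vectors are merged into one scene representation, and the generator turns that representation plus a new camera pose into a predicted image.

```python
import math
import random
from typing import List, Sequence, Tuple

# (flattened image, viewpoint) for a single snapshot of the scene
Observation = Tuple[Sequence[float], Sequence[float]]


def random_matrix(rows: int, cols: int, rng: random.Random) -> List[List[float]]:
    """Untrained stand-in weights; a real system would learn these from data."""
    return [[rng.gauss(0.0, 0.1) for _ in range(cols)] for _ in range(rows)]


def matvec(m: List[List[float]], v: Sequence[float]) -> List[float]:
    """Plain matrix-vector product."""
    return [sum(w * x for w, x in zip(row, v)) for row in m]


class ToySceneModel:
    """Hypothetical toy with a "representation" half and a "generative" half."""

    def __init__(self, image_size: int, pose_size: int, repr_size: int, seed: int = 0):
        rng = random.Random(seed)
        # Representation half: (image, viewpoint) -> vector
        self.encoder = random_matrix(repr_size, image_size + pose_size, rng)
        # Generative half: (scene vector, query viewpoint) -> image
        self.decoder = random_matrix(image_size, repr_size + pose_size, rng)
        self.repr_size = repr_size

    def represent(self, observations: Sequence[Observation]) -> List[float]:
        """Encode each view and add the vectors into one scene representation."""
        scene = [0.0] * self.repr_size
        for image, pose in observations:
            encoded = [math.tanh(x) for x in matvec(self.encoder, list(image) + list(pose))]
            scene = [s + e for s, e in zip(scene, encoded)]
        return scene

    def generate(self, scene: Sequence[float], query_pose: Sequence[float]) -> List[float]:
        """Predict the pixels seen from query_pose, using only the scene vector."""
        logits = matvec(self.decoder, list(scene) + list(query_pose))
        return [1.0 / (1.0 + math.exp(-x)) for x in logits]  # squash into (0, 1)
```

Summing the per-view vectors means the scene representation does not depend on the order in which the pictures arrive, and adding more views simply adds more evidence. With random weights the generated image is noise; learning the weights is where all the real work lies.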

Think of it like someone handing you a couple of pictures of a room, then asking you to draw what you'd see if you were standing at a specific spot in it. Again, this is simple enough for us, but computers have no natural ability to do it. Their sense of "sight," if we can call it that, is extremely rudimentary, and of course machines lack imagination, which is something else DeepMind has given its AIs recently.

"It wasn't at all clear that a neural network could ever learn to create [3D] images in such a precise and controlled manner," said Ali Eslami, the paper's lead author, in a statement. "However we found that sufficiently deep neural networks can learn about perspective, occlusion and lighting, without the need for any human engineering. This was a super surprising finding."

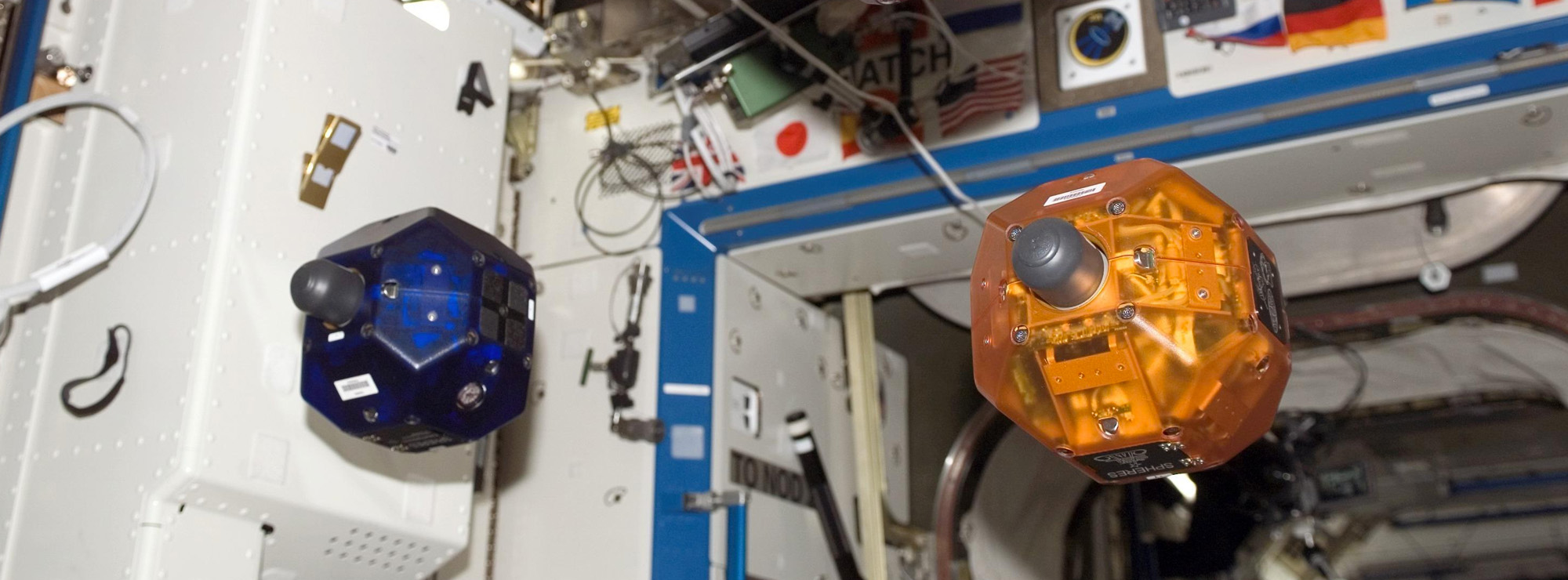

The system can also accurately recreate a 3D object from a single viewpoint. This kind of ability is critical for drones, for robots such as Amazon's warehouse picking robots and, perhaps, tomorrow's surgical robots, and for self-driving cars, because they all have to navigate the real world by sensing it and reacting to what they see. When their information is limited, for example when an important clue is temporarily hidden from view, they can freeze up or make illogical choices, which, in the case of the AI driving a self-driving car, could be lethal.

With a system like this, though, their robotic brains could make reasonable assumptions about, say, the layout of a room or the objects at the side of the road, without having to ground-truth every inch.

“Although we need more data and faster hardware before we can deploy this new type of system in the real world,” Eslami said, “it takes us one step closer to understanding how we may build agents that learn by themselves.”