WHY THIS MATTERS IN BRIEF

An AI debating people about whether AI is “good or bad” sounds like a good marketing ploy, but it has real-world implications for human decision making.

Interested in the Exponential Future? Connect, download a free E-Book, watch a keynote, or browse my blog.

IBM has just announced that its Artificial Intelligence (AI) system, Project Debater, which last year debated humans on the pros and cons of everything from climate change to space travel, has completed another round of debating, this time on a topic that’s closer to home: the dangers of AI. It only narrowly convinced the audience that the technology will do more good than harm, which either makes it a lousy debater or much more human-like than we think. After all, let’s face it, we humans are just as rubbish at deciding whether AI will ultimately be good or bad for society. And by society I mean us. On the one hand AI will help us create new stand-out drugs and healthcare treatments that help us live longer, and help us create better music and games, but on the other it will automate jobs and help spawn fully autonomous hunter-killer robots, so it’s no wonder no one can figure out where they stand on the issue of AI.

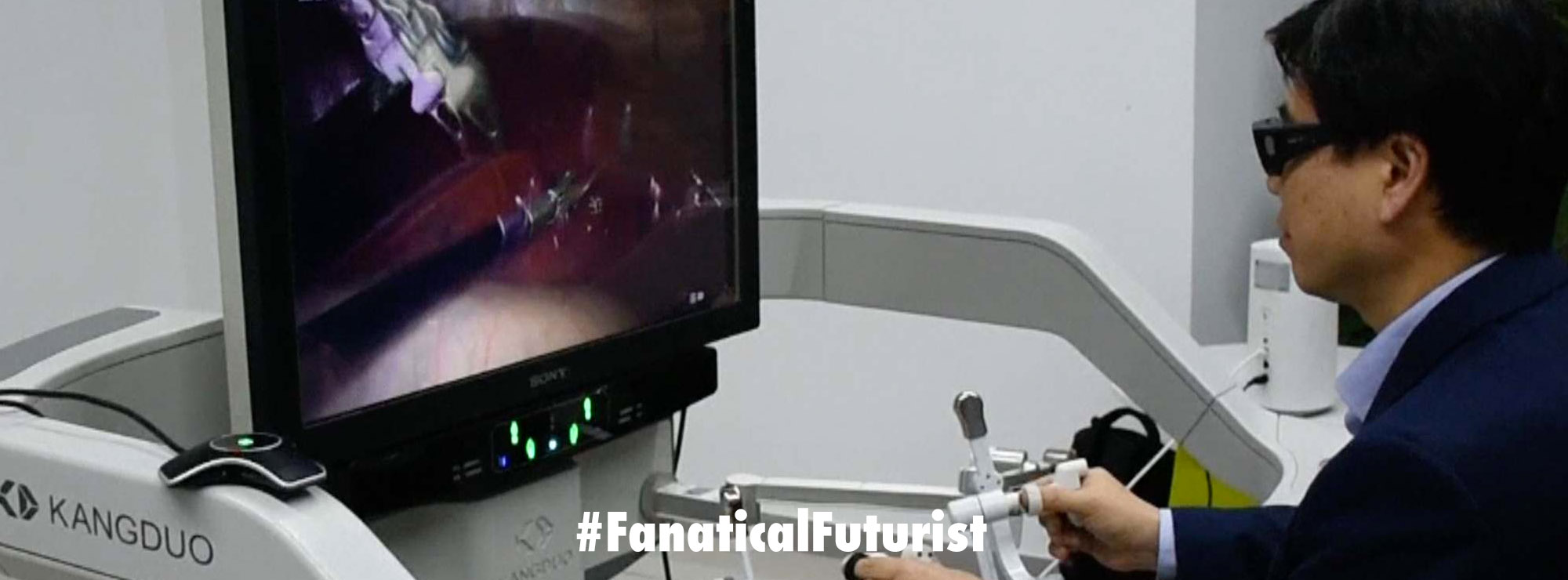

Project Debater, which at first glance looks like an interesting marketing gimmick but is actually a serious attempt to create AI systems that aid human decision making and bolster AI’s own decision-making capabilities, spoke on both sides of the argument, with two human teammates helping it out on each side. Talking in a female American voice to a crowd at the University of Cambridge, the AI gave each side’s opening statements, using arguments drawn from more than 1100 human submissions made ahead of time.

Project Debater at the Cambridge Union Society. Courtesy: IBM

On the proposition side, arguing that AI will bring more harm than good, Project Debater’s opening remarks were darkly ironic.

“AI can cause a lot of harm,” it said. “AI will not be able to make a decision that is the morally correct one, because morality is unique to humans. AI companies still have too little expertise on how to properly assess datasets and filter out bias,” it added. “AI will take human bias and will fixate it for generations.”

The AI used an application known as “speech by crowd” to generate its arguments, analysing submissions people had sent in online. Project Debater then sorted these into key themes, as well as identifying redundancy – submissions making the same point using different words.
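The article does not describe how IBM’s system spots redundant submissions, so the following is only a minimal sketch of the general idea, assuming simple word overlap (Jaccard similarity) as a stand-in for whatever Project Debater actually does. The function names, the stopword list, and the 0.5 threshold are all hypothetical choices made for illustration.

```python
import re

# Hypothetical stopword list: common words that carry little meaning here.
STOPWORDS = {"a", "an", "the", "will", "to", "of", "and", "in",
             "is", "for", "that", "it", "be", "by"}

def tokens(text):
    """Lowercase the text and return its set of non-stopword words."""
    words = re.findall(r"[a-z']+", text.lower())
    return frozenset(w for w in words if w not in STOPWORDS)

def jaccard(a, b):
    """Share of words two token sets have in common (0.0 to 1.0)."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)

def group_redundant(submissions, threshold=0.5):
    """Greedily group submissions whose word overlap with a group's
    first member meets the (assumed) similarity threshold."""
    groups = []  # each entry: (tokens of first member, list of texts)
    for text in submissions:
        toks = tokens(text)
        for representative, members in groups:
            if jaccard(representative, toks) >= threshold:
                members.append(text)
                break
        else:
            # No existing group was similar enough, so start a new one.
            groups.append((toks, [text]))
    return [members for _, members in groups]

submissions = [
    "AI will automate many jobs",
    "Many jobs will be automated by AI",
    "AI helps create new drugs",
]
for group in group_redundant(submissions):
    print(group)
```

Here the first two submissions land in one group and the third in another. Word overlap is a crude proxy: it would miss two submissions that make the same point with entirely different vocabulary, which is exactly the harder case the article says the system handles.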

The AI argued coherently but had a few slip-ups. Sometimes it repeated itself – while talking about the ability of AI to perform mundane and repetitive tasks, for example – and it didn’t provide detailed examples to support its claims.

While debating on the opposition side, which was advocating for the overall benefits of AI, Project Debater argued that AI would create new jobs in certain sectors and “bring a lot more efficiency to the workplace.” But then it made a point that was counter to its argument.

“AI capabilities caring for patients or robots teaching schoolchildren – there is no longer a demand for humans in those fields either.”

The pro-AI side narrowly won, gaining 51.22 per cent of the audience vote.

Project Debater argued with humans for the first time last year, and in February this year lost in a one-on-one against champion debater Harish Natarajan, who also spoke at Cambridge as the third speaker for the team arguing in favour of AI.

IBM now plans to use the speech-by-crowd AI as a tool for collecting feedback from large numbers of people. For instance, it could be used by governments seeking public opinions about policies or by companies wanting input from employees, said IBM engineer Noam Slonim. But, as I’ve mentioned many times before in keynotes, what happens when these types of AI systems suck in huge volumes of fake news and fake content in order to form their opinions and advice, as the ones in the Quantitative (Quants) and investment industries do today? Carnage? Feel free to debate that one…

“This technology can help to establish an interesting and effective communication channel between the decision maker and the people that are going to be impacted by the decision,” Slonim added.