WHY THIS MATTERS IN BRIEF

Researchers are trying to create a new form of AI, or Creative Machines, that can generate their own content from scratch, and the systems are improving all the time.

Interested in the Exponential Future? Connect, download a free E-Book, watch a keynote, or browse my blog.

Interested in the Exponential Future? Connect, download a free E-Book, watch a keynote, or browse my blog.

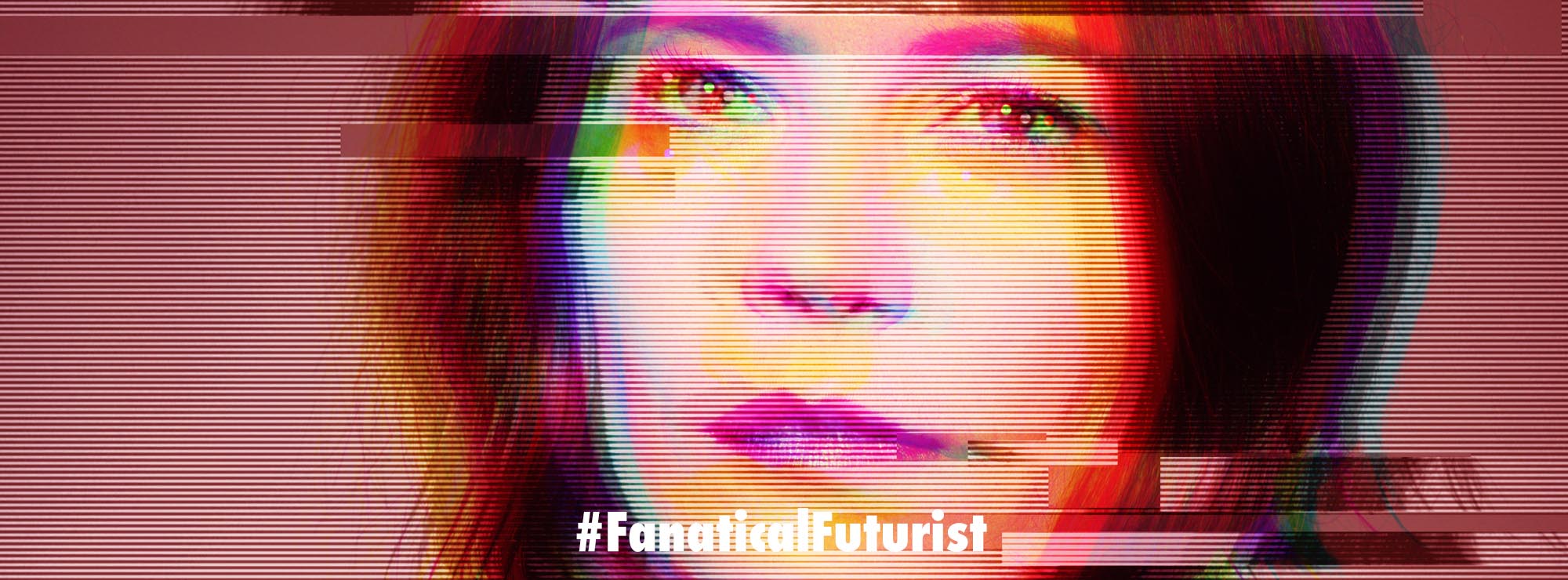

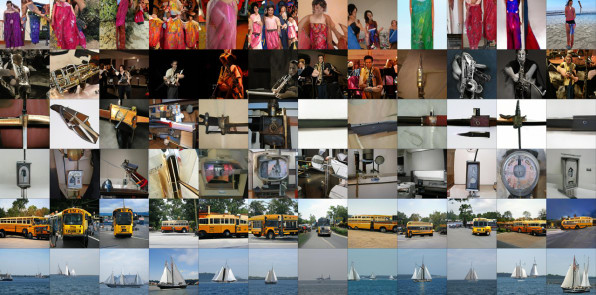

For years, artists and researchers have been experimenting with training neural networks to generate images that look real, but most of them look like strangely distorted, grotesque caricatures of how a computer thinks the world looks.

However those days might be behind us after a Google intern and two researchers from Google’s DeepMind division released a paper, currently under review for a 2019 conference, featuring AI-generated images that blow everything else out of the water – literally.

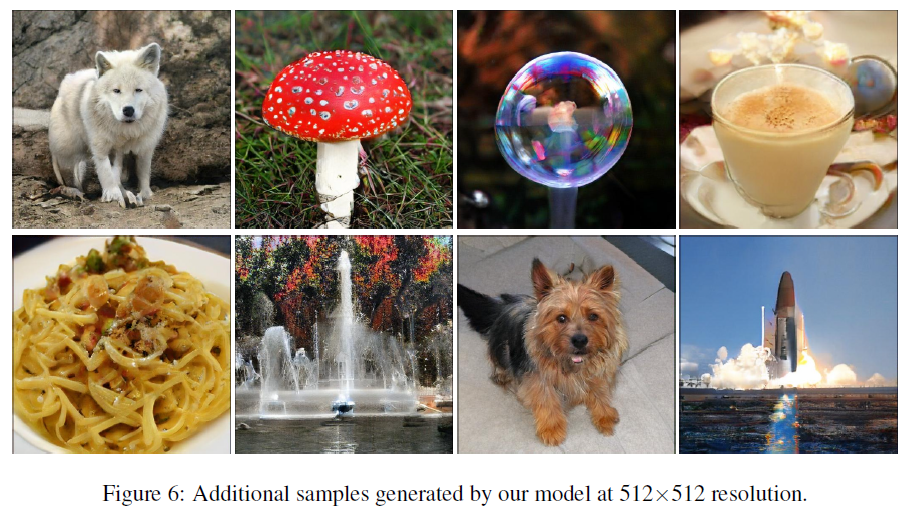

Based on the small thumbnails, it’s almost impossible to tell that they’re not real images – there’s a chestnut-colored dog with his tongue hanging out, a beautiful ocean vista, a monarch butterfly, and a juicy hamburger complete with melted cheese and a bun that looks like it was brushed with butter. The textures of the images, from the dog’s fur to the hamburger’s juices, are incredibly realistic, with careful study revealing only tiniest of tells that the image isn’t a real one. And the research is making waves in the research community, where some expressed shock at the image quality.

Courtesy: Andrew Brock

Oriol Vinyals, a research scientist at DeepMind, for example, wondered if the images were the “best GAN samples ever.”

“I want to live in a #BIGGAN generated world!” wrote Meltem Atay, a neurotechnology PhD student who focuses on machine learning. Another one noted that the images are “unbelievably detailed,” and another asked, “Wait . . . these are generated images?”

Courtesy: Andrew Brock

The algorithm that did this? It’s called BigGAN, the last three letters of which stand for Generative Adversarial Network. This kind of neural net is composed of two models – one that conjures random images out of random numbers, and one that compares these generated images to real images and tells the generator just how far off it is.

GANs are common in machine learning research, and BigGAN isn’t that different from other algorithms out there. But there is one big difference – BigGAN throws a ton of computational power, courtesy of Google, at the problem. And as you can see this strategy produces far superior results.

“The main thing these models need is not algorithmic improvements, but computational ones,” says Andrew Brock, a PhD student at Edinburgh Center for Robotics and the Google intern who wrote the paper. “When you increase model capacity and you increase the number of images you show at every step, you get this twofold combined effect.”

Courtesy: Andrew Brock

In other words, by adding more nodes to increase the complexity of the neural network and showing the model far more images than most researchers do, Brock was able to create a system that more accurately understands and models textures, and then combines these individual textures to generate bigger forms, like that of a puppy.

But while the algorithm can generate the glistening skin of a frog from scratch, it’s not perfect – neural nets still can’t count or interpret larger structural trends. Even if BigGAN sees far more images of spiders than other smaller GANs, it still can’t settle on a single number – it just knows that spiders have a lot of legs. There are countless images on Twitter of researchers excitedly sharing BigGAN’s hyperreal textures on animals that also have extra heads or far too many horns. Some of these are just as horrifying as their AI-generated predecessors, and when paired with high resolution, enter true uncanny valley territory.

Brock and the Google researchers he worked with, Jeff Donahue and Karen Simonyan, also employed what Brock calls the “truncation trick” to create even more realistic images. This lowers the random numbers that the generator uses to create its images, essentially telling it to focus on getting really good at one type of image – like that of a cocker spaniel staring right at you – rather than generating a bunch of other types of images of cocker spaniels.

How could BigGAN ultimately be used? This kind of research can help make pixelated images clearer, as NVIDIA has shown. But Brock says that generating these images is more of a research goal than anything practical, with more subtle implications.

“There’s not a practical application unless you’re trying to generate fake news of really realistic puppies, or stock images” Brock says. “But it’s an important thing to consider if you care about AI and want to move [toward] learning things directly from data without human intervention . . . We want to be able to learn structure from data.”

AI researcher and creator of the blog AI Weirdness Janelle Shane sees another potential use, too – art.

“You could illustrate a story this way, or make a hauntingly beautiful movie set,” she writes. “It all depends on the data set you collect, and the outputs you choose. And that, I think, is where algorithms like BigGAN are going to change human art – not by replacing human artists, but by becoming a powerful new collaborative tool.”

These experiments have environmental implications as well. Brock used 512 of Google’s Tensor Processing Units (or TPU) to generate his 512 pixel images, and he says his experiments generally run for between 24 and 48 hours. If each TPU uses about 200 watts in an hour of computation, then a single one of Brock’s 512 pixel experiments could be using between 2,450 and 4,915 kilowatt hours. That’s the equivalent of the electricity that the average American household uses in just under six months.

“The good news is that AI can now give you a more believable image of a plate of spaghetti,” data artist and researcher Jer Thorp writes on Twitter. He jokingly estimates, “The bad news is that it used roughly enough energy to power Cleveland for the afternoon.”

Information and communications technology is on track to create 3.5% of global emissions by 2020, which is more than the aviation and shipping industries – and could hit 14% by 2040, according to the Guardian‘s report of a study due out this month.

“It seems very clear that we’re in an era where we need to reduce our energy use [and] emissions as we ramp up the use of these technologies to create content like this,” says Thorp. “But there’s a ‘tech is progress’ rhetoric that allows us to somehow find an exception where this kind of work is concerned.”

While BigGAN appears to be a step forward for GANs in general, Thorp’s analysis highlights that computing power – while it may seem infinite – has a real environmental cost too, and one we may need to reckon with sooner rather than later, especially as more and more organisations start using AI’s and not people to generate imagery and videos.

Source: Medium