WHY THIS MATTERS IN BRIEF

- Pushing artificial intelligence to the edge of the network, onto the device itself and away from the datacenter, will make devices smarter and revolutionise the software that runs on them, making it far more powerful and capable

In a speech today at Web Summit, Hussein Mehana, Facebook’s Director of Engineering, showed off a new incarnation of Facebook’s smartphone app. It can transform a photo of your backyard barbecue into a Picasso. Or a Van Gogh. Or a Warhol.

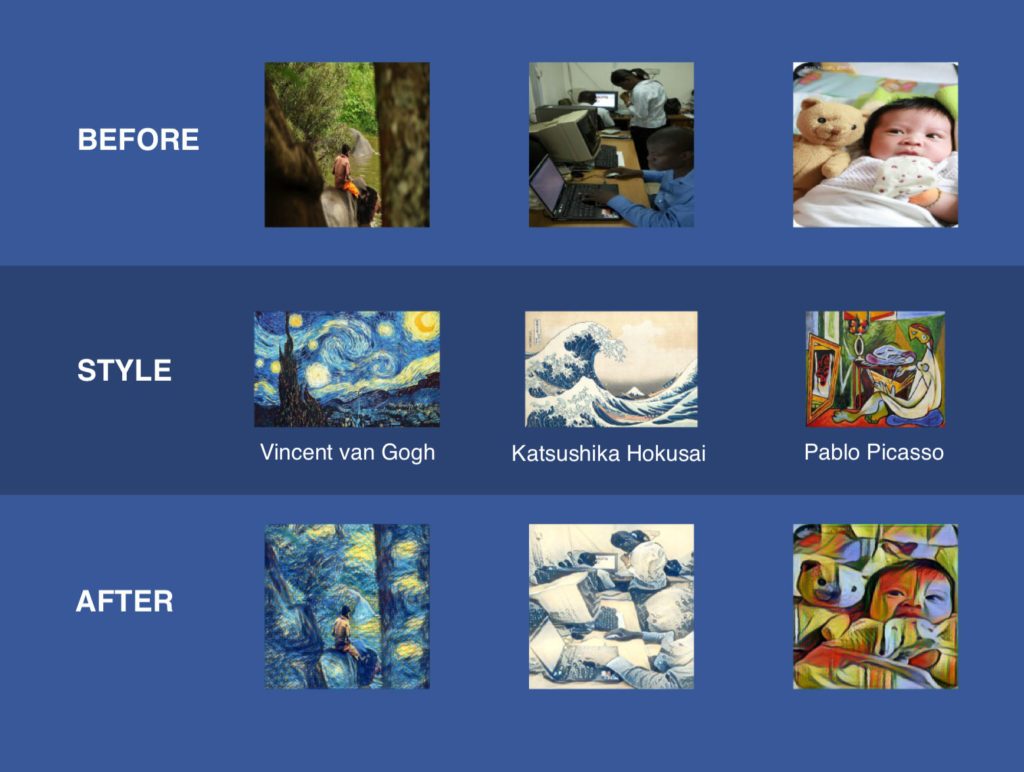

As you might have guessed by now, the app includes a particularly extravagant photo filter. You select a work of art – something akin to, say, a 1907 Picasso – and it creates a Cubist incarnation of your backyard barbecue. It’s fun, and it works even with live video. Turn the camera on yourself, and you too can be a Picasso. But that’s not half as interesting as the technology that underpins the new app. Mehana is one of the Facebook engineers working to push artificial intelligence (AI) across the company and, as he explains, the app includes several deep neural networks, a form of artificial intelligence that’s rapidly reinventing the tech world.

Based loosely on the web of neurons in the human brain, neural networks can learn discrete tasks by analysing vast amounts of data. It’s this technology that helps identify faces in Facebook posts, translates your telephone calls into hundreds of other languages instantly, and is the foundation for almost every personal digital assistant on the market, from Siri to Google Now. Now, using various works of art, Facebook is training neural networks to inject a new look into your personal pics.

Before and after, the AI effect

Typically, neural networks run on large numbers of servers packed into data centers on the other side of the internet, and they don’t work unless your phone is online. But with this new app, Facebook is taking a different approach: it’s pushing AI to “the edge” of the network, getting it onto the device itself or at least as close to it as possible. In this particular case, the Picasso filter is driven by a neural network that is small and efficient enough to run on the phone itself.

“We perceive the world in real-time,” Mehana says, “why wouldn’t you want the same thing from your AI?”

Already available in Ireland and due soon here in the US, this new Facebook app is another sign that deep neural networks will push out and live beyond the datacenter – on phones, cameras and everything else that will eventually make up the Internet of Things. And it’s a growing trend among tech companies. Last summer, Google squeezed a neural network into its Google Translate app, which can identify words in photos and translate them into other languages. After all, getting online in a foreign country is expensive, and now you don’t have to be online to translate a sign from Mandarin into English.

These tools can operate without an internet connection, which points to a future where our smartphone apps will be able to perform a much wider range of tasks while offline. It also shows we’re moving towards technology that can handle more complex AI tasks with less delay. Ultimately, if you can complete a task without sending a bunch of data across the wire, it will happen quicker and, as a consequence, the user experience will be better.

Imagine apps that can instantly recognise faces or objects when you point your phone at them.

“Doing this type of work, or processing, on the phone changes the nature of the game,” says Allen Institute CEO Oren Etzioni, pointing out that this can even help drive augmented reality headsets like the Microsoft HoloLens. If a device can more accurately recognise the world around it, it can more accurately augment that reality.

A neural network operates in two stages. First, a company like Facebook or Google trains it for a particular task, like image recognition or machine translation. Facebook might teach a neural network to recognise goats, for instance, by feeding it millions of goat photos. Then someone like you or me “executes” the neural network. We give it a photo, and it tells us whether the photo includes a goat.
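The train-then-execute split can be sketched in a few lines of Python. This is a toy illustration only, not Facebook's code: it uses a single artificial neuron rather than a deep network, and the two input features (a "horn score" and a "beard score") and all the numbers are invented for the example.

```python
import math
import random

# Hypothetical toy data: ([horn_score, beard_score], label), label 1 = goat.
EXAMPLES = [
    ([0.9, 0.8], 1), ([0.8, 0.9], 1), ([1.0, 0.7], 1),
    ([0.1, 0.2], 0), ([0.2, 0.1], 0), ([0.0, 0.3], 0),
]

def predict(model, features):
    """Stage 2, execution: one cheap forward pass over the learned weights."""
    weights, bias = model
    z = sum(w * x for w, x in zip(weights, features)) + bias
    return 1.0 / (1.0 + math.exp(-z))  # probability that the input is a goat

def train(examples, epochs=200, lr=0.5, seed=0):
    """Stage 1, training: repeatedly nudge the weights to reduce the error."""
    rng = random.Random(seed)
    weights = [rng.uniform(-0.1, 0.1) for _ in examples[0][0]]
    bias = 0.0
    for _ in range(epochs):
        for features, label in examples:
            error = predict((weights, bias), features) - label
            weights = [w - lr * error * x for w, x in zip(weights, features)]
            bias -= lr * error
    return weights, bias

# Training is the expensive part and happens once, ahead of time.
model = train(EXAMPLES)

# Execution only needs the finished weights, so it can run anywhere.
print(predict(model, [0.85, 0.9]) > 0.5)  # a goat-like input
print(predict(model, [0.1, 0.1]) > 0.5)   # a non-goat input
```

The point of the sketch is the asymmetry: `train` loops over the data many times, while `predict` is a single pass, which is why only the second stage needs to fit on a phone.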

Facebook’s app doesn’t train its neural networks on your smartphone. That still happens on servers back in the data center. But the phone does execute the neural net, without having to call the data center. That may seem like a small thing, but building a deep neural net that can execute so quickly on a phone, which offers limited processing power and memory, is no simple task. The new photo filter is based on neural network technology first described by a team of German researchers in 2015, and that technology couldn’t operate in real-time, even though it ran on data center hardware. Little more than a year later, Facebook is doing pretty much the same thing on a phone, and without delay. For Facebook CTO Mike Schroepfer, this shows just how fast AI is evolving.

As for how Facebook did it, well, they did what they’re best at: they minimised the complexity of the neural network that transforms your photo into a Picasso. The training stage, though, still took a long time. According to Facebook engineering director Tommer Leyvand, the neural net must train for a good 400 hours on GPUs, the processors typically used for AI training inside the data center. Basically, after training a neural net to recognise objects in photos, the team feeds it a famous work of art, retraining it to apply the same style to those objects. But in the end, Mehana and team honed this neural net so that it only uses the most important parts of what it learned.
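The article doesn't say which technique the team used to keep only "the most important parts" of the network. One common approach to shrinking a trained model is magnitude pruning, where the weights closest to zero are dropped. The sketch below illustrates that idea as an assumption, not as Facebook's method; the function name and the example weights are hypothetical.

```python
def prune_by_magnitude(weights, keep_fraction):
    """Zero out all but the largest-magnitude weights (illustrative only)."""
    if not 0.0 < keep_fraction <= 1.0:
        raise ValueError("keep_fraction must be in (0, 1]")
    keep = max(1, round(len(weights) * keep_fraction))
    # Rank positions by absolute weight and remember the top `keep` of them.
    ranked = sorted(range(len(weights)), key=lambda i: abs(weights[i]), reverse=True)
    kept = set(ranked[:keep])
    return [w if i in kept else 0.0 for i, w in enumerate(weights)]

# Hypothetical weights from one small layer of a trained network.
layer = [0.91, -0.02, 0.40, 0.003, -0.75, 0.05, 0.01, -0.33]
pruned = prune_by_magnitude(layer, keep_fraction=0.5)
print(pruned)  # [0.91, 0.0, 0.4, 0.0, -0.75, 0.0, 0.0, -0.33]
```

Zeroed weights need neither storage nor multiplication if the runtime exploits the sparsity, which is what makes this kind of trimming attractive on a device with limited memory and processing power.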

At the same time, the team built a new piece of software designed specifically for executing neural networks with the limited resources available on mobile phones. This AI framework is called Caffe2Go, and according to Facebook, it can execute neural nets in less than 1/20th of a second. Naturally, execution times depend on which models are being executed, but the larger point is that Facebook intends to offer the framework on both iOS and Android devices, and to build all sorts of AI models that can operate without being tethered to the data center.

“With anything we can build on the server, we now have a vehicle to ship it on mobile devices – and soon,” Schroepfer explains. He says that Facebook is already experimenting with mobile neural networks that can recognise objects in videos at 60 frames a second. Eventually, this kind of work will create a virtuous circle of AI evolution. As companies like Facebook and Google continue to push neural networks onto smartphones, phone makers will start building hardware into these devices that can run neural networks with even greater speed. That, in turn, will yield even more complex apps. And so on. Schroepfer says Facebook is already talking to the major mobile chip makers about modifying their processors for use with future AI.
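To put the two speed figures quoted above side by side, under 1/20th of a second per execution and 60 frames a second for video, a little arithmetic helps. The snippet below is only a back-of-the-envelope sketch; the function names are made up for the illustration, and it assumes the network runs once per frame.

```python
def frames_per_second(seconds_per_frame):
    """Frame rate achievable if each frame takes this long to process."""
    return 1.0 / seconds_per_frame

def budget_ms(target_fps):
    """Time available per frame, in milliseconds, at a target frame rate."""
    return 1000.0 / target_fps

# 1/20th of a second per execution is an upper bound, so this is a floor.
print(frames_per_second(1 / 20))

# Time the network may spend on each frame to keep up with 60 fps video.
print(round(budget_ms(60), 1))  # 16.7
```

In other words, a network that takes the full 1/20th of a second keeps up with about 20 frames a second, while 60 frames a second leaves roughly a third of that time per frame.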

Meanwhile, some companies are building entirely new processors that could accelerate the execution of neural networks on phones and other devices. These include IBM and Movidius, a company recently acquired by Intel, the world’s largest chip maker. If these chips work as advertised, they will find a home in the market.

“The demand will be there,” Schroepfer says.

In the meantime, a Picasso photo filter won’t change your life, unless of course you’re a teen or a Snapchat user. But it’s a sign of bigger things to come: AI at the edge.