WHY THIS MATTERS IN BRIEF

Exponential computing platforms that have more processing power than mammalian brains are now a reality, and simulating a whole human brain is next.

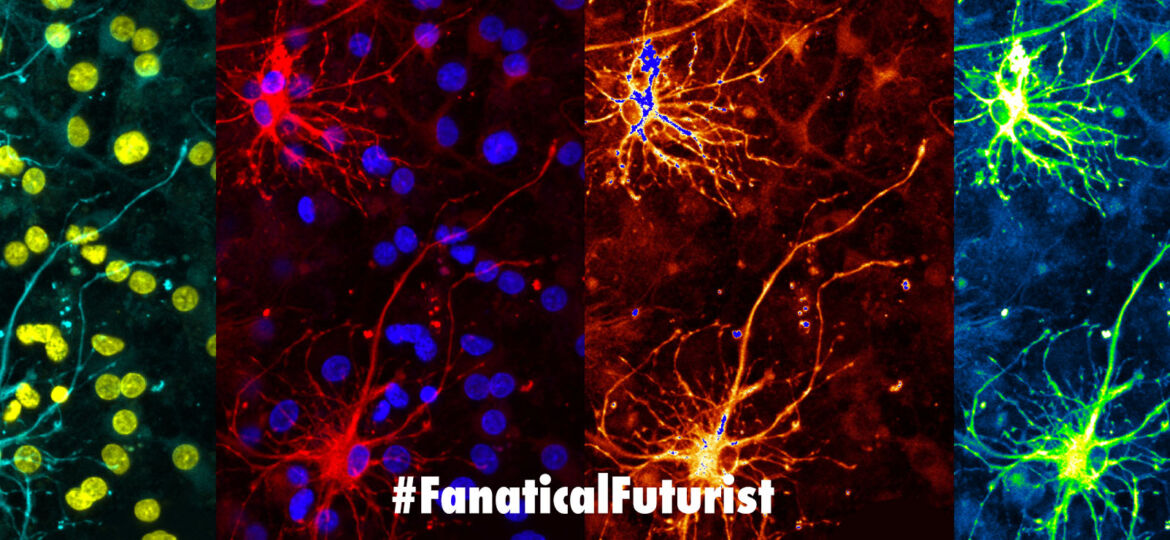

Neuromorphic computers are self-learning computing systems capable of packing all the power of today’s top-of-the-line supercomputers, like Summit, which performs 200 quadrillion calculations per second, into a package the size of a fingernail. Over the past couple of years there have been numerous advances in the field, including the creation of a human brain-like synthetic synapse from NIST that’s over 200 million times faster than our own biological brain circuitry, and new neuromorphic chips like Loihi from Intel.

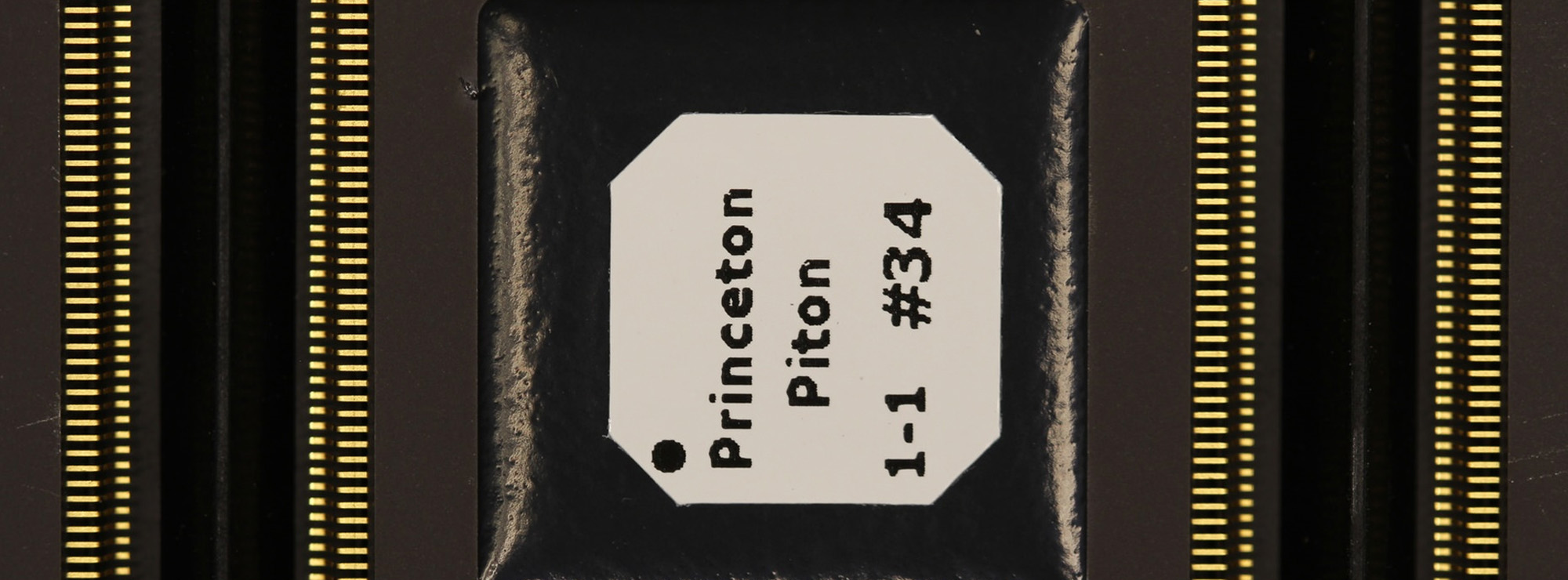

Now, after 12 years of work, researchers at the University of Manchester in the UK, who have also brought us breakthroughs in DNA computers, have gone from blueprints and early experimental prototypes to finally completing “SpiNNaker,” a first-of-a-kind “Spiking Neural Network Architecture” neuromorphic supercomputer that can simulate the internal workings of up to a billion neurons, equivalent to a mouse’s brain, by using a whopping one million processing cores.

It’s staggering tech

The human brain contains approximately 100 billion neurons, exchanging signals through hundreds of trillions of synapses, so it’s orders of magnitude more complex than a mouse’s brain. But while these numbers are staggering, a simulation of the full human brain will need far more than raw processing power. What’s needed is a radical rethinking of the standard architecture on which most computers are built, and that’s what SpiNNaker promises.

“Neurons in the brain typically have several thousand inputs, and some have up to quarter of a million,” said Professor Stephen Furber, who led the SpiNNaker project. “So the issue is communication, not computation. High performance computers are good at sending large chunks of data from one place to another very fast, but what neural modelling requires is sending very small chunks of data, which represent the equivalent of an electrical impulse in a human synapse in the brain, from one place to many others, which is quite a different communication model.”

The researchers tackled this problem by devising a massively parallel architecture in which each of the million cores can send tiny packets of information, each up to just 72 bits in size, that are routed to their destinations by an internal communication network. It’s this architecture that let the team simulate a whole mouse brain. But even this kind of ad-hoc design isn’t nearly enough on its own. To build a proper brain model you’ll also need to get the wiring right.
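To make the "tiny packets, one to many" idea a little more concrete, here is a minimal Python sketch. The only figure taken from the article is the 72-bit ceiling. The split into an 8-bit header, a 32-bit routing key and an optional 32-bit payload, along with the names `pack_spike`, `packet_bits` and `route`, are assumptions made for illustration and are not the team's actual code.

```python
from typing import Dict, List, Optional

# Assumed field widths (illustrative): 8 + 32 + 32 = 72 bits at most.
HEADER_BITS = 8
KEY_BITS = 32
PAYLOAD_BITS = 32


def packet_bits(payload_present: bool) -> int:
    """Size of a packet in bits, with or without the optional payload."""
    return HEADER_BITS + KEY_BITS + (PAYLOAD_BITS if payload_present else 0)


def pack_spike(header: int, key: int, payload: Optional[int] = None) -> int:
    """Pack the fields into one integer, most significant field first."""
    if not 0 <= header < (1 << HEADER_BITS):
        raise ValueError("header does not fit in 8 bits")
    if not 0 <= key < (1 << KEY_BITS):
        raise ValueError("key does not fit in 32 bits")
    packet = (header << KEY_BITS) | key
    if payload is not None:
        if not 0 <= payload < (1 << PAYLOAD_BITS):
            raise ValueError("payload does not fit in 32 bits")
        packet = (packet << PAYLOAD_BITS) | payload
    return packet


def route(key: int, table: Dict[int, List[int]]) -> List[int]:
    """One-to-many delivery: a single key fans out to many destination cores."""
    return table.get(key, [])


if __name__ == "__main__":
    # Hypothetical routing table: the neuron with key 7 feeds three cores.
    table = {7: [101, 102, 103]}
    spike = pack_spike(header=0, key=7)
    print(packet_bits(False), "bit packet ->", route(7, table))
```

The point of the sketch is the shape of the traffic: the packet carries almost nothing except who fired, and the routing table, not the sender, decides how many places it ends up.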

“To build a mouse brain model we needed, in principle, to know every neuron and its connections to every other neuron in the brain,” said Furber. “In practice this is an infeasible amount of data to collect, so we had to settle for statistical distributions of neuron types and statistical connectivity data, so that we can construct a statistically representative brain model.

“Such models do now exist, though they are very rough cut in places – they have been compared to the first attempts to draw a map of the globe, which had highly variable accuracy and missed out Australia altogether as it hadn’t been discovered then.”
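A toy version of that statistical approach might look like the sketch below. Instead of a full wiring diagram it takes only how many neurons of each type exist and how likely one type is to connect to another, then samples a network from those numbers. The type names, counts and probabilities are made up for the example, and `build_statistical_network` is a hypothetical helper, not part of any SpiNNaker software.

```python
import random
from typing import Dict, List, Tuple

Neuron = Tuple[str, int]  # (neuron type, index within that type)


def build_statistical_network(
    counts: Dict[str, int],
    conn_prob: Dict[Tuple[str, str], float],
    seed: int = 0,
) -> Tuple[List[Neuron], List[Tuple[Neuron, Neuron]]]:
    """Sample a network from per-type counts and connection probabilities."""
    rng = random.Random(seed)  # seeded so the sampled model is reproducible
    neurons = [(kind, i) for kind, n in counts.items() for i in range(n)]
    edges = []
    for src in neurons:
        for dst in neurons:
            if src == dst:
                continue  # no self-connections in this toy model
            p = conn_prob.get((src[0], dst[0]), 0.0)
            if rng.random() < p:
                edges.append((src, dst))
    return neurons, edges


if __name__ == "__main__":
    # Illustrative numbers only.
    counts = {"excitatory": 80, "inhibitory": 20}
    conn_prob = {
        ("excitatory", "excitatory"): 0.10,
        ("excitatory", "inhibitory"): 0.20,
        ("inhibitory", "excitatory"): 0.30,
    }
    neurons, edges = build_statistical_network(counts, conn_prob)
    print(len(neurons), "neurons,", len(edges), "sampled connections")
```

No individual connection in the result matches a real brain, but the overall statistics do, which is the sense in which such a model is "statistically representative".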

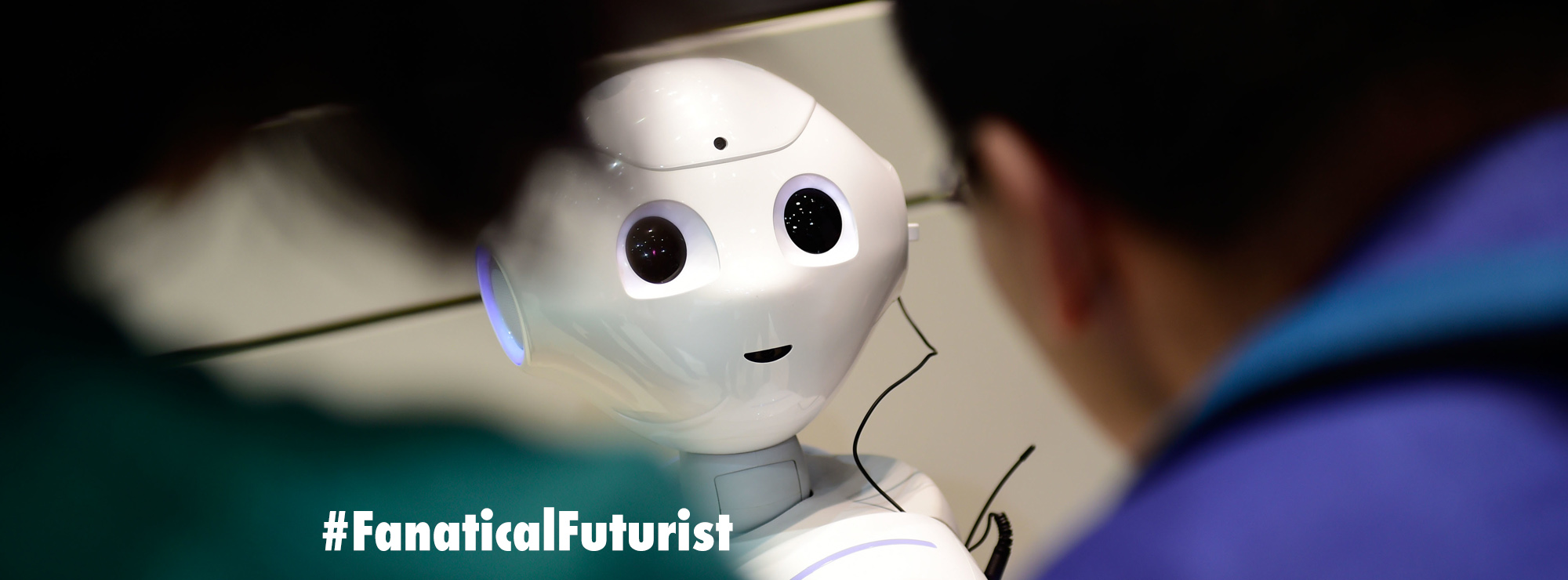

Though this utopia of “one to one neuronal mapping” may not happen anytime soon, even a somewhat rough lay of the land could provide interesting results. For instance, researchers could build a computer model of the visual cortex of a mouse, “show” it an image that would be translated into a stream of spikes down the optic nerve, and learn much about how such a signal is processed by the cortex, even using the output to control the movement of a virtual mouse or a physical robot.
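As a rough illustration of what "translating an image into a stream of spikes" can mean, the sketch below uses simple rate coding, where brighter pixels fire more often. That encoding is an assumption chosen for the example, not something the Manchester team is reported to use, and `image_to_spikes` and its parameters are hypothetical.

```python
import random
from typing import List


def image_to_spikes(
    pixels: List[float],
    steps: int,
    max_prob: float = 0.5,
    seed: int = 0,
) -> List[List[int]]:
    """Rate-code pixel intensities (0.0 to 1.0) as spikes over time.

    Returns, for each time step, the indices of the pixels that fired.
    A pixel fires in a given step with probability intensity * max_prob.
    """
    rng = random.Random(seed)
    train = []
    for _ in range(steps):
        fired = [i for i, v in enumerate(pixels) if rng.random() < v * max_prob]
        train.append(fired)
    return train


if __name__ == "__main__":
    # A four-pixel "image": dark, dim, bright, brightest.
    image = [0.0, 0.2, 0.8, 1.0]
    for t, fired in enumerate(image_to_spikes(image, steps=5)):
        print("step", t, "spiking pixels:", fired)
```

Each entry in the output is the kind of event that would travel through the machine as one small packet.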

Furber says that the system also has the potential to uncover more about how high-level functions such as learning work inside the human brain, something that in itself would be revolutionary as we increasingly attempt to use these new computer systems to unlock the secrets of the human brain and create new treatments for cognitive disorders like Alzheimer’s and dementia.

“We already support a fair amount of work on learning processes at the synaptic level, including dopamine reinforced plasticity which is a biologically plausible form of reinforcement learning. But though putting such local plasticity rules together into a high level brain-like learning system is possible on SpiNNaker, it is stretching our understanding to generate such a system that we can then claim ‘is how the brain learns.'”

The team has already used the system to simulate a region of the brain called the basal ganglia, an area affected in Parkinson’s disease. The technology also has a huge amount of potential to provide advancements in the medical field, particularly with regard to pharmaceutical testing, although the researchers believe the impact of this research on real patients could take decades to materialise.

Furber and his colleagues are now working on a second-generation machine, “SpiNNaker2,” which uses upgraded silicon technology to deliver 10 times the functional density and energy efficiency, enough to let them model a whole insect brain on hardware that could then fit on top of a drone. Or, you could just turn a living dragonfly into a living “cyborg” radio controlled drone like this one… whatever floats your boat. And that’s just the start for what is an incredibly powerful and revolutionary technology.

Source: University of Manchester