WHY THIS MATTERS IN BRIEF

Computer chips that can learn by themselves will revolutionise Artificial Intelligence and let us create machines that are genuinely “intelligent.”

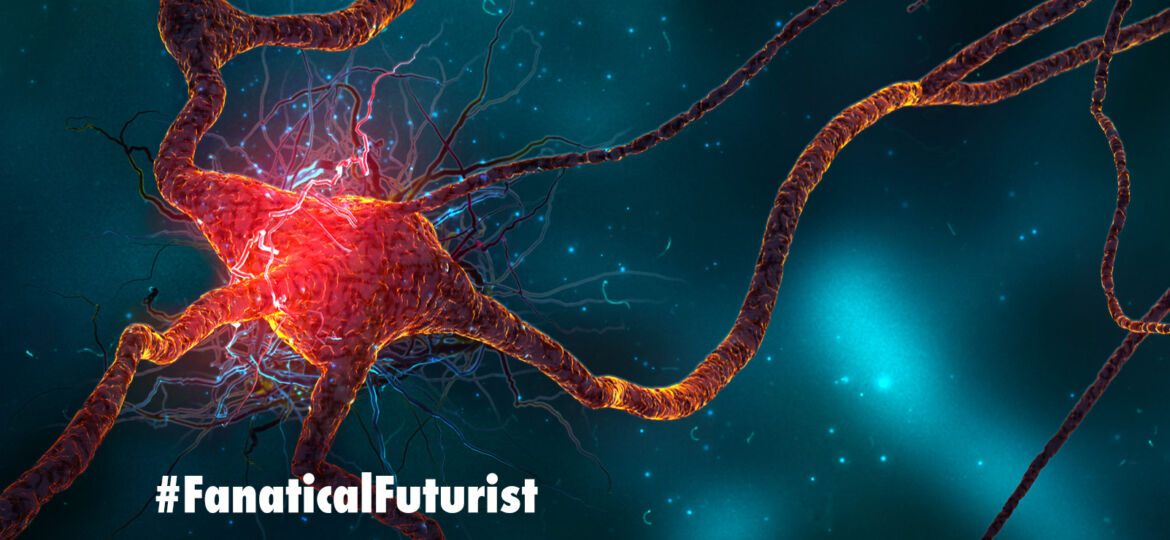

For those working in the field of advanced Artificial Intelligence (AI), getting a computer to simulate how the human brain works is a gargantuan task, but many think it might be easier to achieve if the hardware the scientists are using is designed with a more brain-like architecture in mind in the first place. That idea is behind an emerging field called Neuromorphic Computing, which comes on the back of other computing developments such as Chemical, DNA, Liquid, Molecular, Photonic and Quantum computing. Now engineers at MIT say they believe they’ve overcome a significant hurdle to creating computer chips with artificial “human-like” synapses.

At the moment human brains are much more powerful than any computer on the planet. After all, they contain around 80 billion neurons and over 100 trillion synapses, and they run them all using less power than you’d find in two AA batteries. Today’s computer chips, by contrast, work by transmitting signals in binary 1s and 0s.

To get an idea of how this binary world compares to a human brain, consider this: in 2016 one of the world’s most powerful supercomputers, the Riken K in Japan, tried to simulate the human brain, but the results were less than impressive. It took over forty minutes to simulate one second of brain activity, and it used 82,944 processors and a petabyte of main memory, the equivalent of around 250,000 desktop computers, to do it.
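To put those figures in perspective, here is a quick back-of-the-envelope calculation. It is only a sketch: it treats “over forty minutes” as exactly forty, so the real slowdown would be at least this large, and the one-day extrapolation is a hypothetical that assumes the same rate holds throughout.

```python
# Figures quoted in the article.
simulated_seconds = 1          # one second of brain activity
wall_clock_seconds = 40 * 60   # "over forty minutes", taken here as exactly 40

# Seconds of computing needed per second of simulated brain activity.
slowdown = wall_clock_seconds / simulated_seconds

# Hypothetical extrapolation: simulating one full day at the same rate.
seconds_per_day = 24 * 60 * 60
seconds_per_year = 365 * seconds_per_day
years_for_one_day = slowdown * seconds_per_day / seconds_per_year

print(f"slowdown: at least {slowdown:.0f}x slower than real time")
print(f"one simulated day: about {years_for_one_day:.1f} years of computing")
```

In other words, the supercomputer ran at least 2,400 times slower than the brain activity it was simulating.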

However, if instead of binary the computer chips had synapse-like connections, those results could have been turned on their head. Synapses manage signals as they are transmitted through the brain, and neurons activate depending on the number and type of ions flowing across them. This helps the brain recognise patterns, remember facts, and carry out tasks, but so far replicating human synapses has proven very difficult.

Now, though, researchers at MIT have designed a new chip with artificial synapses made of silicon germanium that let them precisely control the strength of the electrical current flowing along them, just like the ion flow between neurons. In a simulation the new chip was able to recognise handwriting samples, a standard neuromorphic test, with 95 percent accuracy.

Previous designs for neuromorphic chips used two conductive layers separated by an amorphous “switching medium” to act like the synapses. When they were switched on, ions flowed through the medium to create “conductive filaments” that “mimicked synaptic weight,” or, in other words, the strength or weakness of a signal between two neurons.

The problem with this approach, though, is that without defined structures to travel along, the signals have an infinite number of paths they can take. This made the previous chips’ performance inconsistent and unpredictable, which, let’s face it, is rubbish if you’re trying to create a state-of-the-art computer chip.

“Once you apply some voltage to represent some data with your artificial neuron you have to be able to erase it and write it again in the exact same way,” said lead researcher Jeehwan Kim, “but in an amorphous solid, when you write again, the ions go in different directions because there are lots of defects. This stream is constantly changing and it’s hard to control. That’s the biggest problem – non-uniformity of the artificial synapse.”

With this in mind the team created lattices of silicon germanium with one-dimensional channels through which ions can flow, which made sure that the exact same path was used every time. These lattices were then used to build a neuromorphic chip, and when voltage was applied all the artificial synapses on the chip showed the same current. That was the breakthrough: goodbye inconsistency.
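To see why a fixed path matters, here is a toy model of writing the same value to a synapse over and over. It is purely illustrative and is not the researchers’ method: the function names, the 20 percent random spread for the amorphous case, and the perfectly repeatable channel are all assumptions made up for the sketch.

```python
import random
import statistics

def write_amorphous(voltage, rng, spread=0.2):
    # Toy assumption: every write forms a filament along a different path,
    # so the resulting current scatters randomly around the target value.
    return voltage * (1.0 + rng.gauss(0.0, spread))

def write_channelled(voltage):
    # Toy assumption: a fixed one-dimensional channel gives exactly the
    # same current for the same voltage on every write.
    return voltage * 1.0

rng = random.Random(0)   # fixed seed so the run is repeatable
voltage = 1.0            # arbitrary units

amorphous = [write_amorphous(voltage, rng) for _ in range(1000)]
channelled = [write_channelled(voltage) for _ in range(1000)]

print("amorphous spread: ", statistics.pstdev(amorphous))
print("channelled spread:", statistics.pstdev(channelled))
```

In this sketch the channelled writes show no spread at all, while the amorphous writes scatter around the target. That scatter is the kind of non-uniformity Kim describes.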

The team then tested the chip on an actual task, recognising handwriting. Their simulated artificial neural network, which consisted of only three neural sheets separated by two layers of artificial synapses, was able to recognise tens of thousands of handwritten numerals with 95 percent accuracy, compared to the 97 percent accuracy of existing software.
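For readers wondering what “three neural sheets separated by two layers of artificial synapses” looks like in practice, here is a minimal sketch of that shape of network. The sheet sizes, the sigmoid activation, the random untrained weights and the random stand-in image are all assumptions for illustration. The article does not give the team’s actual configuration, and an untrained network like this one will not recognise anything.

```python
import math
import random

def make_synapse_layer(n_in, n_out, rng):
    # One layer of synapses: a weight for every input/output neuron pair.
    return [[rng.uniform(-0.1, 0.1) for _ in range(n_in)] for _ in range(n_out)]

def forward(layer, inputs):
    # Each receiving neuron sums its weighted inputs, then applies a sigmoid.
    return [1.0 / (1.0 + math.exp(-sum(w * x for w, x in zip(row, inputs))))
            for row in layer]

rng = random.Random(0)
n_input, n_hidden, n_output = 784, 32, 10   # hypothetical sheet sizes

# Two synapse layers connect the three sheets of neurons.
synapses_1 = make_synapse_layer(n_input, n_hidden, rng)
synapses_2 = make_synapse_layer(n_hidden, n_output, rng)

image = [rng.random() for _ in range(n_input)]  # stand-in for a 28x28 digit
hidden = forward(synapses_1, image)             # first sheet -> second sheet
scores = forward(synapses_2, hidden)            # second sheet -> third sheet

predicted_digit = scores.index(max(scores))     # highest score wins
print("predicted digit:", predicted_digit)
```

On a neuromorphic chip the weights in each synapse layer would be stored as physical conductances rather than numbers in memory, which is why every synapse has to respond to the same voltage in the same way.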

Now the team say the next step is to actually build a chip that’s capable of carrying out the handwriting recognition task and passing it with flying colours, with the ultimate goal being to create portable neural network devices.

“Ultimately we want a chip as big as a fingernail to replace one big supercomputer,” Kim said, “and this [research] opens a stepping stone to produce real artificial [intelligence] hardware.”

The research has been published in the journal Nature Materials.