WHY THIS MATTERS IN BRIEF

Within the decade a lot more of the content you consume will be made by AI, and after that it could be almost all of it.

Love the Exponential Future? Join our XPotential Community, future proof yourself with courses from XPotential University, read about exponential tech and trends, connect, watch a keynote, or browse my blog.

As I’ve been writing and talking about for years, Artificial Intelligence (AI) is getting better at creating synthetic content, and one day most content, from art, articles, blogs, books, and games to video and beyond, will be made by AI. Most of these AIs use something called “Transformers” to generate their content; in other words, they take one input, such as text, and “transform” it into another, such as an image or video.

Recently Facebook owner Meta caused a storm when it showed off its new Text-to-Video system, but what many in the mainstream media overlooked was another of its tools, a Text-to-Audio system that can generate sounds from a text prompt.

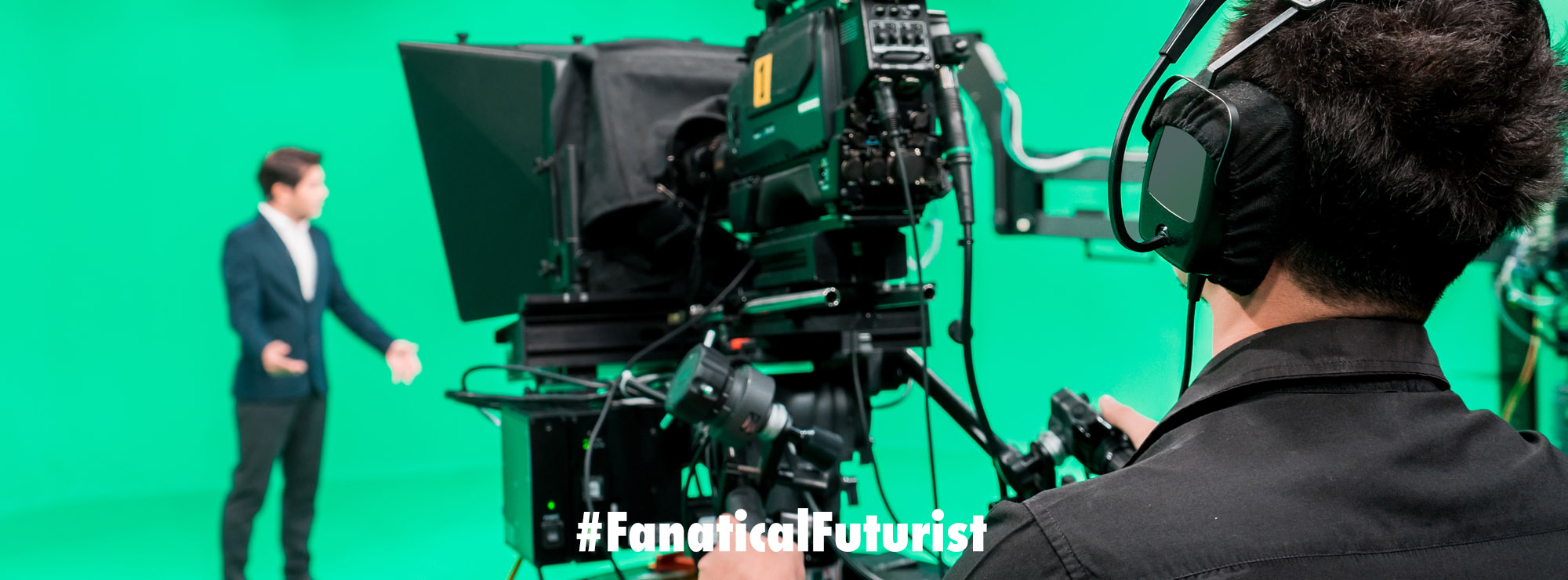

The Future of Synthetic Content keynote, by Matthew Griffin

AudioGen, an AI worked on by Meta and the Hebrew University of Jerusalem, turns text prompts such as “whistling with wind blowing” into an audio file that sounds like the scenario described. It is the sound-based equivalent of popular AI-based systems that generate images from text prompts, such as DALL-E or Midjourney, and that have captured the public imagination.

AudioGen uses a language AI model to help it understand the string of text it is given, then isolates the relevant parts of that text. The AI then uses those parts to generate sounds, which it learned from 10 data sets of common sounds totalling around 4,000 hours of training data. Given a small snippet of music, the model can also generate a longer music track.

“The model can generate a wide variety of audio: ambient sounds, sound events and their compositions,” says Felix Kreuk at Meta AI.

The language model strips out extraneous words, such as prepositions, to identify the items in a scene that are likely to generate sound – for instance, “a dog barking in a park” becomes “dog, bark, park.” A separate model then generates the audio using these key elements.
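To make that keyword step a little more concrete, here is a minimal Python sketch of the idea. It is an illustration only and not Meta’s code: the `STOPWORDS` list, the crude `strip_suffix` rule and the `extract_keywords` function are all hypothetical names I’ve made up for this example, and the real system may do this quite differently.

```python
# Illustrative sketch only: a naive way to reduce a prompt to its
# sound-producing keywords. The stopword list and the suffix rule are
# assumptions made for this example, not taken from AudioGen.
STOPWORDS = {"a", "an", "the", "in", "on", "at", "of", "with", "then", "and", "to"}


def strip_suffix(word):
    """Crudely trim 'ing' or a trailing 's' so 'barking' becomes 'bark'."""
    for suffix in ("ing", "s"):
        if word.endswith(suffix) and len(word) - len(suffix) >= 3:
            return word[: -len(suffix)]
    return word


def extract_keywords(prompt):
    """Lowercase the prompt, drop punctuation and stopwords, trim suffixes."""
    words = [w.strip(".,!?\"'").lower() for w in prompt.split()]
    return [strip_suffix(w) for w in words if w and w not in STOPWORDS]


print(extract_keywords("a dog barking in a park"))  # ['dog', 'bark', 'park']
```

A rule this simple will mangle plenty of words, for example “whistling” comes out as “whistl”, so a real system would need a proper language model or stemmer rather than a hand-written suffix list.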

The quality of the audio created, and the accuracy with which it captures the text prompt, has been measured by people employed through Amazon’s Mechanical Turk platform. The overall quality for the sounds generated by AudioGen was rated at around 70 per cent, compared with 65 per cent for a competing project, Diffsound.

“I think it works very well,” says Mark Plumbley at the University of Surrey, UK, who sees potential uses in video games. Likewise, Plumbley can foresee a future in which TV and movie scores are created using generative models.

At present, AudioGen can’t differentiate between “a dog barks then a child laughs” and “a child laughs then a dog barks,” meaning it can’t sequence sounds through time – yet. “We are working on implementing better data augmentation techniques to handle these obstacles,” says Kreuk.

There could be other issues with models like this. Plumbley wonders who would own the rights for the generated audio, which would be important if the sounds made were used commercially.

How realistic the sounds are is also up for debate.

“We’re seeing more and more applications of sequence-to-sequence transformation – such as Text-to-Image, Text-to-Video and now Text-to-Audio – and they all share the same lack of physical grounding,” says Roger Moore at the University of Sheffield, UK. This means the output of these models can be dreamlike, rather than being strongly tied to reality.

Until that changes, and generative models are able to accurately represent what happens in reality, the media they produce will always lack some utility, says Moore.

“What we’re seeing right now is the first steps in this direction using massive data sets, but we still have a long way to go,” he adds.

From my lofty observation point, though, I’m seeing this “long way to go” being covered faster and faster. So yes, it will be a long journey, but as we’ve seen elsewhere, “tomorrow” is closer than you might think.

Reference: arxiv.org/abs/2209.15352, Audio Examples