WHY THIS MATTERS IN BRIEF

Imagine being able to create videos just by typing a description of what you want to see. That’s what Meta’s latest tech does, and it’s only going to get better.

Love the Exponential Future? Join our XPotential Community, future proof yourself with courses from XPotential University, read about exponential tech and trends, connect, watch a keynote, or browse my blog.

As I first predicted back in 2017, and have continued to predict ever since (I even wrote a book on it), Meta today unveiled an Artificial Intelligence (AI) system that generates short videos from text prompts alone. Just a few years ago these Text-to-Video (T2V) AIs were fairly awful, interesting but awful, and you can now see just how much they’ve progressed in a relatively short space of time.

Make-A-Video, as it’s known, lets you type in a string of words, like “A dog wearing a superhero outfit with a red cape flying through the sky,” and then generates a five-second clip that, while pretty accurate, has the aesthetics of a trippy old home video. But just as those interestingly awful videos from a few years ago, from the likes of Stanford University, have been overtaken, fast forward a few years and these newer synthetic videos will be longer and higher quality.

The Future of Synthetic Content, by Futurist Matthew Griffin

Although the effect is rather crude, the system offers an early glimpse of what’s coming next for generative artificial intelligence. Synthetic video generation is the obvious next step beyond the static images created by the Text-to-Image AI systems that I’ve talked about over the past few years from companies such as Google, OpenAI, and Nvidia.

Meta’s announcement of Make-A-Video, which is not yet available to the public, will likely prompt other AI labs to release their own versions. It also raises some big ethical questions.

In the last month alone, AI lab OpenAI has made its latest Text-to-Image AI system, DALL-E 2, available to everyone, and AI startup Stability.ai launched Stable Diffusion, yet another open source Text-to-Image system.

But Text-to-Video AI comes with even greater challenges. For one, these models need a vast amount of computing power. They are an even bigger computational lift than the largest Text-to-Image AI models, which use millions of images to train, because putting together just one short video not only requires the AI to generate hundreds of images but also requires it to “know” what it’s doing.

As a result, for the foreseeable future it’s really only large tech companies that can afford to build these systems. They’re also trickier to train, because there aren’t currently any large-scale data sets of high-quality videos paired with text metadata.

To work around this, Meta combined data from three open-source image and video data sets to train its model. Standard text-image data sets of labelled still images helped the AI learn what objects are called and what they look like. And a database of videos helped it learn how those objects are supposed to move in the world. The combination of the two approaches helped Make-A-Video, which is described in a non-peer-reviewed paper published today, generate videos from text at scale.

Tanmay Gupta, a computer vision research scientist at the Allen Institute for Artificial Intelligence, says Meta’s results are promising. The videos it’s shared show that the model can capture 3D shapes as the camera rotates. The model also has some notion of depth and an understanding of lighting. Gupta says some details and movements are decently done and convincing, much as we saw recently with Nvidia’s AI.

However, “there’s plenty of room for the research community to improve on, especially if these systems are to be used for video editing and professional content creation,” he adds. In particular, it’s still tough to model complex interactions between objects and characters.

In the video generated by the text prompt “An artist’s brush painting on a canvas,” the brush moves over the canvas, but strokes on the canvas aren’t realistic.

“I would love to see these models succeed at generating a sequence of interactions, such as ‘The man picks up a book from the shelf, puts on his glasses, and sits down to read it while drinking a cup of coffee,’” Gupta says.

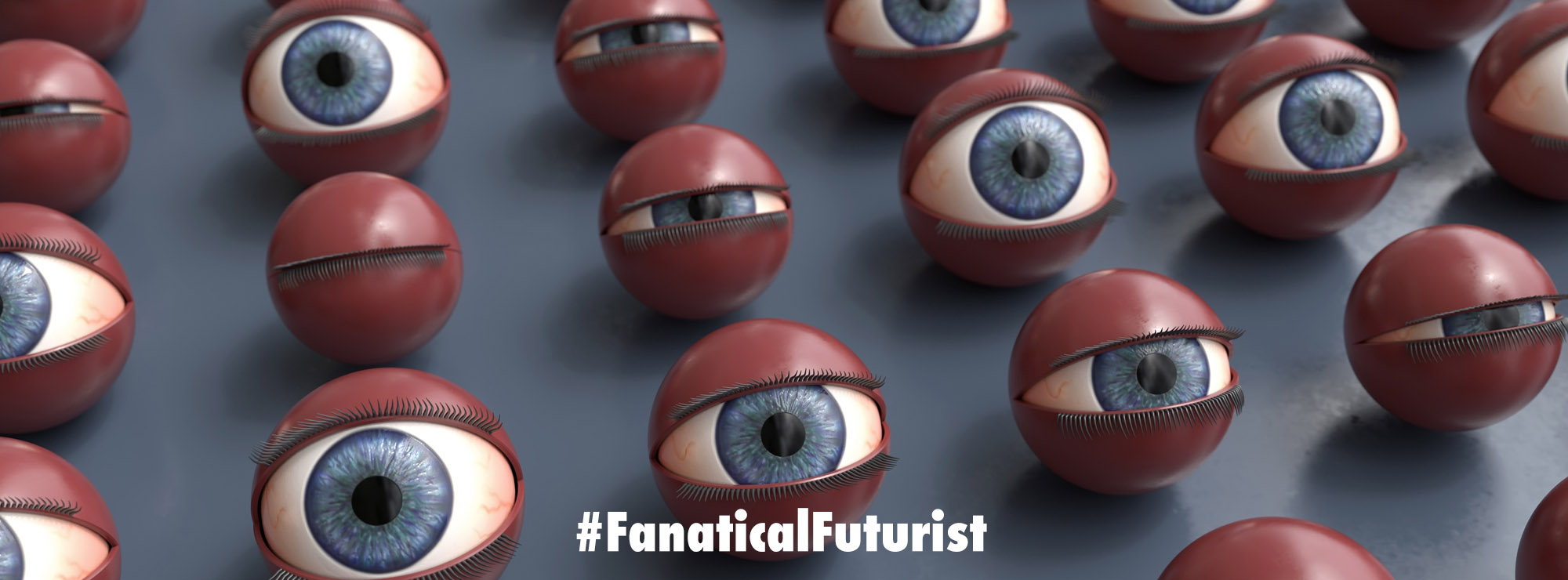

For its part, Meta promises that the technology could “open new opportunities for creators and artists” and democratise content creation. But as the technology develops, there are fears it could be harnessed as a powerful tool to create and disseminate misinformation and deepfakes.

It will also make it even more difficult to differentiate between real and fake content online. Meta’s model ups the stakes for generative AI not only technically and creatively but also “in terms of the unique harms that could be caused through generated video as opposed to still images,” says Henry Ajder, an expert on synthetic media.

“At least today, creating factually inaccurate content that people might believe in requires some effort,” Gupta says. “In the future, it may be possible to create misleading content with a few keystrokes.”

And I myself would say “will,” not “may.”

The researchers who built Make-A-Video filtered out offensive images and words, but with data sets that consist of millions and millions of words and images, it is almost impossible to fully remove biased and harmful content.

A spokesperson for Meta says it is not making the model available to the public yet, and that “as part of this research, we will continue to explore ways to further refine and mitigate potential risks.”

Source: Facebook