WHY THIS MATTERS IN BRIEF

Being able to reconstruct the images people are thinking about in a lab environment is one thing, but doing it in real time using dynamic content is a completely different level of complexity.

Interested in the Exponential Future? Connect, download a free E-Book, watch a keynote, or browse my blog.

Over the past couple of years I’ve talked a lot in my keynotes about how we’re combining technologies such as Artificial Intelligence (AI) and an assortment of invasive and non-invasive Brain Machine Interfaces (BMI) with good old human ingenuity to communicate telepathically with machines, such as F-35 fighter jets, and with other people, to create “hive minds,” read people’s thoughts, transfer images into other people’s heads, and play games in ways few people ever thought possible. Now another of my predictions, that we will “soon” be able to live stream dynamic thoughts (thoughts that aren’t just laboratory control experiments) to TV, is coming true, and it’s an amazing advance over the technology that’s come before.

Truth be told, though, as fancy as it might sound to predict that one day we’ll genuinely be able to live stream our brains to YouTube in high definition, it’s not that difficult a prediction to make. After all, it’s just a natural next step from the work that’s already been done in Japan where, for example, people in a lab recently watched geometric shapes on a screen while an AI attached to a BMI interpreted their brainwaves, decoded them, and streamed them to TV.

Your mind on TV

Being able to decode and stream people’s mental images and thoughts to a TV without having to use an invasive BMI, like the ones Elon Musk and Neuralink are developing in order to “connect people to AI,” has long been the stuff of science fiction. Now, though, improving on the Japanese team’s breakthrough, researchers from the Russian company Neurobotics and the Moscow Institute of Physics and Technology (MIPT) have found a way to visualise and stream more complex brain wave patterns as actual images in near real time, without invasive brain implants. And, while it might not seem like it, it’s a giant step forward.

However, while the Russian team’s work has the potential to help patients with all manner of neurodegenerative disorders in the future, the neurobiologists first need to understand how the brain encodes information by studying it in real time. That’s what they did in this study, by watching people watching videos and by developing a new type of BMI.

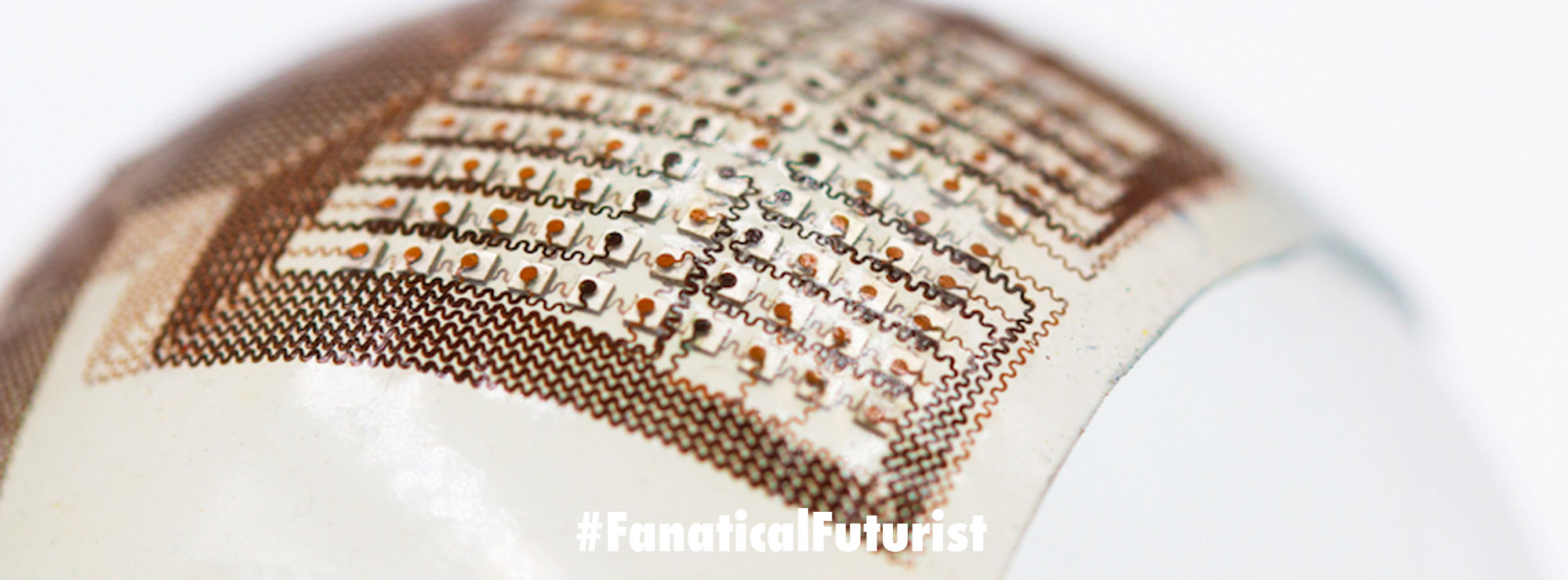

Using AI neural networks and Electroencephalography (EEG), a technique for recording brain waves via electrodes placed non-invasively on the scalp, the team was able to reconstruct and visualise the videos the test subjects were watching in real time, as you can see from the video.

“We’re working on the Assistive Technologies project of Neuronet of the National Technology Initiative, which focuses on the brain machine interfaces that enable post-stroke patients to control an arm exoskeleton for neurorehabilitation purposes, or paralysed patients to drive, for example, an electric wheelchair. The ultimate goal is to increase the accuracy of neural control for healthy individuals, too,” said Vladimir Konyshev, who heads the Neurorobotics Lab at MIPT.

During their experiment, the neurobiologists first asked subjects to watch YouTube video fragments from five arbitrary video categories while EEG data was collected. The EEG data showed that the brain wave patterns were distinct for each category of video, enabling the team to analyse the brain’s response to videos in real time. Then, in the second phase of the experiment, the researchers developed two neural networks. The first generated random category-specific images from “noise,” and the second generated similar “noise” from the EEG readout. The networks were then combined so that EEG signals could be turned into actual images.
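To make the two-network idea a little more concrete, here is a deliberately tiny Python sketch. It is not the researchers’ code: the three toy categories, the fake EEG features, the 2-D “noise” codes, the 4x4 “images,” and every function name are assumptions made up for illustration. A fixed function stands in for the image generator, and a small linear map, trained with plain gradient descent, stands in for the network that turns EEG readings into “noise.”

```python
import random

# Toy stand-in for the two-network pipeline described above.
# Every name, size, and number here is an illustrative assumption.
rng = random.Random(0)

# Hypothetical latent "noise" codes, one per toy video category.
LATENT_CODES = {0: (1.0, 0.0), 1: (0.0, 1.0), 2: (-1.0, -1.0)}

# Hypothetical EEG feature prototypes: each category excites one feature.
EEG_PROTOTYPES = {0: (1.0, 0.0, 0.0), 1: (0.0, 1.0, 0.0), 2: (0.0, 0.0, 1.0)}


def fake_eeg(category, noise=0.05):
    """Simulate a noisy EEG feature vector for one category."""
    return [v + rng.gauss(0.0, noise) for v in EEG_PROTOTYPES[category]]


def decode_image(z):
    """Network 1 stand-in: turn a 2-D latent vector into a 4x4 'image'."""
    return [[z[0] * (r - 1.5) + z[1] * (c - 1.5) for c in range(4)]
            for r in range(4)]


def train_eeg_encoder(samples, epochs=50, lr=0.1):
    """Network 2 stand-in: fit a linear EEG -> latent map with SGD."""
    weights = [[0.0] * 3 for _ in range(2)]  # 2 latent dims x 3 EEG features
    for _ in range(epochs):
        for eeg, target in samples:
            for i in range(2):
                pred = sum(w * x for w, x in zip(weights[i], eeg))
                err = pred - target[i]
                # Gradient step on the squared error for this sample.
                weights[i] = [w - lr * err * x
                              for w, x in zip(weights[i], eeg)]
    return weights


def encode_eeg(weights, eeg):
    """Map EEG features to a latent vector using the trained weights."""
    return tuple(sum(w * x for w, x in zip(row, eeg)) for row in weights)


def nearest_category(z):
    """Pick the category whose latent code is closest to z."""
    return min(LATENT_CODES,
               key=lambda cat: sum((a - b) ** 2
                                   for a, b in zip(z, LATENT_CODES[cat])))


def eeg_to_image(weights, eeg):
    """Combined pipeline: EEG -> latent 'noise' -> image."""
    return decode_image(encode_eeg(weights, eeg))


# Train on ten simulated recordings per category.
training = [(fake_eeg(cat), LATENT_CODES[cat])
            for cat in LATENT_CODES for _ in range(10)]
weights = train_eeg_encoder(training)

# Run a fresh, previously unseen simulated recording through the pipeline.
image = eeg_to_image(weights, fake_eeg(1))
```

The point of the sketch is only the shape of the pipeline: once one model can draw images from a compact code, the second model just has to learn to produce that code from brain signals.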

To test the new system, the subjects were shown previously unseen videos while their EEGs were recorded and fed to the neural networks. Astonishingly, the system produced images that could be easily categorized in 90 percent of cases.

“The electroencephalogram is a collection of brain signals recorded from the scalp. Researchers used to think that studying brain processes via EEG is like figuring out the internal structure of a steam engine by analyzing the smoke left behind by a steam train,” explained paper co-author Grigory Rashkov, a junior researcher at MIPT and a programmer at Neurobotics. “We did not expect that it contains sufficient information to even partially reconstruct an image observed by a person. Yet it turned out to be quite possible.”

“What’s more, we can use this as the basis for a brain machine interface operating in real time. It’s fairly reassuring. Under present-day technology, the invasive neural interfaces envisioned by Elon Musk [and his Neuralink subsidiary] face the challenges of complex [robotic] surgery and rapid deterioration due to natural processes — they oxidize and fail within several months. We hope we can eventually design more affordable neural interfaces that do not require implantation,” the researcher added.

The study was published as a preprint on bioRxiv, and, frankly, fast forward this technology five to ten years and it’s going to be, to use a pun, mind blowing!
