WHY THIS MATTERS IN BRIEF

- Imagine a new form of communication, one that transcends phones and virtual reality. Imagine telepathy.

I know what you’re thinking… literally. Only kidding – for now anyway. Imagine being able to read another person’s mind. Then imagine a question-and-answer game played by two people who are not in the same place and not talking to each other. Round after round, one player asks a series of questions and accurately guesses what the other is thinking about.

Sci-fi? Mind reading superpowers? Not quite.

University of Washington researchers recently used a direct brain-to-brain connection to let pairs of participants play a question-and-answer game by transmitting signals from one brain to the other over the Internet. The technology is raw and still far from perfected but, like most technology, it should improve with time.

The experiment is the first of its kind to show that two brains can be directly linked to allow one person to guess what’s on another person’s mind.

“This is the most complex brain-to-brain experiment, I think, that’s been done to date in humans,” said lead author Andrea Stocco, an assistant professor of psychology and a researcher at UW’s Institute for Learning & Brain Sciences.

“It uses conscious experiences through signals that are experienced visually, and it requires two people to collaborate,” Stocco said.

How it works

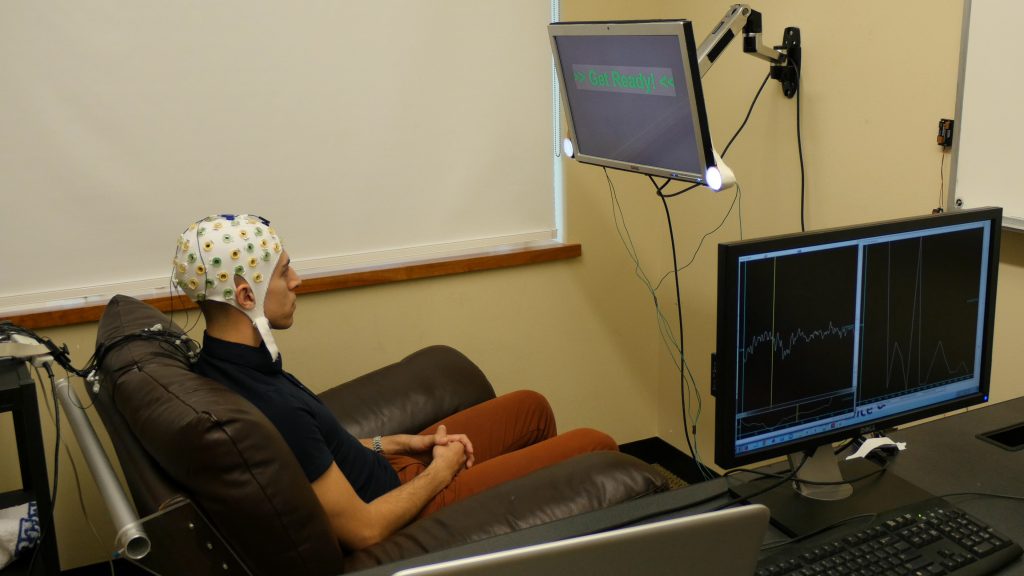

The first participant, or “respondent,” wears a cap connected to an electroencephalography (EEG) machine that records electrical brain activity. The respondent is shown an object (for example, a cat) on a computer screen, and the second participant, or “inquirer,” sees a list of possible objects and associated questions.

With the click of a mouse, the inquirer sends a question and the respondent answers “yes” or “no” by focusing on one of two flashing LED lights attached to the monitor, which flash at different frequencies.
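To make the “flashing lights” step more concrete: when someone stares at a light flickering at a fixed rate, EEG recorded over the visual cortex tends to show extra activity at that same rate, so software can compare the signal strength at the two flicker rates to decide which light the respondent was watching. The sketch below illustrates that idea on synthetic data only. The 13 Hz and 12 Hz flicker rates, the 256 Hz sample rate, the two-second window and all function names are assumptions made for illustration, not details from the article, and this is not the UW team’s actual decoder.

```python
import math
import random


def band_power(samples, freq_hz, sample_rate_hz):
    """Signal power at one frequency, via a single-bin Fourier correlation."""
    re = sum(s * math.cos(2 * math.pi * freq_hz * i / sample_rate_hz)
             for i, s in enumerate(samples))
    im = sum(s * math.sin(2 * math.pi * freq_hz * i / sample_rate_hz)
             for i, s in enumerate(samples))
    return (re * re + im * im) / len(samples) ** 2


def decode_answer(samples, sample_rate_hz, yes_hz, no_hz):
    """Return "yes" if the "yes" flicker rate dominates the recording, else "no"."""
    yes_power = band_power(samples, yes_hz, sample_rate_hz)
    no_power = band_power(samples, no_hz, sample_rate_hz)
    return "yes" if yes_power > no_power else "no"


def synthetic_eeg(target_hz, sample_rate_hz=256, seconds=2.0, noise=1.0, seed=0):
    """Fake recording: a sine wave at the attended flicker rate plus Gaussian noise."""
    rng = random.Random(seed)
    n = int(sample_rate_hz * seconds)
    return [math.sin(2 * math.pi * target_hz * i / sample_rate_hz) + rng.gauss(0, noise)
            for i in range(n)]


if __name__ == "__main__":
    YES_HZ, NO_HZ = 13.0, 12.0  # assumed flicker rates, for illustration only
    recording = synthetic_eeg(YES_HZ)
    print(decode_answer(recording, 256, YES_HZ, NO_HZ))
```

Real EEG is far noisier than this toy signal, which is one reason a respondent who glances at both lights can produce a wrong answer.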

Both “yes” and “no” answers send a signal to the inquirer via the Internet and activate a magnetic coil positioned behind the inquirer’s head. But only a “yes” answer generates a response intense enough to stimulate the inquirer’s visual cortex and cause them to see a “flash of light” known as a “phosphene.”

The phosphene, which might look like a blob, waves or a thin line, is created by a brief disruption in the inquirer’s visual field and tells them the answer is yes. Think of it as someone shining a flashlight in your eyes when the answer is yes. By using the answers to these simple yes or no questions, the inquirer is able to identify the correct item.

Image 1: One of the participants

The experiment was carried out in dark rooms in two UW labs located almost a mile apart and involved five pairs of participants, who played 20 rounds of the question-and-answer game. Each game had eight objects and three questions that would solve the game if answered correctly. The sessions were a random mixture of 10 real games and 10 control games that were structured the same way.
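Why three questions for eight objects? Each yes-or-no answer can halve the list of candidates, and 2 × 2 × 2 = 8, so three well-chosen questions are just enough to single out one item. Here is a minimal sketch of that narrowing process. The article does not list the actual questions or objects beyond the cat example, so the ones below are invented purely for illustration.

```python
# Hypothetical questions and items; the real ones are not given in the article.
QUESTIONS = ("Is it an animal?", "Can it fly?", "Is it usually found in a home?")

# Each item maps to its (animal, flies, in_home) answers. All eight profiles are distinct.
ITEMS = {
    "parrot":     (True,  True,  True),
    "eagle":      (True,  True,  False),
    "cat":        (True,  False, True),
    "shark":      (True,  False, False),
    "toy drone":  (False, True,  True),
    "airliner":   (False, True,  False),
    "sofa":       (False, False, True),
    "lighthouse": (False, False, False),
}


def narrow(candidates, question_index, answer):
    """Keep only the candidates whose answer to this question matches."""
    return [name for name in candidates if ITEMS[name][question_index] == answer]


def play(answers):
    """Apply the three yes/no answers in order and return the remaining candidates."""
    candidates = list(ITEMS)
    for question_index, answer in enumerate(answers):
        candidates = narrow(candidates, question_index, answer)
    return candidates


if __name__ == "__main__":
    # "yes" = phosphene seen, "no" = nothing seen
    print(play((True, False, True)))
```

The same logic shows why a single misread answer is costly: flip any one of the three answers and the inquirer lands on a different object.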

The researchers took steps to ensure participants couldn’t use clues other than direct brain communication to complete the game. Inquirers wore earplugs so they couldn’t hear the different sounds produced by the varying stimulation intensities of the “yes” and “no” responses. Since noise travels through the skull bone, the researchers also changed the stimulation intensities slightly from game to game and randomly used three different intensities each for “yes” and “no” answers to further reduce the chance that sound could provide clues.

The researchers also repositioned the coil on the inquirer’s head at the start of each game, but for the control games, added a plastic spacer undetectable to the participant that weakened the magnetic field enough to prevent the generation of phosphenes. Inquirers were not told whether they had correctly identified the items, and only the researcher on the respondent end knew whether each game was real or a control round.

“We took many steps to make sure that people were not cheating,” Stocco said.

Participants were able to guess the correct object in 72 percent of the real games, compared with just 18 percent of the control rounds. Incorrect guesses in the real games could be caused by several factors, the most likely being uncertainty about whether a phosphene had appeared.
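For a rough sense of how far those numbers sit from blind luck, here is a back-of-the-envelope check. It assumes things the article does not state: that all five pairs’ games can be pooled into 50 real and 50 control games, that games are independent, and that a player with no information would pick one of the eight objects uniformly at random (a 1-in-8 chance). It is a sketch under those assumptions, not the statistical analysis the researchers used.

```python
from math import comb


def binomial_tail(n, k, p):
    """P(X >= k) for X ~ Binomial(n, p): the chance of k or more lucky guesses."""
    return sum(comb(n, i) * p ** i * (1 - p) ** (n - i) for i in range(k, n + 1))


PAIRS = 5
GAMES_PER_CONDITION = 10              # 10 real and 10 control games per pair
n = PAIRS * GAMES_PER_CONDITION       # 50 pooled games per condition (assumption)
CHANCE = 1 / 8                        # uniform guessing among eight objects (assumption)

real_correct = round(0.72 * n)        # 72 percent of 50 games
control_correct = round(0.18 * n)     # 18 percent of 50 games

if __name__ == "__main__":
    print("real games:   ", real_correct, "correct, tail probability",
          binomial_tail(n, real_correct, CHANCE))
    print("control games:", control_correct, "correct, tail probability",
          binomial_tail(n, control_correct, CHANCE))
```

Under these assumptions the real-game score is essentially impossible to reach by guessing, while the control score is within the range that guessing alone could plausibly produce.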

“They have to interpret something they’re seeing with their brains,” said co-author Chantel Prat, a faculty member at the Institute for Learning & Brain Sciences and a UW associate professor of psychology. “It’s not something they’ve ever seen before.”

Errors can also result from respondents not knowing the answers to questions or focusing on both answers, or from the brain signal transmission being interrupted by hardware problems.

“While the flashing lights are signals that we’re putting into the brain, those parts of the brain are doing a million other things at any given time too,” Prat said.

The study builds on the UW team’s initial experiment in 2013, when it was the first to demonstrate a direct brain-to-brain connection between humans. Other scientists have connected the brains of rats and monkeys, and transmitted brain signals from a human to a rat, using electrodes inserted into animals’ brains. In the 2013 experiment, the UW team used noninvasive technology to send a person’s brain signals over the Internet to control the hand motions of another person.

The experiment evolved out of research by co-author Rajesh Rao, a UW professor of computer science and engineering, on brain-computer interfaces that enable people to activate devices with their minds. In 2011, Rao began collaborating with Stocco and Prat to determine how to link two human brains together.

In 2014, the researchers received a $1 million grant from the W.M. Keck Foundation that allowed them to broaden their experiments to decode more complex interactions and brain processes. They are now exploring the possibility of “brain tutoring”: transferring signals directly from healthy brains to ones that are developmentally impaired or affected by external factors such as a stroke or accident, or simply transferring knowledge from teacher to pupil.

The team is also working on transmitting brain states — for example, sending signals from an alert person to a sleepy one, or from a focused student to one who has attention deficit hyperactivity disorder, or ADHD.

“Imagine having someone with ADHD and a neurotypical student,” Prat said.

“When the non-ADHD student is paying attention, the ADHD student’s brain gets put into a state of greater attention automatically.”

Many technological advancements over the past century, from the telegraph to the Internet, were created to facilitate communication between people. The UW team’s work takes a different approach, using technology to strip away the need for such intermediaries.

“Evolution has spent a colossal amount of time to find ways for us and other animals to take information out of our brains and communicate it to other animals in the forms of behavior, speech and so on,” Stocco said.

“But it requires a translation. We can only communicate part of whatever our brain processes.

“What we are doing is kind of reversing the process a step at a time by opening up this box and taking signals from the brain and with minimal translation, putting them back in another person’s brain,” he said.