WHY THIS MATTERS IN BRIEF

The brain is one of the most complicated objects in the universe, but scientists are now managing to decode it to stream our dreams, emotions and thoughts.

Functional Magnetic Resonance Imaging (fMRI) scanners are an intriguing technology. After all, how many other technologies can claim to be able to look inside people’s brains, extract people’s thoughts and emotions and put them on the small screen for everyone to see? If you ever wanted to take up mind reading then, in short, fMRI is your technology of choice.

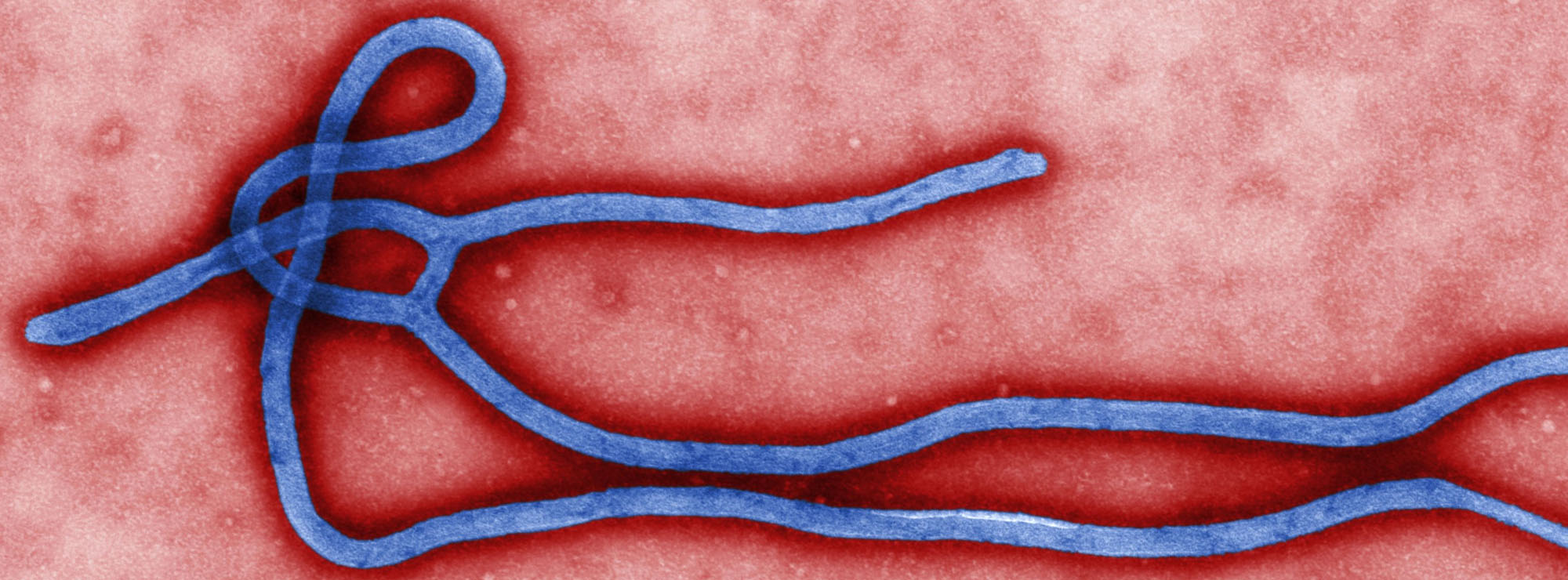

First, a science lesson. fMRI scanners work by detecting the minutest changes in blood oxygenation in the brain’s blood vessels, and it’s this precision which makes them so valuable. When neurons and synapses in the human brain are active they consume more energy, and the oxygen needed to supply that energy is delivered courtesy of the haemoglobin molecules inside the circulating red blood cells. When haemoglobin gives up its oxygen it not only changes colour, from bright arterial red to a darker venous red, but it also turns slightly magnetic – and it’s these minute magnetic changes that are picked up by the fMRI scanner.

As you can imagine, it’s no surprise that fMRI scanners are a neuroscientist’s dream tool – literally and figuratively – and that researchers use them to decipher what volunteers are seeing, imagining or intending to do.

In short, they’re used to read minds, in real time. Scientists in the past have used them to pull images from people’s heads, as well as “find” the centre of our own consciousness, and since 2011, thanks to a team at the University of California, Berkeley, they’ve been used to pull movies out of people’s heads too. Imagine tapping into the mind of a coma patient, or watching your own dreams and thoughts on YouTube, and you have an idea of the potential.

Using a combination of fMRI and artificial intelligence (AI) to improve their computational models, the researchers, led by UC Berkeley neuroscientist Professor Jack Gallant, successfully decoded and reconstructed three volunteers’ “dynamic visual experiences,” which in this case were a series of Hollywood movie trailers.

In plain English they managed to recreate the movies their volunteers watched, and recreate them on the small screen – by pulling them straight out of their heads.

“Our natural visual experience is like watching a movie,” said Shinji Nishimoto, a postdoctoral researcher in Gallant’s lab, “and for this technology to have wide applicability, we must first understand how the brain processes these dynamic visual experiences.”

Nishimoto and two other research team members were the test subjects in the experiment, because the procedure required volunteers to remain still inside the MRI scanner for hours at a time.

While they were in the scanner they watched two sets of Hollywood movie trailers, and the fMRI scanner measured the blood flow through their visual cortex – the part of the brain that processes visual information. On the computer, each volunteer’s brain was divided into small 3D cubes known as volumetric pixels, or “voxels.”

“We built a model for each voxel that describes how shape and motion information in the movie is mapped into brain activity,” Nishimoto said.

The brain activity recorded while subjects viewed the first set of clips was then fed into an AI computer model that learned, second by second, to associate the visual patterns in the movie with the corresponding brain activity.
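To make the idea of “a model for each voxel” a little more concrete, here is a minimal sketch in Python. It is not the Berkeley team’s code: it assumes the movie has already been boiled down to a handful of numeric features per second, uses plain ridge regression as a stand-in for whatever learning method the lab actually used, and runs on made-up synthetic data. The names `fit_voxel_models` and `predict_activity` are hypothetical.

```python
import numpy as np


def fit_voxel_models(features, activity, ridge=1.0):
    """Fit one linear model per voxel using ridge regression.

    features: (n_seconds, n_features) movie features, one row per second.
    activity: (n_seconds, n_voxels) measured response of each voxel.
    Returns weights of shape (n_features, n_voxels); column v is voxel v's model.
    """
    n_features = features.shape[1]
    # Regularised normal equations: (X^T X + ridge * I) W = X^T Y
    gram = features.T @ features + ridge * np.eye(n_features)
    return np.linalg.solve(gram, features.T @ activity)


def predict_activity(features, weights):
    """Predict every voxel's response to a new set of movie features."""
    return features @ weights


# Synthetic stand-in data (an assumption for illustration, not real fMRI).
rng = np.random.default_rng(0)
n_seconds, n_features, n_voxels = 200, 5, 3
true_weights = rng.normal(size=(n_features, n_voxels))
train_features = rng.normal(size=(n_seconds, n_features))
noise = 0.1 * rng.normal(size=(n_seconds, n_voxels))
train_activity = train_features @ true_weights + noise

# "Training": learn how movie features map onto each voxel's activity.
weights = fit_voxel_models(train_features, train_activity, ridge=1.0)

# Use the fitted models to predict responses to clips they have never seen.
new_features = rng.normal(size=(10, n_features))
predicted = predict_activity(new_features, weights)
```

Each column of `weights` plays the role of one voxel’s model, and on this toy data the fitted weights land close to the ones used to generate it.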

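The brain activity evoked by the second set of clips was then used to test the model. To do this, the team fed the model over 18 million seconds of random YouTube videos so it could predict the brain activity that each film clip would most likely evoke in each subject. The clips whose predicted activity best matched what the scanner actually recorded were then merged to produce a reconstruction of the original movie.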
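One plausible way to put those predictions to work, sketched here as an assumption rather than a description of the team’s actual pipeline, is to score every library clip by how closely its predicted brain activity correlates with the activity the scanner measured, and then average the frames of the best matches. The function names, the tiny 8×8 “frames” and the choice of five top clips are all hypothetical, and the data is synthetic.

```python
import numpy as np


def rank_clips(measured, predicted):
    """Rank library clips by how well their predicted activity matches.

    measured: (n_voxels,) activity recorded while the subject watched a clip.
    predicted: (n_clips, n_voxels) model predictions for each library clip.
    Returns (order, corr): clip indices from best to worst match, and the
    Pearson correlation of each clip's prediction with the measurement.
    """
    m = measured - measured.mean()
    p = predicted - predicted.mean(axis=1, keepdims=True)
    corr = (p @ m) / (np.linalg.norm(p, axis=1) * np.linalg.norm(m))
    return np.argsort(corr)[::-1], corr


def reconstruct(frames, order, top_k):
    """Average the frames of the top_k best-matching clips."""
    return frames[order[:top_k]].mean(axis=0)


# Synthetic stand-in library (an assumption for illustration).
rng = np.random.default_rng(1)
n_clips, n_voxels = 50, 40
predicted = rng.normal(size=(n_clips, n_voxels))
frames = rng.random(size=(n_clips, 8, 8))  # one tiny greyscale frame per clip

# Pretend the subject watched something that looks like library clip 7.
seen = 7
measured = predicted[seen] + 0.2 * rng.normal(size=n_voxels)

order, corr = rank_clips(measured, predicted)
reconstruction = reconstruct(frames, order, top_k=5)
```

Averaging several different clips is also a simple way to see why a reconstruction built like this would come out blurry: the result is a blend of footage rather than a copy of any one clip.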

Reconstructing movies using brain scans has been challenging up until now – and let’s face it, it’s still challenging – because the blood flow signals measured using fMRI change much more slowly than the neural signals that encode the dynamic information in movies. This is why almost every attempt to decode brain activity in the past has focused purely on still images.

“We tried to address these problems by developing a two-stage model that separately describes the underlying neural population and blood flow signals,” Nishimoto said. “Ultimately, scientists need to understand how the brain processes dynamic visual events that we experience in everyday life, and we need to know how the brain works under natural conditions – for that, we need to first understand how the brain works while watching movies.”
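To see why the slow blood flow signal is such a headache, it helps to play with a toy version of a two-stage model. The sketch below is illustrative only and is not the lab’s model: stage one is a fast neural signal, and stage two smears it out by convolving it with a sluggish, gamma-shaped blood flow response. The curve’s shape, its roughly five second peak and the function names are all assumptions chosen for the example.

```python
import numpy as np


def hemodynamic_response(duration_s=20.0, dt=1.0, peak_s=5.0):
    """Gamma-shaped response curve peaking peak_s seconds after a neural event.

    The shape parameter is an illustrative assumption, not a fitted value.
    """
    t = np.arange(0.0, duration_s, dt)
    shape = 6.0
    scale = peak_s / (shape - 1.0)  # a gamma curve peaks at (shape - 1) * scale
    h = t ** (shape - 1.0) * np.exp(-t / scale)
    return h / h.sum()  # normalise so the response sums to 1


def bold_signal(neural, hrf):
    """Stage two: blood flow signal = neural activity convolved with the response."""
    return np.convolve(neural, hrf)[: len(neural)]


# Stage one: a brief burst of neural activity two seconds into the clip.
neural = np.zeros(30)
neural[2] = 1.0

hrf = hemodynamic_response()
bold = bold_signal(neural, hrf)

burst_time = int(np.argmax(neural))  # when the neurons fired
peak_time = int(np.argmax(bold))     # when the blood flow signal peaks
```

In this toy setup a one second burst of neural activity turns into a blood flow bump that peaks several seconds later and lingers for many more, which is the mismatch a movie decoder has to untangle.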

At the moment the technology can only reconstruct movie clips people have already watched, not dreams or memories, and the results are blurry. In the future, though, it’s hoped that it will be able to dynamically decode and reconstruct other movies inside our heads, such as our dreams and memories.

“This is a major leap toward reconstructing the internal imagery from the deepest recesses of the human mind,” said Professor Gallant. “We are opening a window into the movies in our minds.”

Eventually, it’s hoped that the technology, which has now been under development for six years, will be used to help coma patients and patients with locked-in syndrome, as well as stroke victims and people with neurodegenerative diseases. It may also lay the groundwork for new types of brain-machine interfaces (BMIs), and open the door to full streaming telepathy.

However, the researchers point out that the technology is decades from being commercialised, so for now you’ll just have to stick to watching regular YouTube movies.