WHY THIS MATTERS IN BRIEF

We all too often think of things in one-dimensional terms, but what happens when computers can run inside computers, AIs can run inside AIs, or both can run inside each other? And evolve themselves … ?

Love the Exponential Future? Join our XPotential Community, future proof yourself with courses from XPotential University, read about exponential tech and trends, connect, watch a keynote, or browse my blog.

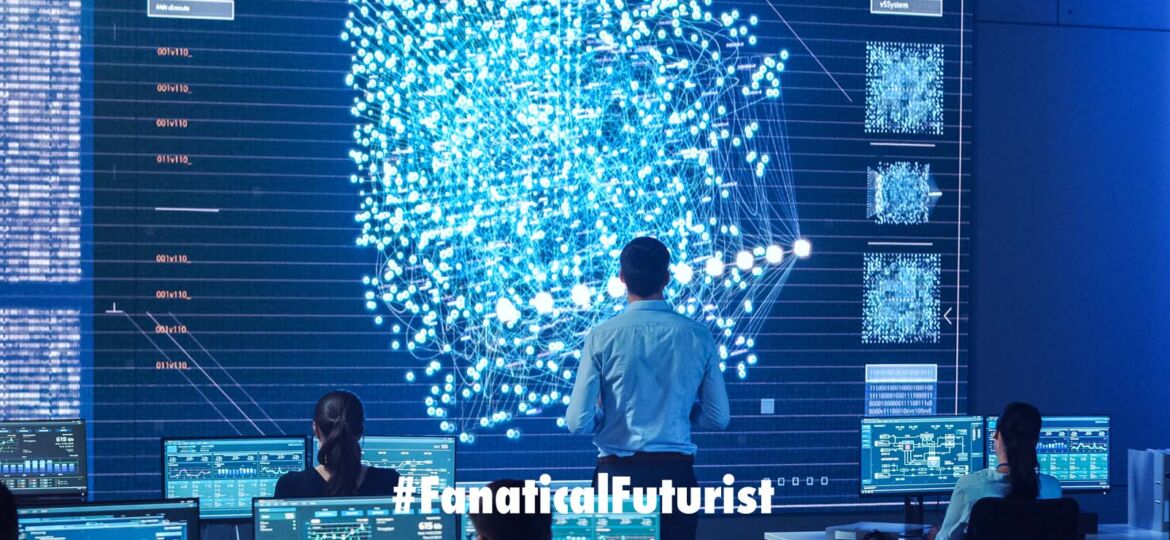

I recently wrote about a researcher who built a virtual computer inside a virtual world, which in itself creates a weird, infinitely looping paradox, and which could be capable of continuous self-improvement. And now, in a similar move, it turns out an Artificial Intelligence (AI) has built a computer inside itself that can run code, such as another AI or … whatever. Including games, obviously. And since this technology or trend doesn’t have a name yet, I’ve decided to call it Meta Artificial Intelligence (MAI), to go along with the Meta Computing trend, which also didn’t have a name. And if you can think of a better name then I’m all ears … perhaps Multi-Dimensional AI? Huh?

In this case the AI mimics the operation of a standard computer within its neural network and it could be used to speed up certain calculations. Researchers have used it to put an AI inside an AI and play Pong.

If you want a computer to do something, you have to write code that manipulates bits of data. But if you build an AI-driven neural network, you have to train it with feedback before it can do anything. For instance, a neural network can distinguish between photos of cats and dogs only after being shown thousands of examples and told whether its guesses are correct.
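To make that contrast concrete, here is a minimal Python sketch. It is a toy and not anything from the research discussed here: it puts a hand-written rule next to a single artificial neuron that learns the same rule (a logical OR) from labelled examples, using the classic perceptron update. All of the names (`explicit_or`, `train_perceptron`, `EXAMPLES`) are my own illustrative choices.

```python
# Toy contrast: an explicit rule versus a neuron trained by feedback.
# Every name in this block is illustrative, not taken from the research.

def explicit_or(a, b):
    """Conventional code: the programmer writes the rule directly."""
    return 1 if (a or b) else 0


def predict(weights, bias, inputs):
    """A single artificial neuron with a hard threshold."""
    total = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1 if total > 0 else 0


def train_perceptron(examples, epochs=10, learning_rate=1.0):
    """Learn weights from labelled examples with the perceptron rule."""
    weights, bias = [0.0, 0.0], 0.0
    for _ in range(epochs):
        for inputs, target in examples:
            # The feedback: how wrong was the guess on this example?
            error = target - predict(weights, bias, inputs)
            weights = [w + learning_rate * error * x
                       for w, x in zip(weights, inputs)]
            bias += learning_rate * error
    return weights, bias


# Labelled examples: (inputs, correct answer).
EXAMPLES = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 1)]
weights, bias = train_perceptron(EXAMPLES)

for inputs, target in EXAMPLES:
    print(inputs, explicit_or(*inputs), predict(weights, bias, inputs))
```

The first function works the moment it is written. The neuron only gets the answers right after several passes over the examples, and telling cats from dogs needs the same kind of feedback loop at a vastly larger scale.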

Now Jason Kim and Dani Bassett at the University of Pennsylvania have taken a new approach, in which a neural network runs code, just like an ordinary computer.

A neural network is a series of connected nodes, or artificial neurons, each of which takes an input and returns a changed output. The pair calculated what effect individual artificial neurons had, and used this to piece together a very simple neural network that could carry out basic tasks, such as addition.
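As a rough illustration of the idea of setting a neuron's behaviour by calculation rather than by training, here is a hypothetical Python sketch. It is not the researchers' construction, just the simplest possible case: a neuron whose weights are chosen by hand so that its output is the sum, or the difference, of its inputs. The function names and the linear (no threshold) activation are assumptions made for the example.

```python
# A hand-wired neuron: weights are chosen, not learned.
# Illustrative only; names and the linear activation are assumptions.

def neuron(inputs, weights, bias=0.0):
    """Return the weighted sum of the inputs plus a bias."""
    return sum(w * x for w, x in zip(weights, inputs)) + bias


def add(a, b):
    """Weights of +1 and +1 make the neuron compute a + b."""
    return neuron([a, b], weights=[1.0, 1.0])


def subtract(a, b):
    """Weights of +1 and -1 make the same neuron compute a - b."""
    return neuron([a, b], weights=[1.0, -1.0])


print(add(2, 3))       # 5.0
print(subtract(7, 4))  # 3.0
```

Nothing here is trained. Once you know what each weight does to the output, you can pick the weights that produce the operation you want.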

Kim and Bassett then linked several networks together in chains so they could do more complex operations, replicating the behaviour of the logic gates found in computer chips. These chains were combined to make a network that could do things a classical computer can, including running a virtual neural network and a playable version of the game Pong.
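To give a feel for how neurons can stand in for logic gates, and how gates chain into something that computes, here is a small Python sketch. It uses the textbook threshold-neuron construction of a NAND gate, which is my substitute for illustration and not the method in the paper. The gates are then chained into an adder for whole numbers. All names and the specific weights are assumptions.

```python
# Logic gates from threshold neurons, chained into a binary adder.
# Textbook construction for illustration, not the authors' method.

def step_neuron(inputs, weights, bias):
    """Fire (1) if the weighted sum plus bias is positive, else 0."""
    total = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1 if total > 0 else 0


def nand(a, b):
    """One neuron acting as a NAND gate: output is 0 only for (1, 1)."""
    return step_neuron([a, b], [-1.0, -1.0], 1.5)


# Every other gate is a chain of NAND neurons.
def and_(a, b):
    shared = nand(a, b)
    return nand(shared, shared)


def or_(a, b):
    return nand(nand(a, a), nand(b, b))


def xor(a, b):
    shared = nand(a, b)
    return nand(nand(a, shared), nand(b, shared))


def full_adder(a, b, carry_in):
    """Add three bits; return (sum bit, carry-out bit)."""
    partial = xor(a, b)
    total = xor(partial, carry_in)
    carry_out = or_(and_(a, b), and_(partial, carry_in))
    return total, carry_out


def add_bits(x, y, width=8):
    """Ripple-carry addition of two non-negative integers, bit by bit."""
    carry, result = 0, 0
    for i in range(width):
        bit, carry = full_adder((x >> i) & 1, (y >> i) & 1, carry)
        result |= bit << i
    return result


print(add_bits(23, 42))  # 65
```

Each call to `nand` is a single neuron, so adding two 8-bit numbers this way already takes well over a hundred neuron evaluations. That gives a sense of why scaling from a handful of gates to a full processor is the open question.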

The virtual neural network can also drastically simplify splitting up huge computational tasks. These are often spread over many processors to gain speed, but it takes extra power to split them into chunks that can be run independently by separate chips and then recombine the results.

An emerging breed of machine called a neuromorphic computer, which is designed to run AI software efficiently and could one day fit the computing power found in today’s biggest supercomputers into a package no larger than your fingernail, may also be able to help these virtual networks work faster.

While a conventional computer uses its CPU to carry out tasks and stores data in separate memory, a neuromorphic computer uses artificial neurons to both store and compute, lowering the number of operations it must carry out. Neuromorphic computers may also make it easier for software to work accurately with continuous variables, such as those in physics simulations.

Francesco Martinuzzi at Leipzig University in Germany says neural networks running code could squeeze better performance out of neuromorphic chips.

“There will definitely be specific applications where these computers are going to be outperforming standard computers. And by far, I believe.”

Abdelrahman Zayed at Montreal Polytechnic in Canada says this could be exciting, so long as the chains can avoid a known failure in long calculations, where an algorithm forgets the beginning as it is learning the end.

These neural networks would also need to be scaled up.

“Computers don’t just have one or two logic gates – a CPU will have billions of transistors,” says Zayed. “Just because it worked for two or three gates, that doesn’t necessarily mean that it will scale up to billions.”

Watch this space.

Reference: arxiv.org/abs/2203.05032