WHY THIS MATTERS IN BRIEF

Let us salute the end of criminal line-ups and lament the loss of our future privacy.

If you only remember one thing about technology, remember this: over time it gets faster and it gets better. And for those of you who think your mind is the only safe place for all your secrets, think again.

In 2012 scientists from the University of California, University of Oxford and University of Geneva demonstrated how they could use a $299 game controller from a company called Emotiv to hack into people's brains and pull PINs and bank information out of their heads. Now scientists from the University of Oregon have gone one step further and demonstrated how they can read people's thoughts, pull images from their brains and put them on a screen for everyone else to see.

“We can take someone’s memory – which is typically something internal and private – and we can pull it out from their brains,” said neuroscientist Brice Kuhl, one of the team members.

Lee and Kuhl started out with 23 volunteers and a set of over 1,000 colour photos of different, random faces. The volunteers were shown the photos while they underwent a Functional Magnetic Resonance Imaging (fMRI) scan, which detects subtle changes in the blood flow of the brain to measure neural activity. Also hooked up to the scanner was an artificial intelligence program that read and recorded the participants' neural responses.

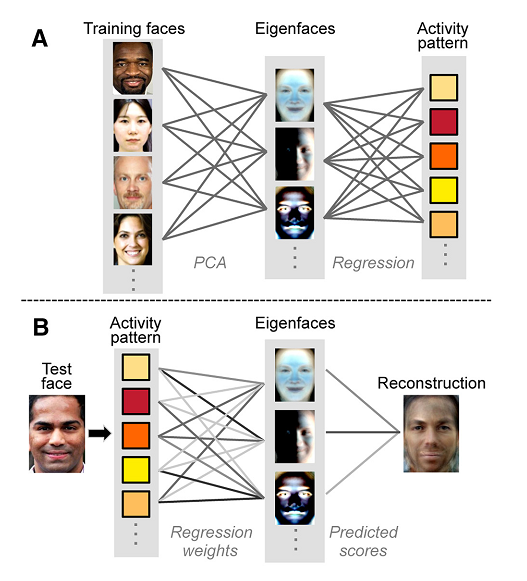

After the participants had seen the faces, the scientists used a technique called Principal Component Analysis (PCA) to decompose the face photos into 300 eigenfaces, a type of vector used to improve the efficiency of computer vision facial recognition. Each eigenface represented a statistic or feature, and an artificial intelligence program attached to the machine could use these to determine what type of neural activity was associated with each eigenface. Basically, this first phase (Phase A) was a training session for the AI: it needed to learn how certain bursts of neural activity correlated with certain physical features of the faces.

Once the AI had learned enough matches between brain activity and face codes, the team started Phase B of the experiment. This time the AI had to figure out what the faces looked like based only on the participants' brain activity, and all of the faces shown to the participants were completely new. The AI managed to reconstruct each face based on activity from two separate regions of the brain: the Angular Gyrus (ANG), which is involved in a number of processes related to language, number processing, spatial awareness and the formation of vivid memories, and the Occipitotemporal Cortex (OTC), which processes visual cues.
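The two phases can be sketched in a few lines of NumPy. This is a toy model under loud assumptions, not the study's method: the brain activity is simulated, the link between activity and eigenface scores is assumed to be linear, ridge regression is swapped in as a simple stand-in for whatever model the team used, and every name and size here (`fit_ridge`, `reconstruct_faces`, 30 voxels, 5 eigenfaces) is hypothetical.

```python
import numpy as np

def fit_ridge(activity, scores, alpha=1.0):
    """Phase A: learn weights that map brain activity to eigenface scores."""
    n_voxels = activity.shape[1]
    gram = activity.T @ activity + alpha * np.eye(n_voxels)
    return np.linalg.solve(gram, activity.T @ scores)

def reconstruct_faces(activity, weights, mean_face, eigenfaces):
    """Phase B: predict eigenface scores from activity, then rebuild the faces."""
    predicted_scores = activity @ weights
    return mean_face + predicted_scores @ eigenfaces

rng = np.random.default_rng(0)
n_train, n_test, n_pixels, n_components, n_voxels = 200, 20, 64, 5, 30

# Assumption: orthonormal random vectors stand in for real eigenfaces (one per row).
eigenfaces = np.linalg.qr(rng.normal(size=(n_pixels, n_components)))[0].T
mean_face = rng.random(n_pixels)
# Assumption: simulated brain activity is a noisy linear function of the scores.
encoding = rng.normal(size=(n_components, n_voxels))

def simulate(n_faces):
    scores = rng.normal(size=(n_faces, n_components))
    faces = mean_face + scores @ eigenfaces
    activity = scores @ encoding + 0.1 * rng.normal(size=(n_faces, n_voxels))
    return faces, scores, activity

# Phase A: training faces with known eigenface scores.
_, train_scores, train_activity = simulate(n_train)
weights = fit_ridge(train_activity, train_scores)

# Phase B: brand-new faces, reconstructed from brain activity alone.
test_faces, _, test_activity = simulate(n_test)
reconstructions = reconstruct_faces(test_activity, weights, mean_face, eigenfaces)

reconstruction_error = np.mean((reconstructions - test_faces) ** 2)
baseline_error = np.mean((mean_face - test_faces) ** 2)  # always guessing the mean face
```

In this simulation the reconstructions come out far closer to the unseen faces than simply guessing the average face. Real fMRI data is much noisier, which is one reason the published reconstructions are only approximate.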

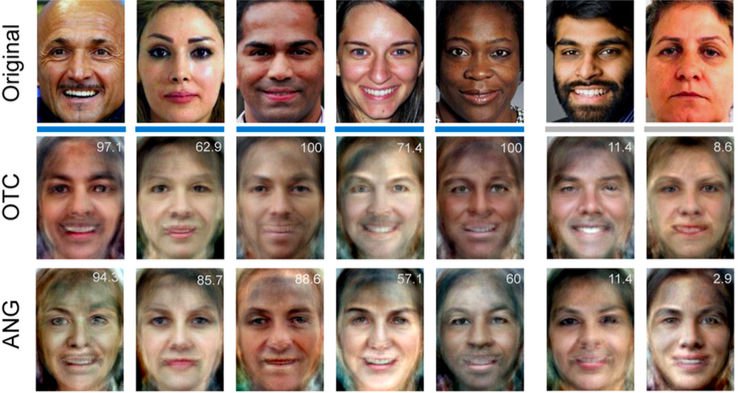

While the reconstructions produced by this technique aren't perfect, as the images show, they do capture some of the major features of the original faces. When the researchers showed the reconstructed images to separate groups of online survey respondents and asked questions like 'Is this male or female?', 'Is this person happy or sad?' and 'Is their skin colour light or dark?', the responses matched the original faces to a degree greater than chance. And, circling back round to my statement at the top of this article, remember that technology only ever gets faster and better, so let's toast the end of criminal line-ups and lament the loss of our last bastion of privacy.

It sounds similar to an FFT (Fast Fourier Transform) on faces. Interesting!