WHY THIS MATTERS IN BRIEF

Logically speaking, getting AIs to build AIs makes sense, but as AIs continue to proliferate and are woven into the digital fabric of our society, it opens up a dangerous Pandora’s box.

As many companies, large and small, will tell you, creating a good artificial intelligence (AI) is difficult, really difficult, and for companies such as Alibaba, Facebook, Google, IBM and Microsoft, where AI could literally make or break their futures, it’s a battle they have to win. Now the team at Google’s AI research lab, Google Brain, think they have the answer: get AIs to design and build new AIs. They’re not alone, not by a long shot. Late last year, for example, Facebook announced its AIs were already building new AIs, and it’s the start of a growing trend.

What could possibly go wrong!?

If you were one of the people who said “nothing” and “that sounds like a great idea” then you might want to go and stand in the corner with everyone else who said that – and it’s a lonely corner.

The team’s idea is that if they can get AIs to build other AIs, it will not only speed up and simplify the process of making new AIs but also make it much, much cheaper.

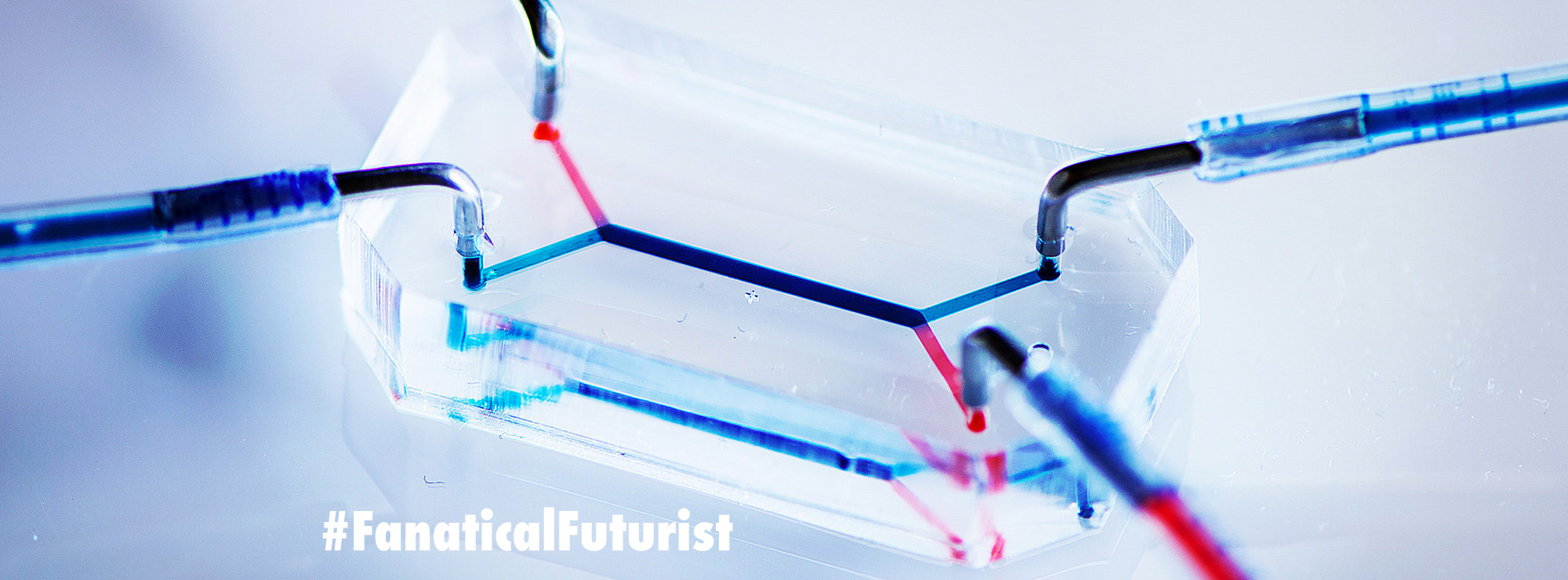

At the moment, if you want to make your own AI you have to hire experts, have the right tools and train it using machine learning techniques. The team’s new hope is that in the future a company that wants to make its own AI can simply rent an AI builder instead of going out to market and trying to hire a team of experts. The upshot of all of this, of course, is that AIs would proliferate.

Today most AIs take anywhere from a few weeks to a few months to build and require huge amounts of computing power to train. An AI builder could streamline and optimise the whole process, and even find new ways for the next generation of AIs to learn.

For now the Google Brain team are focusing on automating the machine learning process, and they aren’t alone in their quest: in recent months several other groups have also reported progress on getting machine learning programs to create other machine learning programs. They include researchers at the nonprofit research institute OpenAI, co-founded by Elon Musk; MIT; the University of California, Berkeley; and Google’s other artificial intelligence research group, DeepMind.

“Currently the way to solve AI problems is to have human expertise, data and computation,” said Jeff Dean, who leads the Google Brain research group. “The question we want to answer is: can we eliminate the need for a lot of machine learning expertise?”

Put another way, is there a way they can automate the machine learning process to free up data scientists’ time to do “other stuff”? The answer is inevitably going to be yes, but it is just as inevitably going to raise other questions. For example, what happens when what many already regard as a “black box technology,” whose own creators aren’t even sure how it really works, starts creating other black boxes?

Around the world there are already organisations, including Google and MIT, that are trying to assuage people’s potential concerns, whether that’s by creating AI “kill switches” for rogue AIs or by developing new technologies that help to crack open AIs so we humans can really understand what’s going on inside the black box.

For now, Google says its AI maker is not yet advanced enough to compete with human engineers. However, given the rapid pace of AI development, it may only be a few years before that is no longer true. Hopefully it doesn’t happen before we are ready.