WHY THIS MATTERS IN BRIEF

Neuromorphic computers, the kind of AI that no one’s talking about, will revolutionise AI as we know it.

Love the Exponential Future? Join our XPotential Community, future proof yourself with courses from XPotential University, read about exponential tech and trends, connect, watch a keynote, or browse my blog.

A while ago I talked about the development of a neuromorphic computer that matched the scale of a mouse brain – believe it or not, a massive technical achievement. Now Australian researchers are putting together a supercomputer designed to emulate the world’s most efficient learning machine – a neuromorphic monster capable of the same estimated 228 trillion synaptic operations per second that human brains handle.

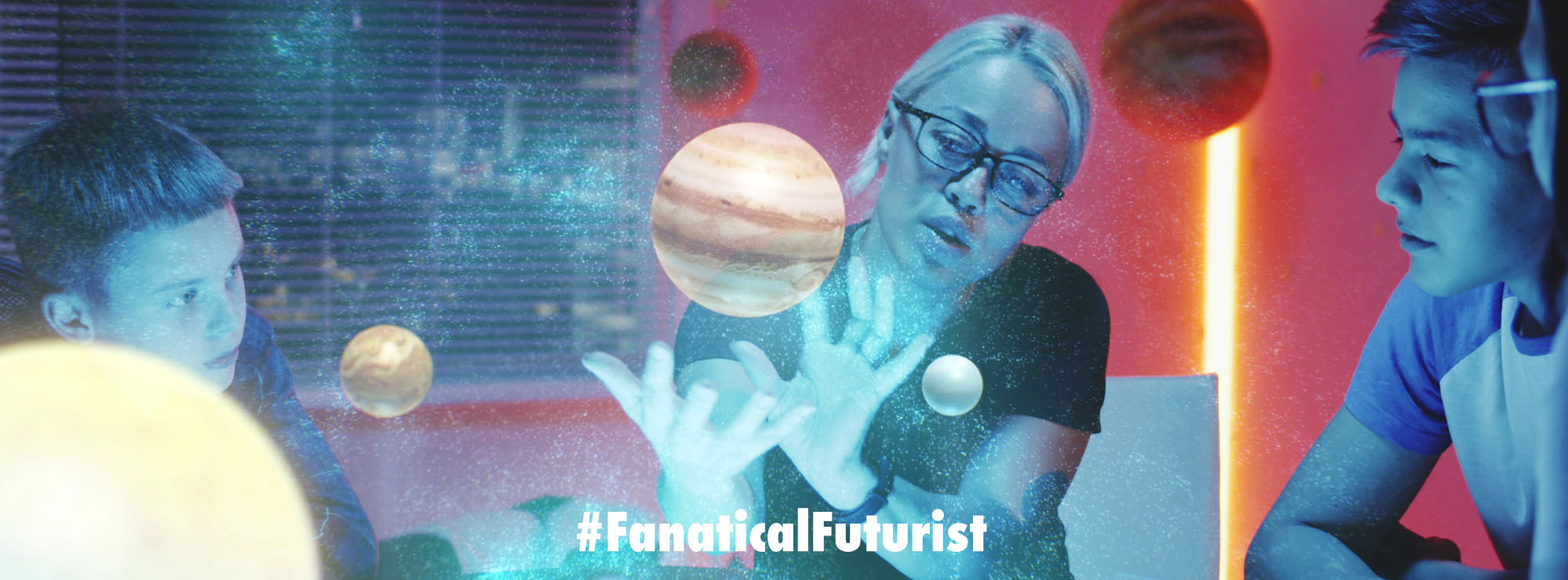

As the age of Artificial Intelligence (AI) dawns upon us, it’s clear that this wild technological leap is one of the most significant in the planet’s history, and will very soon be deeply embedded in every part of our lives. But it all relies on absolutely gargantuan amounts of computing power. Indeed, on current trends, the AI servers sold by NVIDIA alone will likely be consuming more energy annually than many small countries. In a world desperately trying to decarbonise, that kind of energy load is a massive drag.

But as often happens, nature has already solved this problem. Our own necktop computers are still the state of the art, capable of learning super quickly from small amounts of messy, noisy data, or processing the equivalent of a billion billion mathematical operations every second – while consuming a paltry 20 watts of energy.
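To put that efficiency in perspective, here is a rough back-of-the-envelope sketch in Python. It uses only the two figures quoted above (a billion billion operations per second and 20 watts); the variable and function names are my own illustrative choices, and the result is only as good as those estimates.

```python
# Figures quoted in the article; names here are illustrative assumptions.
BRAIN_OPS_PER_SECOND = 1e18   # "a billion billion" operations per second
BRAIN_POWER_WATTS = 20.0      # quoted power draw of the human brain


def ops_per_joule(ops_per_second: float, power_watts: float) -> float:
    """Operations performed per joule of energy (1 watt = 1 joule per second)."""
    return ops_per_second / power_watts


brain_efficiency = ops_per_joule(BRAIN_OPS_PER_SECOND, BRAIN_POWER_WATTS)
print(f"{brain_efficiency:.1e} operations per joule")
```

On those numbers the brain manages around 5 × 10^16 operations for every joule it burns, which is the kind of efficiency neuromorphic designs are chasing.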

And that’s why a team at Western Sydney University is building the DeepSouth neuromorphic supercomputer – the first machine ever built that’ll be capable of simulating spiking neural networks at the scale of the human brain.

“Progress in our understanding of how brains compute using neurons is hampered by our inability to simulate brain-like networks at scale,” says International Centre for Neuromorphic Systems Director, Professor André van Schaik. “Simulating spiking neural networks on standard computers using Graphics Processing Units (GPUs) and multicore Central Processing Units (CPUs) is just too slow and power intensive. Our system will change that. This platform will progress our understanding of the brain and develop brain-scale computing applications in diverse fields including sensing, biomedical, robotics, space, and large-scale AI applications.”

DeepSouth is expected to go online in April 2024. The research team expects it’ll be able to process massive amounts of data at high speed, while being much smaller than other supercomputers and consuming much less energy thanks to its spiking neural network approach.

It’s modular and scalable, using commercially available hardware, so it may be expanded or contracted in the future to suit various tasks. The goal of the enterprise is to move AI processing a step closer to the way a human brain does things, as well as learning more about the brain and hopefully making advances that’ll be relevant in other fields.

It’s fascinating to note that other researchers are working on the same problem from the opposite direction, with a number of teams now starting to use actual human brain tissue as part of cyborg computer chips – with impressive results.

Source: Western Sydney University