WHY THIS MATTERS IN BRIEF

Programming is one of today’s most in-demand skills, but as we increasingly train, rather than code, our latest computer systems, the trend won’t last.

Before the invention of the computer, most experimental psychologists thought the brain was an unknowable black box that they’d never be able to unravel, let alone understand. You could analyze a subject’s behaviour, but thoughts, memories, emotions? That stuff was obscure and inscrutable, beyond the reach of science. So these behaviorists, as they called themselves, confined their work to the study of stimulus and response, feedback and reinforcement. They gave up trying to understand the inner workings of the mind, and this doctrine ruled the field for four decades.

Then, in the mid-1950s, a group of rebellious psychologists, linguists, information theorists, and early artificial intelligence (AI) researchers came up with a different conception of the mind. People, they argued, were not just collections of conditioned responses; they absorbed information, processed it, and then acted upon it. They had systems for writing, storing, and recalling memories. They operated via a logical, formal syntax. The brain wasn’t a black box at all. It was more like a computer.

The so-called cognitive revolution started small, but as computers became standard equipment in psychology labs across the country, it gained broader acceptance. By the late 1970s, cognitive psychology had overthrown behaviorism, and with the new regime came a whole new language for talking about the brain. Psychologists began describing thoughts as programs, ordinary people talked about storing facts away in their memory banks, and business gurus fretted about the limits of mental bandwidth and processing power in the modern workplace.

This story has repeated itself again and again. As the digital revolution wormed its way into every part of our lives, it also seeped into our language and our deep, basic theories about how things work. Technology always does this. During the Enlightenment, Newton and Descartes inspired people to think of the universe as an elaborate clock. In the industrial age, it was a machine with pistons; then it was a computer, and now it’s software. This is, when you think about it, a fundamentally empowering idea, because if the world is software, then the world can be coded.

Code is logical. Code is hackable. Code is destiny. These are the central tenets and self-fulfilling prophecies of life in the digital age. As software has eaten the world, to paraphrase venture capitalist Marc Andreessen, we have surrounded ourselves with machines that convert our actions, thoughts, and emotions into data – the raw material that armies of code-wielding engineers can manipulate.

We have come to see life itself as something ruled by a series of instructions that can be discovered, exploited, optimized, and rewritten. Companies use code to understand our most intimate ties; Facebook’s Mark Zuckerberg has gone so far as to suggest there might be a “fundamental mathematical law underlying human relationships that governs the balance of who and what we all care about.”

In 2013, Craig Venter announced that, a decade after the decoding of the human genome, he had begun to write code that would allow him to create synthetic organisms, including one day a synthetic human.

“It is becoming clear,” he said, “that all living cells that we know of on this planet are DNA-software-driven biological machines.”

Even self-help literature insists that you can hack your own source code, reprogramming your love life, your sleep routine, and your spending habits. Hacking is the new oxygen.

In this world, the ability to write code has become not just a desirable skill but a language that grants insider status to those who speak it. They have access to what in a more mechanical age would have been called the levers of power.

“If you control the code, you control the world,” wrote criminologist Marc Goodman.

Meanwhile, Paul Ford, writing in Bloomberg Businessweek, was slightly more circumspect: “If coders don’t run the world, they run the things that run the world.”

But whether you like this state of affairs or hate it, and whether you’re a member of the coding elite or someone who barely feels competent to mess around with the settings on your phone, don’t get used to it. Our machines are starting to speak a different language now, one that even the best coders can’t fully understand.

Over the past several years, the biggest tech companies in Silicon Valley have aggressively pursued an approach to computing called machine learning. In traditional programming, an engineer writes explicit, step-by-step instructions for the computer to follow. With machine learning, programmers don’t give computers instructions. They train them.

If you want to teach a neural network to recognize a cat, for instance, you don’t code it to look for whiskers, ears, fur, and eyes. You simply show it thousands and thousands of photos of cats, and eventually it works things out. Then, if it keeps misclassifying foxes as cats, you don’t rewrite the code. You coach it some more until it gets it right. Today’s computer systems learn in a way that isn’t too far removed from the way that we humans learn.
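To make the contrast between writing rules and training concrete, here is a minimal Python sketch built on loudly stated assumptions. The four yes/no features (`pointy_ears`, `slender_body`, `bushy_tail`, `long_snout`) and the example animals are invented for illustration; real image classifiers learn from raw pixels rather than hand-picked features. The learner is a single perceptron, a far simpler technique than the deep neural networks discussed here. The hand-written function encodes a rule directly, while the perceptron adjusts numeric weights from labelled examples, so when it mistakes a fox for a cat, the fix is more labelled data rather than new code.

```python
# Toy contrast between a hand-written rule and a trained model.
# Hypothetical features, invented for illustration:
# (pointy_ears, slender_body, bushy_tail, long_snout), each 0 or 1.
CAT = (1, 1, 0, 0)
FLUFFY_CAT = (1, 1, 1, 0)
DOG = (0, 0, 0, 1)
FOX = (1, 1, 1, 1)


def rule_based_is_cat(features):
    """Traditional programming: an engineer writes the decision rule by hand."""
    pointy_ears, slender_body, _bushy_tail, _long_snout = features
    return pointy_ears == 1 and slender_body == 1


def predict(model, features):
    """Return True ('cat') if the weighted sum of the features is positive."""
    weights, bias = model
    return sum(w * x for w, x in zip(weights, features)) + bias > 0


def train_perceptron(examples, model=None, epochs=20, lr=1.0):
    """Machine learning: adjust weights from labelled examples (perceptron rule).

    Passing an existing model continues training it instead of starting over.
    """
    if model is None:
        model = ([0.0] * len(examples[0][0]), 0.0)
    weights, bias = list(model[0]), model[1]
    for _ in range(epochs):
        for features, label in examples:
            error = label - int(predict((weights, bias), features))
            # Nudge each weight toward the correct answer; no rule is rewritten.
            weights = [w + lr * error * x for w, x in zip(weights, features)]
            bias += lr * error
    return weights, bias


# Round 1: train on cats and dogs only; the model has never seen a fox.
examples = [(CAT, 1), (FLUFFY_CAT, 1), (DOG, 0)]
model = train_perceptron(examples)
print(predict(model, FOX))

# Round 2: "coach it some more" by adding a labelled fox, not by editing code.
examples.append((FOX, 0))
model = train_perceptron(examples, model=model)
print(predict(model, FOX))

# The hand-written rule still calls a fox a cat until someone rewrites it.
print(rule_based_is_cat(FOX))
```

Run as written, this prints `True`, then `False`, then `True`: the first model calls the unfamiliar fox a cat, the extra round of training corrects it, and the hand-written rule keeps making the same mistake. Whatever the trained model "knows" exists only as four weights and a bias, which hints at why much larger networks are so hard to read.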

This approach is not new; it has been around for decades. But it has recently become immensely more powerful, thanks in part to the rise of deep neural networks, massively distributed computational systems that mimic the multi-layered connections of neurons in the brain. And already, whether you realize it or not, machine learning powers large swaths of our online activity.

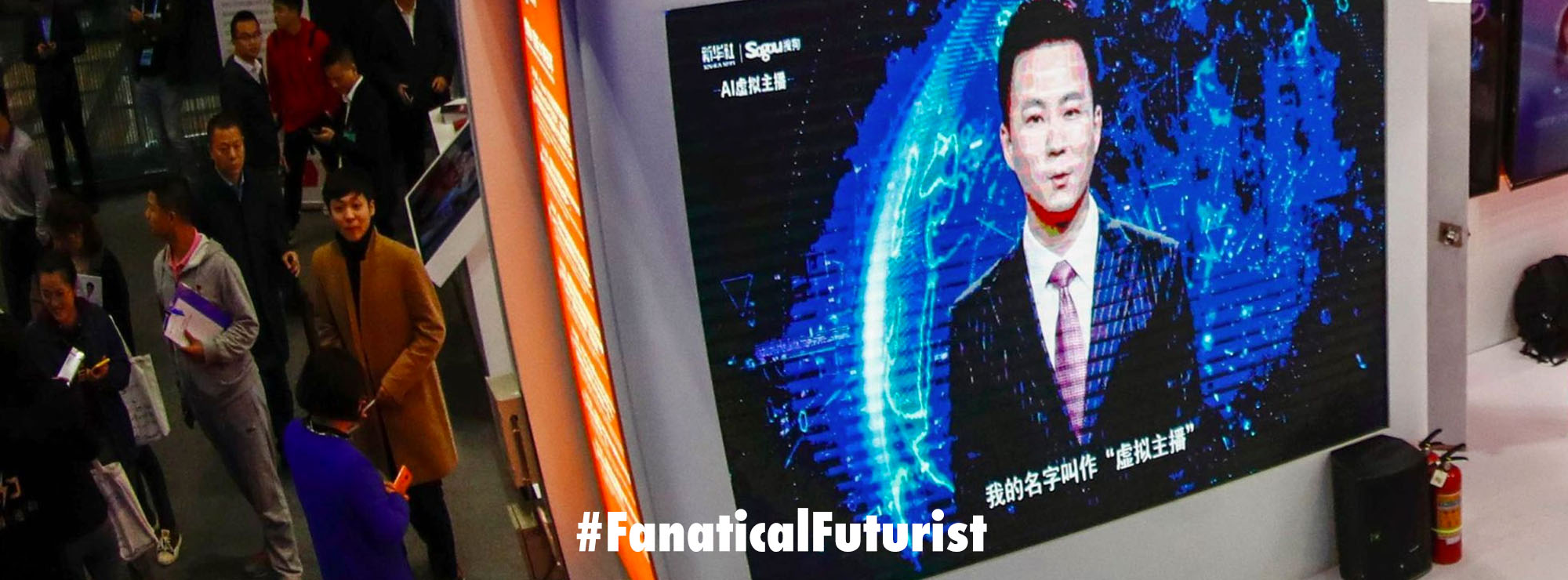

Facebook uses it to organise your newsfeed, power its advertising and WhatsApp bot networks, and manage its global fleet of internet-beaming drones. Google uses it to power Google Translate and Project Magenta, to recognise the contents of trillions of images, and much more. And that is just the tip of what is already a very large iceberg, one that spans everything from fully autonomous warships to the latest self-driving cars and drones.

Even Google’s search engine – for so many years a towering edifice of human-written rules – has begun to rely on these deep neural networks. In February 2016, the company replaced its longtime head of search with machine-learning expert John Giannandrea, and it has initiated a major program to retrain its engineers in these new techniques.

“By building learning systems,” Giannandrea told reporters this autumn, “we don’t have to write these rules anymore.”

But here’s the thing: with machine learning, the engineer never knows precisely how the computer accomplishes its tasks. The neural network’s operations are largely opaque and inscrutable. It is, in almost the truest sense of the word, a black box. It’s as if we’re back in the days before the cognitive revolution of the 1950s. And as these black boxes assume responsibility for more and more of our daily digital lives, they are not only going to change our relationship to technology – they are going to change how we think about ourselves, our world, and our place within it.

If in the old view programmers were like gods, authoring the laws that govern computer systems, now they’re like parents or dog trainers. And as any parent or dog owner can tell you, that is a much more mysterious relationship to find yourself in. Andy Rubin, best known as the co-creator of the Android operating system, is notorious in Silicon Valley for filling his workplaces and home with robots, which he programs himself.

“I got into computer science when I was very young, and I loved it because I could disappear in the world of the computer. It was a clean slate, a blank canvas, and I could create something from scratch,” he says. “It gave me full control of a world that I played in for many, many years.”

Now, he says, that world is coming to an end. Rubin is excited about the rise of machine learning. His new company, Playground Global, which is backed by hundreds of millions of dollars in funding, invests in machine-learning startups and is positioning itself to lead the spread of intelligent devices. But the shift saddens him a little too, because machine learning changes what it means to be an engineer.

“People don’t linearly write the programs,” Rubin says.

“After a neural network learns how to do speech recognition, a programmer can’t go in and look at it and see how that happened. It’s just like your brain. You can’t cut your head off and see what you’re thinking.”

When engineers do peer into a deep neural network, what they see is an ocean of math – a massive, multilayer set of calculus problems that, by constantly deriving the relationships between billions of data points, generate guesses about the world.

AI wasn’t supposed to work this way. Until a few years ago, mainstream AI researchers assumed that to create intelligence, we just had to imbue a machine with the right logic or code. Write enough rules and eventually we’d create a system sophisticated enough to understand the world. They largely ignored, even vilified, early proponents of machine learning, who argued in favour of plying machines with data until they reached their own conclusions.

For years computers weren’t powerful enough to really prove the merits of either approach, so the argument became a philosophical one.

“Most of these debates were based on fixed beliefs about how the world had to be organized and how the brain worked,” says Sebastian Thrun, the former Stanford AI professor who created Google’s self-driving car. “Neural nets had no symbols or rules, just numbers. That alienated a lot of people.”

The implications of an unparsable machine language aren’t just philosophical. For the past two decades, learning to code has been one of the surest routes to reliable employment – a fact not lost on all those parents enrolling their kids in after-school code academies. But a world run by neurally networked deep-learning machines requires a different workforce. Analysts have already started worrying about the impact of AI on the job market as machines render old skills irrelevant, and programmers might soon get a taste of what that feels like themselves.

“I was just having a conversation about that this morning,” says tech guru Tim O’Reilly when we asked him. “I was pointing out how different programming jobs would be by the time all these STEM-educated kids grow up.”

Traditional coding won’t disappear completely; indeed, O’Reilly predicts that we’ll still need coders for a long time yet. But there will likely be less of it, and it will become a meta-skill, a way of creating what Oren Etzioni, CEO of the Allen Institute for Artificial Intelligence, calls the “scaffolding” within which machine learning can operate. Just as Newtonian physics wasn’t obviated by the discovery of quantum mechanics, code will remain a powerful, if incomplete, toolset for exploring the world. But when it comes to powering specific functions, machine learning will do the bulk of the work for us.

Of course, humans still have to train these systems, although even that is beginning to change as the systems slowly start figuring out how to train themselves. But for now, at least, this is a rarefied skill. The job requires both a high-level grasp of mathematics and an intuition for pedagogical give-and-take.

“It’s almost like an art form to get the best out of these systems,” says Demis Hassabis, who leads Google’s DeepMind AI team. “There’s only a few hundred people in the world that can do that really well.” But even that tiny number has been enough to transform the tech industry in just a couple of years.

Whatever the professional implications of this shift, the cultural consequences will be even bigger. If the rise of human-written software led to the cult of the engineer, and to the notion that human experience can ultimately be reduced to a series of comprehensible instructions, machine learning kicks the pendulum in the opposite direction. The code that runs the universe may defy human analysis. Right now Google, for example, is facing an antitrust investigation in Europe that accuses the company of exerting undue influence over its search results. Such a charge will be difficult to prove when even the company’s own engineers can’t say exactly how its search algorithms work in the first place.

This explosion of indeterminacy has been a long time coming. It’s not news that even simple algorithms can create unpredictable emergent behaviour – an insight that goes back to chaos theory and random number generators. Over the past few years, as networks have grown more intertwined and their functions more complex, code has come to seem more like an alien force, the ghosts in the machine ever more elusive and ungovernable. Planes grounded for no reason. Seemingly unpreventable flash crashes in the stock market. Rolling blackouts.
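The chaos-theory point, that even a simple algorithm can behave unpredictably, can be illustrated with the logistic map, a textbook chaotic system chosen here as our own example rather than one the article names. It is a one-line deterministic rule, x → r·x·(1 − x), which at r = 4 amplifies any tiny difference in the starting value. The starting value of 0.2 and the nudge of 1e-10 are arbitrary choices for this sketch.

```python
# Sketch: sensitive dependence on initial conditions in the logistic map.
# r = 4.0 is a standard chaotic setting; the start values are arbitrary.

def logistic_trajectory(x0, r=4.0, steps=100):
    """Iterate x -> r * x * (1 - x) and return every value, starting with x0."""
    xs = [x0]
    for _ in range(steps):
        xs.append(r * xs[-1] * (1.0 - xs[-1]))
    return xs


a = logistic_trajectory(0.2)
b = logistic_trajectory(0.2 + 1e-10)  # differs only in the tenth decimal place

# Compare the two runs every 20 steps.
for step in range(0, 101, 20):
    gap = abs(a[step] - b[step])
    print(f"step {step:3d}: {a[step]:.6f} vs {b[step]:.6f} (gap {gap:.1e})")
```

The gap starts near 1e-10 and, on average, roughly doubles with each step, so within a few dozen iterations the two runs no longer resemble each other, even though every step is fully deterministic and the rule fits on one line.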

These forces have led technologist Danny Hillis to declare the end of the age of Enlightenment, our centuries-long faith in logic, determinism, and control over nature. Hillis says we’re shifting to what he calls the age of Entanglement.

“As our technological and institutional creations have become more complex, our relationship to them has changed,” he says. “Instead of being masters of our creations, we have learned to bargain with them, cajoling and guiding them in the general direction of our goals. We have built our own jungle, and it has a life of its own.” The rise of machine learning is the latest, and perhaps the last, step in this journey.

This can all be pretty frightening. After all, coding was at least the kind of thing that a regular person could imagine picking up at a boot camp. Coders were at least human. Now the technological elite is even smaller, and their command over their creations has waned and become indirect. Already the companies that build this stuff find it behaving in ways that are hard to govern. In 2015, Google rushed to apologize when its photo recognition engine started tagging images of black people as gorillas. The company’s blunt first fix was to keep the system from labelling anything as a gorilla.

To nerds of a certain bent, this all suggests a coming era in which we forfeit authority over our machines.

“One can imagine such technology outsmarting financial markets, out-inventing human researchers, out-manipulating human leaders, and developing weapons we cannot even understand,” said Stephen Hawking, whose sentiments have been echoed by Elon Musk and Bill Gates. “Whereas the short-term impact of AI depends on who controls it, the long-term impact depends on whether it can be controlled at all.” But don’t be too scared: this isn’t the dawn of Skynet. We’re just learning the rules of engagement with a new technology.

Already, engineers are working out ways to visualize what’s going on under the hood of a deep-learning system and are trying to design kill switches. But even if we never fully understand how these new machines think, that doesn’t mean we’ll be powerless before them. In the future, we won’t concern ourselves as much with the underlying sources of their behaviour; we’ll learn to focus on the behaviour itself. The code will become less important than the data we use to train it.

If all this seems a little familiar, that’s because it looks a lot like good old 20th-century behaviorism. In fact, the process of training a machine learning algorithm is often compared to the great behaviorist experiments of the early 1900s. Pavlov triggered his dogs’ salivation not through a deep understanding of hunger but simply by repeating a sequence of events over and over. He provided data, again and again, until the code rewrote itself. And say what you will about the behaviorists, they did know how to control their subjects.

In the long run, Thrun says, machine learning will have a democratizing influence. In the same way that you don’t need to know HTML to build a website these days, you eventually won’t need a PhD to tap into the insane power of deep learning. Programming won’t be the sole domain of trained coders who have learned a series of arcane languages. It’ll be accessible to anyone who has ever taught a dog to roll over.

“For me, it’s the coolest thing ever in programming,” says Thrun, “because now anyone can program.”

For much of computing history, we have taken an inside-out view of how machines work. First we write the code, then the machine expresses it. This worldview implied plasticity, but it also suggested a kind of rules-based determinism, a sense that things are the product of their underlying instructions. Machine learning suggests the opposite, an outside-in view in which code doesn’t just determine behaviour; behaviour also determines code. Machines are products of the world.

Ultimately we will come to appreciate both the power of handwritten linear code and the power of machine-learning algorithms to adjust it, the give-and-take of design and emergence.

Now, 80 years after Alan Turing first sketched his designs for a problem-solving machine, computers are becoming devices for turning experience into technology. For decades we have sought the secret code that could explain and, with some adjustments, optimize our experience of the world. But our machines won’t work that way for much longer, and our world never really did.

We’re about to have a more complicated but ultimately more rewarding relationship with technology, one in which we go from commanding our devices to parenting them.