WHY THIS MATTERS IN BRIEF

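Rendering high quality virtual worlds is resource intensive. DeepFovea cuts the pixel density that has to be rendered in the periphery by up to 99 percent, and the compute needed for rendering by as much as 10 to 14x, which will make AR and VR more accessible.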

Interested in the Exponential Future? Connect, download a free E-Book, watch a keynote, or browse my blog.

One of the biggest issues with Augmented Reality (AR) and Virtual Reality (VR) worlds today is the huge amount of computing power and network bandwidth they need in order to give users a high quality experience, something that cloud based rendering and 5G might help overcome in the future. To tackle both problems, Facebook has unveiled DeepFovea, a new lightweight Artificial Intelligence (AI) system for foveated rendering. When you look at the quality of the VR images it can render using remarkably little compute and network bandwidth, the results, it has to be said, are quite stunning.

DeepFovea is also billed as the first practical application of a Generative Adversarial Network (GAN), the same type of AI that helps generate everything from DeepFakes and Synthetic Content to innovative new products like NASA’s interplanetary landers. Because of the power of the technology, it’s able to generate natural looking high definition video from nothing more than an incredibly sparse input, as you can see from the video. In tests, DeepFovea decreased the amount of compute resources needed for rendering by as much as 10 to 14x while any image differences remained imperceptible to the human eye.
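To put the 10 to 14x figure in perspective, the short sketch below converts a reduction factor into the share of the original rendering work that remains. The function name `fraction_remaining` is made up for illustration, and the calculation assumes the work scales directly with the reduction factor.

```python
def fraction_remaining(reduction_factor: float) -> float:
    """Fraction of the original rendering work left after an N-fold reduction."""
    if reduction_factor <= 0:
        raise ValueError("reduction_factor must be positive")
    return 1.0 / reduction_factor


# The two ends of the range quoted in the article.
for factor in (10, 14):
    remaining = fraction_remaining(factor)
    print(f"{factor}x reduction -> {remaining:.1%} of the original work, "
          f"{1 - remaining:.1%} saved")
# prints:
# 10x reduction -> 10.0% of the original work, 90.0% saved
# 14x reduction -> 7.1% of the original work, 92.9% saved
```

In other words, a 10 to 14x reduction leaves roughly 7 to 10 percent of the original workload.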

A high quality VR experience with just 10% of the data

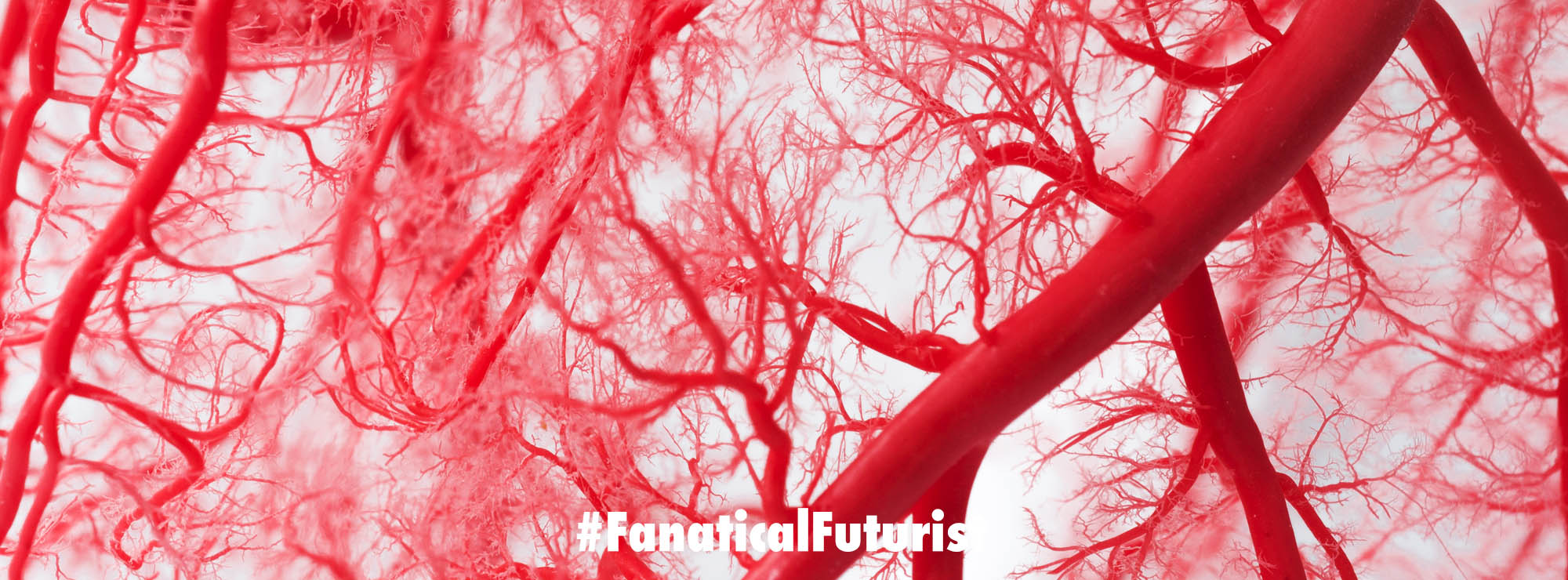

When the human eye looks directly at an object, it sees it in great detail. Peripheral vision, on the other hand, is much lower quality, but because the brain infers the missing information, humans don’t notice. DeepFovea uses recent advances in GANs that can similarly “in-hallucinate” missing peripheral details by generating content that is perceptually consistent.

The system is trained by feeding it a large number of video sequences with dramatically decreased pixel density as input. This sparse input simulates the peripheral image degradation, and the target helps the network learn how to fill in the missing details based on statistics from all the videos it has seen. The result is a natural looking video generated from a stream of sparse pixels whose density has been decreased by as much as 99 percent along the periphery of a 60×40 degree field of view. The system also keeps flicker, aliasing, and other video artefacts in the periphery below the threshold that can be detected by the human eye.
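As a rough illustration of what "sparse pixels" along the periphery can mean, the sketch below builds a random sampling mask whose density falls with distance from the gaze point. It is not DeepFovea's actual sampling pattern. The linear falloff, the 5 degree foveal radius, the 30 degree cutoff, and the function names `keep_probability` and `sparse_mask` are all assumptions made for illustration. Only the 60×40 degree field of view and the 99 percent peripheral reduction come from the article.

```python
import math
import random


def keep_probability(ecc_deg, fovea_deg=5.0, max_ecc_deg=30.0, min_density=0.01):
    """Probability of keeping a pixel at a given eccentricity (degrees from gaze).

    Assumed falloff: full density inside the fovea, then a linear drop to
    min_density (a 99 percent reduction) at max_ecc_deg and beyond.
    """
    if ecc_deg <= fovea_deg:
        return 1.0
    t = min(1.0, (ecc_deg - fovea_deg) / (max_ecc_deg - fovea_deg))
    return 1.0 + t * (min_density - 1.0)


def sparse_mask(width, height, gaze_xy, fov_deg=(60.0, 40.0), seed=0):
    """Return a height x width grid of booleans; True means the pixel is kept."""
    rng = random.Random(seed)
    deg_per_px_x = fov_deg[0] / width
    deg_per_px_y = fov_deg[1] / height
    gx, gy = gaze_xy
    mask = []
    for y in range(height):
        row = []
        for x in range(width):
            # Angular distance of this pixel from the gaze point.
            ecc = math.hypot((x - gx) * deg_per_px_x, (y - gy) * deg_per_px_y)
            row.append(rng.random() < keep_probability(ecc))
        mask.append(row)
    return mask


# Small 120x80 frame with the gaze at the centre of the image.
mask = sparse_mask(120, 80, gaze_xy=(60, 40))
kept = sum(sum(row) for row in mask)
print(f"kept {kept / (120 * 80):.1%} of pixels")
```

A training pipeline along these lines would apply such a mask to each frame of a full-quality video to create the degraded input, and keep the original frame as the target the network learns to reconstruct.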

High quality AR and VR experiences require high image resolution, high frame rate, and multiple views, all of which can be extremely resource intensive. To advance these systems and bring them to a wider range of audiences and devices, such as those with mobile chipsets and small, portable batteries, we’ll need to dramatically increase rendering efficiency, and that’s what DeepFovea helps achieve. DeepFovea also shows how deep learning can accomplish this task via foveated reconstruction, and because it’s hardware-agnostic it’s a promising tool for potential use in next-gen head-mounted display technologies.