WHY THIS MATTERS IN BRIEF

Researchers have rethought cameras from the point of view of today’s AIs, and what they’ve created turns an ordinary pane of glass into a camera.

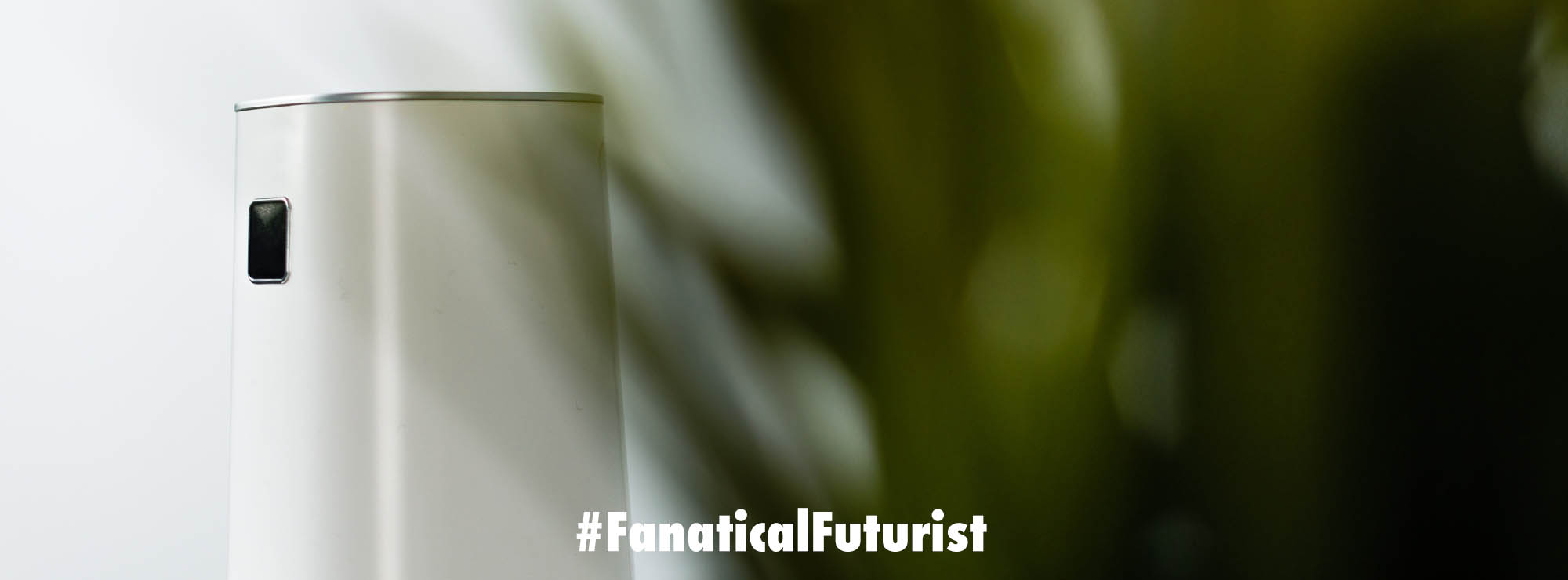

Cameras used to be their own devices with lenses and film and trips to the drug store to get the pictures developed. Then, they disappeared into phones, tablets, laptops, and video game consoles. Now, it appears that cameras could someday become as inconspicuous as a normal pane of glass, like the ones in your car or your home.

According to new research, a team in the US has used a simple photodetector pressed up against the edge of a window to detect the reflections that bounce around inside the glass, much as light signals travel through a fiber optic cable, and then created images from them using some clever Artificial Intelligence (AI) and computer processing. In short, the pane of glass acts like a giant camera lens.

Take a peek at the tech

While the grainy images the team produced won’t be competing with conventional cameras any time soon, they could be the perfect “camera” for many of today’s machine vision applications. For example, a window pane or a piece of car windshield could provide all the resolution that an image processing algorithm or neural network needs to do its job, such as tracking occupants’ behaviours or gestures.

Most of the images captured by cameras today are never seen by the human eye, says Rajesh Menon, Associate Professor of electrical and computer engineering at the University of Utah, who led the research. They’re seen only by algorithms processing security camera feeds, videos from a factory floor, or autonomous vehicle image sensors. And the number of images never seen by humans is increasing.

So, Menon asks, “If machines are going to be seeing these images and video more than humans, then why don’t we think about redesigning the cameras purely for machines? Take the human out of the loop entirely, and think of cameras purely from a non-human perspective.”

In other words, computer vision algorithms don’t always need the high resolutions and image fidelity that a discerning human eye demands. Plenty of information might still be extracted, at lower cost and with a smaller gadget footprint, from the lower quality images taken by Menon and his co-author Ganghun Kim’s “see-through lens-less camera.”

Menon and Kim’s technology, for which they’ve already applied for a patent on behalf of the university, begins with a pane of glass or plastic. Nothing special is required for the visual medium itself, Menon says. They used a sheet of plexiglass because it was easy to work with and cut.

To make their new camera, they attached an off-the-shelf 640 by 480 pixel, 8-bit photodetector to an edge of the plastic sheet that they’d smoothed and prepared to interface with their imaging device. Then they put reflective tape around the rest of the edges of the pane of plexiglass. They can do the imaging without the tape, Menon says, but this trick boosts the signal-to-noise ratio.

They kept their field of view simple for this proof-of-concept. The object they set in front of the pane was an array of 32 by 32 LED lights. Then, they looked at the signal arriving at the photodetector when each of the 1,024 lights was individually illuminated. So any arbitrary image from the LED array would, at least in a first approximation, just be a linear combination of the signals from each of the individual LED lights that had been illuminated.
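To make the linear combination idea concrete, here is a minimal Python sketch. It is an illustration only, not the team’s code: the calibration matrix is filled with random numbers as a stand-in for real per-LED measurements, and the names `calibration`, `simulate_measurement` and the reduced sensor size `N_PIXELS` are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)

N_LEDS = 32 * 32   # 1,024 LEDs, as in the article
N_PIXELS = 2048    # hypothetical, reduced sensor size to keep the demo small

# Stand-in calibration data (assumption): column j holds the sensor signal
# recorded when only LED j is lit. Real data would come from the photodetector.
calibration = rng.random((N_PIXELS, N_LEDS))


def simulate_measurement(led_pattern, calibration):
    """Return the sensor signal for a pattern as a weighted sum of per-LED signals."""
    weights = np.asarray(led_pattern, dtype=float).reshape(-1)
    return calibration @ weights


# Light two LEDs at once: the signal is the sum of their individual signals.
pattern = np.zeros((32, 32))
pattern[3, 5] = 1.0
pattern[10, 20] = 1.0
signal = simulate_measurement(pattern, calibration)
```

In this first approximation, every possible image on the LED array maps to the sensor through the same matrix, which is why the per-LED measurements are all that is needed to predict the signal for any pattern.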

Menon says for this project, they developed traditional signal processing algorithms that could reconstruct the image from the signal received at the photodetector. They called this step the “inverse problem,” because their algorithm was taking a complex and jumbled signal and driving it backward to discover the object(s) that could have generated the photons their detector detected.
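One common way to attack an inverse problem of this kind is regularized least squares. The Python sketch below assumes that approach for illustration; the article does not say which algorithm the team used, and the random `calibration` matrix, the noise level, the reduced sensor size and the `reconstruct` helper are all hypothetical.

```python
import numpy as np


def reconstruct(signal, calibration, reg=1e-3):
    """Estimate LED intensities from a sensor signal via Tikhonov-regularized
    least squares: solve (A^T A + reg * I) x = A^T y for x."""
    n_leds = calibration.shape[1]
    gram = calibration.T @ calibration + reg * np.eye(n_leds)
    return np.linalg.solve(gram, calibration.T @ signal)


rng = np.random.default_rng(1)

# Stand-in calibration matrix (assumption): one column per LED,
# one row per sensor pixel of a hypothetical 2,048-pixel detector.
calibration = rng.random((2048, 1024))

# A known test pattern with two lit LEDs, plus a little simulated sensor noise.
truth = np.zeros(1024)
truth[[100, 500]] = 1.0
noisy_signal = calibration @ truth + 0.01 * rng.standard_normal(2048)

# Work backward from the jumbled signal to a 32 by 32 image estimate.
estimate = reconstruct(noisy_signal, calibration).reshape(32, 32)
```

The regularization term `reg` keeps the solution stable when the measurements are noisy or the calibration matrix is poorly conditioned; a real system would need to tune it against measured data.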

“We’re detecting a distribution [of photons] in space that corresponds to a particular object,” he says. “As humans, we like to see one-to-one maps. That’s exactly what a lens does. Here we have a one-to-many map. Which is why we have to solve the inverse problem.”

That’s also why these windowpane cameras could be particularly well suited for programs that rely on computer vision. The image quality and resolvable information may be good enough for computer vision, but they are not yet, and perhaps never will be, ready to replace the traditional lens-based camera for images that humans view.

Menon also says his team is now developing a machine learning algorithm to study more complex images, such as handwriting, which could be detected and transcribed into text.

Menon says one of the first applications for this technology could be in augmented reality and virtual reality glasses and headsets, where the addition of cameras, whether for eye tracking or to take photos and video, often makes them bulkier, harder to manufacture, and more expensive.

It’s ironic, of course, that a breakthrough might come in the form of a technology that suffers a drastic reduction in quality from the state of the art today. But, says Menon, perhaps the most substantial leap forward is the shift in thinking toward redesigning technology that’s “good enough” for AI and image processing systems. After all, as with a fly’s eye, what matters in the AI world isn’t so much the high quality of a single data source but rather the proliferation of data sources.

Which is why, at least from the perspective of machine vision systems, cameras and simple panes of glass might increasingly start to look alike in the future.