WHY THIS MATTERS IN BRIEF

We’re always told AI cannot be imaginative or creative, but human creativity can be cloned.

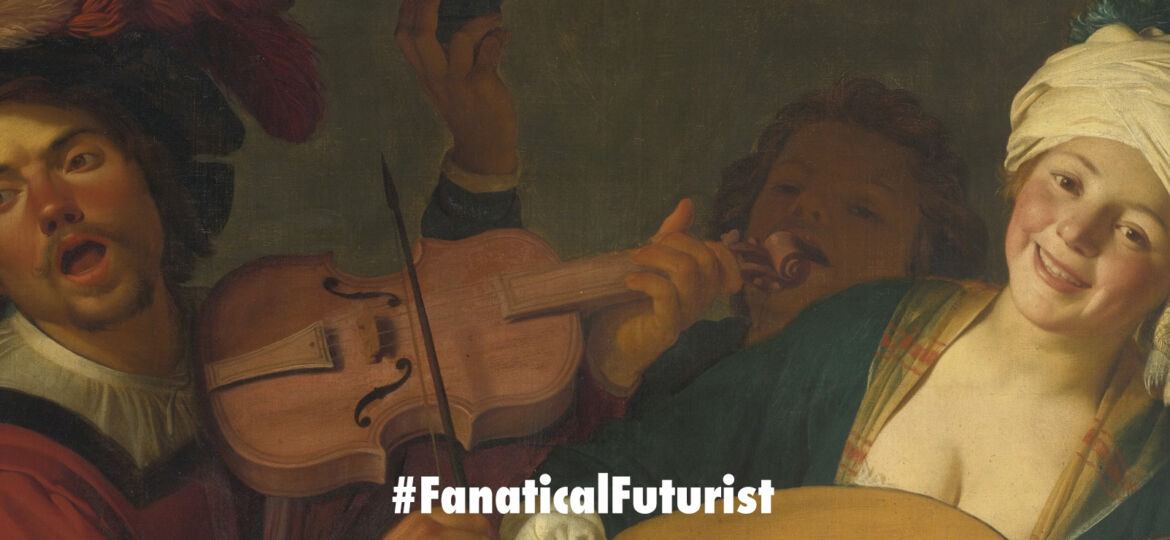

Art made by Artificial Intelligence (AI) software has recently become a field in its own right, and a niche market in certain circles. As a result, the field is increasingly attracting people who train algorithms to create everything from fake celebrity photos to fireworks. But this often silly, irreverent work isn’t just fun; it’s helping researchers understand AI better. In the latest chapter of what will likely be a never-ending story, an AI in Munich has mastered painting like the Old Masters, and its work, as you can see below, is exceptional.

Mario Klingemann, Google’s Munich-based artist-in-residence, is the man behind the AI behind the art, and his Twitter feed is a delightful mess of experimental neural network art. He shows, in near real time, his explorations in training neural nets on various types of artistic data. The results are often humorous, showing just how bad algorithms are at conjuring something as complex as a human face. But that’s the point: by watching how these neural nets learn from something as innocent as an old painting, he’s getting a glimpse into the mind of a state-of-the-art AI.

Can you spot the difference?

Right now, Klingemann is focusing his artistic efforts on oil portraits from the 19th century. He has built a photorealistic face generator based on NVIDIA’s pix2pixHD algorithm and trained it on a few thousand paintings, predominantly by European artists working before 1900. The resulting faces, created by a machine trying to see the world as an Old Master might, veer between believable and laughable.

“If you look at art history, it’s clear that faces have fascinated artists since the beginning of culture. I guess one of the reasons is that faces are easy and very hard at the same time – you can draw a recognizable face with just a few lines or you can try to reproduce one down to the last pore,” said Klingemann. “The difficult part is that every human is an expert in human faces, we notice the slightest changes in expression or if some proportions are somehow not right. Which means that if you paint or generate a face, slight changes might tell a different story or slight errors will become immediately visible.”

Can you tell the difference between these two images – one of which is an oil painting by a human, and one of which came from a neural network?

The speed at which this model can adapt to new training data is astonishing. Some results after retraining for less than 5 minutes: pic.twitter.com/hAYhLRHnCx

— Mario Klingemann (@quasimondo) April 4, 2018

At first glance it’s hard to tell. But on closer inspection, you can see some strangeness in the oddly black left eye and the dark, shadowy goatee of the right-hand image. For Klingemann, getting a neural net to produce something this good is a technical challenge, and in this case it’s still not quite good enough.

“It is very easy for me to see how good the model works, especially in the details, since any errors will stand out as uncanny or simply wrong,” he says. He also acknowledges that, because the model is trained on centuries-old images of largely middle-aged European men and younger European women, most of the faces are white, so he’s looking for more source images to diversify his training data.

For Klingemann, training neural networks is also an artistic challenge, a creative experiment that relies on both human and machine.

“Having a face generator is like having a story generator,” he says. “Every face or grouping of faces will trigger some associations, question, or even emotions. And of course the aspect that a machine does this gives it an interesting twist.”

In fact, through his experiments, he’s found that generating portraits that look like real 19th-century paintings is far easier than creating photorealistic portraits of people.

“When we look at a painting we are much more forgiving about things that do not look right, since we cannot really be sure that this was not the intent of the artist,” he says. “After all, how many old portraits have such a bizarre sense of human anatomy that they look like they could have been generated by a computer?”

Take Ecce Homo, a 1930 painting of Jesus whose botched restoration blossomed into a viral meme in 2012. Klingemann created his own algorithmic version of the painting, and it’s just as creepy, and as hilarious, as the botched original.

As machines become increasingly capable of creating “good art,” a term I admit is subjective, it’s inevitable that there will be a growing market for it, whether among buyers who want to own some of the world’s first machine-produced pieces or among a more mass-market audience. However, as someone recently pointed out to me in Dubai, the price of art has historically increased after the artist has died, which raises the question: in the high-end art space, once a neural network has created a set number of pieces, do you keep it and evolve it, or erase it? And could we one day see human “artists” create custom, one-off neural networks just for specific clients? One thing’s for sure: the debates are likely to be as colourful as the art itself.