WHY THIS MATTERS IN BRIEF

One day we will create superintelligent machines that far surpass our own intellect, and some experts believe this new form of calculus might help get us there sooner rather than later.

You can be excused for not noticing that Daniel Buehrer, a retired professor from National Chung Cheng University in Taiwan, recently published a white paper proposing a new class of mathematics that many feel could one day lead to the birth of machine “consciousness,” and perhaps even Artificial Super Intelligence (ASI) itself, which is slated to arrive circa 2045. After all, keeping up with all the breakthroughs in the field of Artificial Intelligence (AI), from new Artificial General Intelligence (AGI) architectures to AIs, such as those from DeepMind, that are self-evolving and fighting each other, can be exhausting.

Robot consciousness, or the idea of sentient machines, has long been a touchy subject in AI circles, if for no other reason than that we still aren’t able to describe what consciousness really is, let alone how it came to be. In order to have a discussion around the idea of a computer that can ‘feel’ and ‘think,’ and that has its own aspirations and motivations, you first have to find two people who actually agree on the semantics of sentience. And if you manage that, you’ll then have to wade through a myriad of hypothetical objections to any theoretical living AI you can come up with.

Irrespective of new resolutions, such as the European Union’s resolution last year that proposes giving rights to robots, we’re just not ready to accept the idea of a mechanical species of ‘beings’ that exists completely independently of humans, and for good reason: it’s still the stuff of science fiction, just like laser weapons, teleporters and tractor beams used to be.

Which brings me back to Buehrer’s white paper. It proposes a whole new class of calculus, and if his theories are correct, his maths could lead to the creation of what some experts are already referring to as an “all encompassing, all learning” algorithm, or “Master Algorithm.”

The paper, titled “A Mathematical Framework for Superintelligent Machines,” proposes a new class of calculus that is “expressive enough to describe and improve its own learning process.” In it, Buehrer alludes to a new mathematical method for organising the various tribes of AI learning under a “single ruling construct,” just like the one first suggested by Pedro Domingos in his book “The Master Algorithm.”

When Professor Buehrer was asked when we should expect this “Master Algorithm” to emerge, here’s what he said:

“If the class calculus theory is correct, that human and machine intelligence involve the same algorithm, then it is only less than a year for the theory to be testable in the OpenAI gym. The algorithm involves a hierarchy of classes, parts of physical objects, and subroutines. The loops of these graphs are eliminated by replacing each by a single ‘equivalence class’ node. Independent subproblems are automatically identified to simplify the matrix operations that implement fuzzy logic inference. Properties are inherited to subclasses, locations and directions are inherited relative to the center points of physical objects, and planning graphs are used to combine subroutines.”
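To make one step of that description concrete, the idea of replacing each loop in a graph with a single ‘equivalence class’ node can be sketched with a standard technique: condensing strongly connected components using Tarjan’s algorithm. This is only an illustration under assumptions, not the method from Buehrer’s paper. The function name `condense_cycles` and the toy `hierarchy` are hypothetical and chosen purely for the example.

```python
def condense_cycles(graph):
    """Collapse every cycle of a directed graph into one 'equivalence class' node.

    graph: dict mapping a node to a list of its successor nodes.
    Returns (class_of, dag): class_of maps each node to the frozenset of nodes
    in its class, and dag maps each class to the set of classes it points to.
    Uses recursion, so it is only suitable for small illustrative graphs.
    """
    index, low = {}, {}          # discovery order and lowest reachable index
    stack, on_stack = [], set()  # nodes of the components still being built
    class_of = {}
    counter = 0

    def visit(v):
        nonlocal counter
        index[v] = low[v] = counter
        counter += 1
        stack.append(v)
        on_stack.add(v)
        for w in graph.get(v, ()):
            if w not in index:
                visit(w)
                low[v] = min(low[v], low[w])
            elif w in on_stack:
                low[v] = min(low[v], index[w])
        if low[v] == index[v]:
            # v is the root of a component: pop all of its members.
            members = []
            while True:
                w = stack.pop()
                on_stack.discard(w)
                members.append(w)
                if w == v:
                    break
            cls = frozenset(members)
            for m in members:
                class_of[m] = cls

    # Collect every node, including those that only appear as successors.
    nodes = set(graph)
    for successors in graph.values():
        nodes.update(successors)
    for v in nodes:
        if v not in index:
            visit(v)

    # Build the loop-free graph between equivalence classes.
    dag = {cls: set() for cls in set(class_of.values())}
    for v in nodes:
        for w in graph.get(v, ()):
            if class_of[v] != class_of[w]:
                dag[class_of[v]].add(class_of[w])
    return class_of, dag


# Hypothetical toy hierarchy: "dog" and "canine" point at each other,
# so they form a loop and end up in the same equivalence class.
hierarchy = {
    "animal": ["mammal"],
    "mammal": ["dog"],
    "dog": ["canine"],
    "canine": ["dog"],
}
class_of, dag = condense_cycles(hierarchy)
```

After condensation, `class_of["dog"]` and `class_of["canine"]` are the same class, and the remaining graph between classes has no loops, which is the property the quote appears to rely on before any further processing.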

It’s a revolutionary idea, even in a field like AI where breakthroughs are as regular as breakfasts at the moment. A self-teaching class of calculus that could learn from, as well as control, any number of connected AI agents would basically be a “CEO” for all AI machines, and it would theoretically grow exponentially more intelligent every time any of the learning systems it controls was updated, which would be often.

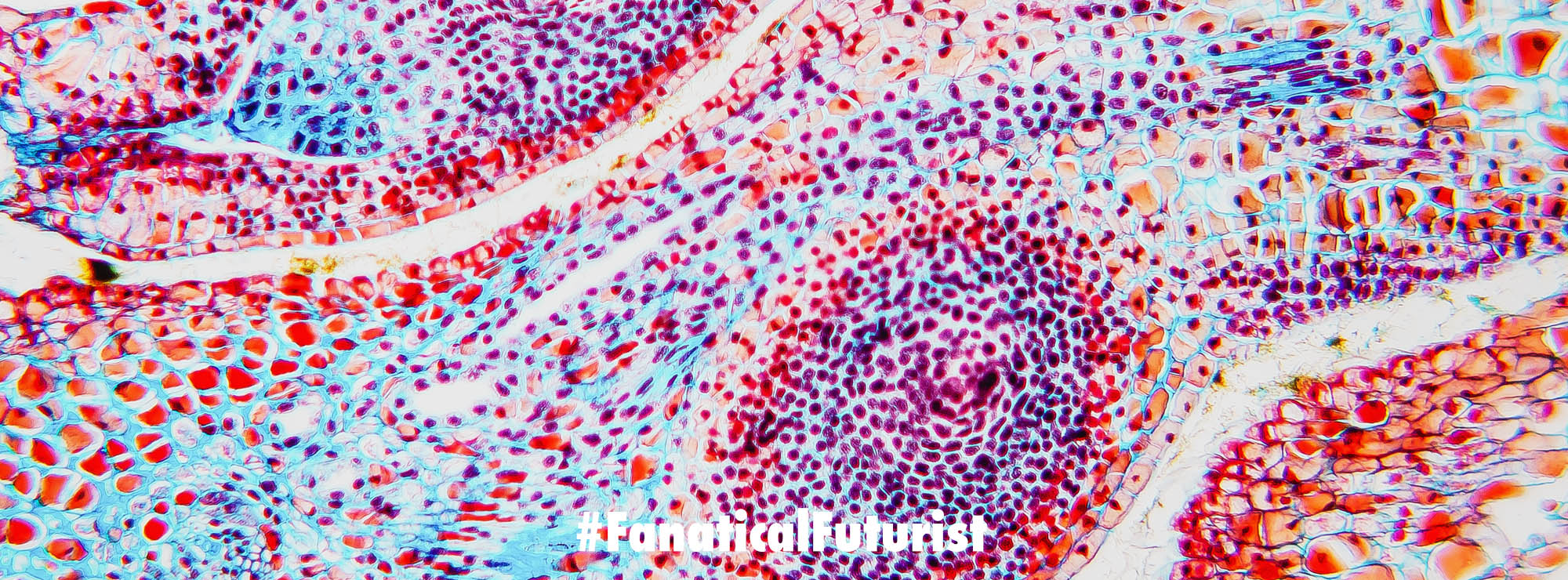

Perhaps the most interesting thing though is the idea that this control and update system will provide a sort of feedback loop, and it’s this feedback loop that, according to Buehrer, is how machine consciousness will, or could, emerge.

“Allowing machines to modify their own model of the world and themselves may create ‘conscious’ machines, where the measure of consciousness may be taken to be the number of uses of feedback loops between a class calculus’s model of the world and the results of what its robots actually caused to happen in the world,” he said.

Buehrer also states it may be necessary to develop these kinds of systems on read-only hardware, thus negating the potential for machines to write new code and become sentient.

“However, turning off a conscious sim without its consent should be considered murder, and appropriate punishment should be administered in every country,” he goes on to warn, something I’m sure will end up in its own special never-ending loop of debate…

Buehrer’s research further indicates AI may one day enter into a conflict with itself for supremacy, stating intelligent systems “will probably have to, like the humans before them, go through a long period of war and conflict before evolving a universal social conscience.” And, unfortunately, this is a premise that has already been tested and, for now at least, held up by Google, who recently put two of their AIs into opposition with each other, the result of which was that the more powerful AI became aggressive…

It remains to be seen if this new math can spawn a mechanical species of beings with their own beliefs and motivations, but it’s becoming increasingly difficult to simply dismiss outright those machine learning theories that blur the line between science and fiction. In one strange way that feels like progress. In another, it’s likely to start people freaking out about the possibility that one day there may very well be a “master” algorithm controlling everything, including, perhaps, the world and everything on it, us included. Or as Elon Musk put it recently, an “immortal dictator.” While some people will ultimately see all this as leading us to a bright future, and others to a dark one, it’s highly likely the future’s going to simply be grey, somewhere in the middle.