WHY THIS MATTERS IN BRIEF

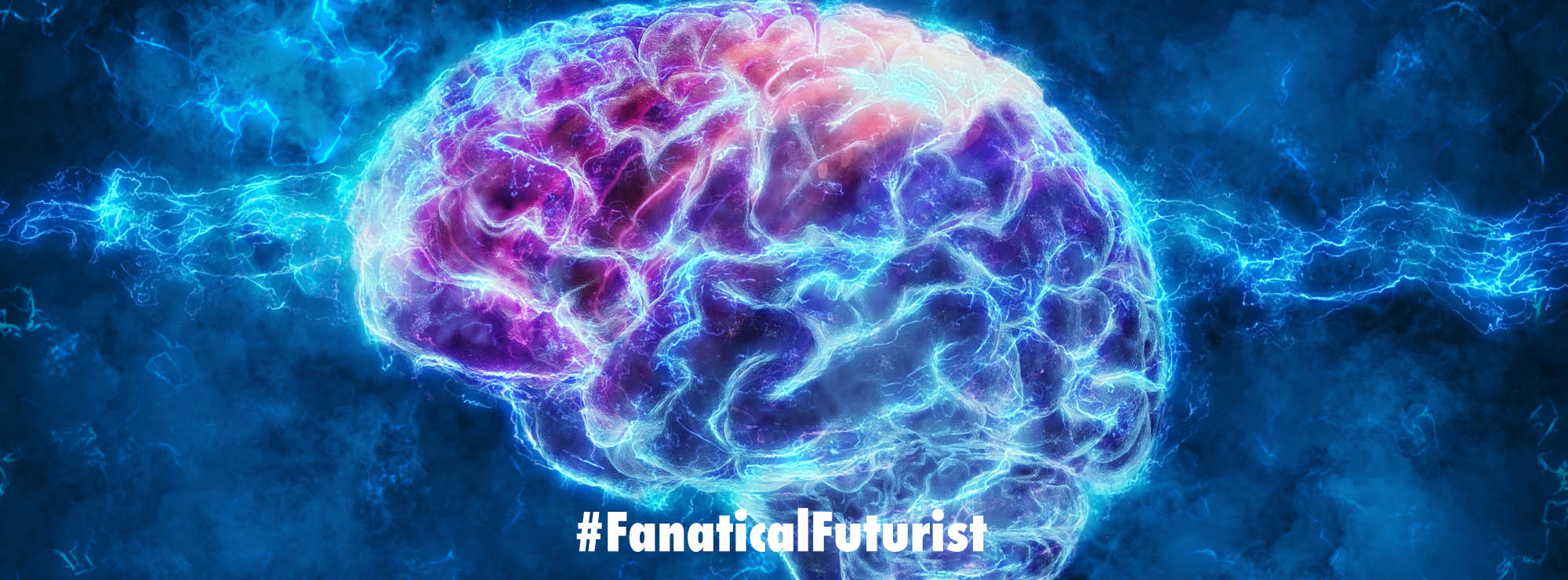

Being able to model the human brain will help us create better treatments for neurological conditions, and might also help answer questions about consciousness and sentience.

Researchers in Japan have used the powerful Riken K computer, one of the world’s fastest supercomputers, to simulate the complex neural structure of our brain, in the hope that the simulations will help scientists understand its inner workings better than they do today.

Using a popular suite of neuron simulation software called NEST, the K computer pulled together the power of 82,944 processors to simulate a network of 1.73 billion nerve cells connected by 10.4 trillion synapses, equivalent to about 1% of the human brain’s neuronal network.

Advancing our understanding

Our brains are complicated things, and at the heart of our current understanding of how they work is the idea that billions of specialised nerve cells, called neurons, connect together and pass signals to one another, giving rise to thought, sensing and everything else the brain does.

At one level a neuron can be thought of as a fairly simple biological switch: it adds up the signals coming in and, if the combined signal is strong enough, fires a signal of its own to the other neurons it’s connected to. This sort of processing can also be implemented on electronic hardware, and that’s where the K computer comes in.
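To make the “biological switch” idea concrete, here is a minimal Python sketch of a threshold neuron. It is only an illustration of the principle described above, not the neuron model NEST or the K computer team used, and the function name, weights and threshold are all hypothetical values chosen for the example.

```python
def neuron_fires(inputs, weights, threshold):
    """Return True if the weighted sum of incoming signals reaches the threshold."""
    total = sum(signal * weight for signal, weight in zip(inputs, weights))
    return total >= threshold


# Three incoming connections (hypothetical values): two excitatory, one inhibitory.
weights = [0.6, 0.5, -0.4]

weak_input = [1, 0, 0]       # only the first connection is active
strong_input = [1, 1, 0]     # both excitatory connections are active
inhibited_input = [1, 1, 1]  # the inhibitory connection is active as well

for name, signals in [("weak", weak_input),
                      ("strong", strong_input),
                      ("inhibited", inhibited_input)]:
    print(name, neuron_fires(signals, weights, threshold=1.0))
```

With these made-up numbers only the “strong” input pushes the sum past the threshold, so only that case makes the neuron fire. Real neurons, and the models used in large simulations, also track how the signal builds up and decays over time.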

The Japanese project is the result of work by researchers from the Riken HPCI Programme for Computational Life Sciences, the Okinawa Institute of Science and Technology Graduate University in Japan, and Germany’s Jülich Institute of Neuroscience and Medicine.

In terms of speed, our brain’s neurons are actually quite slow at flicking their biological switches. They work on a timescale of milliseconds because they need time to recover after firing, but because of the sheer number of them there’s still a lot of computing going on every second.

Computers, on the other hand, can switch much faster because, unlike their biological counterparts, they don’t have to rest after “firing”. Even so, the first simulation run used all of the K computer’s processors and about one petabyte of memory, roughly the amount found in 250,000 home computers, and still took 40 minutes to reproduce just one second of “real” neural network activity in the brain.
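The figures quoted so far are easier to appreciate with a little arithmetic. The short Python sketch below uses only the numbers given in this article; the one assumption is that a petabyte is counted in decimal units (10^15 bytes), and the variable names are just for illustration.

```python
# Figures quoted in the article.
NEURONS = 1.73e9                 # simulated nerve cells
SYNAPSES = 10.4e12               # simulated connections
WALL_CLOCK_SECONDS = 40 * 60     # 40 minutes of computing time
SIMULATED_SECONDS = 1            # one second of brain activity
MEMORY_BYTES = 10**15            # one petabyte (assumed decimal)
HOME_COMPUTERS = 250_000

# How many times slower than real time the simulation ran.
slowdown = WALL_CLOCK_SECONDS / SIMULATED_SECONDS

# Average number of connections per simulated neuron.
synapses_per_neuron = SYNAPSES / NEURONS

# Memory implied per "home computer" in the comparison, in gigabytes.
gb_per_home_computer = MEMORY_BYTES // HOME_COMPUTERS / 10**9

print(f"Slowdown versus real time: {slowdown:.0f}x")
print(f"Synapses per neuron: about {synapses_per_neuron:.0f}")
print(f"Memory per home computer: {gb_per_home_computer:.0f} GB")
```

In other words, the simulation ran 2,400 times slower than the tissue it was modelling, each simulated neuron had roughly 6,000 connections on average, and the “home computer” in the comparison has 4 GB of memory.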

Nevertheless, the brain researchers have been impressed by the numbers and the computing power, because simulations on this scale are the bedrock for unravelling some of the greatest mysteries about how our brain’s clusters of neurons work together.

Meanwhile, the K research team freely admits that this first stage is more about demonstrating what can be done with today’s technology, and that its simulations don’t yet address or answer any significant questions about how our brains work. It’s a bit like building a super-connected motorway network, populated with simulated cars, but not yet looking at how that road network reacts to the holiday road rush.

There’s no doubt that such giant-scale simulations will soon yield answers to mysteries about how our brains operate, how we learn, how we perceive and perhaps even how we feel, but any simulation is only as good as the assumptions built into its software.

Even with the open source NEST software, if you look in detail there is a huge range of parameters in the simulation that need to be set, tweaked and changed, and these parameters can often significantly alter what you get out of the simulation. And to fully model and understand our brain, and in particular to be able to explain some of the things we already know about the brain’s function, we’ll need to bring together the skills and knowledge of different research disciplines, such as neuroscience and computer science, in the same way that the Japanese-led K simulation pulls together the power of myriad computer processors.
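To see how much a single parameter can matter, consider the toy Python example below. It simulates one “leaky integrate-and-fire” neuron, a textbook simplification in which the cell’s electrical potential builds up under a steady input, leaks away over time, and resets whenever it crosses a firing threshold. This is not NEST code and does not use NEST’s parameters; the function name and all of the values (threshold, leak, drive, steps) are hypothetical and chosen purely for illustration.

```python
def count_spikes(threshold, leak=0.1, drive=0.12, steps=1000):
    """Simulate one leaky integrate-and-fire neuron and count how often it fires."""
    potential = 0.0
    spikes = 0
    for _ in range(steps):
        # Each step the steady input pushes the potential up,
        # while the leak pulls it back towards zero.
        potential += drive - leak * potential
        if potential >= threshold:
            spikes += 1
            potential = 0.0  # reset after firing
    return spikes


# Same neuron, same input; only the firing threshold changes.
for threshold in (0.8, 1.0, 1.1, 1.3):
    print(f"threshold {threshold}: {count_spikes(threshold)} spikes")
```

With these made-up values the potential can never climb above 1.2 (the drive divided by the leak), so nudging the threshold from 1.1 to 1.3 takes the neuron from firing regularly to never firing at all. A full-scale simulation has many such settings for every one of its billions of neurons.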

With Japan ready to develop its next-generation supercomputer by 2020, one 100 times faster than the K computer, and other countries also entering the race, it’s easy to see how these simulations are only going to get bigger and better. And who knows what mysteries they’ll unlock…