WHY THIS MATTERS IN BRIEF

One day the world’s best spy could very well be an AI, and that’s bad news for Starbucks, whose biggest coffee shop is on the NSA campus…

The rise of Artificial Intelligence (AI) and an increasingly connected society have already, according to the UK’s MI5, made it “much harder for spies to hide in the shadows”. Now, if Robert Cardillo has his way, so-called robo-automation tools will perform 75 percent of the tasks currently done by the new front line of American intelligence spies – the analysts who collect, analyse, and interpret images beamed from drones, satellites, and other feeds around the globe.

Cardillo, the director of the National Geospatial-Intelligence Agency (NGA), announced his push toward “automation” and “AI” at a conference this week in San Antonio. The annual conference, hosted by the United States Geospatial Intelligence Foundation, brings together technologists, soldiers, and intelligence professionals to discuss national security threats, changes in technology, and data collection and processing.

AI is on the rise. Last year former President Barack Obama’s White House created a Defcon Scale for Cyberattacks and, in the final months of the administration, released a white paper on AI’s potential future impacts. Police forces around the world are increasingly using preliminary “pre-crime” technologies to predict when, where, and by whom crimes will likely be committed. All of that is in addition to companies like Amazon and Netflix, which are using machine learning to calculate what movie you will want to watch or which book you may buy.

Yet this sort of automation is also seen as a threat to workers, who fear being put out of jobs, particularly in the private sector.

The fear that AI will take over jobs, or fail catastrophically along the way, is palpable in the intelligence community as well, and Cardillo admitted that the workforce is “skeptical,” if not “cynical” or “downright mad,” about the prospect of automation intruding on their day-to-day lives, potentially replacing them.

The coming revolution in AI has been hyped for years, often falling short of expectations. But if it does happen, analysts worry they’ll become obsolete.

Cardillo, who called it a “transforming opportunity for the profession,” said he’s working on showing the workforce that AI is “not all smoke and mirrors.” The message he’s sending to workers at the agency is that the goal of automation “isn’t to get rid of you — it’s there to elevate you.… it’s about giving you a higher level role to do the harder things.”

In Cardillo’s eyes, the profession of geospatial intelligence – monitoring and exploiting commercial and proprietary video and imagery feeds, such as those available from global satellite surveillance company Planet – is on the precipice of a data explosion similar to when the internet took off. At that point, the National Security Agency (NSA), which is responsible for collecting and analysing digital communications, had to figure out ways to vacuum up and glean specific conclusions from an explosion of communications traveling back and forth on the web.

Just as the NSA employs algorithms to trawl through millions of messages, Cardillo wants machine learning to help with large volumes of imagery. Instead of analysts staring at millions of images of coastlines and beachfronts, computers could digitally pore over images, calculating baselines for elevation and other features of the landscape. NGA’s goal is to establish a “pattern of life for the surfaces of the Earth to be able to detect when that pattern changes, rather than looking for specific people or objects.”
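To make the idea of a baseline concrete, here is a minimal Python sketch of that kind of change detection. It is an illustration only, not NGA’s method: the grid cells, the sample numbers, the function names `build_baseline` and `changed_cells`, and the `threshold` and `noise_floor` parameters are all assumptions invented for this example.

```python
from statistics import mean, pstdev


def build_baseline(history):
    """Summarise past observations as a (mean, standard deviation) pair per cell.

    `history` is a list of observations; each observation is a list holding one
    number per grid cell (hypothetically, a count of vehicles seen in that cell).
    """
    per_cell = zip(*history)  # regroup the values cell by cell
    return [(mean(values), pstdev(values)) for values in per_cell]


def changed_cells(baseline, observation, threshold=3.0, noise_floor=1.0):
    """Return the indexes of cells whose new value departs from the baseline.

    A cell is flagged when it sits more than `threshold` standard deviations
    from its historical mean. `noise_floor` is an assumed lower bound on the
    standard deviation so that very stable cells do not trigger on tiny changes.
    """
    flagged = []
    for index, ((mu, sigma), value) in enumerate(zip(baseline, observation)):
        score = abs(value - mu) / max(sigma, noise_floor)
        if score > threshold:
            flagged.append(index)
    return flagged


# Hypothetical history: four past passes over a site split into three cells.
history = [
    [2, 0, 5],
    [3, 1, 5],
    [2, 0, 6],
    [3, 1, 4],
]
baseline = build_baseline(history)

# A new pass in which the second cell suddenly fills up.
today = [3, 40, 5]
print(changed_cells(baseline, today))  # prints [1]
```

The sketch never asks what the objects are. It only reports where the numbers stop looking like the past, and deciding what that change means would still fall to an analyst.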

NGA is responsible for tracking potential threats, such as military testing sites in North Korea. When something at a site changes, like large groups of people or cars arriving, it may indicate preparations for a missile test.

“We don’t have a higher priority,” said Cardillo. “We put everything we can into North Korea.”

But the number of sensors, images, and video feeds is exploding and will continue to grow in the coming years, he predicted.

“A significant chunk of the time, I will send [my employees] to a dark room to look at TV monitors to do national security essential work,” he said, “but boy is it inefficient.”

The agency is also turning to academia and the private sector for help. Cardillo hired Anthony Vinci, the founder and former CEO of Findyr, a company that crowdsources data from countries around the world, to head up the agency’s machine learning efforts.

Companies exhibiting at the conference were clearly all on the AI bandwagon, boasting flashy datasets and advanced algorithms. But not everyone was convinced relying on computers for the bulk of data crunching and analysis was such a great idea for intelligence work.

Justin Cleveland, a former intelligence official who works for the security company Authentic8, which created a secure browser called Silo that also allows intelligence professionals to disguise their cyber tracks, was skeptical of the automation boom.

“It can be helpful,” he said in an interview at the conference, “but you could have one bad algorithm and you’re at war. Taking humans out of the bulk of the process is bound to lead to errors, and at the end of the day, you have to trust the person who wrote the algorithm over the analyst.”

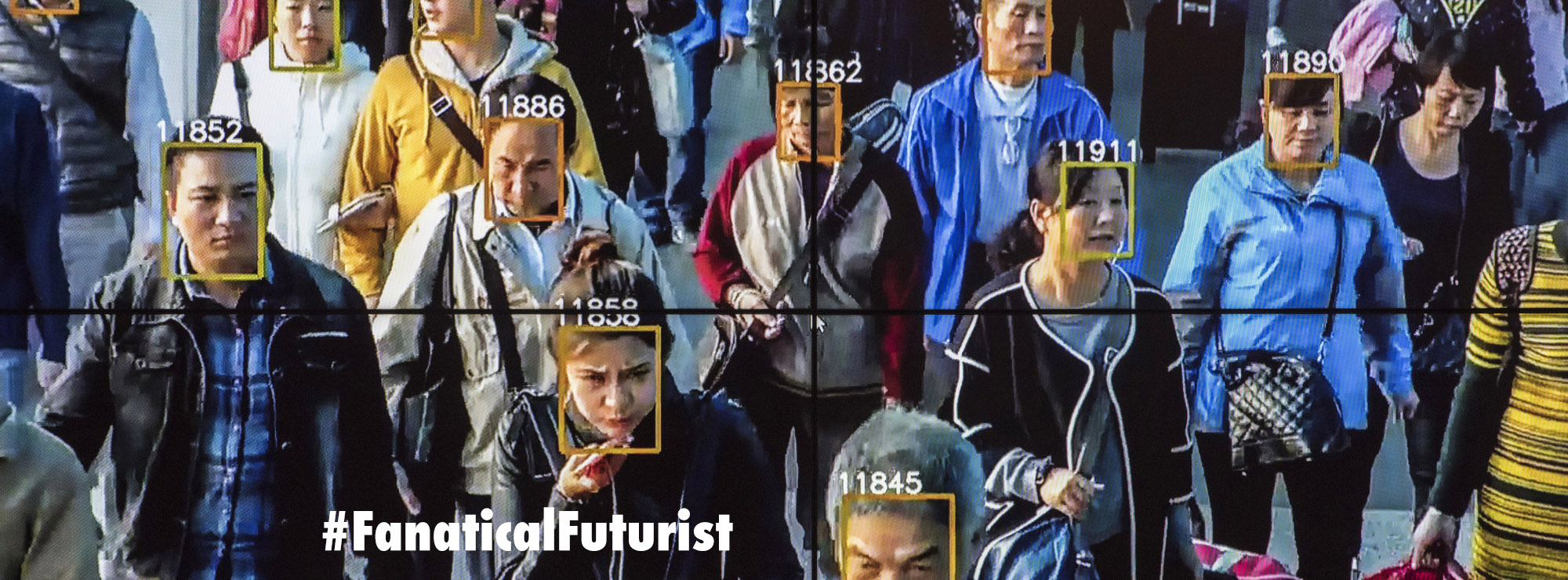

Jimmy Comfort, a deputy director at the National Reconnaissance Office, was enthusiastic about applications for AI in areas like facial recognition.

“There are so many parallels with what the commercial guys are doing,” he said in an interview.

But for his agency, which works mainly with satellites, the needs are different. Satellites take fewer images, from much farther away.

“There’s challenges for us doing that stuff from space,” Comfort said.

With AI becoming ever more tightly bound up with the intelligence gathering and analysis community, though, how long will it be before we see the first sentient AI, an AI with a conscience, turn Snowden on nation states and leak all their secrets? Maybe that’s a problem they’ll be looking into addressing at next year’s conference.

Meanwhile, as for the question of who spies on the AI spies, and the pitfalls of embedding a powerful black box technology like AI into the very heart, and the very fabric, of the world’s intelligence agencies, well, I’m not discussing that one until I can find a very, very long barge pole.

That’s going to make for a lot of very boring Mission Impossible movies now.

Of course some would like to see a world where AI makes all decisions for them. I would call that brain dead.

What happens when the AI’s power source is unplugged?

Their future might be like this until the power grid goes dark along with them. 🙂

It is an unwise trend. A blended approach with HUMINT over it provides for better checks and balances. I’m for technology working for human beings, not the other way around.

Ya right, good luck with that…

What I meant is that AI alone will not bring the results HUMINT can, but it can be an interesting tool that helps analyze and sort the data used by HUMINT faster and better.

We’ve been down this road before, and the trend away from more and better integrated HUMINT has not served us well. Adding AI to a well fused effort would, though, potentially bear fruit.

If this does come to pass, perhaps we can get back down to the point where those with clearances both need AND deserve to have them. The contractor feeding trough ritual of slapping classification on random information so they can shuffle more bodies into the business didn’t make us more secure; Reality Winner is the natural outcome of that. People rage about lucky scarf girl, but the real hazard is the guy my age with two kids in college and a wife just diagnosed with breast cancer who’s just learned he’s being laid off.

Ya right

Sure, they should investigate lots of areas in the US Marshals, State Dept, DHS, FBI, CIA, Border Patrol, etc. The payoff and caliphate run deep.

That’s very scary

um ,,, gee ,, thanks Mr Director of the I “,,, they have been doing that AI thing for decades ,,,, quantum computers are that old they are selling the shitty secondhand ones that are already out of date,,,,, hectic in the reality ,,, everything is recorded in full ,, and there is a matching back up system ,,,, hang on ,, oops ,, allegedly ,,, yeah ,, allegedly they have been doing that A.I. thing for years ,,,,, darn it ,,, I posted it without re-typing it ,,,, allegedly allegedly allegedly [if you say it 3 times it screws with the AI robots head shhh don’t tell the robot dude]

Hmmm… interesting but I am not sure if e.g. Garry Kasparov would agree, cf. his latest book, “Deep thinking”, on how human and machine reasoning are quite different and should complement, rather than replace, each other (I agree).

AI = linear algebra, very well known since decades, but computing power is enhanced. It is almost better to understand and develop the rules. So the most systems are deterministic. Quite difficult with AI.

AI’s will replace 75% of desk jockeys … the field people not a chance

Idiots like this are the reason why the Intelligence community is taking a beating. Relationships and good old fashioned interaction. Nothing beats it.