WHY THIS MATTERS IN BRIEF

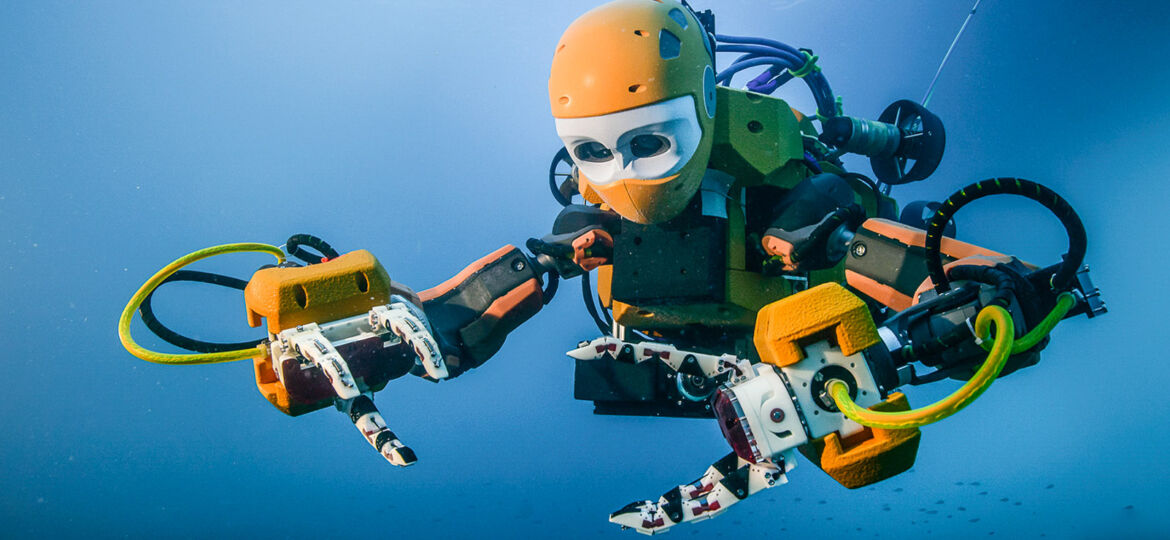

Built to go where human divers can’t, Stanford’s OceanOne takes the plunge.

Oussama Khatib held his breath as he swam through the wreck of La Lune, 100 meters below the Mediterranean. The flagship of King Louis XIV sank here in 1664, 20 miles off the southern coast of France, and no human had touched the wreck – or the countless treasures and artefacts the ship once carried – in the centuries since.

With guidance from a team of skilled deep-sea archaeologists who had studied the site, Khatib, a professor of computer science at Stanford, spotted a grapefruit-size vase. He hovered precisely over the vase, reached out, felt its contours and weight, and stuck a finger inside to get a good grip. He swam over to a recovery basket, gently laid down the vase and shut the lid. Then he stood up and high-fived the dozen archaeologists and engineers who had been crowded around him.

This entire time Khatib had been sitting comfortably in a boat, using a set of joysticks to control OceanOne, a humanoid diving robot outfitted with human vision, haptic force feedback and an artificial brain – in essence, a virtual diver.

When the vase returned to the boat, Khatib was the first person to touch it in hundreds of years. It was in remarkably good condition, though it showed every day of its time underwater: The surface was covered in ocean detritus, and it smelled like raw oysters. The team members were overjoyed, and when they popped bottles of champagne, they made sure to give their heroic robot a celebratory bath.

The expedition to La Lune was OceanOne’s maiden voyage. Based on its astonishing success, Khatib hopes that the robot will one day take on highly skilled underwater tasks too dangerous for human divers, as well as open up a whole new realm of ocean exploration.

“OceanOne will be your avatar,” Khatib said.

“The intent here is to have a human diving virtually, to put the human out of harm’s way. Having a machine that has human characteristics that can project the human diver’s embodiment at depth is going to be amazing.”

The concept for OceanOne was born from the need to study coral reefs deep in the Red Sea, far below the comfortable range of human divers. No existing robotic submarine can dive with the skill and care of a human diver, so OceanOne was conceived and built from the ground up, a successful marriage of robotics, artificial intelligence and haptic feedback systems.

OceanOne looks something like a robo-mermaid. Roughly five feet long from end to end, its torso features a head with stereoscopic machine vision that shows the pilot exactly what the robot sees, and two fully articulated arms. The “tail” section houses batteries, computers and eight multi-directional thrusters.

Stanford diving robot images (Courtesy ©Osada/Seguin/DRASSM):

The body looks nothing like conventional boxy robotic submersibles, but it’s the hands that really set OceanOne apart. Each fully articulated wrist is fitted with force sensors that relay haptic feedback to the pilot’s controls, so the human can feel whether the robot is grasping something firm and heavy, or light and delicate. (Eventually, each finger will be covered with tactile sensors.) The ‘bot’s brain also reads the data and makes sure that its hands keep a firm grip on objects, but that they don’t damage things by squeezing too tightly. In addition to exploring shipwrecks, this makes it adept at delicate coral reef research and at precisely placing underwater sensors.

“You can feel exactly what the robot is doing,” Khatib said.

“It’s almost like you are there; with the sense of touch you create a new dimension of perception.”
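The article does not describe how OceanOne's grip regulation is implemented. As a rough illustration of the idea only (hold firmly, but never squeeze too hard, while scaling the measured force back to the pilot), here is a minimal Python sketch. The names `GripLimits`, `regulate_grip` and `haptic_feedback`, the force limits, and the scaling factor are all hypothetical and are not taken from the robot's software.

```python
from dataclasses import dataclass


@dataclass
class GripLimits:
    """Hypothetical force bounds for one object, in newtons."""
    min_force_n: float  # below this the object might slip
    max_force_n: float  # above this the object might be damaged


def regulate_grip(commanded_force_n: float, limits: GripLimits) -> float:
    """Clamp the pilot's commanded grip force into the safe band."""
    return max(limits.min_force_n, min(commanded_force_n, limits.max_force_n))


def haptic_feedback(measured_force_n: float, scale: float = 0.1) -> float:
    """Scale a force measured at the wrist down to a force for the pilot's controls.

    The linear scale factor is an assumption made for illustration.
    """
    return measured_force_n * scale


if __name__ == "__main__":
    vase = GripLimits(min_force_n=2.0, max_force_n=10.0)
    # A pilot command that is too strong is capped at the upper limit.
    print(regulate_grip(50.0, vase))
    # A command that is too weak is raised to the lower limit.
    print(regulate_grip(0.5, vase))
```

A real controller would close the loop on the sensed force rather than clamp a command, but the clamp shows the two constraints the article mentions: firm enough to hold, gentle enough not to damage.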

The pilot can take control at any moment, but most of the time won’t need to lift a finger. Sensors throughout the robot gauge current and turbulence, automatically activating the thrusters to keep the robot in place. And even as the body moves, quick-firing motors adjust the arms to keep its hands steady as it works. Navigation relies on perception of the environment, from both sensors and cameras, and these data run through smart algorithms that help OceanOne avoid collisions. If it senses that its thrusters won’t slow it down quickly enough, it can brace for impact with its arms, an advantage of a humanoid body build.
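The brace-for-impact decision described above can be pictured with simple kinematics. The sketch below is not OceanOne's algorithm, which the article does not detail. It assumes a constant maximum deceleration from the thrusters, so the stopping distance is v² / (2a), and braces when that distance exceeds the gap to the obstacle. The function names and example numbers are hypothetical.

```python
def stopping_distance(speed_m_s: float, max_decel_m_s2: float) -> float:
    """Distance needed to stop from a given speed under constant deceleration."""
    if max_decel_m_s2 <= 0:
        raise ValueError("max_decel_m_s2 must be positive")
    return speed_m_s ** 2 / (2.0 * max_decel_m_s2)


def should_brace(speed_m_s: float, max_decel_m_s2: float,
                 obstacle_distance_m: float) -> bool:
    """Return True when the thrusters alone cannot stop the robot in time."""
    return stopping_distance(speed_m_s, max_decel_m_s2) > obstacle_distance_m


if __name__ == "__main__":
    # Approaching at 1.0 m/s with 0.5 m/s^2 of braking needs 1.0 m to stop.
    print(should_brace(1.0, 0.5, obstacle_distance_m=0.8))  # obstacle too close
    print(should_brace(1.0, 0.5, obstacle_distance_m=2.0))  # enough room to stop
```

Water drag and current would change the numbers in practice. The point is only that the choice between braking and bracing reduces to comparing a predicted stopping distance with the sensed distance to the obstacle.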

The humanoid form also means that when OceanOne dives alongside actual humans, its pilot can communicate through hand gestures during complex tasks or scientific experiments. Ultimately, though, Khatib designed OceanOne with an eye toward getting human divers out of harm’s way. Every aspect of the robot’s design is meant to allow it to take on tasks that are either dangerous – deep-water mining, oil-rig maintenance or underwater disaster situations like the Fukushima Daiichi power plant – or simply beyond the physical limits of human divers.

“We connect the human to the robot in a very intuitive and meaningful way. The human can provide intuition and expertise and cognitive abilities to the robot,” Khatib said.

“The two bring together an amazing synergy. The human and robot can do things in areas too dangerous for a human, while the human is still there.”

Khatib was forced to showcase this attribute while recovering the vase. As OceanOne swam through the wreck, it wedged itself between two cannons. Firing the thrusters in reverse wouldn’t extricate it, so Khatib took control of the arms, motioned for the bot to perform a sort of pushup, and OceanOne was free.

The expedition to La Lune was made possible in large part thanks to the efforts of Michel L’Hour, the director of underwater archaeology research in France’s Ministry of Culture. Previous remote studies of the shipwreck conducted by L’Hour’s team made it possible for OceanOne to navigate the site. Vincent Creuze of the Université de Montpellier in France commanded the support underwater vehicle that provided third-person visuals of OceanOne and held its support tether at a safe distance.

Several students played key roles in OceanOne’s success, including graduate students Gerald Brantner, Xiyang Yeh, Boyeon Kim, Brian Soe and Hannah Stuart, who joined Khatib in France for the expedition, as well as Shameek Ganguly, Mikael Jorda, Shiquan Wang and a number of undergraduate and graduate students. Khatib also drew on the expertise of Mark Cutkosky, a professor of mechanical engineering, and his students for designing and building the robotic hands.

Next month, OceanOne will return to the Stanford campus, where Khatib and his students will continue iterating on the platform. The prototype robot is a fleet of one, but Khatib hopes to build more units, which would work in concert during a dive.

In addition to Stanford, the development of the robot was supported by Meka Robotics and the King Abdullah University of Science and Technology (KAUST) in Saudi Arabia.
