WHY THIS MATTERS IN BRIEF

Exascale computing has been a long time coming, and the power of these machines could change human civilisation …

Love the Exponential Future? Join our XPotential Community, future proof yourself with courses from XPotential University, read about exponential tech and trends, connect, watch a keynote, or browse my blog.

Frontier, the world’s first exascale supercomputer, came online late last year for general scientific use at Oak Ridge National Laboratory in Tennessee. Another such machine, Aurora, is seemingly on track to be completed any day now at Argonne National Laboratory in Illinois. Not to be left out of the exascale computing race, the European Union has announced that it’s getting its own monster.

Through a €500 million pan-European effort, an exascale supercomputer called JUPITER (Joint Undertaking Pioneer for Innovative and Transformative Exascale Research) will be installed sometime later this year at the Forschungszentrum Jülich, in Germany.

Thomas Lippert, director of the Jülich Supercomputing Center, likens the addition of JUPITER, and the expanding supercomputing infrastructure in Europe more broadly, to the construction of an astonishing new telescope.

“We will resolve the world much better,” he says. The European Union–backed high-performance computing arm, EuroHPC JU, is underwriting half the cost of the new exascale machine. The rest comes from German federal and state sources.

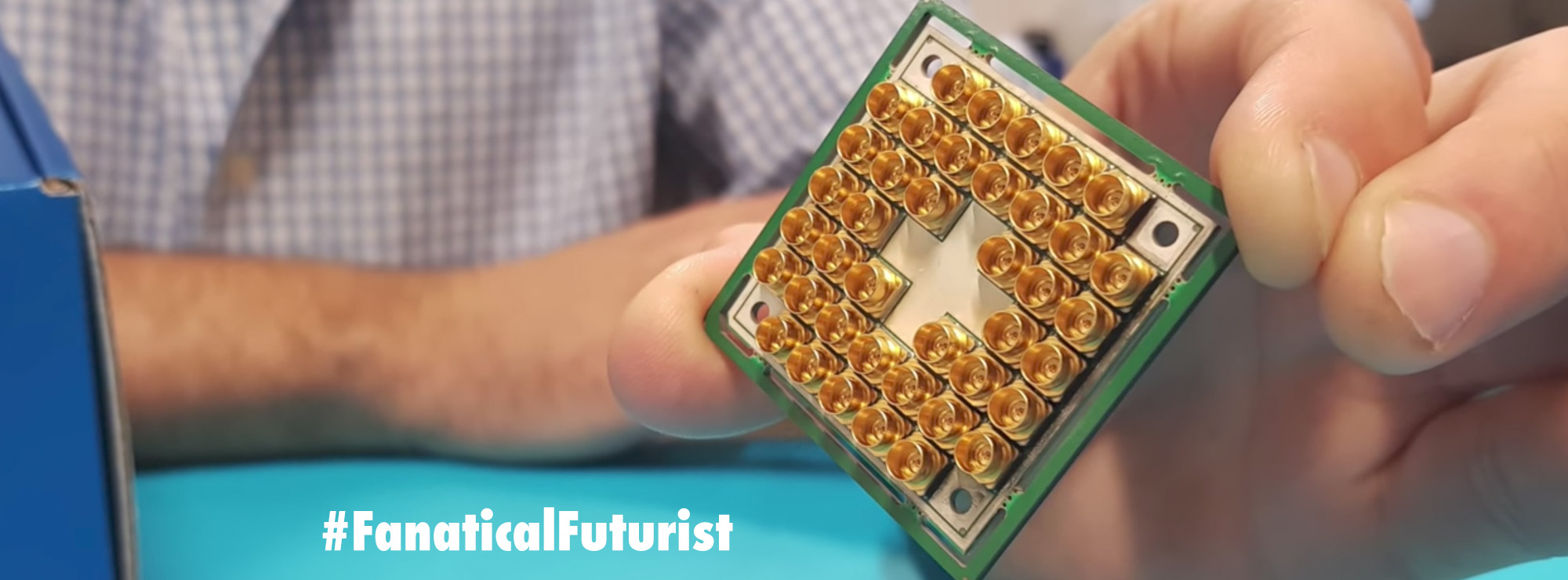

Exascale supercomputers can, by definition, surpass an exaflop – more than a quintillion (10^18) floating-point operations per second – and doing so requires enormous machines. JUPITER will reside in a cavernous new building housing several shipping-container-size water-cooled enclosures. Each of these enclosures will hold a collection of closet-size racks, and each rack will support many individual processing nodes.
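To put the exaflop threshold in perspective, here is a small Python sketch of the arithmetic. The workload size and the petaflop comparison machine are illustrative assumptions chosen for round numbers, not figures for JUPITER, and the run times are ideal ones that ignore memory, storage and communication costs.

```python
# One exaflop = 10**18 floating-point operations per second (a quintillion).
EXAFLOP = 10**18
PETAFLOP = 10**15  # comparison point, a thousand times slower


def seconds_to_finish(total_operations: int, flops: int) -> float:
    """Return the ideal run time, ignoring memory, I/O and communication costs."""
    return total_operations / flops


# Hypothetical workload of 3.6 * 10**21 operations (an assumption, not a real job).
workload = 36 * 10**20

exa_hours = seconds_to_finish(workload, EXAFLOP) / 3600
peta_hours = seconds_to_finish(workload, PETAFLOP) / 3600

print(f"exaflop machine:  {exa_hours:.0f} hour(s)")
print(f"petaflop machine: {peta_hours:.0f} hour(s)")
```

Under these assumptions, a job that would occupy a petaflop machine for about six weeks finishes in an hour at exascale.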

How many nodes will there be? The numbers for JUPITER aren’t yet set, but you can get some idea from JUWELS (shorthand for Jülich Wizard for European Leadership Science), a recently upgraded system currently ranking 12th on the Top500 list of the world’s most powerful supercomputers. JUPITER will sit close by but in a separate building from JUWELS, which boasts more than 3,500 computing nodes all told.

With contracts still out for bid at press time, scientists at the center were keeping schtum on the chip specs for the new machine. Even so, the overall architecture is established, and outsiders can get some hints about what to expect by looking at the other brawny machines at Jülich and elsewhere in Europe.

JUPITER will rely on GPU-based accelerators alongside a universal cluster module, which will contain CPUs. The planned architecture also includes high-capacity disk and flash storage, along with dedicated backup units and tape systems for archival data storage.

The JUWELS supercomputer uses Atos BullSequana X hardware, with AMD EPYC processors and Mellanox HDR InfiniBand interconnects. The most recent EuroHPC-backed supercomputer to come online, Finland-based LUMI, or Large Unified Modern Infrastructure, meanwhile uses HPE Cray hardware, AMD EPYC processors, and HPE Slingshot interconnects. LUMI is currently ranked third in the world. If JUPITER follows suit, it may be similar in many respects to Frontier, which hit exascale in May 2022, also using Cray hardware with AMD processors.

“The computing industry looks at these numbers to measure progress, like a very ambitious goal: flying to the moon,” says Christian Plessl, a computer scientist at Paderborn University, in Germany. “The hardware side is just one aspect. Another is, how do you make good use of these machines to run exascale applications?”

Plessl has teamed up with chemist Thomas Kühne to run atomic-level simulations of both HIV and the spike protein of SARS-CoV-2, the virus that causes COVID-19. Last May, the duo ran exaflop-scale calculations for their SARS-CoV-2 simulation – involving millions of atoms vibrating on a femtosecond timescale – with quantum-chemistry software running on the Perlmutter supercomputer. They exceeded an exaflop because these calculations were done at lower precision, using 16-bit and 32-bit numbers, as opposed to the 64-bit precision that is the current standard for counting flops.
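The trade-off behind those lower-precision runs can be seen in a short Python sketch: the same number is stored in 16, 32 and 64 bits and read back, and the rounding error is compared. This is only an illustration of the IEEE 754 formats, using the standard `struct` module, not the method used in the simulations described above. The test value 0.1 is an arbitrary choice.

```python
import struct


def round_trip(value: float, fmt: str) -> float:
    """Store value in the given IEEE 754 format and read it back as a Python float."""
    return struct.unpack(fmt, struct.pack(fmt, value))[0]


value = 0.1  # arbitrary test value that no binary format represents exactly

# struct format codes: "e" = 16-bit half, "f" = 32-bit single, "d" = 64-bit double.
formats = {"16-bit": "<e", "32-bit": "<f", "64-bit": "<d"}

# Python floats are already 64-bit, so the 64-bit round trip loses nothing.
errors = {name: abs(round_trip(value, fmt) - value) for name, fmt in formats.items()}

for name, err in errors.items():
    print(f"{name}: rounding error {err:.3e}")
```

Narrower formats lose more digits, but hardware can typically process more of them per second, which is why a calculation that tolerates the extra rounding error can run at a higher flop rate.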

Kühne is excited by JUPITER and its potential for running even more demanding high-throughput calculations, the kind of calculations that might show how to use sunlight to split water into hydrogen and oxygen for clean-energy applications. Jose M. Cela at the Barcelona Supercomputing Center says that exascale capabilities are essential for certain combustion simulations, for really-large-scale fluid dynamics, and for planetary simulations that encompass whole climates.

Lippert is also looking forward to a kind of federated supercomputing, where the several European supercomputer centers will use their huge machines in concert, distributing calculations to the appropriate supercomputers via a service hub. Cela says communication speeds between centers aren’t fast enough yet to manage this for some problems – a gas-turbine combustion simulation, for example, must be done inside a single machine. But this approach could be useful for certain problems in the life sciences, such as in genetic and protein analysis. The EuroHPC JU’s Daniel Opalka says European businesses will also make use of this burgeoning supercomputing infrastructure.

Even as supercomputers get faster and larger, they must work harder to be more energy efficient. That’s especially important in Europe, which is enduring what may be a long, costly energy crisis.

JUPITER will draw 15 megawatts of power during operation, with plans calling for it to run on clean energy. With wind turbines getting bigger and better, JUPITER’s energy demands could perhaps be met with just a couple of mammoth turbines. And with cooling water circulating among the mighty computing boxes, the hot water that results could be used to heat homes and businesses nearby, as is being done with LUMI in Finland. It’s one more way this computing powerhouse will be tailored to the EU’s energy realities.
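For a rough sense of scale, the Python sketch below turns the 15-megawatt figure into annual energy and a turbine count. Only the 15 megawatts comes from the text above. The turbine rating and capacity factor are hypothetical placeholder values, and the calculation assumes the machine draws its full load around the clock.

```python
import math

HOURS_PER_YEAR = 24 * 365  # non-leap year


def annual_energy_mwh(load_mw: float) -> float:
    """Energy used in a year if the load is drawn continuously."""
    return load_mw * HOURS_PER_YEAR


def turbines_needed(load_mw: float, rated_mw: float, capacity_factor: float) -> int:
    """Turbines whose average output covers the load (no storage or grid effects)."""
    average_output_mw = rated_mw * capacity_factor
    return math.ceil(load_mw / average_output_mw)


JUPITER_LOAD_MW = 15  # figure quoted in the article

# Hypothetical turbine, for illustration only (not from the article).
ASSUMED_RATED_MW = 15.0
ASSUMED_CAPACITY_FACTOR = 0.5

energy = annual_energy_mwh(JUPITER_LOAD_MW)
count = turbines_needed(JUPITER_LOAD_MW, ASSUMED_RATED_MW, ASSUMED_CAPACITY_FACTOR)

print(f"annual energy: {energy:,.0f} MWh")
print(f"turbines needed under these assumptions: {count}")
```

The turbine count is sensitive to the assumed capacity factor, so it should be read as an order-of-magnitude check on the "couple of turbines" estimate rather than a plan.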