WHY THIS MATTERS IN BRIEF

If you ever wanted to spy on anyone, the cameras of the future won’t disappoint.

Interested in the Exponential Future? Join our XPotential Community, future proof yourself with courses from our XPotential Academy, connect, watch a keynote, or browse my blog.


It’s long been known that bugs would make the perfect spying companions, except for the fact that controlling them, or re-creating them in robot form, has proven very difficult. But now every spy’s dream can become a reality: last year researchers turned a living dragonfly into a controllable cyborg drone, and this week scientists announced they’ve finally cracked the development of a bug-sized camera. Combine the two and, voila, you have the “fly on the wall” spy you’ve always dreamt of.

By future standards, though, even this new bug-sized camera looks huge. There are already cameras smaller than a human hair that scan the inside of your body as they travel through the bloodstream, and nano-thin ones that see around corners and even record your insides from the outside.

The camera, which streams video to a smartphone at 1 to 5 frames per second, sits on a mechanical arm that can pivot 60 degrees. The whole system weighs about 250 milligrams, roughly one-tenth the weight of a playing card, and to demonstrate its versatility the team mounted it on top of live beetles.

“We have created a low-power, low-weight, wireless camera system that can capture a first-person view of what’s happening from an actual live insect or create vision for small robots,” says senior author Shyam Gollakota, an associate professor in the Paul G. Allen School of Computer Science & Engineering at the University of Washington.

“Vision is so important for communication and for navigation, but it’s extremely challenging to do it at such a small scale. As a result, prior to our work, wireless vision has not been possible for small robots or insects.”

Typical small cameras, such as those used in smartphones, use a lot of power to capture wide-angle, high-resolution photos, and that doesn’t work at the insect scale. While the cameras themselves are lightweight, the batteries they need to support them make the overall system too big and heavy for insects, or eventually insect-sized robots like these robo-bees, to lug around. So the team took a lesson from biology.

“Similar to cameras, vision in animals requires a lot of power,” says coauthor Sawyer Fuller, an assistant professor of mechanical engineering.

One tiny camera on one big bug. Courtesy: UW

“It’s less of a big deal in larger creatures like humans, but flies are using 10 to 20% of their resting energy just to power their brains, most of which is devoted to visual processing. To help cut the cost, some flies have a small, high-resolution region of their compound eyes. They turn their heads to steer where they want to see with extra clarity, such as for chasing prey or a mate. This saves power over having high resolution over their entire visual field.”

To mimic an animal’s vision, the researchers used a tiny, ultra-low-power black-and-white camera that can sweep across a field of view with the help of a mechanical arm. The arm moves when the team applies a high voltage, which makes the material bend and move the camera to the desired position.

Unless the team applies more power, the arm stays at that angle for about a minute before relaxing back to its original position. This is similar to how people can keep their head turned in one direction for only a short period of time before returning to a more neutral position.

“One advantage to being able to move the camera is that you can get a wide-angle view of what’s happening without consuming a huge amount of power,” says co-lead author Vikram Iyer, a doctoral student in electrical and computer engineering.

“We can track a moving object without having to spend the energy to move a whole robot. These images are also at a higher resolution than if we used a wide-angle lens, which would create an image with the same number of pixels divided up over a much larger area.”
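The resolution trade-off Iyer describes can be illustrated with a little arithmetic. The sketch below is only an illustration under stated assumptions: the sensor width and the lens field of view are hypothetical placeholders, not figures from the researchers, and only the 60-degree pivot range comes from the article. It compares the pixels per degree of a narrow lens that is steered across a scene with a single wide-angle lens that covers the same total angle using the same number of pixels.

```python
def pixels_per_degree(pixels_across: int, field_of_view_deg: float) -> float:
    """Angular resolution: how many pixels are spent on each degree of the scene."""
    return pixels_across / field_of_view_deg


PIXELS_ACROSS = 160      # hypothetical sensor width, not from the article
NARROW_FOV_DEG = 30.0    # hypothetical field of view of the steered lens
PIVOT_RANGE_DEG = 60.0   # pivot range of the mechanical arm, from the article

# Total angle the steered camera can cover by sweeping its narrow view.
covered_deg = NARROW_FOV_DEG + PIVOT_RANGE_DEG

steered = pixels_per_degree(PIXELS_ACROSS, NARROW_FOV_DEG)
wide_angle = pixels_per_degree(PIXELS_ACROSS, covered_deg)

print(f"Steered narrow lens: {steered:.2f} pixels per degree")
print(f"Fixed wide-angle lens over {covered_deg:.0f} degrees: {wide_angle:.2f} pixels per degree")
print(f"Steering gives {steered / wide_angle:.1f}x the angular resolution")
```

With these placeholder numbers the steered camera spends three times as many pixels on each degree of the scene, at the cost of only seeing part of it at any one moment.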

The camera and arm are controlled via Bluetooth from a smartphone at a distance of up to 120 meters, just a little longer than a football field.

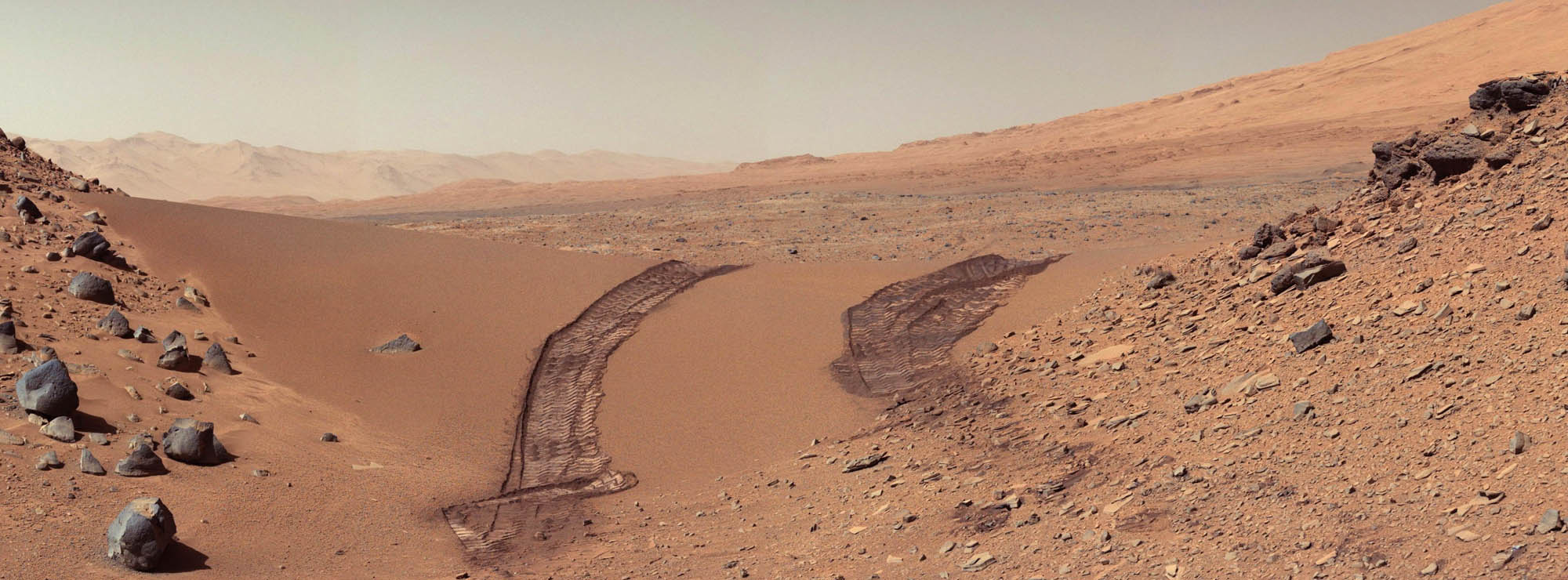

The researchers attached their removable system to the backs of two different types of beetles: a death-feigning beetle and a Pinacate beetle. Similar beetles are known to carry loads heavier than half a gram, the researchers say.

“We made sure the beetles could still move properly when they were carrying our system,” says co-lead author Ali Najafi, a doctoral student in electrical and computer engineering. “They were able to navigate freely across gravel, up a slope, and even climb trees.”

The beetles also lived for at least a year after the experiment ended.

“We added a small accelerometer to our system to be able to detect when the beetle moves. Then it only captures images during that time,” Iyer says.

“If the camera is just continuously streaming without this accelerometer, we could record one to two hours before the battery died. With the accelerometer, we could record for six hours or more, depending on the beetle’s activity level.”
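The saving from motion-gated capture can be sketched with back-of-the-envelope arithmetic. The snippet below is a rough illustration, not the team’s firmware: it assumes the power drawn while the camera is idle is negligible compared with streaming (the article gives no idle figure), so battery life scales inversely with the fraction of time the beetle is moving. The function name and the activity levels are hypothetical.

```python
def gated_battery_life_hours(continuous_hours: float, active_fraction: float) -> float:
    """Estimate battery life when the camera only streams while the beetle moves.

    Assumes idle power draw is negligible, which is a simplification
    and not a figure reported in the article.
    """
    if not 0 < active_fraction <= 1:
        raise ValueError("active_fraction must be in (0, 1]")
    return continuous_hours / active_fraction


# Continuous streaming lasts one to two hours, per the article.
for continuous in (1.0, 2.0):
    for active in (1.0, 0.5, 0.25):  # hypothetical activity levels
        hours = gated_battery_life_hours(continuous, active)
        print(f"continuous={continuous}h, active {active:.0%} of the time -> {hours:.1f}h")
```

Under this simplification, stretching a two-hour continuous budget to six hours corresponds to a beetle that moves about a third of the time, which is consistent with the quoted figure depending on the beetle’s activity level.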

The researchers also used their camera system to design the world’s smallest terrestrial, power-autonomous robot with wireless vision. This insect-sized robot uses vibrations to move and consumes almost the same power as low-power Bluetooth radios need to operate.

The team found, however, that the vibrations shook the camera and produced distorted images. The researchers solved this issue by having the robot stop momentarily, take a picture and then resume its journey. With this strategy, the system was still able to move about 2 to 3 centimeters per second – faster than any other tiny robot that uses vibrations to move – and had a battery life of about 90 minutes.
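To put those figures in perspective, the distance the robot could cover on one charge can be estimated directly from them. This is a rough sketch that assumes the quoted 2 to 3 centimeters per second is an average that already includes the stops for pictures, and that the robot keeps moving for the whole 90 minutes; neither assumption is confirmed by the article, and the function name is hypothetical.

```python
def range_meters(speed_cm_per_s: float, battery_minutes: float) -> float:
    """Distance covered at a constant average speed over one battery charge."""
    seconds = battery_minutes * 60
    return speed_cm_per_s * seconds / 100  # centimeters to meters


low = range_meters(2, 90)
high = range_meters(3, 90)
print(f"Approximate range per charge: {low:.0f} to {high:.0f} meters")
```

That works out to roughly 108 to 162 meters per charge under these assumptions.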

While the team is excited about the potential for lightweight and low-power mobile cameras, the researchers acknowledge that this technology comes with a new set of privacy risks.

“As researchers we strongly believe that it’s really important to put things in the public domain so people are aware of the risks and so people can start coming up with solutions to address them,” Gollakota says.

Applications could range from biology to exploring novel environments, the researchers say. The team hopes that future versions of the camera will require even less power and be battery free, potentially solar-powered.

“This is the first time that we’ve had a first-person view from the back of a beetle while it’s walking around. There are so many questions you could explore, such as how does the beetle respond to different stimuli that it sees in the environment?” Iyer says. “But also, insects can traverse rocky environments, which is really challenging for robots to do at this scale. So this system can also help us out by letting us see or collect samples from hard-to-navigate spaces.”

Johannes James, a UW mechanical engineering doctoral student, is also a coauthor of the paper. Funding came from a Microsoft fellowship and the National Science Foundation.

The results appear in Science Robotics.

Source: University of Washington