WHY THIS MATTERS IN BRIEF

As companies look for easy ways to render the real world into virtual worlds, this space is accelerating.

Interested in the Exponential Future? Connect, download a free E-Book, watch a keynote, or browse my blog.

Hot on the heels of Nvidia, which recently released its own very impressive so-called Video-to-Video Artificial Intelligence (AI) system, Samsung researchers have unveiled an AI that generates realistic 3D renders of video scenes. Like Nvidia's solution, these renders could then be edited and used in everything from movie and video game production to helping future consumers relive special memories in Virtual Reality (VR). For my ten cents, I think it's the latter use case that Samsung will focus on developing, so keep an eye out for this feature appearing in Samsung's consumer products within the next five years.

In a paper detailing the neural network behind the AI, the researchers explained the inefficient process of creating virtual scenes today:

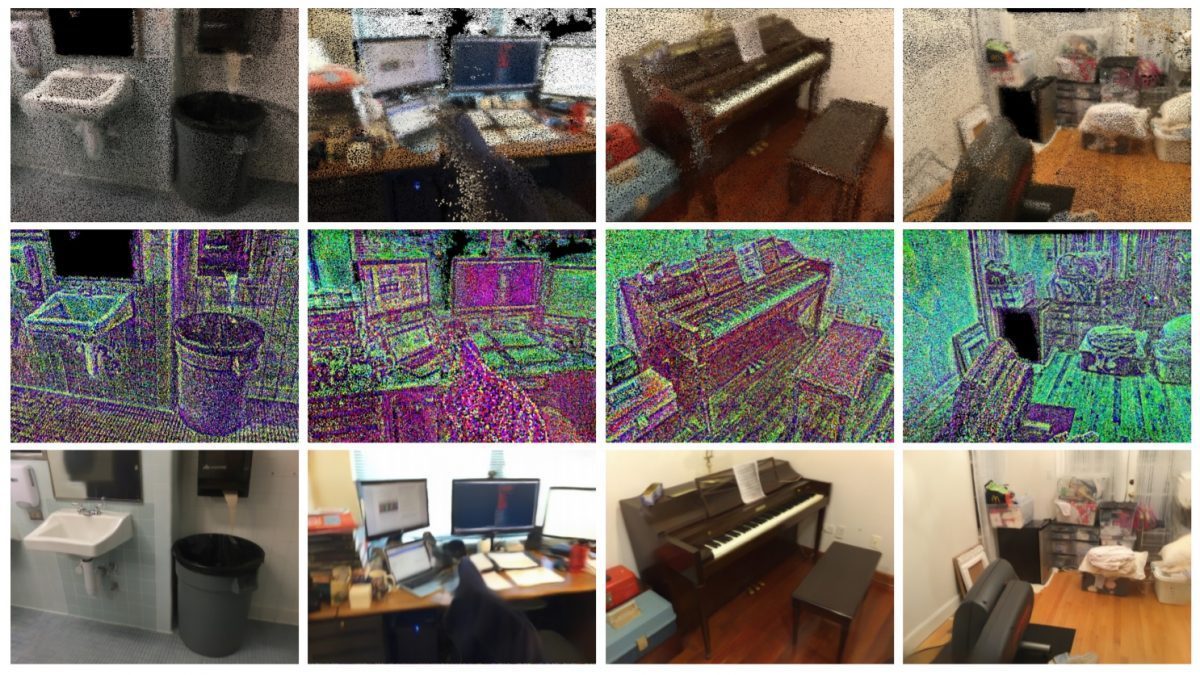

From the real world to the digital one, in a flash. Courtesy: Samsung

“Creating virtual models of real scenes usually involves a lengthy pipeline of operations. Such modelling usually starts with a scanning process, where the photometric properties are captured using camera images and the raw scene geometry is captured using depth scanners or dense stereo matching,” they said, adding, “the latter process usually provides noisy and incomplete point cloud that needs to be further processed by applying certain surface reconstruction and meshing approaches. Given the mesh, the texturing and material estimation processes determine the photometric properties of surface fragments and store them in the form of 2D parameterized maps, such as texture maps, bump maps, view-dependent textures, surface lightfields. Finally, generating photorealistic views of the modelled scene involves computationally-heavy rendering process such as ray tracing and/or radiance transfer estimation.”

Samsung's approach cuts out much of this pipeline. The video input is converted into points that represent the geometry of the scene, and a neural network then renders these points into photorealistic images. This vastly speeds up the process of creating a photorealistic 3D scene that can be turned into a VR world, and you can see the result in the video.
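The article doesn't spell out how the points get turned into images, so here is a rough, hypothetical sketch of the first step in this kind of point-based rendering, assuming a simple pinhole camera. It projects 3D points into a small image and uses a z-buffer to keep only the nearest point per pixel. In a neural approach each point would carry a learned descriptor rather than a plain colour, and a neural network (not shown) would fill in the gaps to produce the final photorealistic frame. The function name `rasterize_points`, the camera model, and its parameters are my own illustrative assumptions, not Samsung's code.

```python
from typing import List, Optional, Sequence, Tuple

Point = Tuple[float, float, float]  # (x, y, z) in camera space, z pointing forward


def rasterize_points(
    points: Sequence[Point],
    descriptors: Sequence[Sequence[float]],
    focal: float,
    width: int,
    height: int,
) -> List[List[Optional[List[float]]]]:
    """Hypothetical helper: pinhole-project points and z-buffer them.

    Returns a height x width grid where each pixel holds the descriptor of the
    nearest point that lands on it, or None if no point projects there.
    """
    cx, cy = width / 2.0, height / 2.0  # assumption: principal point at image centre
    depth = [[float("inf")] * width for _ in range(height)]
    image: List[List[Optional[List[float]]]] = [[None] * width for _ in range(height)]
    for (x, y, z), desc in zip(points, descriptors):
        if z <= 0:
            continue  # point is behind the camera, so it cannot be seen
        u = int(round(focal * x / z + cx))  # column index
        v = int(round(focal * y / z + cy))  # row index
        if 0 <= u < width and 0 <= v < height and z < depth[v][u]:
            depth[v][u] = z  # keep only the closest point per pixel
            image[v][u] = list(desc)
    return image


# Usage: two points along the same ray (the nearer one wins) plus one off to the side.
points = [(0.0, 0.0, 2.0), (0.0, 0.0, 5.0), (1.0, 0.0, 2.0)]
descs = [[1.0], [2.0], [3.0]]
img = rasterize_points(points, descs, focal=2.0, width=4, height=4)
```

Keeping only the nearest point per pixel is the usual z-buffer choice, so hidden surfaces don't bleed through; everything left as `None` is the kind of gap the rendering network would be trained to fill.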

As mentioned, this type of product could one day help speed up game development, especially for video game tie-ins of movies that are already being filmed. Footage from a film set could provide a replica 3D environment in which game developers could build interactive experiences. One of the most exciting use cases, though, would be letting consumers relive their birthday parties, weddings, and other events by recording them and having the system automatically convert those videos into photorealistic VR worlds. They could then relive those memories all over again using just a VR headset and perhaps even haptic clothing, like the Teslasuit, that lets them experience the moment not just through their eyes but through their other senses too.

Before such use cases are realised, though, the tech still needs some refinement: current scenes can't be altered or edited, and any large deviation from the original viewpoint results in distortions. Still, it's a fascinating early insight into what could be possible in the not-so-distant future.