WHY THIS MATTERS IN BRIEF

Not being able to speak other languages divides us, and it prevents us and the machines from understanding each other and the content we all generate. By breaking down communication barriers we are opening ourselves up to a new world of data and possibilities.

In the last 10 years, Google Translate has grown from supporting just a few languages to 103, and it now translates over 140 billion words every day. To make this possible, the Google team responsible for the program has had to build many different systems in order to translate between any two languages, and this has come at a huge computational cost.

New developments in neural networks are having an impact on many different fields. Google’s artificial intelligence (AI) lab DeepMind, for example, is influencing everything from natural speech processing to the search for better cancer treatments. Against that backdrop, the team were convinced they could boost both the efficiency of their system and the quality of its translations even further, but doing so meant fundamentally rethinking the technology behind Google Translate.

In September, the team announced that Google Translate was switching to a new system called Google Neural Machine Translation (GNMT), an end-to-end learning framework that learns from millions of examples and that significantly improved translation quality, bringing it closer to human levels. But while switching to GNMT improved the quality for the languages it was tested on, scaling it up to cover all 103 supported languages presented the team with a significant challenge.

In Google’s latest project, entitled “GNMT: Enabling Zero-Shot Translation”, the team addressed this challenge by extending the previous GNMT system to create a single system that could translate between multiple languages.

The new architecture didn’t require any changes to the base GNMT system. Instead, it inserted an additional “token” at the beginning of each input sentence to specify the target language to translate into. More importantly, as well as improving the translation quality of the system even further, the new method enabled “Zero-Shot Translation”, that is, translation between language pairs the system has never seen before.
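The token idea can be sketched in a few lines of Python. This is a minimal illustration only: the token format `<2ko>` and the helper name `add_target_token` are assumptions made for the example, since the article does not say what the tokens in Google's system actually look like.

```python
def add_target_token(sentence: str, target_lang: str) -> str:
    """Prefix a source sentence with a token naming the desired target language.

    The "<2xx>" format is a hypothetical choice for this sketch.
    """
    return f"<2{target_lang}> {sentence.strip()}"


# The same English sentence, routed to two different target languages.
# Only the leading token changes; the model and the sentence stay the same.
to_korean = add_target_token("How are you?", "ko")
to_japanese = add_target_token("How are you?", "ja")

print(to_korean)    # <2ko> How are you?
print(to_japanese)  # <2ja> How are you?
```

Because the target language is carried in the input itself, one model can serve every direction without any change to its architecture.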

Normally the system would translate, for example, English into Korean, but it might never have translated Urdu directly into Korean. The “old” system would first have had to translate Urdu into English and then English into Korean. When you look at the sheer number of language combinations, which for 103 languages runs to more than ten thousand ordered pairs, you can see why the system was so bloated and inefficient.
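As a back-of-the-envelope sketch of the scale involved, the snippet below counts ordered source-to-target pairs among the 103 supported languages and compares that with routing everything through a single pivot language such as English. The function names are hypothetical, and the assumption of one dedicated system per direction is made purely for illustration; it is not a description of how Google actually deployed its models.

```python
def ordered_pairs(num_languages: int) -> int:
    """Number of (source, target) pairs where source and target differ."""
    return num_languages * (num_languages - 1)


def pivot_directions(num_languages: int) -> int:
    """Directions needed if every translation goes through one pivot language."""
    return 2 * (num_languages - 1)


n = 103  # languages the article says Google Translate supports
print(ordered_pairs(n))     # 10506
print(pivot_directions(n))  # 204
```

Pivoting keeps the number of directions small but costs two translation steps per request, whereas covering every pair directly avoids the detour at the price of far more systems.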

So let’s put the new system into practice.

Let’s say we train a multilingual system with examples in both directions between Japanese and English and between Korean and English, shown by the solid blue lines in the animation below. The new multilingual system shares its parameters across these four language pairs, and this sharing lets the system transfer the “translation knowledge” from one language pair to the others. This “transfer learning”, together with the need to translate between multiple languages, forces the neural net to improve its modelling power.

This then inspired the team to ask if they could translate between a language pair the system had never seen before, for example between Korean and Japanese, where the system had never been shown any Korean to Japanese examples. Impressively, the answer that came back was yes: the system could generate reasonable Korean to Japanese translations even though it had never been taught to do so.
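The scenario above can be laid out as a small Python sketch that lists which directions count as zero-shot. It assumes the four trained directions are Japanese and Korean to and from English; the language codes and variable names are illustrative choices, not taken from Google's system.

```python
from itertools import permutations

languages = ["en", "ja", "ko"]

# Directions with training examples in this scenario (an assumption:
# Japanese and Korean, each to and from English).
trained = {("ja", "en"), ("en", "ja"), ("ko", "en"), ("en", "ko")}

# Every ordered (source, target) pair among the three languages.
all_directions = set(permutations(languages, 2))

# Zero-shot directions are the ones the model is asked to translate
# without ever having seen a training example for them.
zero_shot = sorted(all_directions - trained)
print(zero_shot)  # [('ja', 'ko'), ('ko', 'ja')]
```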

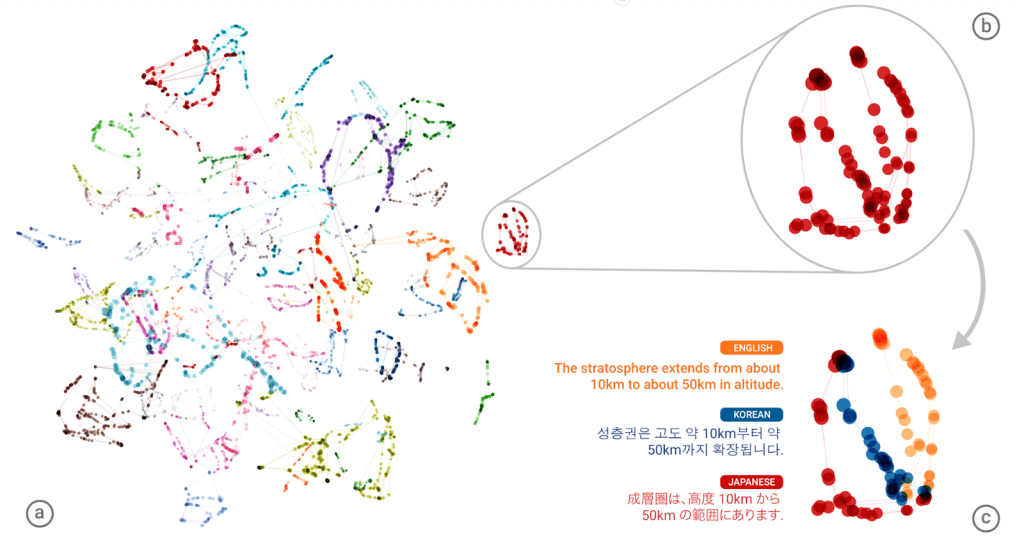

The success of these first passes then raised another important question: is the system learning a common representation in which sentences with the same meaning are represented in similar ways regardless of language, that is, an “interlingua”? Using a 3D representation of internal network data, the team were able to take a peek into the black box as it translated a set of sentences between all possible pairs of the Japanese, Korean, and English languages.

Part (a) of the figure above shows the overall geometry of these translations. The points in this view are colored by meaning: a sentence translated from English to Korean shares the same color as a sentence with the same meaning translated from Japanese to English. From this view we can see distinct groupings of points, each with its own color. Part (b) zooms in on one of the groups, and part (c) colors the points by source language. Within a single group, you can see a sentence with the same meaning but from three different languages. This means the network must be encoding something about the semantics of the sentence rather than simply memorizing phrase-to-phrase translations. The team interpreted this as a sign of the existence of an interlingua in the network.

The experiment was so successful that this Multilingual Google Neural Machine Translation system is now the one supporting all of Google Translate’s users. So next time you want to translate Urdu into Afrikaans, you have this system to thank. But bearing in mind that Google’s system also recently came up with its own secret way to communicate with itself, we should probably also ask the question: what happens if it decides to change the rules?