WHY THIS MATTERS IN BRIEF

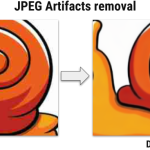

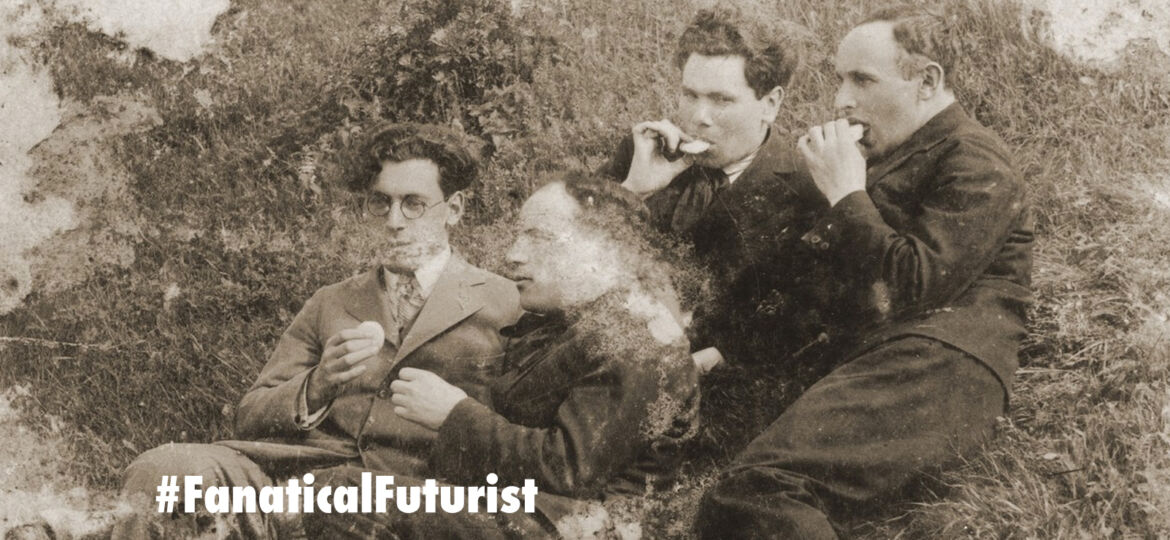

Lots of us have old or crappy photos that are in bad condition, and in the past the only place you could put them was in the bin. Now new AI image restoration technology could restore them to better than the originals.

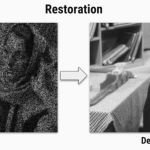

There’s nothing worse than opening an image on your computer only to find that it’s so grainy that you can’t even begin to make it out. Some people might say get a better camera. These people are mean. But computer scientists, those good, helpful people, are now saying use Artificial Intelligence (AI) instead, in the form of a new neural network, a computer system that’s loosely designed to mimic the way the human brain works.

Three computer scientists from the University of Oxford and the Skolkovo Institute of Science and Technology in Moscow who specialise in machine vision have developed a neural net that can turn that uselessly pixelated photo of avocado toast into an image that’s perfectly Instagrammable, and they call it Deep Image Prior.

Neural networks are loosely modelled on the human brain. They’re made up of thousands of nodes that they use to make decisions and judgments about the data being presented to them. Just like toddlers, they start off not knowing anything, but after a couple of thousand training sessions they can become better than humans at some everyday tasks.

Many neural networks are trained by feeding them large datasets, which gives them a huge pool of information to pull from when it comes to making a decision.

Deep Image Prior takes a different approach. It works out everything from just that single original image, not needing any prior training before it can turn your crappy, corrupted image back into a high-res shot.

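The three computer scientists used a generator network, the kind of image-drawing neural net also found inside Generative Adversarial Networks (GANs), but with no adversary and no training data. Starting from random settings, it redraws the blurry picture thousands of times, nudging its settings after each attempt, until it gets so good at it that it creates an image better than the damaged original. It uses the existing input as context to fill in the missing or damaged parts. Some of the results were even better than the output from pre-trained neural networks.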
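For the curious, here is a minimal sketch of that idea in Python. It is not the researchers' code, and it makes some big assumptions: the "photo" is a one-dimensional wave of 41 numbers, and the neural net is replaced by a tiny stand-in generator (the hypothetical `upsample` function) that stretches 11 adjustable numbers into a smooth signal. What it keeps is the core trick: start from random settings, redraw the damaged signal over and over, and only score the attempt against the parts that are still intact. All names and values here (`fit_to_damaged_signal`, `FACTOR`, `N_COARSE`, the step count and learning rate) are illustrative choices, not anything from the research.

```python
import math
import random

FACTOR = 4      # output samples per trainable number (illustrative choice)
N_COARSE = 11   # how many trainable numbers the toy generator has (illustrative)

def upsample(coarse, factor=FACTOR):
    """Toy stand-in for the generator: stretch a short list into a longer,
    smooth signal by linear interpolation between neighbouring values."""
    out = []
    for i in range(len(coarse) - 1):
        for k in range(factor):
            t = k / factor
            out.append((1 - t) * coarse[i] + t * coarse[i + 1])
    out.append(coarse[-1])
    return out

def fit_to_damaged_signal(damaged, known, steps=2000, lr=0.1, seed=0):
    """Fit the generator to ONE damaged signal by gradient descent.
    Only samples flagged True in `known` contribute to the squared error."""
    rng = random.Random(seed)
    coarse = [rng.uniform(-0.1, 0.1) for _ in range(N_COARSE)]  # random start
    for _ in range(steps):
        out = upsample(coarse)
        grad = [0.0] * N_COARSE
        for j, (o, x, ok) in enumerate(zip(out, damaged, known)):
            if not ok:
                continue  # damaged samples are ignored by the loss
            r = 2.0 * (o - x)            # derivative of (o - x)^2 w.r.t. o
            i, k = divmod(j, FACTOR)     # which two coarse values made sample j
            t = k / FACTOR
            grad[i] += r * (1 - t)
            if t > 0:
                grad[i + 1] += r * t
        coarse = [c - lr * g for c, g in zip(coarse, grad)]
    return upsample(coarse)

# A clean wave, then the same wave with three samples wiped out.
truth = [math.sin(2 * math.pi * j / 40) for j in range(41)]
hole = {17, 18, 19}
known = [j not in hole for j in range(41)]
damaged = [x if ok else 0.0 for x, ok in zip(truth, known)]

restored = fit_to_damaged_signal(damaged, known)
for j in sorted(hole):
    print(j, round(truth[j], 3), round(restored[j], 3))
```

The generator never sees the wiped-out samples, yet its built-in smoothness forces it to draw something plausible across the gap. The real system leans on the structure of a convolutional network in a similar way, which is a far richer prior than this toy's straight-line interpolation.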

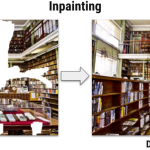

“The network kind of fills the corrupted regions with textures from nearby,” said Dmitry Ulyanov, a co-author of the research, in a Reddit post, though he admitted there are some instances where the network would fail, such as reconstructing something as complex as the human eye…

“The obvious failure case would be anything related to semantic inpainting, for example inpaint a region where you expect there to be an eye — our method knows nothing about face semantics and will fill the corrupted region with some [random] textures.”

Aside from restoring photos, Deep Image Prior was also able to successfully remove text placed over images, which raises the concern that this model could be used to remove watermarks or other copyright information from images online – a real-world possibility that was perhaps overlooked during this research.

This experiment means that those dodgy photos you have, whether they’re in the loft or on your smartphone, could one day be restored to a better-than-the-original state, especially when it’s combined with new image sharpening tools like Google’s RAISR technology. It also shows that you don’t need access to a colossal dataset to create a functioning neural network. Beyond all the good this could do for your photos folder, that might end up being this project’s most lasting contribution.