WHY THIS MATTERS IN BRIEF

Many people don’t realise that AIs are amnesiacs: they forget almost everything they’re taught. Now a new human-like memory system could be about to take AI to the next level and make them much, much smarter.

When researchers train their latest and greatest artificial intelligence (AI) systems there’s one big, fundamental flaw they have to deal with: AIs, by nature and by design, are amnesiacs. That is to say, they have great difficulty retaining knowledge between tasks. It’s the equivalent of trying to teach someone something new when they’re always forgetting what they learnt before. So, as you can imagine, teaching an AI something new can get quite frustrating, and there’s even a special term for the problem: “catastrophic forgetting.” Catchy.

Now, in a new demonstration of just how close the associations between AIs and our own brains have become, Google’s DeepMind engineers have created an AI that can retain its knowledge between tasks, turning raw memory into long term experiences that stay with the program even as it moves on to other things. These are the same crazy cats who recently published a breakthrough Artificial General Intelligence (AGI) architecture, and who’ve been teaching their AIs to dream, fight with each other and annihilate online gamers. And they’ve published a paper on it.

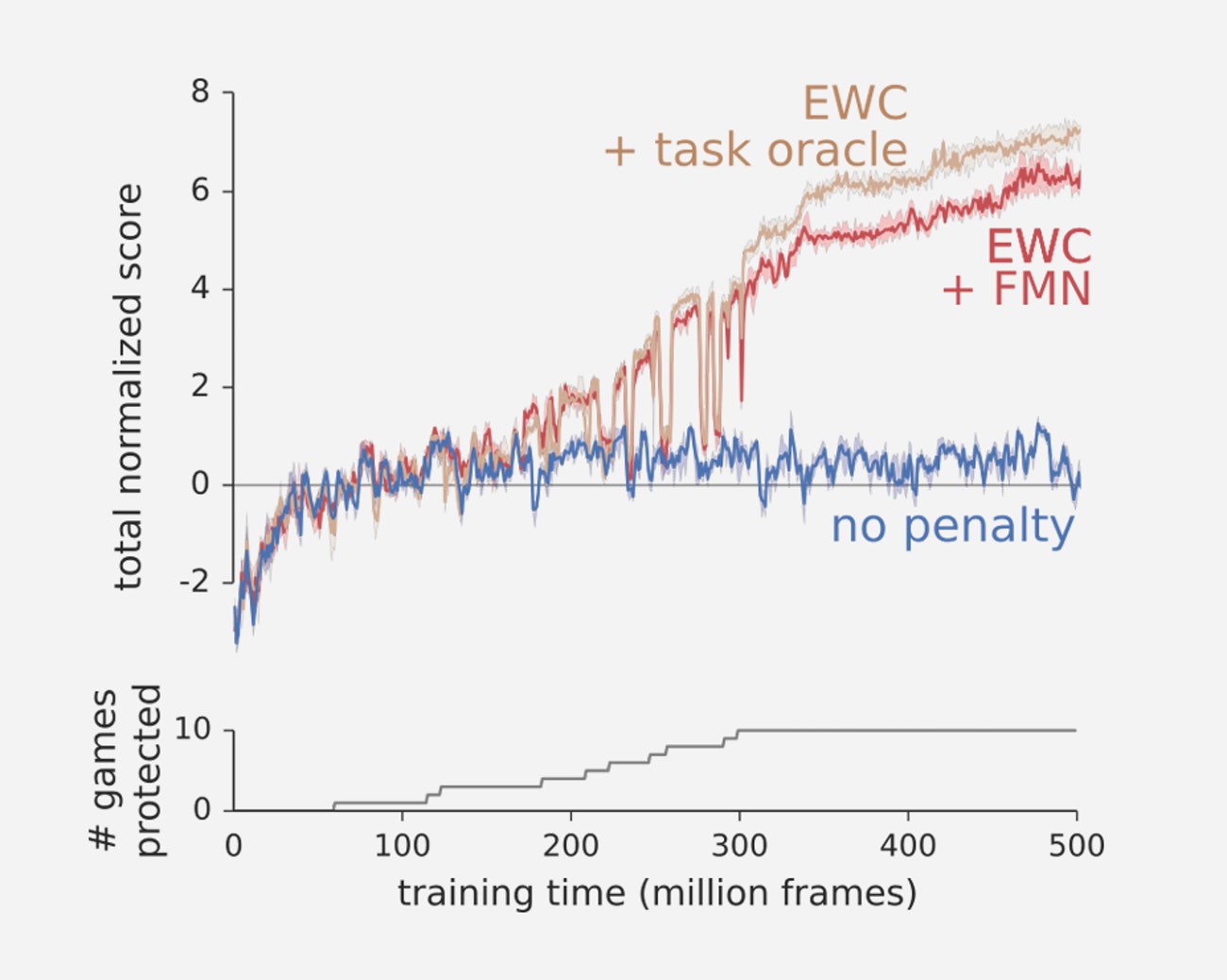

To illustrate the problem, the DeepMind team got their AI to play a series of old Atari games, with the goal of getting the highest score. The control AI had no special new memory augmentation, and it performed as well as it could given that it was an amnesiac: its skills improved slowly and at a low level, and were gained and lost over and over again. As the AI learnt each new game it essentially overwrote its old skills with new ones, making its progress slow and cumbersome.

With, and without EWC

It is, of course, possible to build a giant neural network that never fills up, and that always has space to assign new memories and experiences to fresh, previously unused “synapses,” but that creates its own problem because the system would quickly become bloated.

So let’s introduce the new DeepMind approach, “Elastic Weight Consolidation,” or EWC for short. Using EWC the team could assign what they call “weighted projections” to their new system’s synapses, making each one more, or less, likely to be overwritten in the future depending on the situation. By assigning each synapse a different level of protection the team could let the system learn new skills within the same network while only slightly altering the system’s pre-existing structure, or memories. The result was that the system retained its old skills without ballooning the size of the network to the point of uselessness.
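To make the idea of per-synapse protection a little more concrete, here is a minimal sketch in Python. It is not DeepMind’s code and the article doesn’t spell out the maths. It assumes the quadratic penalty form described in the published EWC paper, where each weight is pulled back towards its old value in proportion to how important it was for the old task. The function names, the `lam` strength parameter and the two-weight toy “tasks” are all hypothetical, chosen only for illustration.

```python
import numpy as np

def ewc_penalty(theta, theta_old, importance, lam=1.0):
    """Quadratic penalty anchoring each weight to its old-task value.

    importance[i] is the protection given to weight i (assumed here to be
    a per-weight importance estimate); lam is a hypothetical knob that
    trades old-task retention against new-task learning.
    """
    return 0.5 * lam * np.sum(importance * (theta - theta_old) ** 2)

def ewc_penalty_grad(theta, theta_old, importance, lam=1.0):
    """Gradient of ewc_penalty with respect to theta."""
    return lam * importance * (theta - theta_old)

def train_new_task(theta_old, importance, new_task_grad,
                   lam=1.0, lr=0.1, steps=200):
    """Plain gradient descent on: new-task loss + EWC penalty."""
    theta = theta_old.copy()
    for _ in range(steps):
        grad = new_task_grad(theta) + ewc_penalty_grad(
            theta, theta_old, importance, lam)
        theta -= lr * grad
    return theta

# Toy setup (an assumption, not from the article): the old task left both
# weights at 0.0, and the new task's loss 0.5 * ||theta - target||^2 wants
# them both at 1.0.
theta_a = np.array([0.0, 0.0])
importance = np.array([10.0, 0.0])   # weight 0 protected, weight 1 free
target_b = np.array([1.0, 1.0])

theta_b = train_new_task(theta_a, importance, lambda t: t - target_b)
print(theta_b)  # weight 0 stays near its old value, weight 1 moves to the new target
```

In this toy run the protected weight barely moves while the unprotected one is free to learn the new task, which is the behaviour the paragraph above describes: old skills are kept in the same network without adding any new synapses.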

The team then gave the new EWC system the same task as the older AI: to learn how to play a series of Atari games and maximise its score. But this time it wasn’t an amnesiac and didn’t lose the memory, or experiences, of its previous attempts, and as a consequence it learnt much, much faster.

Suffice to say, now that AI research is reaching a point where AIs can build and retain memories, we’ll see yet another acceleration in their evolution, and bearing in mind that they’re already evolving at a crazy rate that’s going to be something to behold. That said, as we get closer to solving the riddle of our own human consciousness, maybe the next big news will be that an AI has gained consciousness.
