The amount of energy consumed by the world’s largest hyperscale data centres has always presented the technology companies that run them with a conundrum. On the one hand, the companies need more compute power to keep supporting their growing empires; on the other, more servers and more hardware mean more energy, higher energy bills and more emissions.

In 2015 alone these data centres consumed 91 billion kilowatt hours (kWh) of electricity, equivalent to the annual output of 34 large, 500 megawatt power plants. They cost $13 billion to run and generated over 100 million tonnes of carbon dioxide. By 2020 their electricity consumption is expected to rise to 140 billion kWh, the equivalent annual output of 50 such power plants.

Keeping servers cool has become such an issue that some companies, like Facebook, have been building data centres on the edge of the Arctic Circle, while others, like Microsoft, are considering lights-out data centres at the bottom of the Atlantic.

Google, though, has spent the past three years applying machine learning to the efficiency of its data centres, and for the past two it has used the technology to manage them more efficiently. Then, over the past three months, it brought in the newest tool in its arsenal: DeepMind, the deep learning business unit it acquired in 2014 for $600 million.

DeepMind’s researchers began collaborating with Google’s data centre teams to significantly improve the data centres’ efficiency. Using a system of neural networks trained on different operating scenarios within the data centres, the teams eventually homed in on a more efficient and adaptive model that helped them better understand the dynamics inside the facilities and, ultimately, optimise their efficiency.

The model was built from historical data collected by the thousands of sensors within the data centres (readings such as temperatures, power, pump speeds and set points), which was used to train an ensemble of deep neural networks. Since the objective was to improve energy efficiency, the networks were trained to predict the average future Power Usage Effectiveness (PUE), the ratio of the total energy used by the facility to the energy used by its IT equipment.
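The training target described above can be sketched in a few lines. This is a minimal illustration, not DeepMind’s pipeline: it assumes that “average future PUE” means the mean PUE over the next few sampling intervals, and the function names, sensor field names and `horizon` parameter are hypothetical. It only builds the (sensor snapshot, target) pairs; the neural networks that would learn from them are out of scope.

```python
from statistics import mean
from typing import Dict, List, Sequence, Tuple

Reading = Dict[str, float]  # one snapshot of sensor values (hypothetical field names)


def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power Usage Effectiveness: total facility energy / IT equipment energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    return total_facility_kwh / it_equipment_kwh


def make_training_pairs(
    readings: Sequence[Reading],
    total_kwh: Sequence[float],
    it_kwh: Sequence[float],
    horizon: int,
) -> List[Tuple[Reading, float]]:
    """Pair each sensor snapshot with the mean PUE over the next `horizon` intervals."""
    pues = [pue(t, i) for t, i in zip(total_kwh, it_kwh)]
    pairs = []
    # Stop early enough that every target has a full window of future intervals.
    for t in range(len(readings) - horizon):
        target = mean(pues[t + 1 : t + 1 + horizon])
        pairs.append((readings[t], target))
    return pairs


# Hypothetical sample: four sampling intervals of sensor data and metered energy.
readings = [
    {"supply_temp_c": 18.0, "pump_speed_pct": 60.0},
    {"supply_temp_c": 18.5, "pump_speed_pct": 62.0},
    {"supply_temp_c": 19.0, "pump_speed_pct": 58.0},
    {"supply_temp_c": 18.7, "pump_speed_pct": 61.0},
]
total_kwh = [1120.0, 1150.0, 1100.0, 1130.0]
it_kwh = [1000.0, 1000.0, 1000.0, 1000.0]

pairs = make_training_pairs(readings, total_kwh, it_kwh, horizon=2)
for reading, target in pairs:
    print(reading, target)
```

Predicting an average over a window rather than the next instant smooths out short spikes in the metered PUE, which is one plausible reason for framing the target this way.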

They then trained two additional ensembles of deep neural networks to predict the data centre’s temperature and pressure over the next hour. These predictions were used to simulate the actions recommended by the PUE model and to ensure that those actions would not push the facility beyond any of its operating constraints.
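The way the temperature and pressure ensembles act as a safety check on the PUE model can be illustrated with a small search over candidate set points. In this sketch each “model” is just a callable, so the trained networks are replaced by hypothetical toy functions; the candidate actions, constraint limits and feature names are likewise assumptions. An ensemble’s prediction is taken as the mean of its members, an action is kept only if its predicted temperature and pressure stay within limits, and the surviving action with the lowest predicted PUE is chosen.

```python
from statistics import mean
from typing import Callable, Dict, List, Optional, Tuple

Features = Dict[str, float]
Model = Callable[[Features], float]  # stand-in for one trained network


def ensemble_predict(models: List[Model], features: Features) -> float:
    """Average the predictions of every member of an ensemble."""
    return mean(model(features) for model in models)


def choose_action(
    state: Features,
    candidates: List[Features],
    pue_models: List[Model],
    temp_models: List[Model],
    pressure_models: List[Model],
    max_temp_c: float,
    pressure_range: Tuple[float, float],
) -> Optional[Features]:
    """Return the candidate with the lowest predicted PUE that is predicted
    to stay within the temperature and pressure limits, or None if none do."""
    low_p, high_p = pressure_range
    best_action, best_pue = None, float("inf")
    for action in candidates:
        # Simulate applying the action: overlay its set points on the current state.
        features = {**state, **action}
        temp = ensemble_predict(temp_models, features)
        pressure = ensemble_predict(pressure_models, features)
        if temp > max_temp_c or not (low_p <= pressure <= high_p):
            continue  # predicted to break an operating constraint, so never considered
        predicted_pue = ensemble_predict(pue_models, features)
        if predicted_pue < best_pue:
            best_action, best_pue = action, predicted_pue
    return best_action


# Hypothetical toy models: warmer chilled-water supply lowers PUE but raises hall temperature.
pue_models = [
    lambda f: 1.00 + 0.004 * (30.0 - f["supply_temp_c"]),
    lambda f: 1.01 + 0.004 * (30.0 - f["supply_temp_c"]),
]
temp_models = [
    lambda f: f["supply_temp_c"] + 6.0,
    lambda f: f["supply_temp_c"] + 7.0,
]
pressure_models = [lambda f: 0.05 * f["pump_speed_pct"]]

candidates = [{"supply_temp_c": t, "pump_speed_pct": 60.0} for t in (18.0, 20.0, 22.0, 24.0)]
best = choose_action(
    {"outside_temp_c": 15.0}, candidates,
    pue_models, temp_models, pressure_models,
    max_temp_c=29.0, pressure_range=(2.0, 4.0),
)
print(best)  # -> {'supply_temp_c': 22.0, 'pump_speed_pct': 60.0}
```

Here 24 °C would give the lowest PUE of all but is rejected because its predicted hall temperature (30.5 °C) exceeds the assumed 29 °C limit. Filtering on the constraint models before comparing PUE means an efficient but unsafe action can never be selected.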

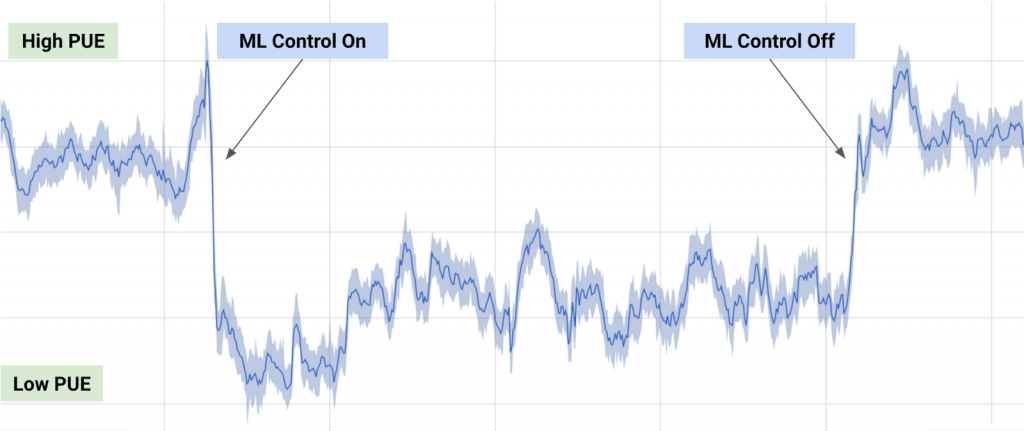

The model was then tested by deploying it in a live, tier 2 hyperscale data centre in the US Midwest, and the results were impressive. It consistently achieved a 40 percent reduction in the amount of energy used for cooling, equating to a 15 percent reduction in overall PUE overhead after accounting for electrical losses and other non-cooling inefficiencies, and it produced the lowest PUE the site had ever seen.

Fig 1. A record of power consumption before and after the trial
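DeepMind described the 15 percent figure as a cut in PUE overhead, that is PUE minus 1, the energy spent on everything other than the IT load. A short derivation shows what the two reported percentages imply together. It assumes that facility energy splits into IT load $E_{\text{IT}}$, cooling $E_{\text{cool}}$ and all other overhead $E_{\text{other}}$ (symbols introduced here for illustration), and that only cooling energy changed during the trial:

```latex
\[
\mathrm{PUE} = \frac{E_{\text{IT}} + E_{\text{cool}} + E_{\text{other}}}{E_{\text{IT}}},
\qquad
\mathrm{PUE} - 1 = \frac{E_{\text{cool}} + E_{\text{other}}}{E_{\text{IT}}}
\]
\[
\underbrace{\frac{0.40\,E_{\text{cool}}}{E_{\text{cool}} + E_{\text{other}}}}_{\text{relative cut in } \mathrm{PUE}-1} = 0.15
\quad\Longrightarrow\quad
\frac{E_{\text{cool}}}{E_{\text{cool}} + E_{\text{other}}} = \frac{0.15}{0.40} = 0.375
\]
```

Under these assumptions, cooling accounted for roughly three-eighths of the site’s non-IT energy before the trial. This is an inference from the two published percentages, not a figure DeepMind reported.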

As for what the future holds, DeepMind’s “General Purpose Framework” will be applied to other challenges in and beyond the data centre, such as improving power plant conversion efficiency, reducing energy and water usage in semiconductor manufacturing, and helping manufacturing facilities increase throughput.

Considering that Google used 4,402,836 MWh of electricity, equivalent to the amount consumed by 366,903 US households, this 15 percent cut in PUE overhead could add up to significant savings over the years if it can be repeated across Google’s other data centres, and all of a sudden buying DeepMind for $600 million seems like a steal…
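How much a 15 percent cut in PUE overhead is worth depends on figures the article does not give: Google’s baseline PUE and what it pays for electricity. The sketch below makes that dependence explicit. It treats the 4,402,836 MWh quoted above as if it were all data centre load and assumes, purely for illustration, a baseline PUE of 1.12, a price of $70 per MWh, and that the single-site result carries over to the whole fleet. None of these assumptions come from Google or DeepMind, and `annual_overhead_savings` is a hypothetical helper.

```python
from typing import Tuple


def annual_overhead_savings(
    total_mwh: float,
    baseline_pue: float,
    overhead_reduction: float,
    usd_per_mwh: float,
) -> Tuple[float, float]:
    """Energy (MWh) and cost (USD) saved by cutting PUE overhead (PUE - 1) by a fraction.

    Assumes the IT load is unchanged, so only the non-IT energy shrinks."""
    if baseline_pue < 1.0:
        raise ValueError("PUE cannot be below 1")
    it_mwh = total_mwh / baseline_pue      # energy used by the IT equipment itself
    overhead_mwh = total_mwh - it_mwh      # cooling, electrical losses and other overhead
    saved_mwh = overhead_mwh * overhead_reduction
    return saved_mwh, saved_mwh * usd_per_mwh


# Article's total; the baseline PUE, price and fleet-wide rollout are assumptions.
saved_mwh, saved_usd = annual_overhead_savings(
    total_mwh=4_402_836, baseline_pue=1.12, overhead_reduction=0.15, usd_per_mwh=70.0
)
print(f"{saved_mwh:,.0f} MWh and about ${saved_usd:,.0f} saved per year")
```

With those inputs the saving comes to roughly 70,800 MWh, or about $5 million, a year. Because overhead is only a small slice of total energy when PUE is close to 1.1, the result is very sensitive to the baseline PUE and electricity price assumed.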