WHY THIS MATTERS IN BRIEF

- Many of the AI breakthroughs of recent years have only been possible because of the exponential increase in the amount of computing power available to support them. Now Intel and other chip companies are in an arms race to own the future of AI.

Microsoft researchers recently built a neural network based AI that could recognise conversational speech as well as a human. But while at first glance this breakthrough – a world first, and a step change in human-machine understanding and communication – is impressive, nowadays it’s all too easy for this kind of news to get lost in the noise because, unlike in years gone by, we now live in an age where a new AI breakthrough is announced almost every day.

The algorithms and neural nets that power many of these breakthroughs are often made possible, and made more effective, because AIs are able to learn by drawing on, and processing, huge volumes of data.

Microsoft’s system, for example, learned to recognise words by looking for patterns in old tech support calls. But it’s not just algorithms or neural nets that are driving today’s AI revolution, it’s the computing power behind them. After all, the first system to be officially recognised and classified as an “AI” was developed in 1915, so AI has been here for over one hundred years, but the pace of development only began accelerating when the right levels of computing power became available.

Microsoft’s speech recognition system, like most systems, relies on large farms of Graphics Processing Units (GPUs) – chips that were originally designed for rendering graphics but that are remarkably adept at running new AI models because of their stunning parallel processing capabilities.

While many of the hyperscale datacentre operators, like Alibaba, Amazon, Baidu, Facebook, Google, IBM and Microsoft, train their deep neural nets using GPUs, they’re also starting to investigate and use more specialised, purpose built chips that don’t just accelerate the training of their AIs but also accelerate their execution. For example, Google recently built its own AI processor, the Tensor Processing Unit (TPU), much to the horror of Intel, and it’s not alone. Facebook, IBM and Microsoft are also building and tweaking their own chips.

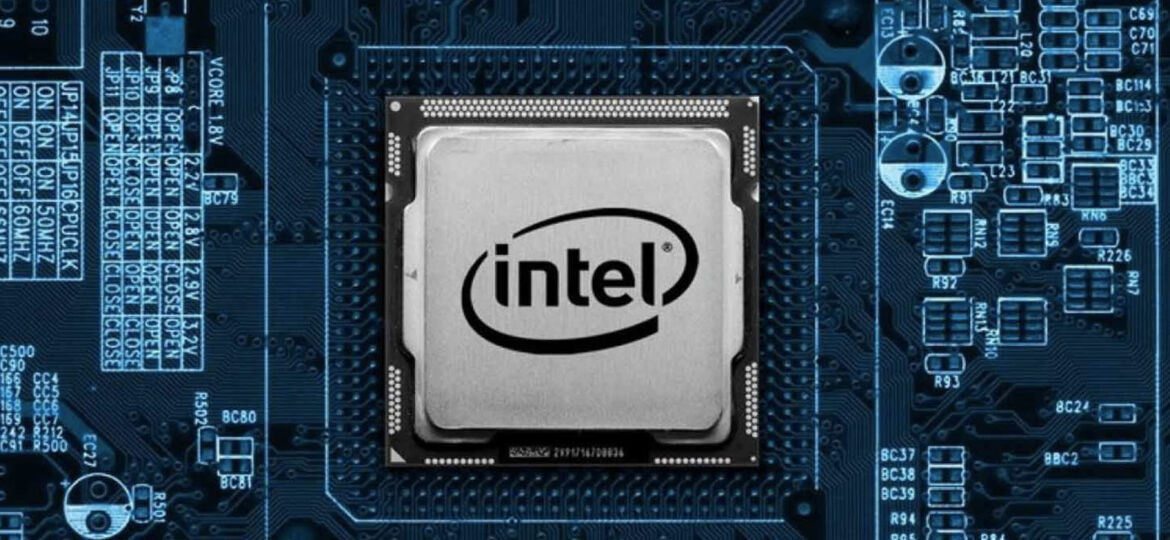

As AI systems continue to proliferate and mature, Intel, who were late to the smartphone party, the wearables party and most recently the Internet of Things party, have now set their sights on capturing and dominating the market for high performance, optimised AI chips.

Intel’s latest unveiling is a dedicated AI processor called Nervana. The company plans on testing prototypes by the middle of next year and, if all goes well, the finished chip will be available by the end of 2017. At the moment, though, the market for AI chips is dominated by Nvidia and its Tesla line of GPUs, which it used to build the world’s most energy efficient supercomputer, and this is a big reason why Intel is pushing so hard to be a big part of this potentially enormous market in the years to come.

Intel’s new chip is based on technology originally built by a start-up Intel acquired earlier this year, also called Nervana.

“This really is the industry’s first purpose built silicon for artificial intelligence,” said Jason Waxman, Intel’s Corporate Vice President.

It’s a nice statement, but it’s one that seems to conveniently ignore all of the other AI chips that are out there. Then again, none of the other AI optimised chips are commercially available, nor, in some cases, are they ever likely to be. The exceptions are Nvidia’s Tesla chips and FPGAs from Altera, which coincidentally is now owned by Intel after it completed its acquisition of the company in late 2015. Whichever way you slice and dice it, it’s evident that a new hardware arms race is brewing.

Unlike some of the older chipsets, such as Knights Mill, that Intel tried to field up until recently as serious contenders, the new Nervana chip is designed from the ground up to help companies experimenting with AI accelerate both the learning and execution phases of development. But unlike many of the custom chips that are appearing, Nervana is designed to work with not just one but many different types of deep neural networks. And that might be enough to make it interesting.

“We can boil neural networks down to a very small number of primitives, and even within those primitives, there are only a couple that matter,” says Naveen Rao, the founder and CEO of Nervana, and now VP and GM of Intel’s Artificial Intelligence Solutions division.
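Rao doesn’t spell out which primitives he means here, so treat the following as a rough sketch rather than a description of how Nervana works. It assumes, purely for illustration, that the two primitives are a matrix multiplication and an element-wise activation, and shows how a single neural network layer can be built from just those two operations. The function names are hypothetical.

```python
# Illustrative only: a dense neural network layer built from two primitives.
# The choice of primitives (matrix multiply + ReLU) is an assumption,
# not something stated by Intel or Nervana.

def matmul(a, b):
    """Multiply matrix a (n x k) by matrix b (k x m), both lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)]
            for row in a]

def relu(m):
    """Element-wise activation: negative values are clamped to zero."""
    return [[max(0.0, v) for v in row] for row in m]

def dense_layer(inputs, weights):
    """One layer = one matrix multiply followed by one activation."""
    return relu(matmul(inputs, weights))

# One input sample with two features, and a 2 x 2 weight matrix.
inputs = [[1.0, -2.0]]
weights = [[0.5, -1.0],
           [-0.25, 1.0]]

outputs = dense_layer(inputs, weights)
print(outputs)
```

Almost all of the arithmetic sits inside `matmul`, which is why hardware that speeds up that one operation can, in principle, speed up many different kinds of network.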

Today GPUs are still far and away the most effective way of training AI systems. Microsoft alone has gone on record to say that, by using GPUs, it believes it managed to cut the development time of its conversational AI by four fifths. When it comes to execution, though, while the market prefers GPUs, some companies, such as Microsoft, prefer FPGAs.

“They just happen to be what we have,” says Sam Altman, president of the tech accelerator Y Combinator and co-chairman of Elon Musk’s open source AI lab OpenAI, “and not everyone has the resources to program their own chips, much less design them from scratch.”

This is where a chip like Nervana comes in, but there are still questions about just how competitive it will be once it hits the market.

“We have zero details here,” says Patrick Moorhead, the President and principal analyst at Moor Insights and Strategy, a firm that closely follows the chip business. “We just don’t know what it will do.”

But Altman, for one, is bullish on Intel’s new technology, and not just because he invested in Nervana when it was still a start-up.

“Before that experience I was sceptical that start-ups were going to play a really big role in designing new AI chips,” he said. “Now I have become much more optimistic.”

Intel’s involvement in this space certainly gives this technology an added boost. After all, Intel chips powered the rise of the PC and datacentre markets, and Intel has the resources needed to build chips at scale and push them to market. But in today’s fast paced, dynamic markets nothing is guaranteed.