WHY THIS MATTERS IN BRIEF

We are edging closer to living in the world of Minority Report, surrounded by technologies that authorise us, sense us and interact with us remotely, and now the only thing that is fiction is privacy.

Whether it’s at your local fuel station or at the airport, a good deal of attention is being given to emotion detection systems that use machine learning and deep learning to identify our emotions – whether that’s from our facial expressions, how we sound or the way we behave.

Many of these systems are already highly accurate but they’re still all too often limited to reading what’s on the surface. Now, researchers at MIT’s Computer Science and Artificial Intelligence Lab (CSAIL) have built a system called EQ-Radio that can identify a person’s emotions using radio signals from a wireless router, irrespective of whether that person is speaking or displaying any outward signs of emotion.

It’s emotional, here’s the team

EQ-Radio is composed of three elements. First, an RF radio emits low-frequency radio waves and captures their reflections from objects in the environment. Next, an algorithm analyses the captured waves, separating heartbeat and respiration information and measuring the interval between heartbeats. Finally, the heartbeat and respiration information is fed into a machine learning classifier that maps it onto emotional states.

How it works

The heart of the system, so to speak, is the algorithm that extracts the heartbeat from the RF signal. It’s an impressive achievement that solves a difficult problem.

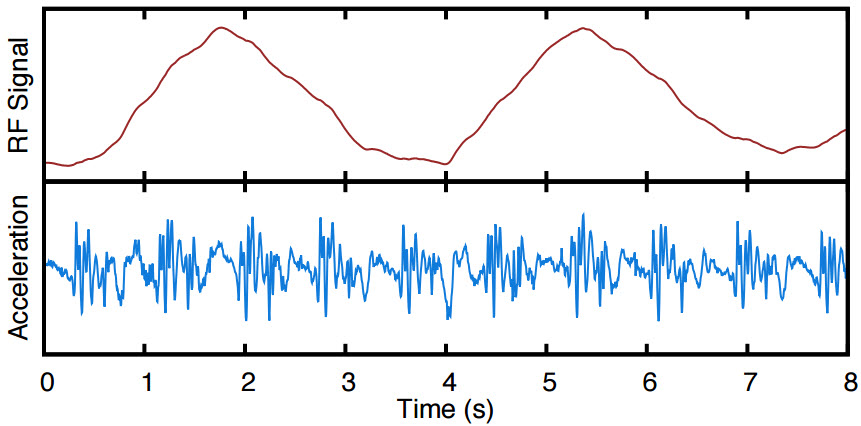

Separating out the heartbeat and breathing waveforms

The RF signal captures the movements of a person’s chest as shown in the top panel in the above figure. The large wave is caused by the displacement of the chest as the person breathes in and out. The small perturbations in the wave are caused by vibrations in the skin that occur when the heart beats.

Two problems have to be solved to extract the heartbeat from the RF signal. First, information about the heartbeat has to be separated from information about respiration. Second, the heartbeat signal has to be segmented into individual beats so that the time between each beat can be measured. This information about the interval between heartbeats is important for identifying the emotion a person is experiencing.
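To make the second step concrete, here is a minimal sketch of how the interval between heartbeats could be computed once the start time of each beat is known. The function name and the beat times are illustrative assumptions, not values from the research.

```python
import numpy as np

def inter_beat_intervals_ms(beat_times_s):
    """Return the time between consecutive beats, in milliseconds.

    beat_times_s: start time of each detected heartbeat, in seconds.
    (Hypothetical helper; the name is not taken from the EQ-Radio work.)
    """
    beat_times_s = np.asarray(beat_times_s, dtype=float)
    return np.diff(beat_times_s) * 1000.0

# Made-up beat start times, in seconds.
beats = [0.00, 0.80, 1.62, 2.40]
intervals = inter_beat_intervals_ms(beats)  # roughly 800, 820 and 780 ms
```

Small changes in these intervals from beat to beat are the kind of information the classifier would then work with.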

Separating the heartbeat and respiration signals is fairly straightforward. The movement of the chest when a person breathes has a low rate of acceleration because breathing tends to be slow and steady. The vibrations in the skin that are caused by a beating heart have a high rate of acceleration because heartbeats are caused by sharp and sudden contractions of the heart muscles. Displaying the RF signal in terms of acceleration rather than displacement of the chest produces the signal shown in the bottom panel of the figure.
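The effect can be illustrated with a small sketch on synthetic data. It assumes acceleration is approximated by the discrete second derivative of chest displacement; the sampling rate, amplitudes and pulse width are made-up values chosen only to mimic a large, slow breathing wave and tiny, sharp heartbeat pulses.

```python
import numpy as np

def acceleration(displacement, dt):
    """Approximate acceleration as the second finite difference of displacement."""
    d = np.asarray(displacement, dtype=float)
    return (d[2:] - 2.0 * d[1:-1] + d[:-2]) / dt**2

def peak_to_peak(x):
    """Difference between the largest and smallest value of a signal."""
    return float(np.max(x) - np.min(x))

# Synthetic signals (all numbers are illustrative assumptions).
fs = 100.0                                   # samples per second
t = np.arange(0.0, 10.0, 1.0 / fs)
breathing = np.sin(2 * np.pi * 0.25 * t)     # large, slow wave: 15 breaths per minute
beat_times = 0.4 + 0.8 * np.arange(12)       # one beat every 0.8 s
heartbeat = 0.02 * sum(                      # tiny, sharp pulses, 20 ms wide
    np.exp(-((t - b) ** 2) / (2 * 0.02**2)) for b in beat_times
)

# In displacement the heartbeat is dwarfed by breathing...
displacement_ratio = peak_to_peak(heartbeat) / peak_to_peak(breathing)
# ...but in acceleration the sharp pulses dominate.
acceleration_ratio = (
    peak_to_peak(acceleration(heartbeat, 1.0 / fs))
    / peak_to_peak(acceleration(breathing, 1.0 / fs))
)
```

With these numbers the heartbeat is about a hundredth of the size of the breathing wave in displacement, yet several times larger than it in acceleration, which is why the bottom panel shows the beats so clearly.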

Dividing the acceleration signal into individual heartbeats is a more difficult problem for three reasons. First, the heartbeat signal is noisy because it is affected by factors like the physical makeup of a person’s body and their position relative to the RF radio in addition to the beating of their heart. Second, the overall shape of the heartbeat signal for each individual is unknown. Third, the point in time when each heartbeat begins is also unknown. The team devised an algorithm that bootstraps the problem by switching back and forth between optimizing identification of the overall shape of the heartbeat signal and optimizing identification of the starting point of the heartbeat. And, as you can see, this algorithm does a remarkable job capturing individual heartbeats.
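The back-and-forth idea can be sketched in a few lines. This is a heavily simplified stand-in, not the team's algorithm: it assumes every beat has the same fixed length, uses plain template matching with greedy peak picking to find beat starts, and averages the matched segments to update the shape. All function names, parameters and the synthetic signal are assumptions made for illustration.

```python
import numpy as np

def find_starts(x, template, min_gap, rel_threshold=0.5):
    """Step A: given a beat shape, pick the best-matching offsets, min_gap apart."""
    scores = np.correlate(x, template, mode="valid").astype(float)
    cutoff = rel_threshold * scores.max()
    starts = []
    while True:
        i = int(np.argmax(scores))
        if scores[i] < cutoff:
            break
        starts.append(i)
        # Suppress nearby offsets so the same beat is not picked twice.
        scores[max(0, i - min_gap + 1): i + min_gap] = -np.inf
    return sorted(starts)

def alternate(x, beat_len, min_gap, n_iter=5):
    """Alternate between locating beats and re-estimating their shared shape."""
    template = x[:beat_len].copy()          # crude initial guess at the shape
    starts = []
    for _ in range(n_iter):
        starts = find_starts(x, template, min_gap)
        if not starts:
            break
        # Step B: given the starts, the shape estimate is the average segment.
        template = np.mean([x[s:s + beat_len] for s in starts], axis=0)
    return starts, template

# Synthetic signal: one made-up pulse shape repeated at irregular intervals.
rng = np.random.default_rng(0)
k = np.arange(20)
pulse = np.hanning(20) * np.sin(2 * np.pi * k / 20)
true_starts = [5, 47, 88, 131, 170]
signal = 0.01 * rng.standard_normal(200)
for s in true_starts:
    signal[s:s + 20] += pulse

starts, shape = alternate(signal, beat_len=20, min_gap=30)
intervals = np.diff(starts)                 # spacing between detected beats, in samples
```

Because the shape and the start points are estimated jointly, the detected starts may all be shifted by the same offset relative to the true ones. The intervals between beats, which are what matter for emotion recognition, are unaffected by such a shift.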

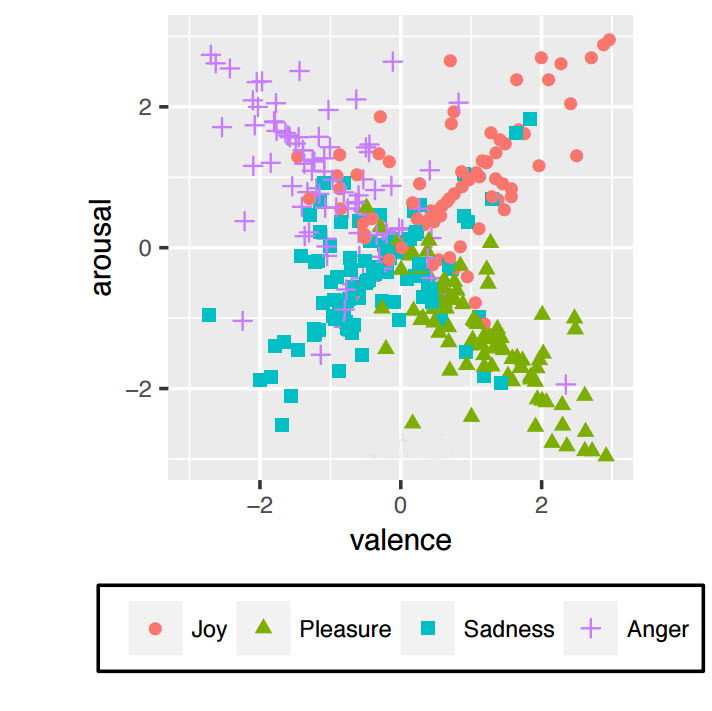

Happy or sad? Let the machine decide

A broad range of emotions can be represented in a two-dimensional space in which the arousal associated with the emotion (strong or weak) is represented on the y-axis and the valence of the emotion (positive or negative) is represented on the x-axis, as shown in the image above. The four quadrants of the space are then loosely associated with different emotional categories. “Anger” (upper-left quadrant) is characterized as a high-arousal negative emotion; “sadness” (lower-left quadrant) is low-arousal and negative; “joy” (upper-right quadrant) is high-arousal and positive; and “pleasure” (lower-right quadrant) is low-arousal and positive.

It’s important to keep in mind that names like “anger” and “joy” are used in a broad and generic sense when applied to emotional states in work like this. The methods used to identify emotions are usually capable of accurately classifying emotions along the dimensions of arousal and valence; they are typically not refined enough to accurately capture subtle differences between emotions that fall in the same quadrant like aggravated, angry, and enraged. “Angry” is best thought of as a convenient shorthand for a broad range of negative emotions that are accompanied by high levels of arousal.
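The quadrant scheme can be written down as a tiny lookup. This sketch assumes valence and arousal are signed scores centred on zero; the function name and the handling of values exactly on an axis are arbitrary choices made for illustration.

```python
def quadrant_label(valence, arousal):
    """Map a point in valence/arousal space to one of four broad emotion labels.

    valence: negative (< 0) to positive (>= 0), the x-axis.
    arousal: weak (< 0) to strong (>= 0), the y-axis.
    Treating zero as positive/strong is an arbitrary assumption.
    """
    if arousal >= 0:
        return "joy" if valence >= 0 else "anger"
    return "pleasure" if valence >= 0 else "sadness"

label = quadrant_label(valence=-0.5, arousal=0.8)  # "anger"
```

As noted above, each label stands for a whole quadrant, so aggravated, angry and enraged would all come out as “anger”.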

The relationship between heartbeat and emotion is different for different people and can differ for the same individual at different times of the day because factors other than emotion (for example, caffeine) affect heart rate. The research team dealt with this problem by comparing a person’s heartbeat when they were experiencing an emotion with their heartbeat when they were emotionally neutral. The difference between the two was fed into a machine learning classifier that identified a person’s emotion in the two-dimensional space defined by arousal and valence.
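A minimal sketch of the baseline comparison is shown below. The choice of features (mean and standard deviation of the inter-beat intervals) and the sample values are assumptions for illustration; the article does not list the features the team actually fed to the classifier.

```python
import numpy as np

def baseline_relative_features(emotion_ibi_ms, neutral_ibi_ms):
    """Express heartbeat features as a difference from the person's neutral state.

    Both inputs are inter-beat intervals in milliseconds. The two features used
    here (mean and standard deviation) are hypothetical stand-ins.
    """
    emotion = np.asarray(emotion_ibi_ms, dtype=float)
    neutral = np.asarray(neutral_ibi_ms, dtype=float)
    return np.array([
        emotion.mean() - neutral.mean(),   # faster or slower than usual?
        emotion.std() - neutral.std(),     # more or less variable than usual?
    ])

# Made-up recordings for one person.
neutral = [800.0, 820.0, 780.0]
excited = [700.0, 720.0, 680.0]
features = baseline_relative_features(excited, neutral)
```

Here the first feature comes out at about -100 ms, meaning the heart is beating faster than this person's own baseline, regardless of whether their resting rate is naturally high or low.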

Putting it to the test

The ability of EQ-Radio to accurately capture the heartbeat signal was tested by comparing its performance against ECG (Electrocardiogram) data gathered through sensors connected to a participant’s body. EQ-Radio’s average error was 3.2 milliseconds (ms) and 97% of the errors were 8 ms or less. Accuracy in capturing the heartbeat signal is important because an error of 30 ms reduces accuracy in emotion identification to a bit more than 50%.

The 3.2 ms average error was recorded when the participant sat facing the RF radio at a distance of three to four feet. It would be difficult to achieve this ideal positioning when identifying emotions outside of the lab. To determine whether the system can capture heartbeat accurately when conditions are not ideal, the team tested it at distances out to 10 feet and with the participant sitting side-on or with their back to the RF radio. In all cases the median error was 8 ms or less, indicating that heartbeat capture using EQ-Radio is sufficiently robust for use outside the lab.
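For readers who want to see how figures like these are produced, here is a small sketch. It assumes the error is the absolute difference between matched inter-beat intervals from the radio and from the ECG; the function name and the sample values are invented for illustration and are not the study's data.

```python
import numpy as np

def ibi_error_summary(rf_ibi_ms, ecg_ibi_ms, threshold_ms=8.0):
    """Summarise how closely radio-derived intervals track the ECG reference."""
    errors = np.abs(
        np.asarray(rf_ibi_ms, dtype=float) - np.asarray(ecg_ibi_ms, dtype=float)
    )
    return {
        "mean_ms": float(errors.mean()),
        "median_ms": float(np.median(errors)),
        "fraction_within": float(np.mean(errors <= threshold_ms)),
    }

# Made-up matched intervals, in milliseconds.
ecg = [800, 810, 790, 805]
rf = [803, 808, 791, 815]
summary = ibi_error_summary(rf, ecg)
```

For these four made-up beats the errors are 3, 2, 1 and 10 ms, giving a mean of 4 ms, a median of 2.5 ms and three quarters of the errors at 8 ms or less.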

The ability of EQ-Radio to accurately identify emotions was evaluated by comparing its performance against ECG-based emotion identification and Microsoft’s Project Oxford API, which identifies emotions from pictures of people’s faces. The participants used things like pictures, music and their personal memories to put themselves in a particular emotional state. They were then asked to identify the particular time period during the experiment when they actually experienced the emotion. The RF, ECG and visual data from that time period were used to compare the three emotion identification systems.

EQ-Radio accurately identified emotions 72.3% to 87% of the time across different experimental conditions. ECG monitoring was consistently better but not by much. EQ-Radio’s accuracy was, at most, 1.2 percentage points behind the level of accuracy achieved with ECG.

In contrast, identification of emotions from facial expressions with Microsoft’s Project Oxford was much worse. Identification based on facial expression was very good when the participant expressed a neutral emotion but accuracy at identifying positive emotion was only 47% and accuracy at identifying negative emotions was 11% or less. Given the poor performance of Project Oxford, it would be interesting to see how EQ-Radio compares with a sophisticated emotion-recognition system like the one developed by Affectiva that uses deep learning networks to identify emotions from facial expressions captured by webcams.

The EQ-Radio is remarkably effective at identifying emotions without the need to disturb people by attaching ECG sensors to their body. However, its very success raises questions about the circumstances in which it should be used. There are times when people take care to keep their faces and voices neutral because they wish to keep their emotions private. The EQ-Radio takes that decision out of their hands and raises the question of whether a right to privacy extends to shielding your emotions from others when you don’t want those emotions to be revealed.

The fact that the existence of the technology forces consideration of the circumstances in which it ought to be used doesn’t argue against recognizing the potential benefits that can be derived from EQ-Radio. Companies can use EQ-Radio to develop products that are more finely tuned to the needs and desires of their customers when emotional response to their products is gauged with the customer’s consent. Valuable medical information about a patient’s heartbeat can be gained in a nonintrusive and noninvasive manner. But while EQ-Radio can be a valuable tool in some areas, where privacy is concerned it is simply another in a very long line of technologies that will increasingly strip away our many layers of privacy.

Why aren’t governments protecting us from this invasion? Maybe because they are in on the deal.

You … are the Product.

Imagine being able to correlate your mental state with different parts of different adverts. Imagine being able to profile people based on the movies they watch and their reactions. It could tell advertisers what’s working and what isn’t. All of this is already possible. Webcam + facial feature detection algorithm + valence correlation algorithm. Saw some excellent demonstrations of the facial feature and valence determination technology from Cambridge University back in 2013 when I was looking for ways to improve human-machine interactions as opposed to advertising. I’m surprised that TV manufacturers haven’t exploited this as the advertising-related revenue could be vast. Personally I don’t have too much of a problem with it provided it is anonymised at the source (the TV). But I would like the option to enable it or disable it. I’d also like to be rewarded for what is my data. Personal opinion obviously.

Industries are implementing AI, biometrics & neuroscience, and 3D modelling that are used to quantify the emotions in digital media in the form of images, video, audio, and text. Research states Emotion Analytics Market Revenue to be Worth $1,711.0 million by 2022 https://buff.ly/2AW5wuz

The privacy debate will continue but if this technology was in a doctor’s surgery then it could improve the value of the consultation by quickly establishing the emotional status of the patient…augmenting the consultation! Fascinating stuff, another thought-provoking article from Matt

Hi Matthew, really looking forward to it. It’s coming to a point where the consumers get paid for their data or can opt out of it. One of the dimensions in this will be the tailored personal digital / AI assistants, which could form the primary interface between the consumer and cyberspace, including all of the advertising. It’s no wonder it’s one of the three big bets from Google. That and visualisation and ML / big data analytics.