WHY THIS MATTERS IN BRIEF

Science fact can be weirder than science fiction: researchers can now take the reflections in a person’s eyes and, using Neural Radiance Fields (NeRF), turn them into 3D models of the scene that person is looking at. Insane tech!

Love the Exponential Future? Join our XPotential Community, future proof yourself with courses from XPotential University, read about exponential tech and trends, connect, watch a keynote, or browse my blog.

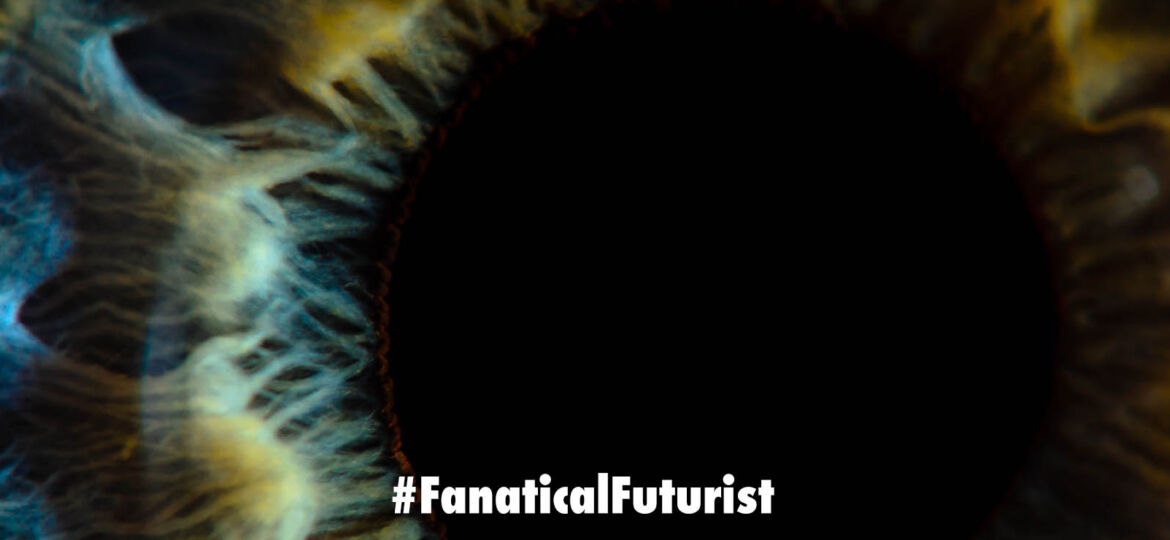

Imagine being able to reconstruct a 3D scene from the reflections in a person’s eyes. No, this isn’t a plot device from a science fiction movie. It’s the subject of a study by Hadi Alzayer and his team titled “Seeing the World through Your Eyes”. The research explores an underexplored property of the human eye, its reflectivity, and uses it to build a 3D reconstruction of the observer’s environment, something that once seemed wildly improbable.

The human eye, apart from its primary function of vision, also holds a wealth of information about the world around us. If we look at someone else’s eyes, the light reflected from the cornea provides a snapshot of what they are seeing. This is the fundamental idea behind Alzayer’s work. By imaging a person’s eyes across several frames as their head moves, one can collect multiple views of a scene outside the camera’s direct line of sight through the reflections in the eyes [1].

Insane Tech

However, the task is not without its challenges. Accurately estimating eye poses is difficult, and the appearance of the iris is intertwined with the scene reflections, so the two have to be separated. Alzayer and his team address these challenges by jointly refining the cornea poses, the radiance field that represents the scene, and the observer’s iris texture. They also propose a simple regularization prior on the iris texture pattern to improve reconstruction quality [1].

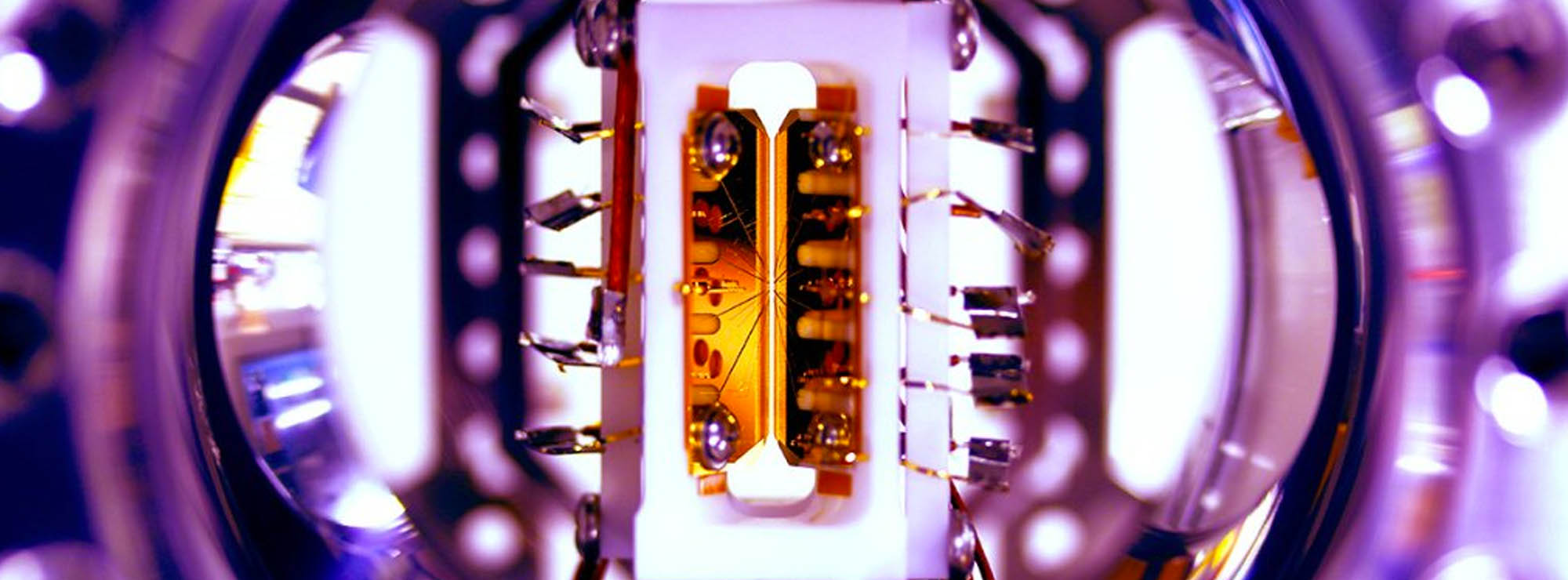

The team repurposes Neural Radiance Fields (NeRF) for training on eye images, adding two crucial components. The first is texture decomposition, which uses a simple radial prior to help separate the iris texture from the overall radiance field. The second is eye pose refinement, which improves the accuracy of pose estimation despite the small size of the eyes in the image [1].
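To make the idea of a radial prior more concrete, here is a minimal sketch of one plausible form of such a regularizer. It is an illustration under stated assumptions, not the loss from the paper: it assumes the iris texture has been resampled onto a polar grid centred on the pupil, and it penalizes how much each ring of constant radius deviates from its own mean. The function name `radial_prior_loss` and the array layout are hypothetical.

```python
import numpy as np

def radial_prior_loss(polar_texture):
    """Penalize angular variation in an iris texture (illustrative only).

    polar_texture: array of shape (n_radii, n_angles, channels), i.e. the
    texture resampled on a polar grid around the pupil centre. This layout
    is an assumption made for the sketch.
    """
    # Mean colour of each ring of constant radius.
    ring_mean = polar_texture.mean(axis=1, keepdims=True)
    # Mean squared deviation of every sample from its ring's mean.
    return float(np.mean((polar_texture - ring_mean) ** 2))

# A texture that only changes with radius is left almost unpenalized...
symmetric = np.tile(np.linspace(0.0, 1.0, 4)[:, None, None], (1, 8, 3))
loss_symmetric = radial_prior_loss(symmetric)

# ...while a texture that has absorbed a scene-like detail is penalized.
perturbed = symmetric.copy()
perturbed[2, 5, :] += 0.5
loss_perturbed = radial_prior_loss(perturbed)
```

In a training loop, a term like this would be added to the usual photometric loss with a small weight, so that detail which does not look like iris is pushed into the radiance field rather than into the texture.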

This research builds on the concept of catadioptric imaging, which combines lenses and mirrors to capture images. Here the human eye serves as an external curved mirror placed in front of an ordinary camera, a creative realization of an accidental catadioptric imaging system [1].
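The curved-mirror view can be sketched in a few lines of geometry. The example below assumes, purely for illustration, that the cornea is a perfect sphere (a simplification, and not necessarily the model used in the paper). It traces a camera ray to the sphere and reflects it about the surface normal; the reflected ray is the direction in which that pixel "sees" the scene. The function name `reflect_off_sphere` and its parameters are hypothetical.

```python
import numpy as np

def reflect_off_sphere(origin, direction, center, radius):
    """Reflect a camera ray off a spherical mirror (illustrative cornea model).

    Returns (hit_point, reflected_direction), or None if the ray misses
    the sphere or the sphere lies behind the ray origin.
    """
    d = direction / np.linalg.norm(direction)
    oc = origin - center
    # Solve |origin + t*d - center|^2 = radius^2 for the nearest t > 0.
    b = np.dot(oc, d)
    c = np.dot(oc, oc) - radius ** 2
    disc = b * b - c
    if disc < 0:
        return None  # ray misses the sphere
    t = -b - np.sqrt(disc)
    if t <= 0:
        return None  # intersection is behind the camera
    hit = origin + t * d
    normal = (hit - center) / radius  # outward unit normal at the hit point
    # Mirror reflection: r = d - 2 (d . n) n
    reflected = d - 2.0 * np.dot(d, normal) * normal
    return hit, reflected

# Camera at the origin looking down +z at a unit sphere centred 5 units away.
camera = np.zeros(3)
eye_center = np.array([0.0, 0.0, 5.0])
result = reflect_off_sphere(camera, np.array([0.0, 0.0, 1.0]), eye_center, 1.0)
miss = reflect_off_sphere(camera, np.array([0.0, 1.0, 0.0]), eye_center, 1.0)
```

A ray that hits the sphere head-on bounces straight back toward the camera, while rays that hit off-centre fan out widely. That spread is why a small eye region can cover a large part of the surrounding scene, and also why small errors in the estimated eye pose translate into large errors in the reflected rays.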

The study extends 3D scene reconstruction through neural rendering so that it can handle the partially corrupted image observations obtained from eye reflections. This opens up new possibilities for research and development in the broader area of accidental imaging: revealing and capturing 3D scenes beyond the visible line of sight [1].

As the research suggests, capturing both eyeballs could indeed result in even higher volumetric fidelity, further pushing the boundaries of what we thought was possible.