WHY THIS MATTERS IN BRIEF

The use of machine vision, in which computers “see” malware and cyber threats, is revolutionary and possibly game-changing.

Love the Exponential Future? Join our XPotential Community, future proof yourself with courses from XPotential University, read about exponential tech and trends, connect, watch a keynote, or browse my blog.

Can Artificial Intelligence (AI) actually see a cyber attack? As in literally see it? And then stop it? Thanks to a new machine vision technique, the answer, it seems, is a resounding yes, and the breakthrough could revolutionise cyber defense.

The last decade’s growing interest in deep learning was triggered by the proven capacity of neural networks in computer vision tasks. For example, if you train a neural network with enough labelled photos of cats and dogs then it will be able to find recurring patterns in each category and classify previously unseen images and examples with a good level of accuracy.

But, what else can you do with an image classifier?

Well, in 2019 a group of cybersecurity researchers wondered if they could treat security threat detection as an image classification problem – a truly novel idea.

The Future of Cyber In-Security, by Futurist Keynote Matthew Griffin

Their intuition proved to be right, and they were able to create a machine learning (ML) model that could detect malware based on images created from the content of application files. A year later, the same technique was used to develop a machine learning system that detects phishing websites.

The combination of binary-level visualization and machine learning, it turns out, is a very powerful technique that could provide new solutions to old problems in cyber security.

The traditional way to detect malware is to search files for known signatures of malicious payloads. Malware detectors maintain a database of virus definitions which include opcode sequences or code snippets, and they search new files for the presence of these signatures.

Unfortunately, malware developers can easily circumvent these detection methods by obfuscating their code or by using polymorphism to mutate it at runtime. Dynamic analysis tools, meanwhile, try to detect malicious behaviour during runtime, but they’re slow and require a sandbox environment to test suspicious programs, which is impractical at speed and scale.
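To make the signature approach and its weakness concrete, here is a minimal sketch in Python. The signature names and byte patterns are invented for illustration and do not come from any real antivirus database; the single-byte XOR stands in for the far more sophisticated obfuscation real malware uses.

```python
# Hypothetical signature database: name -> byte pattern (illustrative values only).
SIGNATURES = {
    "demo-dropper": bytes.fromhex("deadbeef"),
    "demo-macro": b"AutoOpen_Evil",
}


def scan(data: bytes, signatures: dict = SIGNATURES) -> list:
    """Return the names of all signatures whose byte pattern occurs in data."""
    return [name for name, pattern in signatures.items() if pattern in data]


def xor_obfuscate(data: bytes, key: int = 0x5A) -> bytes:
    """Toy obfuscation: XOR every byte with a one-byte key."""
    return bytes(b ^ key for b in data)


infected = b"\x00\x01" + bytes.fromhex("deadbeef") + b"\x02"

print(scan(b"hello world"))            # no known pattern present
print(scan(infected))                  # the known pattern is found
print(scan(xor_obfuscate(infected)))   # same payload, re-encoded, slips through
```

The scanner only ever matches bytes it has seen before, which is why re-encoding the same payload is enough to evade it.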

In recent years researchers have also tried a range of different machine learning techniques to detect malware. These ML models have managed to make progress on some of the challenges of malware detection, including code obfuscation. But they present new challenges, including the need to learn too many features and the need for a virtual environment in which to analyze the target samples.

“Binary visualization,” as the new technique is known, can redefine malware detection by turning it into a computer vision problem.

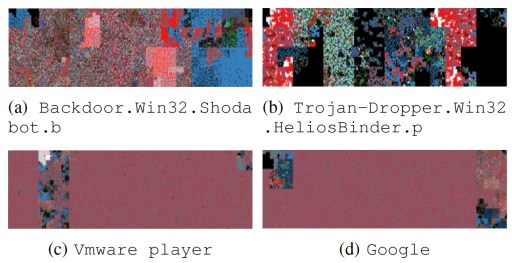

In this methodology, files are run through algorithms that transform binary and ASCII values into color codes, essentially turning each file into a picture.
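A rough sketch of the idea in Python follows. The color assigned to each class of byte and the simple row-by-row layout are assumptions chosen for illustration; the article does not specify the exact mapping or pixel arrangement the researchers used.

```python
def byte_to_rgb(b: int) -> tuple:
    """Map one byte to an RGB color by byte class (assumed scheme, for illustration)."""
    if b == 0x00:
        return (0, 0, 0)          # null byte -> black
    if b == 0xFF:
        return (255, 255, 255)    # 0xFF -> white
    if 0x20 <= b <= 0x7E:
        return (0, 0, 255)        # printable ASCII -> blue
    if b < 0x20 or b == 0x7F:
        return (0, 255, 0)        # ASCII control characters -> green
    return (255, 0, 0)            # extended values 0x80-0xFE -> red


def bytes_to_image(data: bytes, width: int = 16) -> list:
    """Lay the colored bytes out row by row, padding the last row with black."""
    pixels = [byte_to_rgb(b) for b in data]
    remainder = len(pixels) % width
    if remainder:
        pixels.extend([(0, 0, 0)] * (width - remainder))
    return [pixels[i:i + width] for i in range(0, len(pixels), width)]


# Five bytes at width 4 become a 2-row image; the second row is mostly padding.
image = bytes_to_image(b"MZ\x00\xff\x90", width=4)
for row in image:
    print(row)
```

A file dominated by one class of byte produces large blocks of one color, while a file that mixes many classes produces a busier picture, which is the kind of visual difference a classifier can pick up on.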

In a paper published in 2019, researchers at the University of Plymouth and the University of Peloponnese showed that when benign and malicious files were visualized using this technique, new patterns emerged that separated malicious files from safe ones. These differences would have gone unnoticed using classic malware detection methods.

According to the paper, “Malicious files have a tendency for often including ASCII characters of various categories, presenting a colorful image, while benign files have a cleaner picture and distribution of values.”

When you have such detectable patterns, you can train an artificial neural network to tell the difference between malicious and safe files. The researchers created a dataset of visualized binary files that included both benign and malign files. The dataset contained a variety of malicious payloads (viruses, worms, trojans, rootkits, etc.) and file types (.exe, .doc, .pdf, .txt, etc.).

The researchers then used the images to train a classifier neural network. The architecture they used is the Self-Organizing Incremental Neural Network (SOINN), which is fast and is especially good at dealing with noisy data. They also used an image pre-processing technique to shrink the binary images into 1,024-dimension feature vectors, which makes it much easier and compute-efficient to learn patterns in the input data.
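The article does not say how the images were shrunk, so the following is only one plausible way to get a 1,024-dimension vector: average-pool a grayscale image down to a 32 × 32 grid and flatten it. The function name, the pooling method, and the 32 × 32 shape are all assumptions made for this sketch.

```python
def to_feature_vector(gray: list, side: int = 32) -> list:
    """Average-pool a 2-D grayscale image (values 0-255) into side*side values in [0, 1]."""
    height, width = len(gray), len(gray[0])
    if height < side or width < side:
        raise ValueError("image must be at least side x side pixels")
    features = []
    for r in range(side):
        # Row range of the source image covered by output row r.
        r0, r1 = r * height // side, (r + 1) * height // side
        for c in range(side):
            # Column range covered by output column c.
            c0, c1 = c * width // side, (c + 1) * width // side
            block = [gray[y][x] for y in range(r0, r1) for x in range(c0, c1)]
            features.append(sum(block) / len(block) / 255.0)
    return features


# A 64x64 all-white image pools down to 1,024 features.
white = [[255] * 64 for _ in range(64)]
vector = to_feature_vector(white)
print(len(vector))
```

Whatever the exact method, the point is the same: a fixed-length, low-dimensional input is much cheaper to learn from than the raw pixels.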

The resulting neural network was efficient enough to process a training dataset of 4,000 samples in 15 seconds on a personal workstation with an Intel Core i5 processor.

Experiments by the researchers showed that the deep learning model was especially good at detecting malware in .doc and .pdf files, which are the preferred medium for ransomware attacks. The researchers suggested that the model’s performance can be improved if it is adjusted to take the filetype as one of its learning dimensions. Overall, the algorithm achieved an average detection rate of around 74 percent.

The same visual approach was also excellent at detecting phishing attacks, which are a growing problem for organizations and individuals alike. Many phishing attacks trick victims into clicking on a link to a malicious website that poses as a legitimate service, where they end up entering sensitive information such as credentials or financial details.

Traditional approaches for detecting phishing websites revolve around blacklisting malicious domains or whitelisting safe domains. The former method misses new phishing websites until someone falls victim, and the latter is too restrictive and requires extensive efforts to provide access to all safe domains.

Other detection methods rely on heuristics. These methods are more accurate than blacklists, but they still fall short of providing optimal detection.

Then, in 2020, the same group of researchers combined binary visualization and deep learning into a new way to detect phishing websites, using binary visualization libraries to transform website markup and source code into color values.

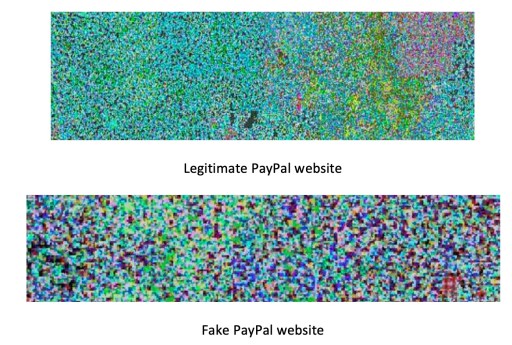

As is the case with benign and malign application files, when visualizing websites, unique patterns emerge that separate safe and malicious websites.

The researchers wrote, “The legitimate site has a more detailed RGB value because it would be constructed from additional characters sourced from licenses, hyperlinks, and detailed data entry forms. Whereas the phishing counterpart would generally contain a single or no CSS reference, multiple images rather than forms and a single login form with no security scripts. This would create a smaller data input string when scraped.”

The researchers’ example compares the visual representation of the code of the legitimate PayPal login page with that of a fake phishing PayPal website.

The researchers then created a dataset of images representing the code of legitimate and malicious websites and used it to train a new classification machine learning model. The architecture they used is MobileNet, a lightweight Convolutional Neural Network (CNN) that is optimized to run on user devices instead of high capacity cloud servers. CNNs are especially suited for machine vision tasks including image classification and object detection.

Once the model is trained, it is plugged into a phishing detection tool. When the user stumbles on a new website, the tool first checks whether the URL is included in its database of malicious domains. If the domain is not in the database, the site’s code is transformed through the visualization algorithm and run through the neural network to check whether it has the patterns of malicious websites.

This two-step architecture lets the system combine the speed of blacklist databases with the smart detection of the neural network-based technique.
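The control flow of such a two-step check might look like the sketch below. Everything here is hypothetical: `make_detector`, the example domains, and especially `toy_classifier`, which is a crude stand-in for the trained neural network and not the researchers’ model.

```python
from typing import Callable
from urllib.parse import urlparse


def make_detector(blacklist: set,
                  classify_page: Callable[[str], float],
                  threshold: float = 0.5) -> Callable[[str, str], bool]:
    """Build a checker that tries the fast blacklist first, then the classifier."""
    def is_phishing(url: str, page_source: str) -> bool:
        domain = (urlparse(url).hostname or "").lower()
        if domain in blacklist:
            return True  # step 1: known malicious domain, no model needed
        # Step 2: unknown domain, so score the page content with the model.
        return classify_page(page_source) >= threshold
    return is_phishing


def toy_classifier(page_source: str) -> float:
    """Placeholder scorer: flags a bare login form with no stylesheet reference."""
    has_form = "<form" in page_source
    has_css = "stylesheet" in page_source
    return 0.9 if has_form and not has_css else 0.1


detector = make_detector({"evil.example"}, toy_classifier)
print(detector("https://evil.example/login", ""))
print(detector("https://new.example/", "<form></form>"))
print(detector("https://new.example/", '<link rel="stylesheet"><form></form>'))
```

In a real tool the second step would render the page source as an image and pass it to the trained network; the cheap lookup simply spares the model from re-examining domains that are already known to be bad.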

The researchers’ experiments showed that the technique could detect phishing websites with 94 percent accuracy.

“Using visual representation techniques allows to obtain an insight into the structural differences between legitimate and phishing web pages. From our initial experimental results, the method seems promising and being able to fast detection of phishing attacker with high accuracy. Moreover, the method learns from the misclassifications and improves its efficiency,” the researchers wrote.

According to Shiaeles, who co-wrote both the 2019 and 2020 papers, the researchers are now in the process of preparing the technique for adoption in real-world applications. Shiaeles is also exploring the use of binary visualization and machine learning to detect malware traffic in highly vulnerable IoT networks.

As machine learning continues to make progress, it will provide scientists with new tools to address cybersecurity challenges. We need innovative solutions like this more than ever, so I for one look forward to seeing where the researchers go next with their idea.