WHY THIS MATTERS IN BRIEF

In the future we won’t be able to believe everything we see or read, irrespective of what the content is, so we need new ways to separate truth from fiction.

Interested in the Exponential Future? Connect, download a free E-Book, watch a keynote, or browse my blog.

In February OpenAI, the non-profit AI research center funded by Elon Musk and by $1 billion from Microsoft, rather dramatically withheld the release of its newest language model, a fake text generator called GPT-2. The model was so good it was dubbed “the world’s most dangerous AI”, and the company feared it could be used to automate the mass production of fake news.

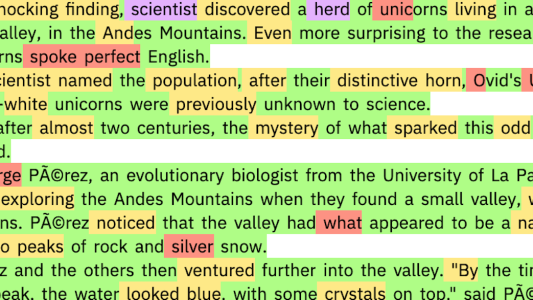

The decision also accelerated the AI community’s ongoing discussion about how to detect this kind of fake news. In a new experiment, researchers at MIT and Harvard University considered whether the same language models that can write such convincing text, like the text you can see below, could also be used to help spot other fake news and AI-generated text.

The idea behind the hypothesis is simple – language models produce sentences by predicting the next word in a sequence of text. So if they can easily predict most of the words in a given passage, it’s likely it was written by one of their own.
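To make that intuition concrete, here is a minimal Python sketch. It swaps in a toy bigram counter for a real language model such as GPT-2, and the names `train_bigram` and `prediction_ranks` are hypothetical, chosen purely for illustration. For each word in a passage it reports where that word sits in the model's ranked list of guesses; a low rank means the model found the word easy to predict.

```python
from collections import Counter, defaultdict


def train_bigram(tokens):
    """Toy stand-in for a language model: count which word follows which."""
    follows = defaultdict(Counter)
    for prev, nxt in zip(tokens, tokens[1:]):
        follows[prev][nxt] += 1
    return follows


def prediction_ranks(model, tokens):
    """Return the 1-based rank of each actual next word among the model's
    guesses for that position, or None if the model never predicted it."""
    ranks = []
    for prev, actual in zip(tokens, tokens[1:]):
        guesses = [word for word, _ in model.get(prev, Counter()).most_common()]
        ranks.append(guesses.index(actual) + 1 if actual in guesses else None)
    return ranks


model = train_bigram("the cat sat on the mat the cat ran".split())

# A passage the model has effectively seen before: every word ranks near the top.
print(prediction_ranks(model, "the cat sat on the mat".split()))  # [1, 1, 1, 1, 2]

# A passage with an unfamiliar word: the model has no guess for it.
print(prediction_ranks(model, "the dog sat".split()))  # [None, None]
```

A real detector would take these ranks from a neural model's probability distribution over its whole vocabulary rather than from simple word-pair counts, but the principle of checking how surprised the model is by each word is the same.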

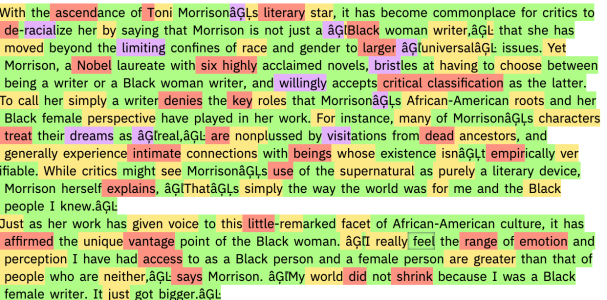

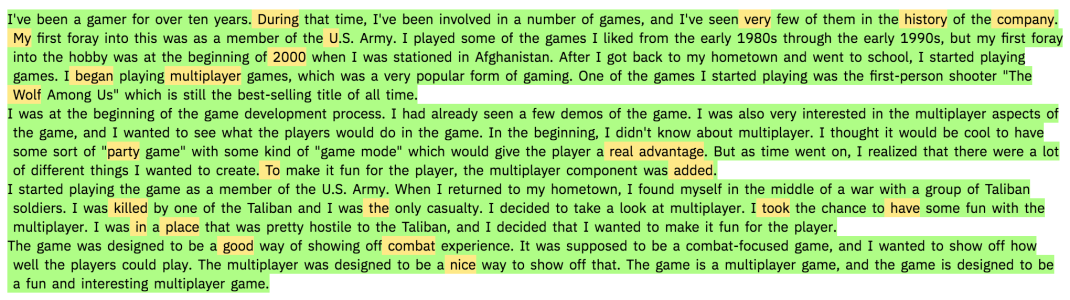

The researchers tested their idea by building an interactive tool based on the publicly accessible downgraded version of OpenAI’s GPT-2. When you feed the tool a passage of text, it highlights the words in green, yellow, or red to indicate decreasing ease of predictability; it highlights them in purple if it wouldn’t have predicted them at all. In theory, the higher the fraction of red and purple words, the higher the chance the passage was written by a human; the greater the share of green and yellow words, the more likely it was written by an AI.
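The colour coding described above can be sketched in a few lines of Python. This is only an illustration: the article does not give the tool's cut-offs, so the thresholds below (top 10, top 100, top 1,000) are assumptions, as are the function names `color_for_rank` and `unpredictable_share`. The input is a list of ranks, where each rank says how far down the model's list of guesses the actual word appeared, and `None` means the model would not have predicted it at all.

```python
from collections import Counter

# Assumed cut-offs, not taken from the article.
GREEN_MAX, YELLOW_MAX, RED_MAX = 10, 100, 1000


def color_for_rank(rank):
    """Map a word's prediction rank to a highlight colour."""
    if rank is None or rank > RED_MAX:
        return "purple"  # the model would not have predicted this word
    if rank <= GREEN_MAX:
        return "green"
    if rank <= YELLOW_MAX:
        return "yellow"
    return "red"


def unpredictable_share(ranks):
    """Fraction of words highlighted red or purple (0.0 for an empty passage)."""
    colors = Counter(color_for_rank(rank) for rank in ranks)
    total = sum(colors.values())
    return (colors["red"] + colors["purple"]) / total if total else 0.0


ranks = [1, 5, 50, 500, 5000]
print([color_for_rank(r) for r in ranks])  # ['green', 'green', 'yellow', 'red', 'purple']
print(unpredictable_share(ranks))  # 0.4
```

Under this scheme a higher share suggests a human author and a lower share suggests machine-generated text, though where to draw the line between the two is a judgment call the sketch does not make.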

Indeed, the researchers found that passages written by the downgraded and full versions of GPT-2 came out almost completely green and yellow, while scientific abstracts written by humans and text from reading comprehension passages in US standardised tests had lots of red and purple.

But not so fast. Janelle Shane, a researcher who runs the popular blog Letting Neural Networks Be Weird and who wasn’t involved in the initial research, put the tool to a more rigorous test. Rather than just feeding it text generated by GPT-2, she fed it passages written by other language models as well, including one trained on Amazon reviews and another trained on Dungeons and Dragons biographies. She found that the tool failed to predict a large chunk of the words in each of these passages, and thus it assumed they were human-written.

As a consequence, her work helped identify an important problem that will have to be overcome if AIs are truly going to be useful in helping us detect fake content – a language model might be good at detecting its own output, but not necessarily the output of others.
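A toy Python experiment shows why this mismatch arises. Everything here is invented for illustration: the two tiny training texts, the bigram "models", and the function names are assumptions, and none of it reproduces Shane's actual test. Each model generates a short passage, and each is then used as a detector on the other's passage.

```python
from collections import Counter, defaultdict


def train(text):
    """Toy language model: count which word follows which."""
    follows = defaultdict(Counter)
    words = text.split()
    for prev, nxt in zip(words, words[1:]):
        follows[prev][nxt] += 1
    return follows


def generate(model, start, length):
    """Greedy generation: always emit the model's most likely next word."""
    words = [start]
    for _ in range(length - 1):
        options = model.get(words[-1])
        if not options:
            break
        words.append(options.most_common(1)[0][0])
    return words


def predicted_share(detector, words):
    """Fraction of words the detector could have predicted at all."""
    pairs = list(zip(words, words[1:]))
    if not pairs:
        return 0.0
    hits = sum(1 for prev, nxt in pairs if nxt in detector.get(prev, {}))
    return hits / len(pairs)


# Invented miniature corpora standing in for two differently trained models.
reviews_model = train("this product is great and this product is cheap and it works")
bios_model = train("the brave elf is a ranger and the brave dwarf is a cleric")

bio_text = generate(bios_model, "the", 6)

print(predicted_share(bios_model, bio_text))     # 1.0, looks machine-written
print(predicted_share(reviews_model, bio_text))  # 0.0, mistaken for human-written
```

The biography model recognises every word of its own output, while the review model recognises none of it, so a detector built on the review model alone would wrongly conclude that a human wrote the passage.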