WHY THIS MATTERS IN BRIEF

Increasingly, people don’t believe the news they’re seeing is real. This new technology aims to help news organisations and outlets regain that trust.

Falsifying photos and videos used to take a lot of work – either you used CGI to generate photorealistic images from scratch, which was both challenging and expensive, or you needed some mastery of Adobe Photoshop, and a lot of time, to convincingly modify existing pictures.

Now, though, the advent of Artificial Intelligence (AI) generated imagery, which people can increasingly produce just by describing the images and videos they want in text, has made it easier for anyone to create and tweak images and videos with convincingly realistic results. As a result, an increasing number of companies and governments are trying to find ways to discover and weed out the fakes, using a mix of technologies and techniques that include identifying the subtle skin flushes of a living person, AIs that cross-check content and sources, and much more besides. All that said, these organisations firmly recognise that they are in catch-up mode and that the problem is snowballing.

Earlier this year, for example, MIT Technology Review senior editor Will Knight used off-the-shelf software to forge his own fake video of US senator Ted Cruz. The video, as you can see below, is a little glitchy, but as the technology behind the software improves it won’t be for long.

That same technology is also responsible for creating a growing class of footage and photos called deepfakes, which I’ve spoken about many times before, and which have the potential to undermine truth, confuse viewers, and sow discord at a much larger scale than we’ve already seen with text-only fake news.

These are the possibilities that disturb Hany Farid, a computer science professor at Dartmouth College in the US who has been debunking fake imagery in one form or another for 20 years. He now thinks that a new way to authenticate content at the point of origin, from images to video, might form one part of the solution to weeding the fakes out.

“I don’t think we’re ready yet [for what’s coming],” he warns. But he’s hopeful that growing awareness of the issue and new technological developments could better prepare people to discern true images from manipulated creations.

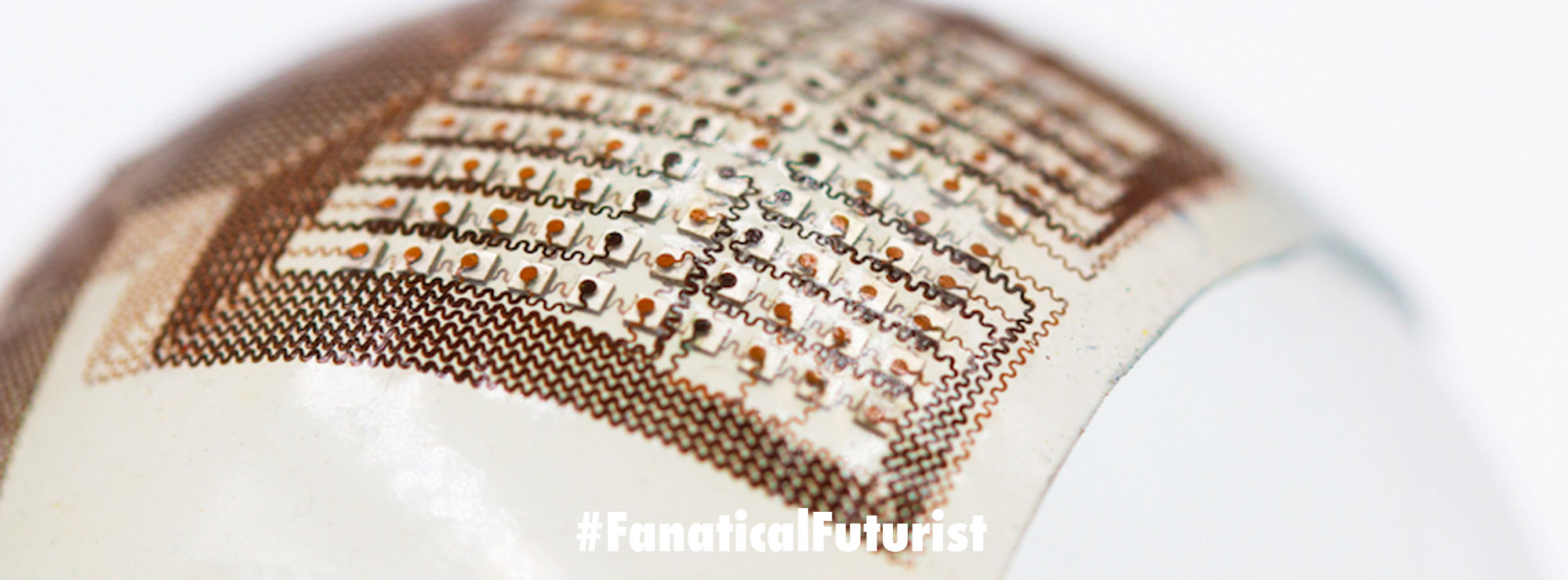

There are two main ways to deal with the challenge of verifying images, explains Farid. The first is to look for modifications in an image. Image forensics experts use computational techniques to pick out whether any pixels or metadata seem altered. They can look for shadows or reflections that don’t follow the laws of physics, for example, or check how many times an image file has been compressed to determine whether it has been saved multiple times.

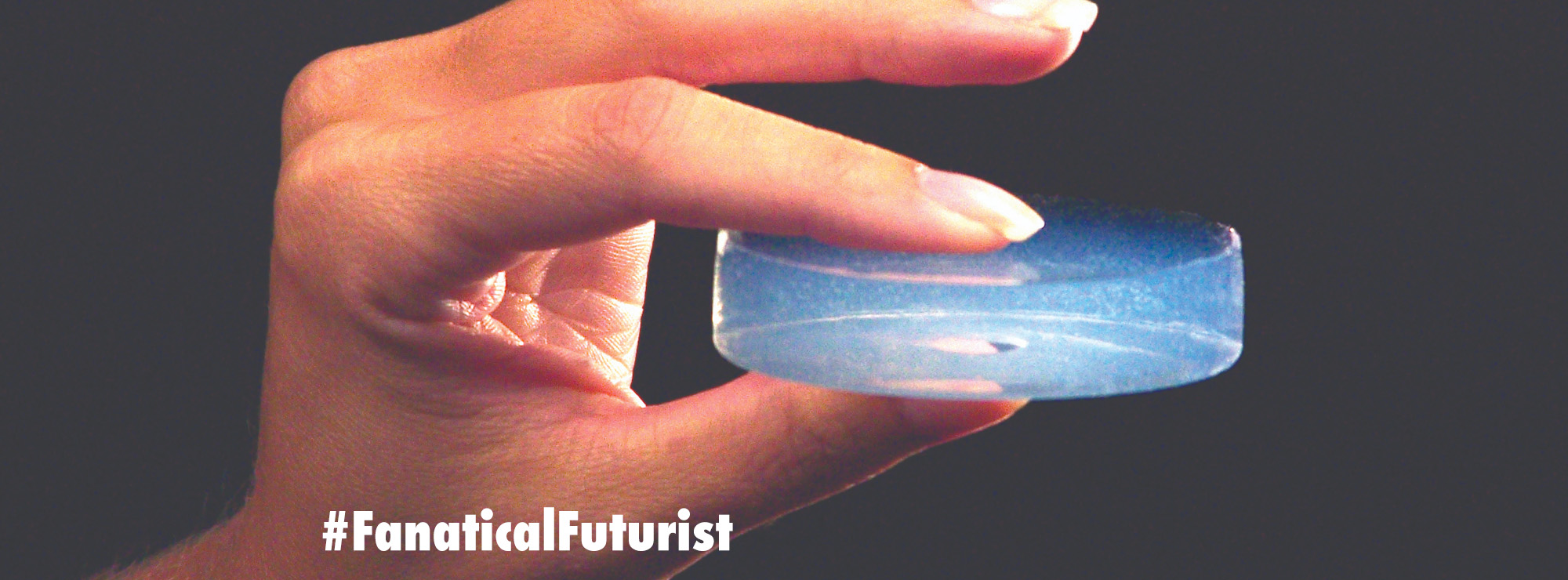

The second and newer method is to verify an image’s integrity the moment it is taken. This involves performing dozens of checks to make sure the photographer isn’t trying to spoof the device’s location data and time stamp. Do the camera’s coordinates, time zone, altitude, and nearby Wi-Fi networks all corroborate each other? Does the light in the image refract as it would for a real 3D scene? Or is someone simply taking a picture of another 2D photo?

Farid thinks this second approach is particularly promising, and considering the two billion photos that are uploaded to the web daily, he thinks it could help verify images at scale.
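To make the idea of capture-time corroboration a little more concrete, here is a minimal sketch in Python of two such checks: whether the GPS fix agrees with a location inferred from nearby Wi-Fi networks, and whether the device's time zone is plausible for its longitude. Everything in it is an assumption made for illustration, including the `CaptureContext` fields, the function names and the thresholds. Neither startup has published its checks, so this should not be read as how Truepic or Serelay actually work.

```python
import math
from dataclasses import dataclass


@dataclass
class CaptureContext:
    """Hypothetical signals a camera app might record at capture time."""
    lat: float               # GPS latitude in degrees
    lon: float               # GPS longitude in degrees
    utc_offset_hours: float  # device time zone offset from UTC
    wifi_lat: float          # latitude inferred from nearby Wi-Fi networks
    wifi_lon: float          # longitude inferred from nearby Wi-Fi networks


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance between two points, in kilometres."""
    earth_radius_km = 6371.0
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlambda = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlambda / 2) ** 2)
    return 2 * earth_radius_km * math.asin(math.sqrt(a))


def corroboration_issues(ctx, max_wifi_km=1.0, max_offset_gap_hours=4.0):
    """Return a list of signals that fail to corroborate each other.

    The thresholds are illustrative guesses, not published values.
    """
    issues = []
    # GPS and Wi-Fi derived positions should roughly agree.
    if haversine_km(ctx.lat, ctx.lon, ctx.wifi_lat, ctx.wifi_lon) > max_wifi_km:
        issues.append("gps_wifi_mismatch")
    # Every 15 degrees of longitude is about one hour of solar time.
    # Real time zones deviate from this, so the tolerance is loose.
    solar_offset = ctx.lon / 15.0
    if abs(ctx.utc_offset_hours - solar_offset) > max_offset_gap_hours:
        issues.append("timezone_mismatch")
    return issues
```

A capture in central London with a matching Wi-Fi position and a UTC offset of zero would return an empty list, while a capture claiming London GPS coordinates alongside Tokyo Wi-Fi networks and a UTC+9 clock would be flagged on both counts. A production system would presumably combine many more signals than these two.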

Two startups, US-based Truepic and UK-based Serelay, both of which have technology that can capture “verifiable photos and videos,” are now working to commercialise this idea. They have taken similar approaches: each has free iOS and Android camera apps that use proprietary algorithms to automatically verify photos when taken. If an image goes viral, it can be compared against the original to check whether it has retained its integrity.

While Truepic uploads its users’ images and stores them on its servers, Serelay stores a digital fingerprint of sorts by computing about a hundred mathematical values from each image. The company also claims that these values are enough to detect even a single-pixel edit and determine approximately what section of the image was changed. Truepic, meanwhile, says it chooses to store the full images in case users need to delete sensitive photos from their devices for safety reasons. In some instances, for example, Truepic users operating in high-threat scenarios, like a war zone, need to remove the app immediately after they document scenes. Serelay, in contrast, believes that not storing the photos affords users greater privacy.
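Serelay's algorithm is proprietary, so the following is only a sketch of how roughly a hundred values could, in principle, both reveal a single-pixel edit and point to the affected region. It assumes a grayscale image held as a list of rows of 0 to 255 integers, splits it into a 10 by 10 grid, and hashes each tile with SHA-256. The names `fingerprint` and `changed_tiles` and the grid size are illustrative choices, not anything the company has described.

```python
import hashlib

GRID = 10  # 10 x 10 tiles gives 100 values per image (an assumed layout)


def fingerprint(pixels, grid=GRID):
    """Hash each tile of a grayscale image.

    `pixels` is a list of rows, each a list of ints in the range 0-255.
    Returns grid * grid hex digests in row-major tile order.
    """
    height, width = len(pixels), len(pixels[0])
    values = []
    for gy in range(grid):
        y0, y1 = gy * height // grid, (gy + 1) * height // grid
        for gx in range(grid):
            x0, x1 = gx * width // grid, (gx + 1) * width // grid
            tile_hash = hashlib.sha256()
            for row in pixels[y0:y1]:
                tile_hash.update(bytes(row[x0:x1]))
            values.append(tile_hash.hexdigest())
    return values


def changed_tiles(original_fp, candidate_fp, grid=GRID):
    """Return (tile_row, tile_col) for every tile whose hash differs."""
    return [
        (i // grid, i % grid)
        for i, (a, b) in enumerate(zip(original_fp, candidate_fp))
        if a != b
    ]
```

With a 100 by 100 image, flipping one bit of the pixel at row 57, column 23 changes exactly one of the hundred hashes, and `changed_tiles` reports tile `(5, 2)`. Note the limitation of this simplification: exact hashes only match bit-identical copies, so an image that has merely been recompressed would differ in every tile. A real system would presumably need values that tolerate benign re-encoding.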

As an added layer of trust and protection, Truepic also stores all photos and metadata using a blockchain – the technology behind Bitcoin that combines cryptography and distributed networking to securely store and track information.
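The tamper-evidence property that a blockchain provides can be illustrated with a simple hash chain, in which each entry commits to the one before it. The sketch below is a single-process simplification written for this article: it has no distributed network or consensus, and the record layout and function names are assumptions rather than Truepic's actual design.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def record_hash(record):
    """SHA-256 of a record serialised as JSON with sorted keys."""
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_entry(chain, image_digest, metadata):
    """Append an entry that commits to the hash of the previous entry."""
    prev_hash = chain[-1]["entry_hash"] if chain else GENESIS_HASH
    body = {
        "image_digest": image_digest,
        "metadata": metadata,
        "prev_hash": prev_hash,
    }
    chain.append({**body, "entry_hash": record_hash(body)})


def chain_is_intact(chain):
    """Recompute every hash and check each link to its predecessor."""
    prev_hash = GENESIS_HASH
    for entry in chain:
        body = {key: entry[key] for key in ("image_digest", "metadata", "prev_hash")}
        if entry["prev_hash"] != prev_hash or entry["entry_hash"] != record_hash(body):
            return False
        prev_hash = entry["entry_hash"]
    return True
```

Because each entry's hash covers the previous entry's hash, quietly changing an old record, for example its time stamp, makes `chain_is_intact` return `False` unless every later entry is also recomputed. Distributing copies of the chain across many parties is what makes that kind of wholesale rewriting impractical in a real blockchain.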

“It’s not bulletproof,” Farid admits, and he says there are some downsides. For instance, users must use the verification software instead of the camera app on their phone. He also notes that companies that attempt to commercialise this kind of technology may prioritise monetisation over security.

“There is some trust we are putting in the companies building these apps,” he says.

But there are also mitigating strategies. Truepic and Serelay both offer software development kits to make their technology accessible to third-party platforms. Their idea is to one day make their verification technology an industry standard for digital cameras, including those built into Facebook, Snapchat, or even Apple’s native camera app. In that scenario, an unaltered image posted on social media could automatically receive a check mark, like a Twitter verification badge, indicating that it matches an image in their database, a sign that Serelay hopes would establish trustworthiness.

“The vast majority of the content we’re seeing online is taken with mobile devices,” says Farid. “There’s basically a handful of cameras out there that can incorporate this type of technology into their system, and I think you’d have a pretty good solution.”

Each startup is now in early talks with social-media companies to explore the possibility of a partnership, and Serelay is also part of a new Facebook accelerator program called LDN_LAB.

While the technology is not yet prevalent, Farid encourages people to use it by default when documenting high-stakes scenarios, whether those be political campaign speeches, human rights violations, or pieces of evidence at a crime scene. Truepic has already seen citizens use its app to document crises in Syria. Al Jazeera then used the verified footage to produce several videos. Both companies have also marketed their technology to the insurance industry as a verified way to document damage.

Farid says it’s important for companies doing this work to be transparent about their processes and work with trusted partners. That can help maintain user trust and keep bad actors away.

We still have a way to go to be fully prepared for the proliferation of deepfakes, he says. But he’s hopeful.

“The Truepic type technology and the Serelay type technology is in good shape,” he says. “I think we’re getting ready.”