WHY THIS MATTERS IN BRIEF

It’s probably time we had a proper discussion about AI and killer robots, that is, if the politicians can see beyond the end of their noses…

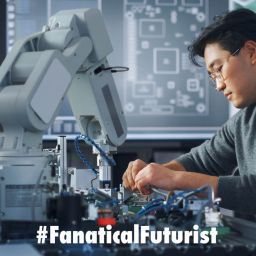

Ever since Artificial Intelligence (AI) began helping military complexes and defence organisations move the dial on automating their military systems, there has been an increasing cacophony of voices shouting from the rooftops that the time has come to regulate AI – both in civilian life and in the theatre of war. But four years on from the first planned debate in 2013, and despite calls and open letters from thousands of experts, including Bill Gates, Elon Musk and Stephen Hawking, and even high level “Doomsday simulations,” the committee of the United Nations (UN) Convention on Certain Conventional Weapons (CCW), a framework treaty that prohibits or restricts certain weapons, still hasn’t had any decent debate on the topic, with the most recent meeting cancelled because Brazil hadn’t, ostensibly, paid for the meeting room.

While most countries, including China, the UK and the US, with Russia being the notable exception, agree that the use of autonomous “killer robots” and AIs that take humans out of the “kill chain” should be regulated, we’re still seeing a plethora of new autonomous, “autonomous capable,” and semi-autonomous weapons systems being developed and deployed.

These include, but are not limited to, China’s smart cruise missiles, Russia’s autonomous nuclear submarines and smart hypersonic missiles, and the US’s Hunter-Killer drones, autonomous F-35s, unmanned hypersonic SR-72 reconnaissance planes, autonomous mine hunters and subs, and autonomous capable destroyers. To name but a few.

That said, it’s also interesting that, even as the US straps an autonomous capable AI into the heart of its critical systems, the most powerful, and arguably “worst,” use of AI could be Russian. And if it’s real, and with all the propaganda haze it’s hard to be certain, then it’s the perfect reason why the UN should end its debate with Brazil on who pays for the donuts and get back to debating how to regulate AI in warfare before we pass the point of no return.

Recently I’ve been picking up an increasing amount of chatter about a Russian AI system called “Perimeter,” an automatic nuclear control system dubbed “Dead Hand” by the Pentagon that, up until recently, was thought to have been de-activated circa 1995.

However, if it turns out that Perimeter has been re-activated and that it really is now an AI driven system, let alone one based on “Black Box” AI tech that’s been known to spontaneously self-evolve and baffle experts, in an age where fewer digital systems are safe thanks to a new breed of AI robo-hackers that can hack into systems 100 million times faster than humans, then I know a great bunker manufacturer and a vertical farming enthusiast I can recommend to you.

What follows is a summary of excerpts on the topic from Sputnik and a mix of Russian news networks, which most people say are great propaganda machines, so grab the salt and pinch it, raise a cynical eyebrow or two and put that bunker salesman on speed dial just in case. Who knows, one day we might even be able to replace the immortal and much loved phrase “Guns don’t kill people, people do” with “People don’t kill people, AIs do.”

Ha. Anyway… it’s time for a sad emoji 🙁

Sixty years ago the Russians launched the world’s first Intercontinental Ballistic Missile (ICBM) from the Soviet cosmodrome in Baikonur, the first step on the way to creating Russia’s nuclear shield.

“The R-7 [NATO designation SS-6 Sapwood] designers managed to launch the missile on the third attempt on August 21, 1957, the rocket travelled a distance of 5,600 kilometers [3,480 mi] and delivered a warhead to the Kura proving ground,” said a commentator at the time.

“Six days later the USSR officially announced that it had an operational ICBM — a year earlier than the United States did,” says Alexander Khrolenko, a reporter for RIA Novosti, and someone who has a keen interest in Perimeter, “thus, the Soviet Union dramatically expanded its security perimeter.”

“However, the USSR and its legal successor, the Russian Federation, continued to move forward and improve its nuclear shield. Today, Russia’s most powerful ICBM R-36M2 Voyevoda is capable of carrying 10 combat units with a capacity of 170 kilotons to a distance of up to 15,000 kilometers (9,320 mi),” he adds.

“But that is not all – the ICBM’s combat algorithms have also been improved, and in addition, the domestic nuclear deterrent system which is comprised of land, sea and aerial means of delivery of nuclear charges has become even more complicated, and today,” says Khrolenko, “Russia’s nuclear triad guarantees the annihilation of a potential aggressor under any circumstances.”

“Russia is capable of launching a retaliatory atomic strike even in the event of the death of the country’s top leadership,” he adds, referring to the famous Perimeter System which was developed in the Soviet Union in the early 1970s.

The concept came as a response to the US strategic doctrine of a “Decapitation strike” aimed at destroying a hostile country’s leadership in order to degrade its capacity for nuclear retaliation.

“This system duplicates the functions of a command post automatically triggering the launch of the Russian nuclear missiles if the country’s leadership was destroyed by a nuclear strike,” said Leonid Ivashov, President of the Academy of Geopolitical Problems, in an interview with the Russian newspaper Vzglyad earlier this year.

Perimeter took up combat duty in January 1985 and in subsequent years the defense system has “ensured the country’s safety, monitoring the situation and maintaining control over thousands of its nuclear warheads.”

So, how does the system work?

“Whilst on duty, stationary and mobile control centers [of the system] are assessing seismic activity, radiation levels, air pressure and temperature, monitoring military radio frequencies and registering the intensity of communications, keeping a close watch on missile early warning system data,” says Khrolenko, “after analyzing these and other data, the system can autonomously make a decision about a retaliatory nuclear attack in case the country’s leadership is unable to activate the combat regime.”

“Having detected the signs of a nuclear strike, Perimeter sends a request to the General Staff,” Khrolenko explains, “having received a ‘calming’ response, it returns to the mode of [analysing] the situation.”

“However, if the General Staff’s line is dead Perimeter will immediately [try to contact] the strategic ‘Kazbek’ missile control system, and if Kazbek doesn’t respond either, Perimeter’s autonomous control and command system, which is based on Artificial Intelligence (AI) software, will make a decision to retaliate. It can accurately ‘understand’ that its time has come,” notes Khrolenko, stressing that there is no way to neutralize, shut down or destroy the Perimeter system.

“It is no coincidence that Western military analysts dubbed the system ‘Dead Hand’,” he adds.
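To make the escalation chain Khrolenko describes easier to follow, here is a toy sketch of it as a simple decision function. It is purely illustrative and is not based on any knowledge of the real system: the function name, the `Outcome` labels and the boolean inputs are all hypothetical, and the branch where Kazbek does respond is an assumption, since the excerpts don’t say what happens in that case.

```python
from enum import Enum


class Outcome(Enum):
    # Hypothetical labels for the two end states the excerpts mention.
    RETURN_TO_MONITORING = "return to monitoring"
    AUTONOMOUS_DECISION = "autonomous decision"


def escalation_outcome(
    strike_signs_detected: bool,
    general_staff_responds: bool,
    kazbek_responds: bool,
) -> Outcome:
    """Toy model of the chain as described in the quoted excerpts."""
    # No signs of a strike: the system simply keeps analysing the situation.
    if not strike_signs_detected:
        return Outcome.RETURN_TO_MONITORING

    # Step 1: query the General Staff; a reply returns it to monitoring.
    if general_staff_responds:
        return Outcome.RETURN_TO_MONITORING

    # Step 2: the General Staff line is dead, so try Kazbek.
    # ASSUMPTION: a Kazbek reply also returns the system to monitoring;
    # the excerpts do not describe this branch.
    if kazbek_responds:
        return Outcome.RETURN_TO_MONITORING

    # Step 3: both links are silent, so the decision is made autonomously.
    return Outcome.AUTONOMOUS_DECISION


if __name__ == "__main__":
    print(escalation_outcome(True, False, False).value)
```

Laid out like this, the uncomfortable part is easy to spot: silence on both links is treated as evidence, so everything rests on the sensors and the communications lines being right.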

So, tell me, does that win the award for “World’s worst use of AI,” and if so what should the prize be? And if this is a rabbit hole we really want to go down, what happens when, rather than if, other countries, such as North Korea, follow Russia’s lead? Is it time for the UN to have that debate yet, or for governments to discuss how AI should be regulated? And, more importantly – who’ll pay for the donuts? Tab’s on me.