WHY THIS MATTERS IN BRIEF

Being able to print advanced, complex AIs means we can produce them cheaply and position them at the edge of networks, where computing power might be limited.

Recently a team of researchers in the US made the world’s first neural network out of DNA, or “AI in a test tube.” Now a team of electrical and computer engineers from the University of California, Los Angeles (UCLA) has announced that they have 3D printed the world’s first physical Artificial Intelligence (AI) neural network that uses light, not electrons like its traditional computer cousins, to analyse large volumes of data and identify objects. Because the printed network works purely by diffracting light, it is also passive: it needs no external power source of its own.

Numerous everyday devices use computerised cameras to identify objects: think of automated teller machines that can “read” handwritten dollar amounts when you deposit a check, or internet search engines that can quickly match photos to similar images in their databases. But those systems work in stages. First they “see” the object with a camera or optical sensor, then they process what they see into data, and finally they use computing programs to figure out what it is. This is where the UCLA-developed device gets a head start.

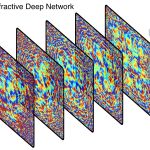

Called a “diffractive neural network,” it uses the light bouncing off the object itself to identify that object in as little time as it would take a computer simply to “see” it. The UCLA device does not need advanced computing programs to process an image of the object and decide what it is after optical sensors pick it up. And because it relies only on the diffraction of light, the device consumes no energy to run.

New technologies based on the device could be used to speed up data-intensive tasks that involve sorting and identifying objects. For example, a driverless car using the technology could react to a stop sign faster than it does using current technology. With a device based on the UCLA system, the car would “read” the sign as soon as the light from the sign hits it, as opposed to having to “wait” for the car’s camera to image the object and then use its computers to figure out what the object is.

Technology based on the invention could also be used in microscopic imaging and medicine, for example, to sort through millions of cells, such as the ones that can now be imaged using smartphones, for signs of disease. The study was published online in Science.

“This work opens up fundamentally new opportunities to use an artificial intelligence based passive device to instantaneously analyse data, images and classify objects,” said Aydogan Ozcan, the study’s principal investigator. “This optical artificial neural network device is intuitively modelled on how the brain processes information. It could be scaled up to enable new camera designs and unique optical components that work passively in medical technologies, robotics, security or any application where image and video data are essential.”

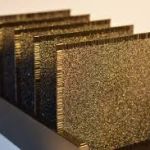

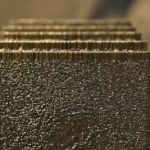

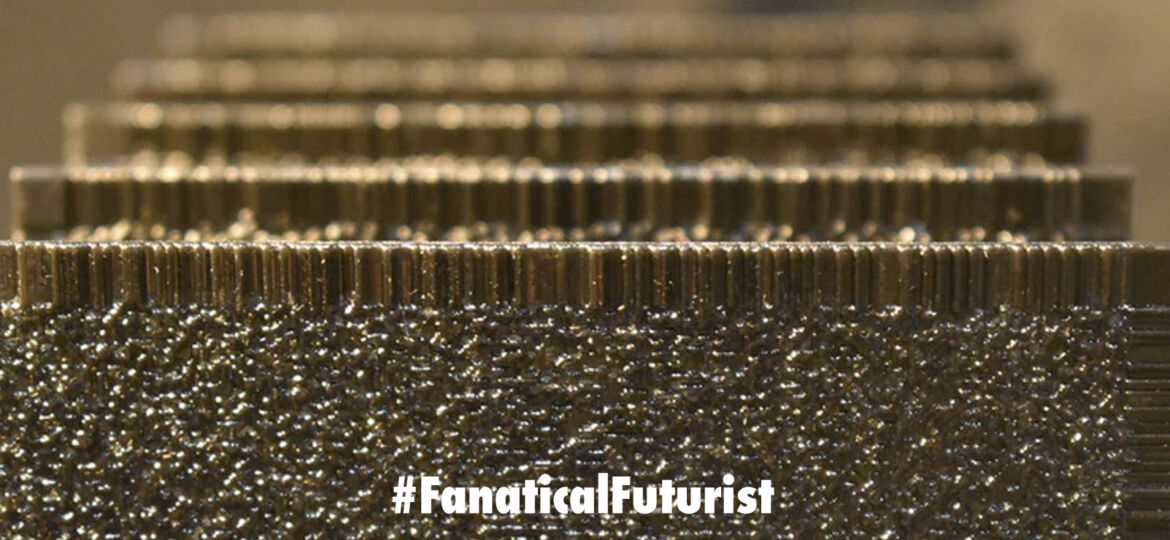

The process of creating the artificial neural network began with a computer-simulated design. The researchers then used a 3D printer to create very thin, 8-centimeter-square polymer wafers. Each wafer has an uneven surface, which helps diffract light coming from the object in different directions. The layers look opaque to the eye, but the submillimeter-wavelength terahertz light used in the experiments can travel through them. Each layer is composed of tens of thousands of artificial neurons: in this case, tiny pixels that the light travels through.
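To make “submillimeter-wavelength terahertz” concrete, here is a small sketch that converts a frequency to its free-space wavelength and estimates how much a bump of extra material delays the light passing through it. The frequency and refractive index in the example are assumptions picked purely for illustration, not figures from the study, and the phase formula is the usual thin-element approximation that ignores absorption.

```python
import math

C_M_PER_S = 299_792_458  # speed of light in vacuum (exact by SI definition)


def wavelength_mm(freq_thz: float) -> float:
    """Free-space wavelength in millimeters for a frequency in terahertz."""
    return C_M_PER_S / (freq_thz * 1e12) * 1e3


def phase_delay_rad(extra_thickness_mm: float, freq_thz: float,
                    refractive_index: float) -> float:
    """Phase gained by crossing extra material instead of air.

    Thin-element approximation: absorption and reflections are ignored.
    """
    return (2 * math.pi * (refractive_index - 1.0)
            * extra_thickness_mm / wavelength_mm(freq_thz))


# Assumed, illustrative values (NOT taken from the study).
ASSUMED_FREQ_THZ = 0.5
ASSUMED_INDEX = 1.7

print(f"wavelength: {wavelength_mm(ASSUMED_FREQ_THZ):.3f} mm")
print(f"phase from 0.1 mm of extra material: "
      f"{phase_delay_rad(0.1, ASSUMED_FREQ_THZ, ASSUMED_INDEX):.3f} rad")
```

Any frequency above roughly 0.3 THz comes out below one millimeter, which is why surface features of a fraction of a millimeter are enough to steer this kind of light.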

Together, a series of pixelated layers functions as an “optical network” that shapes how incoming light from the object travels through them. The network identifies an object because the light coming from the object is mostly diffracted toward a single pixel that is assigned to that type of object.
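The idea can be sketched in a few lines of code. What follows is a deliberately simplified toy, not the researchers’ model: it is one-dimensional, treats light as a scalar wave, lets every pixel re-radiate to every pixel of the next plane, and gives each layer nothing but a list of phase delays. The function names, the geometry and the two-detector layout are all hypothetical.

```python
import cmath
import math


def propagate(field, pitch, gap, wavelength):
    """Huygens-style sum: each pixel re-radiates to every pixel of the next plane."""
    k = 2 * math.pi / wavelength  # wavenumber
    out = []
    for i in range(len(field)):
        total = 0j
        for j, amplitude in enumerate(field):
            r = math.hypot((i - j) * pitch, gap)  # distance between the two pixels
            total += amplitude * cmath.exp(1j * k * r) / r
        out.append(total)
    return out


def forward(field, layers, pitch, gap, wavelength):
    """Pass light through each layer (one list of phase delays, in radians, per layer)."""
    for phases in layers:
        field = propagate(field, pitch, gap, wavelength)
        field = [a * cmath.exp(1j * p) for a, p in zip(field, phases)]
    return propagate(field, pitch, gap, wavelength)  # final hop to the detector plane


def classify(output_field, detectors):
    """Return the label whose detector pixels collect the most light energy."""
    energy = {label: sum(abs(output_field[i]) ** 2 for i in pixels)
              for label, pixels in detectors.items()}
    return max(energy, key=energy.get), energy


# Hypothetical example values (millimeters); none of these come from the study.
light_in = [0, 0, 1, 1, 1, 0, 0, 0]
blank_layers = [[0.0] * 8, [0.0] * 8]  # untrained: no phase delay anywhere
detectors = {"left": [0, 1, 2, 3], "right": [4, 5, 6, 7]}
label, energy = classify(forward(light_in, blank_layers, 0.4, 3.0, 0.75), detectors)
print(label, energy)
```

The readout step is the part that mirrors the description most directly: whichever detector region collects the most light names the object. A real device works in two dimensions with far more pixels per layer.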

The researchers trained the network on a computer, as part of the simulated design, to identify the objects in front of it by learning the pattern of diffracted light each object produces as its light passes through the device. The “training” used a branch of artificial intelligence called deep learning, in which machines “learn” through repetition and over time as patterns emerge.
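As a rough illustration of what “training” means for a stack of diffractive layers, the sketch below adjusts the phase delay of one pixel at a time in a tiny simulated network and keeps a change only when more light lands on the correct output pixel. This is plain random hill climbing, swapped in here for simplicity; it is not the deep learning procedure the researchers used, and every name and number in it is hypothetical.

```python
import cmath
import math
import random

# Hypothetical geometry in millimeters; none of these values come from the study.
WAVELENGTH, PITCH, GAP, N_PIXELS = 0.75, 0.4, 3.0, 9


def propagate(field):
    """Scalar, one-dimensional Huygens-style sum between two planes."""
    k = 2 * math.pi / WAVELENGTH
    out = []
    for i in range(len(field)):
        total = 0j
        for j, amplitude in enumerate(field):
            r = math.hypot((i - j) * PITCH, GAP)
            total += amplitude * cmath.exp(1j * k * r) / r
        out.append(total)
    return out


def target_fraction(layers, field, target):
    """Fraction of the output energy that lands on the target pixel."""
    for phases in layers:
        field = propagate(field)
        field = [a * cmath.exp(1j * p) for a, p in zip(field, phases)]
    energy = [abs(a) ** 2 for a in propagate(field)]
    return energy[target] / sum(energy)


def score(layers, examples):
    """Average target fraction over all (input field, target pixel) examples."""
    return sum(target_fraction(layers, f, t) for f, t in examples) / len(examples)


def train(examples, n_layers=2, steps=200, seed=0):
    """Hill climbing: nudge one phase at a time, keep the nudge only if it helps."""
    rng = random.Random(seed)
    layers = [[0.0] * N_PIXELS for _ in range(n_layers)]
    best = score(layers, examples)
    history = [best]
    for _ in range(steps):
        layer, pixel = rng.randrange(n_layers), rng.randrange(N_PIXELS)
        old = layers[layer][pixel]
        layers[layer][pixel] = old + rng.uniform(-0.5, 0.5)
        candidate = score(layers, examples)
        if candidate > best:
            best = candidate
        else:
            layers[layer][pixel] = old  # undo a change that did not help
        history.append(best)
    return layers, history


def one_hot(index):
    """Input field with light at a single pixel."""
    return [1.0 if i == index else 0.0 for i in range(N_PIXELS)]


# Two toy "objects": light entering at pixel 2 should exit at pixel 6, and vice versa.
EXAMPLES = [(one_hot(2), 6), (one_hot(6), 2)]
layers, history = train(EXAMPLES)
print(f"target fraction before: {history[0]:.3f}, after: {history[-1]:.3f}")
```

Once the phases are settled in simulation they would be fixed into the printed layers, which is why the finished device needs no computer to do the recognising.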

“This is intuitively like a very complex maze of glass and mirrors,” Ozcan said. “The light enters a diffractive network and bounces around the maze until it exits. The system determines what the object is by where most of the light ends up exiting.”

In their experiments, the researchers demonstrated that the device could accurately identify handwritten numbers and items of clothing — both of which are commonly used tests in AI studies. To do that, they placed images in front of a terahertz light source and let the device “see” those images through optical diffraction.

They also trained the device to act as a lens that projects the image of an object placed in front of the optical network to the other side of it, much like a typical camera lens, but with a design learned by AI rather than worked out from conventional lens physics.

Because its components can be created by a 3D printer, the artificial neural network can be made with larger and additional layers, resulting in a device with hundreds of millions of artificial neurons. Those bigger devices could identify many more objects at the same time or perform more complex data analysis. The components can also be made inexpensively: the device created by the UCLA team could be reproduced for less than $50.

While the study used light at terahertz frequencies, Ozcan said it would also be possible to create neural networks that use visible, infrared or other frequencies of light to identify different objects in new ways and with even greater accuracy. In the future, he said, such neural networks could also be made using lithography or other printing techniques.

Source: UCLA