WHY THIS MATTERS IN BRIEF

Every year, tens of millions of people around the world are diagnosed with skin cancer, and an artificial intelligence algorithm from Stanford could help save tens of thousands of lives.

It’s scary enough making a doctor’s appointment to see if a strange mole could be cancerous. Now imagine you were in the middle of nowhere, couldn’t take time off work, or didn’t have the money to see a doctor for a diagnosis. Thanks to a team at Stanford University, you may soon be able to whip out your phone, open an app and get a free, instant diagnosis.

Universal access to low-cost health care, let alone free health care, is a challenge, certainly in the US, and that was on the team’s mind when they set out to create an artificially intelligent (AI) algorithm that could diagnose skin cancer as well as a certified dermatologist. Along with other deep learning breakthroughs, it could be one step on the very long road to democratising healthcare, or at least part of it.

To build their algorithm, the team first assembled a database of nearly 130,000 skin disease images and fed them into the algorithm as raw pixels, each with an associated disease label. Compared to other methods for training algorithms, this approach required very little processing or sorting of the images prior to classification, allowing the algorithm to work from a wider variety of data, faster. And, as the results showed, the algorithm was highly accurate, not just at identifying cancer but also at identifying its type.
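To make the idea of learning from raw pixels and labels concrete, here is a minimal sketch in Python. It is not the Stanford team’s model, which was a deep learning system trained on real photographs; it swaps in a far simpler softmax classifier and a handful of made-up four-pixel “images” so that it runs anywhere. The function names, the toy data and the two class labels are all assumptions for illustration.

```python
import math

def predict_proba(weights, biases, pixels):
    """Return one probability per class for a flattened image."""
    logits = [sum(w * p for w, p in zip(row, pixels)) + b
              for row, b in zip(weights, biases)]
    top = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - top) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def predict(weights, biases, pixels):
    """Return the index of the most probable class."""
    probs = predict_proba(weights, biases, pixels)
    return max(range(len(probs)), key=probs.__getitem__)

def train_pixel_classifier(images, labels, num_classes, epochs=200, lr=0.5):
    """Fit a softmax classifier directly on raw pixel values.

    No hand-written features are used: the only inputs are the pixel
    values and the label attached to each image.
    """
    num_pixels = len(images[0])
    weights = [[0.0] * num_pixels for _ in range(num_classes)]
    biases = [0.0] * num_classes
    for _ in range(epochs):
        for pixels, label in zip(images, labels):
            probs = predict_proba(weights, biases, pixels)
            for c in range(num_classes):
                # Gradient of the cross-entropy loss for class c.
                error = probs[c] - (1.0 if c == label else 0.0)
                for i, value in enumerate(pixels):
                    weights[c][i] -= lr * error * value
                biases[c] -= lr * error
    return weights, biases

# Hypothetical toy data: 2x2 grayscale "images" flattened to 4 pixels.
# Label 0 = dark image, label 1 = bright image (illustrative classes only).
images = [
    [0.1, 0.2, 0.1, 0.3], [0.9, 0.8, 0.7, 0.9],
    [0.2, 0.1, 0.3, 0.2], [0.8, 0.9, 0.9, 0.7],
    [0.3, 0.2, 0.2, 0.1], [0.7, 0.8, 0.9, 0.8],
]
labels = [0, 1, 0, 1, 0, 1]

weights, biases = train_pixel_classifier(images, labels, num_classes=2)
print(predict(weights, biases, [0.15, 0.15, 0.15, 0.15]))  # a dark image
print(predict(weights, biases, [0.85, 0.85, 0.85, 0.85]))  # a bright image
```

Nothing in the code says what a “dark” or “bright” image looks like; the weights are learned entirely from the labelled examples, which is the same principle the article describes at a vastly larger scale.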

Using AI to diagnose disease is part of a growing trend that combines visual processing with deep learning, a type of AI loosely modelled after neural networks in the brain. Deep learning has a decades-long history in computer science, but it has only recently been applied to visual processing tasks.

“We realized early on that it was feasible, not just to do something well, but as well as a human dermatologist,” said Sebastian Thrun, an adjunct professor in the Stanford Artificial Intelligence Laboratory. “That’s when our thinking changed. That’s when we said, ‘Look, this is not just a class project for students, this is an opportunity to do something great for humanity.’”

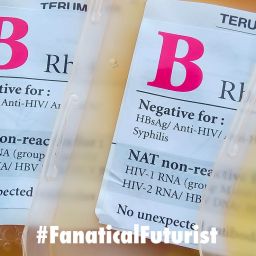

Traditionally, diagnosing skin cancer begins with a visual examination. A certified dermatologist looks at the suspicious lesion with the naked eye and with the aid of a dermatoscope, and if either of these methods is inconclusive or leads the dermatologist to believe the lesion is cancerous, a biopsy is the next step.

The final algorithm, which was the subject of a paper published in Nature, was put up against 21 certified dermatologists. Its diagnosis of skin lesions, which represented the most common and the deadliest skin cancers, was as good as the dermatologists’.
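One common way to compare a classifier with clinicians on biopsy-confirmed cases is to compute sensitivity (the share of cancers caught) and specificity (the share of benign lesions correctly cleared). The article does not say which measures were used, so treat the choice of metrics, the function name and the ten made-up cases below as assumptions.

```python
def sensitivity_specificity(predicted, actual):
    """Return (sensitivity, specificity) for binary labels (1 = malignant)."""
    tp = sum(1 for p, a in zip(predicted, actual) if p == 1 and a == 1)
    fn = sum(1 for p, a in zip(predicted, actual) if p == 0 and a == 1)
    tn = sum(1 for p, a in zip(predicted, actual) if p == 0 and a == 0)
    fp = sum(1 for p, a in zip(predicted, actual) if p == 1 and a == 0)
    return tp / (tp + fn), tn / (tn + fp)

# Hypothetical data: biopsy results and two sets of calls on the same lesions.
biopsy        = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
algorithm     = [1, 1, 1, 0, 0, 0, 0, 0, 0, 1]
dermatologist = [1, 1, 0, 0, 0, 0, 0, 0, 1, 1]

algo_sens, algo_spec = sensitivity_specificity(algorithm, biopsy)
derm_sens, derm_spec = sensitivity_specificity(dermatologist, biopsy)
print(f"algorithm:     sensitivity={algo_sens:.2f} specificity={algo_spec:.2f}")
print(f"dermatologist: sensitivity={derm_sens:.2f} specificity={derm_spec:.2f}")
```

Scoring both readers against the same confirmed outcomes is what makes a claim such as “as good as the dermatologists” testable.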

“We made a very powerful algorithm that learns from data,” said Andre Esteva, a graduate student involved in the project. “Instead of writing into computer code exactly what to look for, we let the algorithm figure it out.”

Every year there are about 5.4 million new cases of skin cancer in the US alone, with one person dying from it every 54 minutes. The five-year survival rate for melanoma detected in its earliest stages is around 97 percent, but that drops to approximately 14 percent if it’s detected in its latest stages, so early detection could have an enormous impact on skin cancer outcomes. And when you scale that out to other countries, the number of people who could benefit from this “simple” technology could easily run into the tens of millions.
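As a rough back-of-the-envelope check on those figures, the snippet below converts “one death every 54 minutes” into an annual total. It assumes a 365-day year and a constant rate; the variable names are purely illustrative.

```python
MINUTES_PER_YEAR = 365 * 24 * 60           # 525,600 minutes
MINUTES_PER_DEATH = 54                     # figure quoted in the article
NEW_CASES_PER_YEAR = 5_400_000             # US figure quoted in the article

deaths_per_year = MINUTES_PER_YEAR / MINUTES_PER_DEATH
deaths_per_case = deaths_per_year / NEW_CASES_PER_YEAR

# Gap between early-stage and late-stage five-year melanoma survival.
survival_gap_points = 97 - 14

print(round(deaths_per_year))              # 9733
print(f"{deaths_per_case:.2%}")            # 0.18%
print(survival_gap_points)                 # 83
```

On those assumptions the quoted rate works out to a little under 10,000 US deaths a year, and the 83-point gap in survival is why catching melanoma early matters so much.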