WHY THIS MATTERS IN BRIEF

As advanced as today’s AIs are, they still can’t reason, and that’s what is going to hold them, and us, back from realising the milestone that is Artificial General Intelligence.

Artificial Intelligence (AI) has become pretty good at completing specific tasks over the past couple of years, whether it’s creating fake celebrities, finding and patching cyber vulnerabilities, or predicting when people will die, among many other things. That said, we’re still a long way from realising Artificial General Intelligence (AGI), an AI with the kind of all-round smarts that would let it navigate and “understand” the world the same way we do. That remains true despite a new general intelligence breakthrough and the publication of a new AGI architecture by Google DeepMind last year.

One of the key elements of AGI is abstract reasoning: the ability to think beyond the here and now, to see more nuanced patterns and relationships, and to engage in complex thought. Last week researchers at DeepMind, who also recently created what amounts to a psychology test for their AIs, published a research paper detailing their attempt to measure their AIs’ “abstract reasoning capabilities” using tests not that dissimilar from the ones we use to measure our own reasoning capabilities.

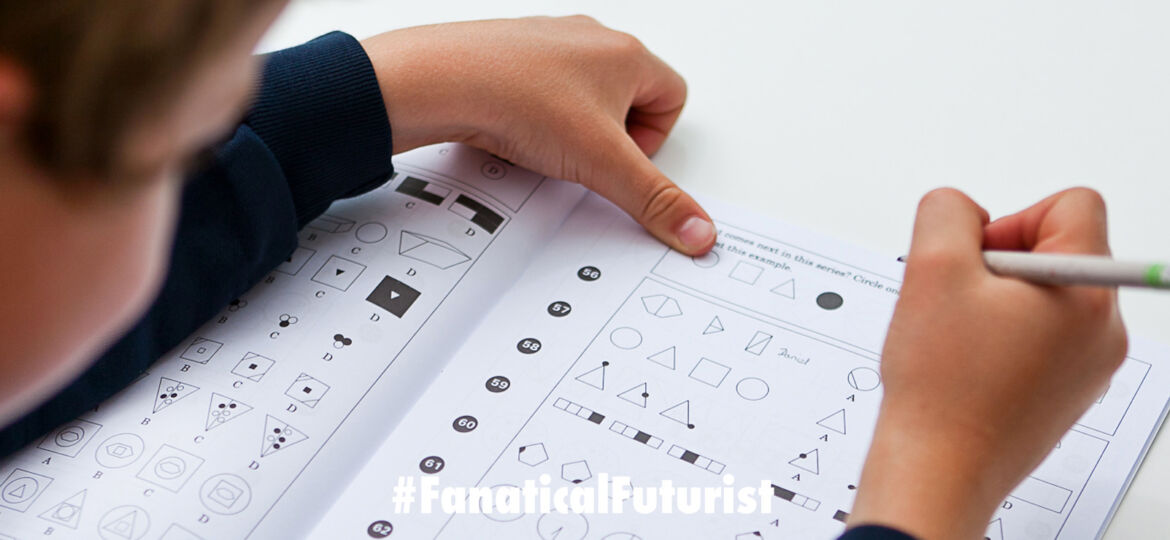

In humans, we measure abstract reasoning using fairly straightforward visual IQ tests. One popular test in particular, called Raven’s Progressive Matrices, features several rows of images, with the final row missing its final image. It’s up to the test taker to choose, from a set of candidate answers, the image that should come next based on the pattern of the completed rows.

The test doesn’t tell the test taker outright what to look for in the images. Sometimes the progression has to do with the number of objects within each image, sometimes with their colour, and sometimes with their placement. It’s then up to the test taker to figure out what’s missing for themselves using their ability to reason abstractly.
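To make the idea a little more concrete, here is a minimal sketch in Python of how a rule could be read off a matrix, assuming each panel has already been boiled down to a few numbers (the number of shapes, a colour code and a position code). The functions `row_step`, `find_rule` and `solve`, and the attribute names, are hypothetical, and the sketch only handles rules where one attribute goes up by a fixed step along each row. Real Raven’s problems are pictures with far more varied rules, so treat this as an illustration rather than how people, or DeepMind’s systems, actually solve them.

```python
from typing import Dict, List, Optional, Tuple

# Hypothetical encoding: each panel is summarised as a few attribute values.
# Real Raven's-style problems are images, not dictionaries.
Panel = Dict[str, int]


def row_step(values: List[int]) -> Optional[int]:
    """Return the constant step between neighbouring values, or None if there isn't one."""
    diffs = {b - a for a, b in zip(values, values[1:])}
    return diffs.pop() if len(diffs) == 1 else None


def find_rule(complete_rows: List[List[Panel]]) -> Optional[Tuple[str, int]]:
    """Find an attribute that changes by the same non-zero step along every complete row."""
    for attr in complete_rows[0][0]:
        steps = {row_step([panel[attr] for panel in row]) for row in complete_rows}
        if len(steps) == 1:
            (step,) = steps
            if step not in (None, 0):
                return attr, step
    return None


def solve(context: List[Panel], candidates: List[Panel]) -> Optional[int]:
    """Pick the candidate that completes the final row, given eight row-major panels."""
    rule = find_rule([context[0:3], context[3:6]])
    if rule is None:
        return None
    attr, step = rule
    expected = dict(context[7])  # start from the last visible panel
    expected[attr] += step       # apply the inferred progression
    for index, candidate in enumerate(candidates):
        if candidate == expected:
            return index
    return None


# Example: the number of shapes goes up by one along each row.
context = [
    {"count": 1, "colour": 2, "position": 0}, {"count": 2, "colour": 2, "position": 0}, {"count": 3, "colour": 2, "position": 0},
    {"count": 2, "colour": 5, "position": 1}, {"count": 3, "colour": 5, "position": 1}, {"count": 4, "colour": 5, "position": 1},
    {"count": 1, "colour": 3, "position": 2}, {"count": 2, "colour": 3, "position": 2},
]
candidates = [
    {"count": 3, "colour": 2, "position": 2},
    {"count": 3, "colour": 3, "position": 2},
    {"count": 2, "colour": 3, "position": 2},
    {"count": 4, "colour": 3, "position": 1},
]
print(solve(context, candidates))  # prints 1
```

Nothing tells the solver up front that the rule is about the number of shapes; it has to check each attribute in turn, which is the same “figure it out for yourself” step the test asks of people.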

To apply this test to its AIs, the DeepMind team created a program that could generate unique matrix problems, and then trained various different AIs to solve them. Finally, they tested the systems on problems they hadn’t seen during training.

In some cases they used test problems with the same abstract factors as the training set, for example both training and testing the AI on problems that required it to consider the number of shapes in each image. In other cases they used test problems built on different abstract factors from those in the training set. For example, they might train the AI on problems that required it to consider the number of shapes in each image, but then test it on ones that required it to consider the shapes’ positions to figure out the right answer.
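The difference between those two testing regimes can be sketched with a toy generator, assuming the same simplified setup where each panel is a handful of numbers and the rule is always “this attribute goes up by one along the row”. This is not DeepMind’s generator or any of its models: `make_problem`, `make_split`, `memorising_solver` and `accuracy` are hypothetical, and the “solver” is a caricature that behaves as if it had only ever learned the training rule, which is enough to show why a test built on a different factor can trip it up.

```python
import random
from typing import Dict, List, Tuple

# Hypothetical symbolic attributes standing in for what the images show.
ATTRIBUTES = ("count", "colour", "position")
Panel = Dict[str, int]


def make_problem(rule_attr: str, rng: random.Random) -> Tuple[List[Panel], Panel]:
    """Toy 3x3 matrix in which only `rule_attr` goes up by one along each row.

    Returns the eight context panels (row-major, bottom-right removed) and the answer.
    """
    panels = []
    for _ in range(3):
        start = {attr: rng.randint(0, 5) for attr in ATTRIBUTES}
        for col in range(3):
            panel = dict(start)
            panel[rule_attr] += col
            panels.append(panel)
    return panels[:8], panels[8]


def make_split(train_attr: str, test_attr: str, n: int, seed: int = 0):
    """Training and test sets whose rules act on the given (possibly different) attributes."""
    rng = random.Random(seed)
    train = [(train_attr, *make_problem(train_attr, rng)) for _ in range(n)]
    test = [(test_attr, *make_problem(test_attr, rng)) for _ in range(n)]
    return train, test


def memorising_solver(train_attr: str):
    """Caricature 'model' that behaves as if it only ever learned the training rule."""
    def predict(context: List[Panel]) -> Panel:
        guess = dict(context[-1])
        guess[train_attr] += 1
        return guess
    return predict


def accuracy(predict, dataset) -> float:
    """Fraction of problems where the predicted panel matches the answer exactly."""
    return sum(predict(context) == answer for _, context, answer in dataset) / len(dataset)


# Regime 1: same abstract factor (number of shapes) in training and testing.
same_train, same_test = make_split("count", "count", n=100)
# Regime 2: train on the number of shapes, test on their position.
diff_train, diff_test = make_split("count", "position", n=100)

solver = memorising_solver("count")
print(accuracy(solver, same_test))  # prints 1.0
print(accuracy(solver, diff_test))  # prints 0.0
```

With the same factor in training and testing the caricature scores perfectly, and on the held-out factor it scores nothing at all. Real systems are far less clear-cut than this, but the toy shows why the second regime is the harder and more telling test.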

The results of the tests weren’t great, though. When the training problems and test problems focused on the same abstract factors, the systems fared just “alright”, correctly answering the problems 75 percent of the time. However, the team’s AIs performed very poorly when the test set and the training set were different, even when the differences were minor: for example, training on matrices that featured dark coloured objects and then testing the AIs on matrices that featured light coloured objects.

Ultimately, the team’s AI “IQ test” shows that even some of today’s most advanced AIs can’t figure out problems we haven’t trained them to solve, and that means we’re probably still a long way from AGI. Now, though, at least we have a straightforward way to monitor their progress and their ability to reason, and one day it’s likely they’ll ace these tests. This new test will also sit nicely alongside other AI tests from other companies that measure how smart and dangerous AI algorithms are, as well as what their IQs might be…

Source: DeepMind