WHY THIS MATTERS IN BRIEF

Wanted: A machine that can tell the difference between Tuna and Turtles

Conservationists are increasingly turning to technology to help them protect Earth’s valuable bounty of plants, animals and resources – whether it’s robot deer to catch poachers in the northern states, or tuna getting its own blockchain. Now attention has turned to the great open high-seas fisheries of the western and central tropical Pacific, where it’s still a free-for-all, principally because authorities monitor only 2% of the fishing vessels. As a consequence, as much as 40% of all catches may be unintended or just plain illegal.

The Shen Lian Chen, however, with thousands of hooks trailing behind it, is playing by the rules. The vessel is one of four boats in the region that have allowed The Nature Conservancy (TNC) to install cameras that record every fish coming over the side.

It’s TNC’s first step toward outfitting all of the thousands of longline tuna vessels in the tropical Pacific fleet with a suite of cameras and sensors, says Matt Merrifield, chief technology officer at TNC’s California chapter. Within a few years, he wants to see 100% coverage.

“We can’t manage these stocks efficiently if we don’t have the data,” said Merrifield.

But watching all that video is a huge data challenge. On a typical two-month fishing trip, each boat records about 800 hours of footage. Even a highly trained fisheries monitor would need about 10 weeks to watch it all for a single boat, and across thousands of boats the effort looks nearly impossible.
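The scale of the problem follows directly from those two figures. The Python sketch below simply restates the arithmetic; the fleet size of 1,000 vessels is a hypothetical round number standing in for “thousands of boats”, not a figure from TNC.

```python
# Back-of-the-envelope review workload, using the figures quoted in the article.
# FLEET_SIZE is a hypothetical stand-in for "thousands of boats".
FOOTAGE_HOURS_PER_TRIP = 800   # video recorded on a typical two-month trip
REVIEW_WEEKS_PER_BOAT = 10     # weeks a trained monitor needs for one boat
FLEET_SIZE = 1_000             # hypothetical, illustrative only
WEEKS_PER_YEAR = 52


def footage_hours_per_review_week(footage_hours: float, review_weeks: float) -> float:
    """Hours of footage a monitor must get through each week to keep pace."""
    return footage_hours / review_weeks


def monitor_years_per_trip(fleet_size: int, review_weeks: float) -> float:
    """Monitor-years needed to review one trip's footage from every boat."""
    return fleet_size * review_weeks / WEEKS_PER_YEAR


if __name__ == "__main__":
    pace = footage_hours_per_review_week(FOOTAGE_HOURS_PER_TRIP, REVIEW_WEEKS_PER_BOAT)
    effort = monitor_years_per_trip(FLEET_SIZE, REVIEW_WEEKS_PER_BOAT)
    print(f"{pace:.0f} hours of footage per review week")
    print(f"{effort:.0f} monitor-years for one trip per boat")
```

Under these assumptions a monitor has to get through roughly 80 hours of footage a week, and a single trip from a 1,000-boat fleet would take about 192 monitor-years to review by hand.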

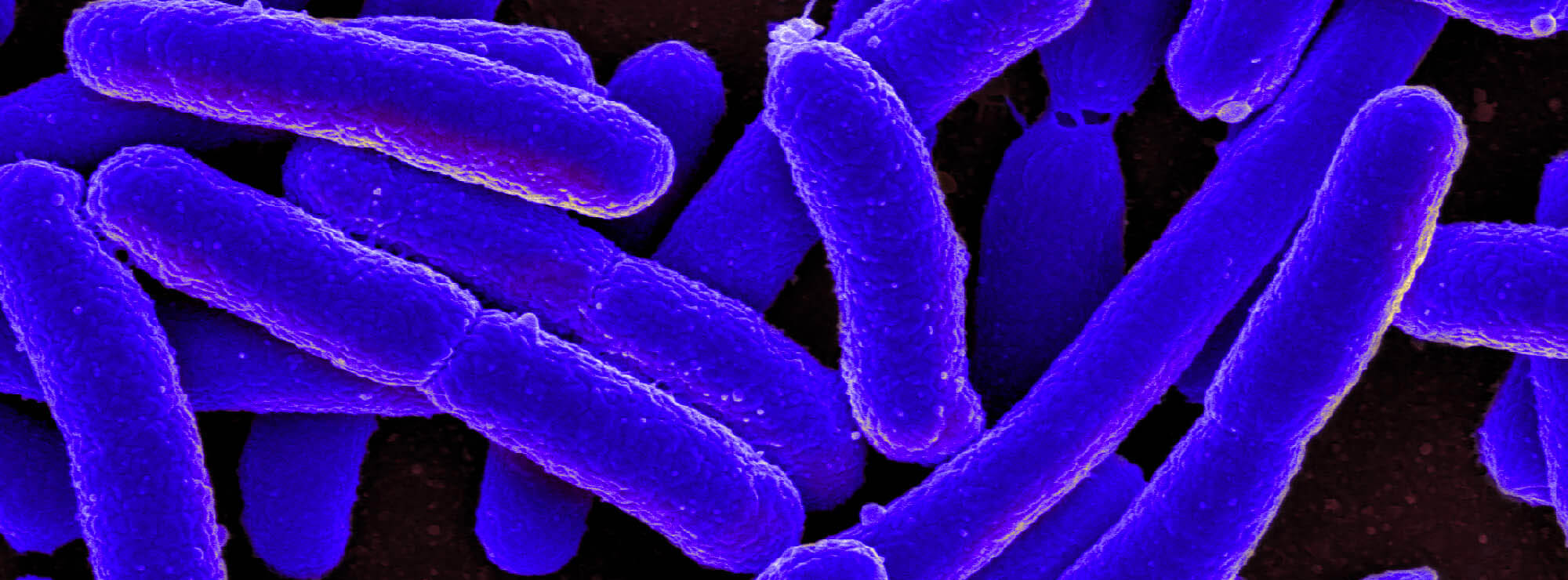

So TNC has turned to Silicon Valley. Advances in computer vision are allowing computers to recognize birds in images, name plant species, catalog rainforest species and even identify individual whales. The field has made strides by applying artificial neural networks, algorithms loosely modelled on the human brain, which “learn” to recognise objects by training on thousands or millions of labelled examples. Each example a network trains on adjusts it slightly, making it more likely to recognise the next one correctly. Over time, these algorithms have made impressive progress: Caltech researchers recently announced that they had used satellite and street-level images from Google Maps to inventory and identify 80,000 street trees with 80% accuracy.

TNC now wants to use these algorithms to see what’s on the end of a fishing line, but building one that can tell a tuna from a turtle is hard. Distinguishing bigeye from yellowfin tuna, or adult from juvenile fish, is harder still.

To solve that challenge, the organization is sponsoring a $150,000 competition on the data science competition platform Kaggle, inviting thousands of data scientists to test their algorithms against reams of video from the longline tuna fishery. Competitors must identify what a line has caught and when and where it was caught, catalog catches with 90% accuracy, and cut the time needed to review the footage by about 40%. To raise awareness, the organization has also launched the “This is Our Future” campaign, which includes up-close-and-personal VR views of the fishery.
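A 90% cataloguing target is easiest to picture as plain accuracy: the share of catches where the machine’s label agrees with a human monitor’s. The Python sketch below assumes exactly that; the species labels and the `catalog_accuracy` and `meets_target` helpers are hypothetical, and this is not necessarily the scoring metric the Kaggle competition actually used.

```python
from typing import Sequence

ACCURACY_TARGET = 0.90  # the 90% cataloguing target quoted in the article


def catalog_accuracy(predicted: Sequence[str], reference: Sequence[str]) -> float:
    """Fraction of catches where the model's label matches the human monitor's.

    Hypothetical helper: assumes one label per catch, in the same order.
    """
    if len(predicted) != len(reference):
        raise ValueError("predicted and reference must be the same length")
    if not reference:
        raise ValueError("need at least one labelled catch")
    matches = sum(p == r for p, r in zip(predicted, reference))
    return matches / len(reference)


def meets_target(predicted: Sequence[str], reference: Sequence[str],
                 target: float = ACCURACY_TARGET) -> bool:
    """True if the model's labels reach the accuracy target."""
    return catalog_accuracy(predicted, reference) >= target


if __name__ == "__main__":
    # Hypothetical labels for ten catches; the model mistakes one bigeye for a yellowfin.
    monitor = ["bigeye", "yellowfin", "turtle", "yellowfin", "bigeye",
               "yellowfin", "bigeye", "bigeye", "yellowfin", "turtle"]
    model = ["bigeye", "yellowfin", "turtle", "yellowfin", "yellowfin",
             "yellowfin", "bigeye", "bigeye", "yellowfin", "turtle"]
    print(catalog_accuracy(model, monitor))  # 0.9
    print(meets_target(model, monitor))      # True
```

Nine correct labels out of ten just clears the bar. The bigeye/yellowfin confusion in the example is exactly the kind of mistake the article flags as hardest.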

If it works, TNC plans to push for global electronic monitoring of fishing fleets. It’s starting with 24 vessels in Palau, the Federated States of Micronesia, the Marshall Islands, Japan and the Solomon Islands. Fully outfitted, the fleet would send home more than 600,000 hours of footage per year, a volume that a team of about 200 human observers could manage with some help from intelligent machines.
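The claim about 200 observers can be checked with a little arithmetic. The Python sketch below divides the annual footage among the observers, with and without the roughly 40% review-time reduction set as a goal for the Kaggle competition; combining those two figures this way is an assumption, not something TNC has stated.

```python
# Rough per-observer workload for the fully outfitted fleet, using figures from
# the article. Applying the competition's ~40% review-time reduction here is an
# assumption about how the numbers combine.
ANNUAL_FOOTAGE_HOURS = 600_000
OBSERVERS = 200
REVIEW_TIME_REDUCTION = 0.40


def hours_per_observer(footage_hours: float, observers: int,
                       reduction: float = 0.0) -> float:
    """Annual review hours each observer faces after machine-assisted triage."""
    if observers <= 0:
        raise ValueError("observers must be positive")
    if not 0.0 <= reduction < 1.0:
        raise ValueError("reduction must be in [0, 1)")
    return footage_hours * (1.0 - reduction) / observers


if __name__ == "__main__":
    unaided = hours_per_observer(ANNUAL_FOOTAGE_HOURS, OBSERVERS)
    assisted = hours_per_observer(ANNUAL_FOOTAGE_HOURS, OBSERVERS, REVIEW_TIME_REDUCTION)
    print(f"unaided:  {unaided:,.0f} hours per observer per year")   # 3,000
    print(f"assisted: {assisted:,.0f} hours per observer per year")  # 1,800
```

Unaided, each observer would face about 3,000 hours of footage a year, well beyond a working year of roughly 2,000 hours (assuming 40-hour weeks for 50 weeks). A 40% cut brings that down to about 1,800 hours, which is why the machines matter.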