WHY THIS MATTERS IN BRIEF

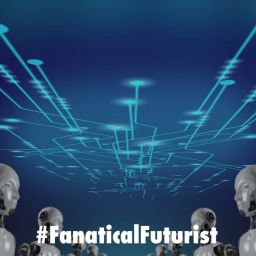

Today’s neural networks are getting enormous and complex. This is the smallest example yet of a neural network that managed to control a self-driving vehicle.

Love the Exponential Future? Join our XPotential Community, future proof yourself with courses from XPotential University, connect, watch a keynote, or browse my blog.

Artificial Intelligence (AI) can become more efficient and reliable if it’s made to mimic biological models, or so the theory goes. That is why researchers around the world are building robots with worm brains, which in turn opens the door to robots that are conscious, and are using dragonfly brains to build next generation missile defence systems.

Modern AI has produced models that exceed human performance across countless tasks, and now an international research team is suggesting AI might become even more efficient and reliable if it learns to think more like worms.

In a paper recently published in the journal Nature Machine Intelligence, a team from MIT, TU Wien in Vienna, and IST Austria showed off a new form of AI modelled on the brains of tiny animals such as threadworms. It managed to control a self-driving car using just 19 artificial neurons.

The researchers say the system has decisive advantages over other deep learning models because it copes much better with noisy input. And because it is so simple, its operations can be explained in detail, which alleviates AI’s so-called “black box” problem: in short, no one really knows how many of these AIs make the decisions they do, because of the way they work and their complexity.

Explains TU Wien Cyber-Physical Systems head Professor Radu Grosu in a project press release: “For years, we have been investigating what we can learn from nature to improve deep learning. The nematode C. elegans, for example, lives its life with an amazingly small number of neurons, and still shows interesting behavioural patterns. This is due to the efficient and harmonious way the nematode’s nervous system processes information.”

“Nature shows us that there is still a lot of room for improvement,” adds MIT CSAIL Director Daniela Rus, who says the researchers’ goal was “to massively reduce complexity and develop a new kind of neural network architecture.”

The team developed new mathematical models of neurons and synapses and combined brain-inspired neural computation principles and scalable deep learning architectures to build compact neural controllers for task-specific compartments of a full-stack autonomous vehicle control system.

The proposed system comprises two main parts. A camera input is first processed by a convolutional neural network (CNN), which extracts structural features from pixels, decides which parts of the camera image are interesting and important, then passes signals to the crucial part of the network — a neural circuit policy (NCP) control system that steers the vehicle. The NCP consists of only 19 neurons.
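The two-stage data flow described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the researchers' implementation: `extract_features` is a hypothetical stand-in for the CNN, the feature-vector size of 32 and the fan-in of 4 are assumed values, the weights are random and untrained, and a plain `tanh` recurrent update is swapped in for the paper's own neuron and synapse models. Only the count of 19 control neurons comes from the article.

```python
import math
import random

N_FEATURES = 32  # assumed feature-vector size; the article does not give one
N_NEURONS = 19   # control neurons, as reported in the article


def extract_features(image, n_features=N_FEATURES):
    """Hypothetical stand-in for the CNN: average the pixels in equal chunks."""
    pixels = [p for row in image for p in row]
    chunk = max(1, len(pixels) // n_features)
    feats = []
    for i in range(n_features):
        part = pixels[i * chunk:(i + 1) * chunk] or [0.0]
        feats.append(sum(part) / len(part))
    return feats


def sparse_weights(n_out, n_in, fan_in, rng):
    """Weight matrix in which each neuron listens to only `fan_in` inputs."""
    weights = [[0.0] * n_in for _ in range(n_out)]
    for row in weights:
        for j in rng.sample(range(n_in), fan_in):
            row[j] = rng.uniform(-1.0, 1.0)
    return weights


class TinyController:
    """19-neuron recurrent controller; the last neuron acts as the motor output."""

    def __init__(self, seed=0):
        rng = random.Random(seed)
        self.w_in = sparse_weights(N_NEURONS, N_FEATURES, 4, rng)   # assumed fan-in
        self.w_rec = sparse_weights(N_NEURONS, N_NEURONS, 4, rng)   # assumed fan-in
        self.state = [0.0] * N_NEURONS

    def step(self, features):
        """Advance one time step and return a steering value in [-1, 1]."""
        new_state = []
        for i in range(N_NEURONS):
            drive = sum(w * x for w, x in zip(self.w_in[i], features))
            drive += sum(w * h for w, h in zip(self.w_rec[i], self.state))
            new_state.append(math.tanh(drive))
        self.state = new_state
        return self.state[-1]


# Usage: one synthetic camera frame in, one steering value out.
frame = [[(r + c) % 256 / 255.0 for c in range(64)] for r in range(32)]
controller = TinyController()
steering = controller.step(extract_features(frame))
print(f"steering command: {steering:+.3f}")
```

Because the controller keeps its own state between calls, feeding it successive frames lets earlier images influence later steering values, which is the general idea behind using a recurrent controller for a driving task.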

The team chose a standard test task for self-driving cars: staying in a lane. They ran the tests on a camera-equipped vehicle, with the system receiving images of the road and deciding whether to steer to the right or left.

State-of-the-art functional autonomous vehicle systems typically use convolutional networks as a backbone with many additional upstream, task-specific networks. The researchers found that NCP achieved results comparable to previous state-of-the-art (SOTA) models, including CNN, CT-RNN, and LSTM, in terms of training and test squared error. Moreover, a full-stack NCP network is 63 times smaller than the SOTA CNN network, and NCP’s control network is 970 times sparser than that of LSTM and 241 times sparser than that of CT-RNN.
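To make the two kinds of comparison concrete, here is a minimal sketch of how a squared-error score and a "times sparser" ratio could be computed. It assumes that sparsity is measured by counting non-zero connections, which is one plausible reading rather than the paper's stated definition. The function names and all numbers below are illustrative and are not taken from the paper.

```python
def mean_squared_error(predicted, target):
    """Average of squared differences between predicted and recorded steering."""
    if len(predicted) != len(target):
        raise ValueError("sequences must have the same length")
    return sum((p - t) ** 2 for p, t in zip(predicted, target)) / len(predicted)


def count_synapses(weights):
    """Number of non-zero connections in a weight matrix (a list of rows)."""
    return sum(1 for row in weights for w in row if w != 0.0)


def times_sparser(dense_weights, sparse_weights):
    """How many times fewer connections the sparse matrix has."""
    return count_synapses(dense_weights) / count_synapses(sparse_weights)


# Toy values for illustration only; they are not results from the paper.
n = 19
dense = [[1.0] * n for _ in range(n)]  # fully connected: 19 * 19 = 361
sparse = [[1.0 if j < 4 else 0.0 for j in range(n)] for _ in range(n)]  # 4 per neuron: 76

mse = mean_squared_error([0.10, -0.20, 0.05], [0.00, -0.10, 0.05])
ratio = times_sparser(dense, sparse)
print(mse)
print(ratio)  # 361 / 76 = 4.75
```

In this toy case the sparse layer has 4.75 times fewer connections than the dense one; the much larger ratios reported above would come from comparing the real networks in the same spirit.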

The researchers say interpretability and robustness are the two major advantages of their proposed model. What’s more, they say these new methods can reduce training time and make it possible to implement AI in relatively simple systems. They hope their findings will encourage the AI community to consider the principles of computation in biological nervous systems as a valuable resource for creating high-performance interpretable AI.

The paper, Neural Circuit Policies Enabling Auditable Autonomy, was published in Nature Machine Intelligence. The code is available on the project GitHub.

Source: MIT