WHY THIS MATTERS IN BRIEF

AI has come full circle – designing new types of AI computer chips that accelerate the development of new AIs …

Love the Exponential Future? Join our XPotential Community, future proof yourself with courses from XPotential University, connect, watch a keynote, or browse my blog.

AI has finally come full circle. A new suite of algorithms by Google Brain can now design their own computer chips – those specifically tailored for running AI software – that vastly outperform those designed by human experts. And the system works in just a few hours, dramatically slashing the weeks- or months-long process that normally gums up digital innovation.

At the heart of these robotic chip designers is a type of machine learning called deep reinforcement learning. This family of algorithms, loosely based on the human brain’s workings, has triumphed over its biological neural inspirations in games such as chess, Go, and nearly the entire Atari catalog.

But game play was just these AI agents’ kindergarten training. More recently, they’ve grown to tackle new drugs for Covid-19, solve one of biology’s grandest challenges, and reveal secrets of the human brain.

In the new study, by crafting the hardware that allows it to run more efficiently, deep reinforcement learning is flexing its muscles in the real world once again. The team cleverly adopted elements of game play into the chip design challenge, resulting in conceptions that were utterly “strange and alien” to human designers, but nevertheless worked beautifully.

It’s not just theory. A number of the AI’s chip design elements were incorporated into Google’s Tensor Processing Unit (TPU), the company’s AI accelerator chip, which was designed to help AI algorithms run more quickly and efficiently.

“That was our vision with this work,” said study author Anna Goldie. “Now that machine learning has become so capable, that’s all thanks to advancements in hardware and systems, can we use AI to design better systems to run the AI algorithms of the future?”

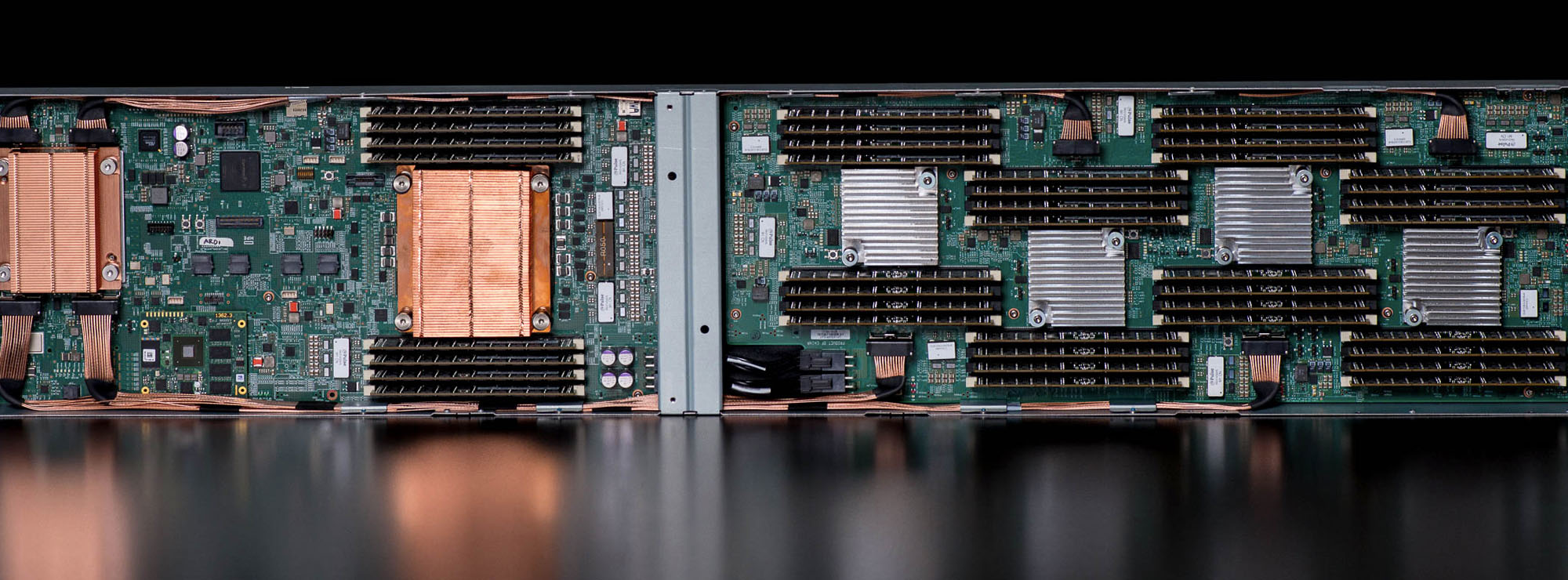

I don’t generally think about the microchips in my phone, laptop, and a gazillion other devices spread across my home. But they’re the bedrock – the hardware “brain” – that controls these beloved devices.

Often no larger than a fingernail, microchips are exquisite feats of engineering that pack tens of millions of components to optimize computations. In everyday terms, a badly designed chip means slow loading times and the spinning wheel of death, something no one wants.

The crux of chip design is a process called “floorplanning,” said Dr. Andrew Kahng, at the University of California, San Diego, who was not involved in this study. Similar to arranging your furniture after moving into a new space, chip floorplanning involves shifting the location of different memory and logic components on a chip so as to optimize processing speed and power efficiency.

It’s a horribly difficult task. Each chip contains millions of logic gates, which are used for computation. Scattered alongside these are thousands of memory blocks, called macro blocks, which save data. These two main components are then interlinked through tens of miles of wiring so the chip performs as well as possible in terms of speed, heat generation, and energy consumption.

“Given this staggering complexity, the chip-design process itself is another miracle – in which the efforts of engineers, aided by specialized software tools, keep the complexity in check,” explained Kahng. Often, floorplanning takes weeks or even months of painstaking trial and error by human experts. Yet even with six decades of study, the process is still a mixture of science and art.

“So far, the floorplanning task, in particular, has defied all attempts at automation,” said Kahng. One estimate shows that the number of different configurations for just the placement of “memory” macro blocks is about 10^2,500, many orders of magnitude larger than the number of stars in the universe. Given this complexity, it seems crazy to try automating the process. But Google Brain did just that, with a clever twist.
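For a feel of how a count that large can arise, here is a back-of-the-envelope check in Python. It assumes, purely for illustration, that 1,000 blocks are assigned to 1,000 distinct slots, which allows 1,000! arrangements. Those figures are a made-up toy setup, not numbers from the study.

```python
import math

# Assumed toy setup (not from the article): 1,000 blocks, 1,000 distinct slots.
n = 1000

# math.lgamma(n + 1) equals ln(n!), so dividing by ln(10) gives log10(n!).
digits = math.lgamma(n + 1) / math.log(10)

print(f"1000! is roughly 10^{digits:.0f}")
```

Under that assumption the exponent lands a little above 2,500, which is why trying every arrangement is out of the question.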

If you think of macro blocks and other components as chess pieces, then chip design becomes a sort of game, similar to those previously mastered by deep reinforcement learning. The agent’s task is to sequentially place macro blocks, one by one, onto a chip in an optimized manner to win the game. Of course, any naïve AI agent would struggle. As background learning, the team trained their agent with over 10,000 chip floorplans. With that library of knowledge, the agent could then explore various alternatives.

During the design, it worked with a type of “trial-and-error” process that’s similar to how we learn. At any stage of developing the floorplan, the AI agent assesses how it’s doing using a learned strategy, and decides on the best way to move forward, that is, where to place the next component.

“It starts out with a blank canvas, and places each component of the chip, one at a time, onto the canvas. At the very end it gets a score – a reward – based on how well it did,” explained Goldie. The feedback is then used to update the entire artificial neural network, which forms the basis of the AI agent, and get it ready for another go-around.
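The shape of one such “game” can be sketched in a few lines of Python. This is a toy illustration, not Google’s code: the grid, the block names, the `run_episode` and `half_perimeter` helpers, and the wirelength-based reward are all assumptions, and a random choice stands in for the learned strategy, so no neural network is trained or updated here.

```python
import random


def half_perimeter(net, placement):
    """Bounding-box size of one group of connected blocks (a common wiring estimate)."""
    xs = [placement[block][0] for block in net]
    ys = [placement[block][1] for block in net]
    return (max(xs) - min(xs)) + (max(ys) - min(ys))


def run_episode(blocks, nets, grid_size, rng):
    """Start from a blank canvas, place blocks one at a time, score at the end."""
    free_cells = [(x, y) for x in range(grid_size) for y in range(grid_size)]
    placement = {}
    for block in blocks:
        # Placeholder policy: pick any free cell at random. A trained agent
        # would pick the cell using its learned strategy instead.
        cell = rng.choice(free_cells)
        free_cells.remove(cell)
        placement[block] = cell
    # The reward only arrives once every block is placed. Here it is the
    # negative total wiring estimate (an assumed stand-in for the real score).
    reward = -sum(half_perimeter(net, placement) for net in nets)
    return placement, reward


blocks = ["A", "B", "C", "D"]            # hypothetical macro blocks
nets = [("A", "B"), ("B", "C", "D")]     # hypothetical connections between them
placement, reward = run_episode(blocks, nets, grid_size=4, rng=random.Random(0))
print(placement, reward)
```

In the real system, that final reward would feed back into the neural network before the next attempt. In this sketch each run is independent.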

The score is carefully crafted to follow the constraints of chip design, which aren’t always the same. Each chip is its own game. Some, for example, if deployed in a data center, will need to optimize power consumption. But a chip for self-driving cars should care more about latency so it can rapidly detect any potential dangers.
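One simple way to picture a score that changes from chip to chip is a weighted sum of costs. The sketch below is an assumption for illustration only: the `score` function, the metric names, the weights, and the two example designs are all made up, and the study’s actual reward may be built differently.

```python
def score(metrics, weights):
    """Negative weighted sum of costs: lower costs give a higher score."""
    return -sum(weights[name] * metrics[name] for name in weights)


# Two hypothetical floorplans, with costs normalized to the range 0..1.
frugal_design = {"power": 0.4, "latency": 0.9}   # sips power, but slow
fast_design = {"power": 0.8, "latency": 0.3}     # quick, but power-hungry

# Hypothetical priorities for the two use cases mentioned above.
data_center = {"power": 0.8, "latency": 0.2}
self_driving = {"power": 0.2, "latency": 0.8}

for name, weights in [("data center", data_center), ("self-driving", self_driving)]:
    best = max([frugal_design, fast_design], key=lambda d: score(d, weights))
    print(name, "prefers", "frugal" if best is frugal_design else "fast")
```

With these made-up numbers, the data center weights favor the frugal design and the self-driving weights favor the fast one, so the same pair of floorplans can rank differently depending on which “game” is being played.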

Using this approach, the team didn’t just find a single chip design solution. Their AI agent was able to adapt and generalize, needing just six extra hours of computation to identify optimized solutions for any specific needs – which in itself is amazing.

“Making our algorithm generalize across these different contexts was a much bigger challenge than just having an algorithm that would work for one specific chip,” said Goldie.

It’s a sort of “one-shot” mode of learning, said Kahng, in that it can produce floorplans “superior to those developed by human experts for existing chips.” A main through-line seemed to be that the AI agent laid down macro blocks in decreasing order of size. But what stood out was just how alien the designs were. The placements were “rounded and organic,” a massive departure from conventional chip designs with angular edges and sharp corners.

Human designers thought “there was no way that this is going to be high quality. They almost didn’t want to evaluate them,” said Goldie.

But the team pushed the project from theory to practice. In January, Google integrated some AI-designed elements into their next-generation AI processors. While specifics are being kept under wraps, the solutions were intriguing enough for millions of copies to be physically manufactured.

The team plans to release its code for the broader community to further optimize – and understand – the machine’s brain for chip design. What seems like magic today could provide insights into even better floorplan designs, extending the gradually slowing or dying Moore’s Law to further bolster our computational hardware. Even tiny improvements in speed or power consumption in computing could make a massive difference.

“We can…expect the semiconductor industry to redouble its interest in replicating the authors’ work, and to pursue a host of similar applications throughout the chip-design process,” said Kahng.

“The level of the impact that [a new generation of chips] can have on the carbon footprint of machine learning, given it’s deployed in all sorts of different data centers, is really valuable. Even one day earlier, it makes a big difference,” said Goldie.