WHY THIS MATTERS IN BRIEF

Forget thinking linearly: when you think exponentially, everything’s possible, including taking photos through walls.

Love the Exponential Future? Join our XPotential Community, enjoy exclusive content, future proof yourself with XPotential University, connect, watch a keynote, or browse my blog.

Ask people what superpower they’d like and you’ll naturally get answers such as invisibility, which is now possible thanks to the development of invisibility cloaks and gene-hacked human cells; infrared vision and laser vision, which are both also possible; and perhaps X-ray vision. Thanks to another breakthrough, one that could one day be packed into a smart contact lens, X-ray vision is now possible too, although, ironically, without the need for X-rays. So, without further ado, let me explain.

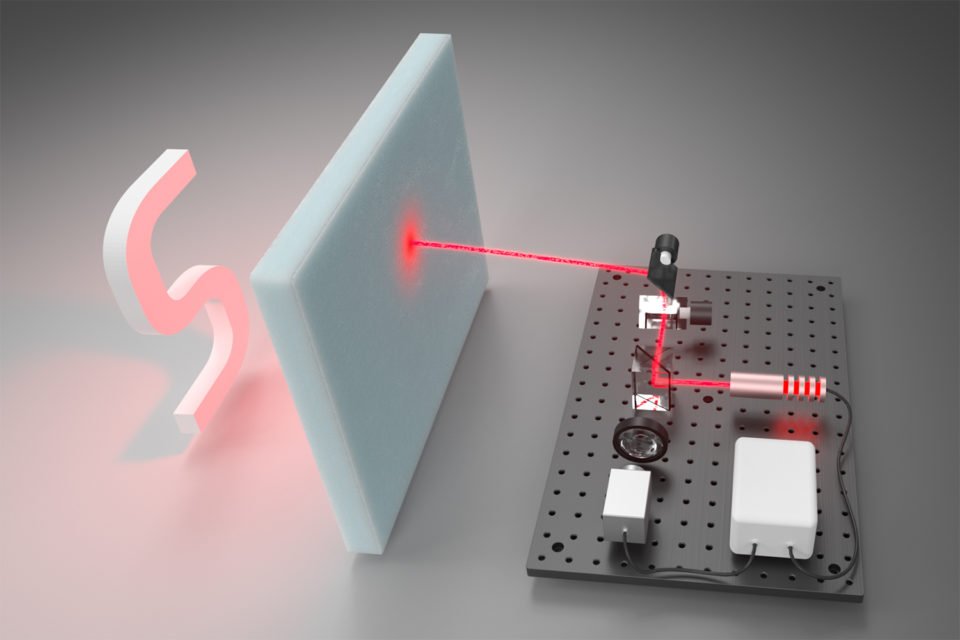

Using imaging hardware similar to the tech that lets autonomous cars “see” the world around them, and even see around corners, a team of researchers at Stanford University has tweaked one of their previous experiments with a new algorithm so that it reveals 3D objects hidden behind other objects, by analysing nothing more than the faint light trails left by photons. In tests reported in Nature Communications, the system successfully reconstructed shapes hidden behind 1-inch-thick foam, which, to the human eye, is like seeing through walls.

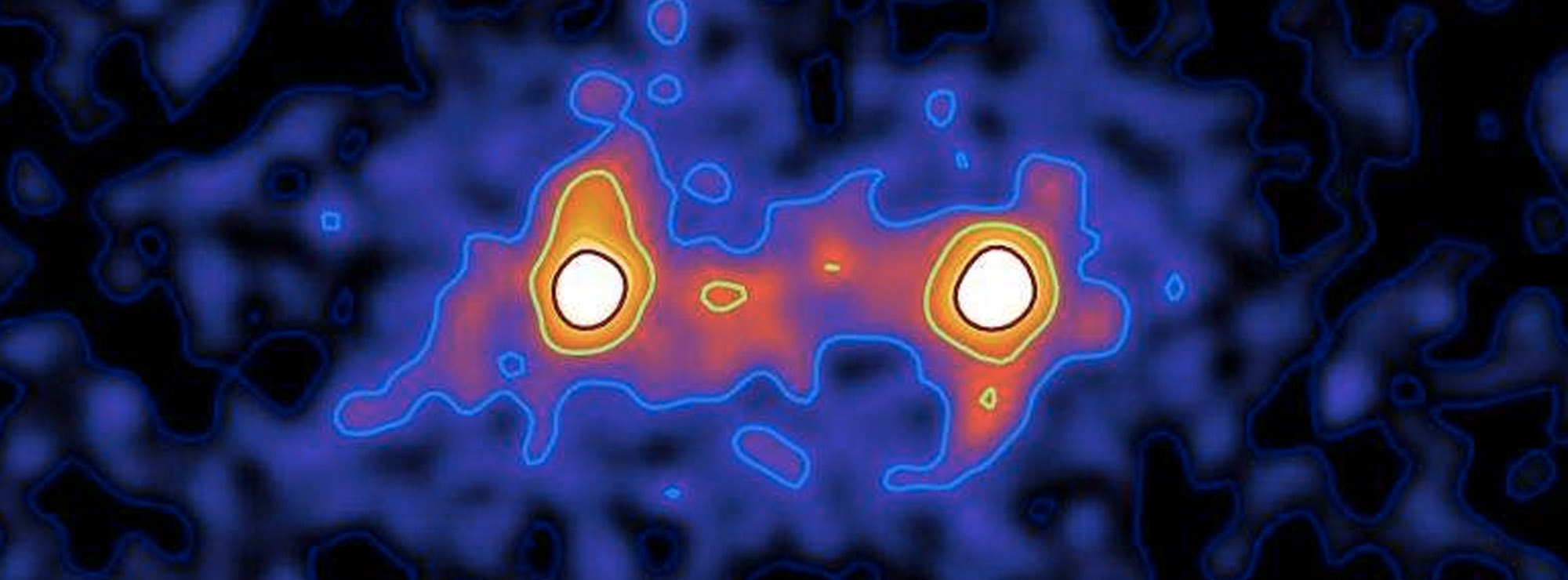

A 3D reconstruction of the reflective letter “S,” as seen through the 1-inch-thick foam. Courtesy: Stanford.

“A lot of [photon] imaging techniques make images look a little bit better, a little bit less noisy, but this is really something where we make the invisible visible,” says Gordon Wetzstein, assistant professor at Stanford and senior author of the paper. “This is really pushing the frontier of imaging and what may be possible with any kind of sensing system – it’s like superhuman vision.”

This technique complements other vision systems that see through barriers at the microscopic scale, such as those used in medicine, because it is aimed at large-scale situations: navigating self-driving cars through fog or heavy rain, for example, or imaging the surface of Earth and other planets from satellites through a hazy atmosphere.

The laser scanning process in action. Single photons that travel through the foam, bounce off the “S,” and travel back through the foam to the detector provide the information the algorithm uses to reconstruct the hidden object. Courtesy: Stanford.

To see through environments that scatter light every which way, the system pairs a laser with a super-sensitive photon detector that records every bit of laser light that hits it. As the laser scans an obstruction such as a wall of foam, an occasional photon manages to pass through the foam, hit the objects hidden behind it, and pass back through the foam to reach the detector. The software, built around the new algorithm, then uses those few photons, along with information about where and when each one hit the detector, to reconstruct the hidden objects in 3D.
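To show how the *timing* of a returning photon encodes distance, here is a minimal Python sketch of plain time-of-flight ranging at a single scan point. Photon arrival times are binned into a histogram, and the busiest bin is converted to a depth with d = c·t/2, halved because the light travels out and back. This is not the Stanford team's algorithm, whose reconstruction must also account for how photons scatter through the foam, which this sketch ignores entirely. The 16 ps bin width, the function names and the synthetic photon data are all hypothetical, chosen only for illustration.

```python
import random
from collections import Counter

C = 299_792_458.0      # speed of light in vacuum (m/s)
BIN_WIDTH_S = 16e-12   # hypothetical detector timing resolution (16 ps bins)


def arrival_histogram(timestamps_s, bin_width_s=BIN_WIDTH_S):
    """Bin photon arrival times (seconds after each laser pulse) into counts per time bin."""
    return Counter(int(t // bin_width_s) for t in timestamps_s)


def naive_depth(hist, bin_width_s=BIN_WIDTH_S):
    """Estimate depth from the busiest time bin: distance = c * round_trip_time / 2.

    Ignores scattering entirely; it only shows how arrival time maps to distance.
    """
    if not hist:
        return None  # no photons came back from this scan point
    peak_bin = max(hist, key=hist.get)
    round_trip_s = (peak_bin + 0.5) * bin_width_s  # use the centre of the peak bin
    return C * round_trip_s / 2.0


def synthetic_photons(depth_m, n_signal=50, n_noise=20,
                      jitter_s=20e-12, window_s=10e-9, seed=0):
    """Made-up test data: photons returned from a surface at depth_m,
    blurred by timing jitter, plus uniformly distributed background counts."""
    rng = random.Random(seed)
    t_true = 2.0 * depth_m / C  # true round-trip time
    signal = [rng.gauss(t_true, jitter_s) for _ in range(n_signal)]
    noise = [rng.uniform(0.0, window_s) for _ in range(n_noise)]
    return signal + noise


if __name__ == "__main__":
    # A tiny 3-point scan line: only the middle point sees a surface 0.5 m away.
    scan = {0: [], 1: synthetic_photons(0.5), 2: []}
    for x, photons in scan.items():
        print(x, naive_depth(arrival_histogram(photons)))
```

Even this toy version shows why timing precision matters: with d = c·t/2, each 16 ps bin corresponds to roughly 2.4 mm of depth.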

This is not the first system able to reveal hidden objects through scattering environments, but it circumvents limitations of other techniques. Some, for example, require prior knowledge of how far away the object of interest is. Many also use only ballistic photons: photons that travel to and from the hidden object through the scattering medium without actually scattering along the way.

“We were interested in being able to image through scattering media without these assumptions and to collect all the photons that have been scattered to reconstruct the image,” says David Lindell, a graduate student in electrical engineering and lead author of the paper. “This makes our system especially useful for large-scale applications, where there would be very few ballistic photons.”
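Why ballistic photons become so scarce can be sketched with the Beer–Lambert law: the fraction of photons that cross a slab of thickness L without scattering falls off as exp(−L/ℓ), where ℓ is the medium's scattering mean free path, and a round trip through the slab squares that fraction. The Python below pairs that estimate with a simple time gate, one common way of isolating near-ballistic photons, since they follow the shortest path and arrive first. The 1 mm mean free path and the arrival times in the usage lines are made-up values, not measurements from the paper, and the function names are hypothetical.

```python
import math


def ballistic_fraction(thickness_m, mean_free_path_m, passes=2):
    """Beer-Lambert estimate of the share of photons that cross a scattering slab
    `passes` times (out and back by default) without scattering even once."""
    return math.exp(-passes * thickness_m / mean_free_path_m)


def time_gate(arrivals_s, ballistic_time_s, gate_s):
    """Split arrival times into an early (near-ballistic) group and a late (scattered) group.

    Ballistic photons follow the shortest path and arrive first; photons that
    scattered inside the medium took longer paths and arrive later.
    """
    cutoff = ballistic_time_s + gate_s
    early = [t for t in arrivals_s if t <= cutoff]
    late = [t for t in arrivals_s if t > cutoff]
    return early, late


if __name__ == "__main__":
    # Hypothetical numbers: a 1-inch (0.0254 m) slab with an assumed 1 mm mean free path.
    print(f"{ballistic_fraction(0.0254, 0.001):.2e}")  # vanishingly small
    early, late = time_gate([1.00e-9, 1.01e-9, 1.30e-9, 2.10e-9],
                            ballistic_time_s=1.0e-9, gate_s=0.05e-9)
    print(len(early), len(late))  # prints: 2 2
```

A gated method keeps only the `early` list and throws the rest away; the Stanford approach, as Lindell describes it, instead tries to use the scattered photons too.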

To make their algorithm cope with the complexities of scattering, the researchers had to closely co-design their hardware and software, although the hardware components they used are only slightly more advanced than those currently found in autonomous cars. Depending on the brightness of the hidden objects, scanning in their tests took anywhere from one minute to one hour, but the algorithm reconstructed the obscured scene in real time and could run on a laptop.

“You couldn’t see through the foam with your own eyes, and even just looking at the photon measurements from the detector, you really don’t see anything,” says Lindell. “But, with just a handful of photons, the reconstruction algorithm can expose these objects – and you can see not only what they look like, but where they are in 3D space.”

Someday, a descendant of this system could be sent through space to other planets and moons to help see through icy clouds to deeper layers and surfaces, but in the nearer term, the researchers would like to experiment with different scattering environments to simulate other circumstances where this technology could be useful.

“We’re excited to push this further with other types of scattering geometries,” says Lindell. “So, not just objects hidden behind a thick slab of material but objects that are embedded in densely scattering material, which would be like seeing an object that’s surrounded by fog.”

Source: Stanford University