WHY THIS MATTERS IN BRIEF

This milestone helps pave the way for fully autonomous drones and vehicles that can navigate their surroundings without specific maps or navigation aids.

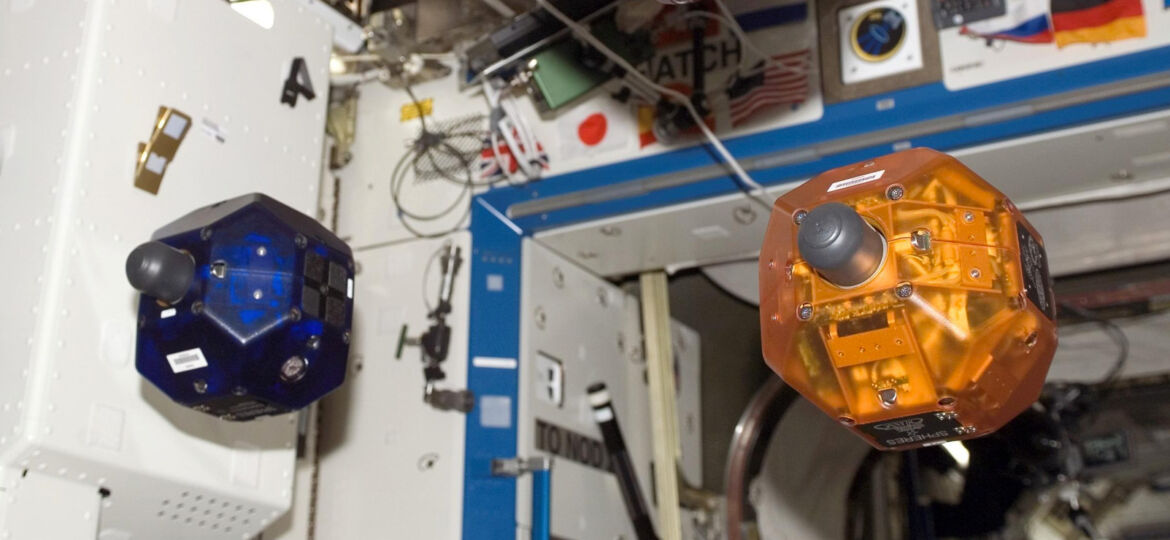

During an experiment on board the International Space Station (ISS) this week, a small drone from the SPHERES system learned, entirely by itself, to judge distances using only one eye, and then learned to navigate its way around the ISS in zero G. Humans appear to do this effortlessly, but it is not clear how we learn to master these kinds of navigation tasks, so the fact that a drone has now done it is a big milestone. It brings drones a step closer to moving around at will, by themselves, without maps or other navigation aids.

The drones used in the experiment are SPHERES (Synchronized Position Hold Engage and Reorient Experimental Satellites), three of which are on board the ISS. Each uses twelve carbon dioxide thrusters to manoeuvre with great precision in the station's zero-gravity environment. The MIT Space Systems Laboratory, in conjunction with NASA, DARPA and Aurora Flight Sciences, developed and operates the SPHERES system, which normally serves as a safe, reusable zero-gravity testbed for sensor, control and autonomy technologies that will eventually turn up in satellites.

SPHERES is a real-world forerunner of the small, football-sized autonomous drones you see zipping around in the Star Wars films, except that this one doesn’t have a laser attached to it. Yet. And don’t expect these drones to stay on board the ISS: the US military and its SEAL teams have already expressed an interest in using them for surveillance missions.

The experiment was designed jointly by the Advanced Concepts Team (ACT) of the European Space Agency (ESA), MIT and the Micro Air Vehicles lab (MAV-lab) of Delft University of Technology (TU Delft). It was the final stage of a five-year research effort aimed at testing and bringing to life advanced artificial intelligence (AI) concepts for in-orbit applications.

The teams describe their approach in a paper with the catchy title “Self-supervised learning as an enabling technology for future space exploration robots: ISS experiments”. During the experiment, a drone navigated around the ISS while recording stereo vision information about its surroundings from its two camera “eyes”. From this it learned the distances to the walls and obstacles it encountered, so that once the stereo camera was switched off it could begin exploring autonomously using only one “eye”.
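
To make the self-supervised idea concrete, here is a minimal Python sketch, assuming an ideal pinhole stereo camera (depth Z = f·B/d, with focal length f in pixels, baseline B and disparity d) and a toy one-number "appearance" feature per single-camera image. While both cameras are on, stereo supplies the distance labels for free; a plain k-nearest-neighbour regressor then learns to predict distance from the single-camera feature alone. The feature, the function names and all numbers are hypothetical, and kNN is a stand-in chosen for brevity, not necessarily the learner the team flew on the SPHERES.

```python
from dataclasses import dataclass
from typing import List, Tuple

Sample = Tuple[List[float], float]  # (single-camera feature vector, distance label in metres)


@dataclass
class StereoFrame:
    """A hypothetical sample recorded while both camera 'eyes' are on."""
    mono_feature: List[float]  # appearance feature computed from ONE camera image
    disparity_px: float        # left/right pixel shift found by stereo matching


def stereo_depth(focal_px: float, baseline_m: float, disparity_px: float) -> float:
    """Ideal pinhole stereo: depth Z = f * B / d."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    return focal_px * baseline_m / disparity_px


def build_training_set(frames: List[StereoFrame], focal_px: float, baseline_m: float) -> List[Sample]:
    """Self-supervision: stereo vision labels each single-camera feature with a distance."""
    return [(f.mono_feature, stereo_depth(focal_px, baseline_m, f.disparity_px)) for f in frames]


def knn_predict(train: List[Sample], query: List[float], k: int = 3) -> float:
    """Estimate distance from a single-camera feature by averaging the k nearest labels."""
    def sq_dist(a: List[float], b: List[float]) -> float:
        return sum((x - y) ** 2 for x, y in zip(a, b))

    nearest = sorted(train, key=lambda sample: sq_dist(sample[0], query))[:k]
    return sum(label for _, label in nearest) / len(nearest)


# Phase 1 (stereo on): hypothetical camera parameters and recorded frames.
FOCAL_PX, BASELINE_M = 400.0, 0.05
frames = [
    StereoFrame([0.9], 40.0),  # large disparity -> wall is close (0.5 m)
    StereoFrame([0.8], 20.0),  # 1.0 m
    StereoFrame([0.4], 10.0),  # 2.0 m
    StereoFrame([0.2], 5.0),   # small disparity -> wall is far (4.0 m)
]
train = build_training_set(frames, FOCAL_PX, BASELINE_M)

# Phase 2 (stereo off): only the single-camera feature is available.
print(knn_predict(train, [0.85], k=2))  # 0.75, the mean of the 0.5 m and 1.0 m labels
```

The point of the design is that no human labels anything: the stereo pair acts as a built-in teacher while it is available, and the learned mapping lets the drone keep estimating distances after it is switched off.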

Humans can close one eye and still tell whether an object is far away, but in robotics this is widely considered extremely hard.

“It is a mathematical impossibility to extract distances to objects from one single image, if you haven’t experienced those objects before,” says Guido de Croon of Delft University of Technology, one of the principal investigators of the experiment, “but once we recognise something to be a car, we know its physical characteristics and we can use that information to estimate its distance from us. A similar logic is what we wanted the drones to learn during the experiments.”
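
De Croon's car example corresponds to the standard pinhole camera relation Z = f·H/h: if you know an object's real height H and the camera's focal length f in pixels, its apparent height h in the image gives its distance Z. Below is a minimal sketch assuming an ideal pinhole camera; the function name and the numbers (an 800-pixel focal length, a 1.5 m tall car) are hypothetical.

```python
def distance_from_known_size(focal_px: float, real_height_m: float, image_height_px: float) -> float:
    """Ideal pinhole camera: distance Z = f * H / h.

    Only usable once the object has been recognised, i.e. its real height H is known.
    """
    if image_height_px <= 0:
        raise ValueError("object must be visible (positive image height)")
    return focal_px * real_height_m / image_height_px


# Hypothetical example: a 1.5 m tall car that appears 100 px tall
# through a lens with an 800 px focal length.
print(distance_from_known_size(800.0, 1.5, 100.0))  # 12.0
```

Without H, the same 100-pixel image height fits a small object nearby just as well as a large one far away, which is why a single image on its own is ambiguous.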

In zero gravity, where there is no preferred “up” direction, the task becomes even harder.

The self-supervised learning algorithm developed and used during the in-orbit experiment was thoroughly tested at the TU Delft CyberZoo using quadrotor drones.

“It was very exciting to see, for the first time, a drone in space learning using cutting-edge AI methods”, added Dario Izzo, who coordinated the scientific contribution from ESA’s Advanced Concepts Team.

“At ESA, and in particular here at the ACT, we worked towards this goal for the past 5 years. In space applications, machine learning is not considered as a reliable approach to autonomy: a ‘bad’ learning result may result in a catastrophic failure of the entire mission. Our approach, based on the self-supervised learning paradigm, has a high degree of reliability and helps the drone operate autonomously. A similar learning algorithm was successfully applied to self-driving cars, a task where reliability is also of paramount importance.”

The drone experiments on Earth were performed by Kevin van Hecke, who visited the MIT Space Systems Lab to port the code used on the quadcopters to the SPHERES.

“It was my life-long dream to work on space technology, but that I would contribute to a learning robot in space even exceeds my wildest dreams!” he said. And the experiment seems to hold promise for the future.

“This is a further step in our quest for truly autonomous space systems, increasingly needed for deep space exploration, complex operations, for reducing costs, and increasing capabilities and science opportunities”, comments Leopold Summerer, head of ESA’s Advanced Concepts Team.