WHY THIS MATTERS IN BRIEF

Being able to predict the consequences of our actions is a vital human ability, and until now it’s one that robots have sorely lacked.

We tell our children all the time that their actions have consequences. Today more and more robots are capable of reacting in real time, but as they increasingly interact with us and the world around them they lack one crucial ability: to envision the consequences of their actions. For example, if I asked you what would happen if you pushed a ball on the table in front of me, the chances are you could tell me with some accuracy what direction it would take and where it would end up, but ask a robot the same question and it’ll be flummoxed. The same goes for predicting human behaviour in the streets, something that will become increasingly important as more self-driving cars appear on our roads, and the list goes on.

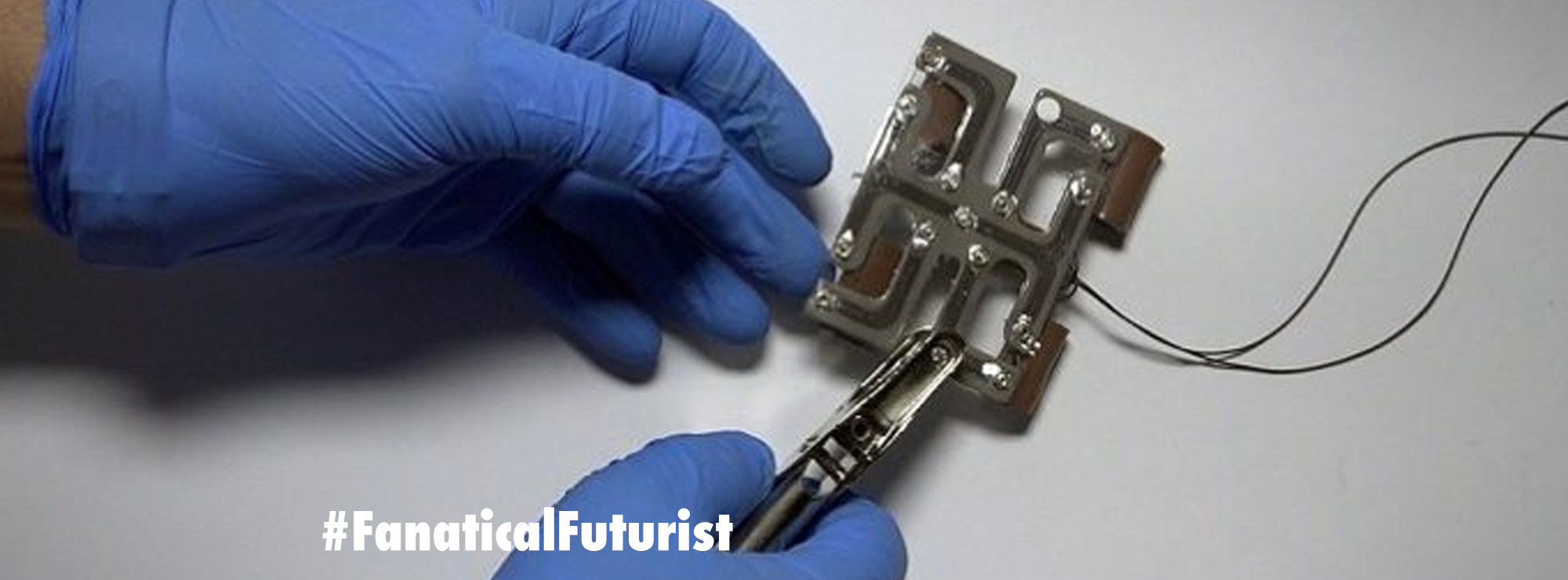

Now, though, researchers at the University of California, Berkeley have developed a system called Foresight that lets robots “imagine the future of their actions” so they can interact better with the objects around them and predict what will happen if they perform a particular sequence of movements.

What happens next?

“These robotic imaginations are still relatively simple for now – predictions made only several seconds into the future – but they are enough for the robot to figure out how to move objects around on a table without disturbing other obstacles. Crucially, the robots can learn to perform these tasks without any help from humans or prior knowledge about physics, their environment or what the objects are. That’s because the visual imagination is learned entirely from scratch from unattended and unsupervised exploration, where the robot plays with objects on a table. After this play phase, the robot builds a predictive model of the world, and can use this model to manipulate new objects that it has not seen before,” said Sergey Levine, assistant professor at Berkeley’s Department of Electrical Engineering and Computer Sciences, adding, “In the same way that we can imagine how our actions will move the objects in our environment, our new method lets robots visualize how different behaviors will affect the world around them. This can enable intelligent planning of highly flexible skills in complex real-world situations.”

The system uses a technique called Convolutional Recurrent Video Prediction, something I’ve written about before, which is already helping AIs predict what might come next in a video sequence. Here it lets the robots predict how the pixels in an image will change based on their actions.

The upshot of all this is, of course, that the robots can now play out scenarios “in their minds,” before they start touching or moving objects.
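To make the idea of playing out scenarios before acting a little more concrete, here is a minimal sketch in Python. It is not the Berkeley team’s code, and everything in it is an assumption made for illustration: the real system predicts camera pixels with a learned video model, whereas the hypothetical `predict_next` function below just tracks one object’s position on a table, and the simple “try many random action sequences and keep the best” planner is a stand-in for whatever planning method the researchers actually use.

```python
import random


def predict_next(position, action):
    """Hypothetical stand-in for a learned predictive model.

    Assumption: a push (dx, dy) simply shifts the object by that amount.
    The real system predicts how image pixels change instead.
    """
    x, y = position
    dx, dy = action
    return (x + dx, y + dy)


def imagine(position, actions):
    """Roll the model forward through a sequence of pushes without acting."""
    for action in actions:
        position = predict_next(position, action)
    return position


def distance(a, b):
    """Straight-line distance between two points on the table."""
    return ((a[0] - b[0]) ** 2 + (a[1] - b[1]) ** 2) ** 0.5


def plan(start, goal, horizon=5, num_candidates=200, max_push=1.0, seed=0):
    """Imagine many random push sequences and keep the most promising one."""
    rng = random.Random(seed)
    best_actions, best_cost = None, float("inf")
    for _ in range(num_candidates):
        candidate = [
            (rng.uniform(-max_push, max_push), rng.uniform(-max_push, max_push))
            for _ in range(horizon)
        ]
        # Score the candidate by how close the imagined outcome is to the goal.
        cost = distance(imagine(start, candidate), goal)
        if cost < best_cost:
            best_actions, best_cost = candidate, cost
    return best_actions, best_cost


if __name__ == "__main__":
    actions, cost = plan(start=(0.0, 0.0), goal=(2.0, 1.0))
    print("chosen pushes:", actions)
    print("imagined distance from goal:", cost)
```

In this toy version the robot only “touches” the object after it has compared 200 imagined futures, which is the gist of the approach described above, even though the real predictions are made on images rather than coordinates.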

“In the past, robots have learned skills with a human supervisor helping and providing feedback. What makes this work exciting is that the robots can learn a range of visual object manipulation skills entirely on their own,” said Chelsea Finn, a doctoral student in Levine’s lab.

The robots don’t need any special information about their surroundings or any special sensors, using just a camera to analyse the scene before they decide to act – in a similar way to how we predict what will happen if we move objects on a table into each other.

“Children can learn about their world by playing with toys, moving them around, grasping, and so forth. Our aim with this research is to enable a robot to do the same – to learn about how the world works through autonomous interaction,” Levine said. “The capabilities of this robot are still limited, but its skills are learned entirely automatically, and allow it to predict complex physical interactions with objects that it has never seen before by building on previously observed patterns of interaction.”